Rust Special Topic, Hands-On Case Study: A Text Analysis and Word Frequency System


红目香薰

Published 2025-12-16 16:42:21


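This project brings together the material from Chapter 8 to build a complete text analysis and word frequency system. It shows how Vec, HashMap, BTreeMap, HashSet, iterator chains, and parallel processing can be combined in one program.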

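1. Project Overview

Functional requirements

- 📄 Text preprocessing: read a file, strip punctuation, convert to lowercase

- 📊 Word frequency counting: count how many times each word occurs

- 🔝 Top K query: find the K most frequent words

- 📈 Statistics: total word count, number of distinct words, average frequency

- 🔍 Keyword search: query words by frequency range

- ⚡ Parallel processing: use rayon to speed up large-scale text processing

Technical highlights

- Several collection types used together

- Iterator-chain programming style

- Parallel iterators for performance

- Error handling and user experience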

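2. Project Structure

text_analyzer/
├── Cargo.toml
└── src/
    ├── main.rs      # program entry point
    ├── analyzer.rs  # core text analysis logic
    └── stats.rs     # statistics module (the listing below keeps Statistics in analyzer.rs)

3. Core Data Structure Design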

use std::collections::HashMap;

// A single word frequency result
pub struct WordFrequency {
    pub word: String,
    pub count: usize,
}

// The text analyzer
pub struct TextAnalyzer {
    word_counts: HashMap<String, usize>,
    total_words: usize,
}

impl TextAnalyzer {
    pub fn new() -> Self {
        Self {
            word_counts: HashMap::new(),
            total_words: 0,
        }
    }
}

4. Complete Code Implementation

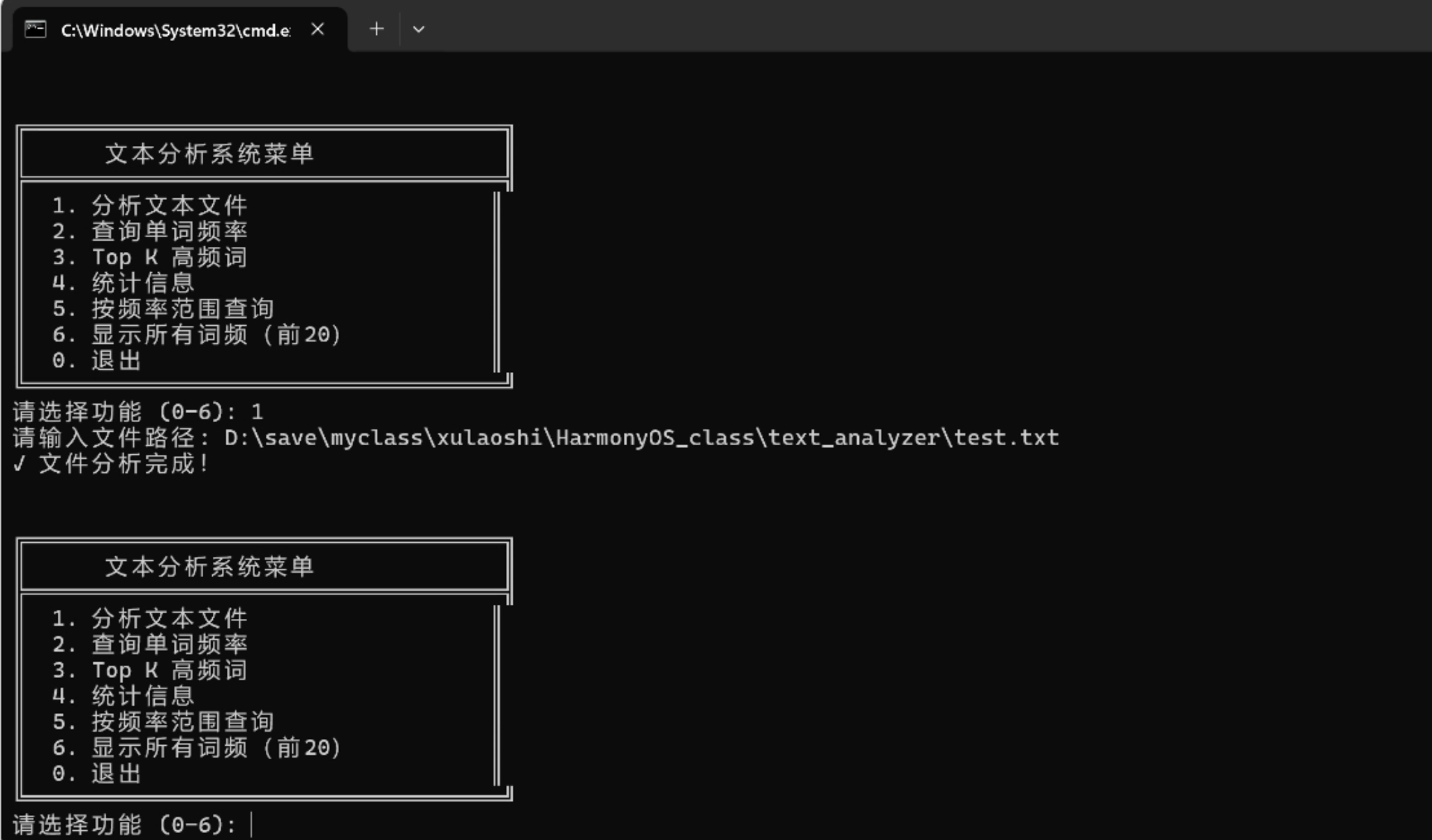

Before reading the code, take a look at the program in action. For details, see the video:

Rust Collections Example: Text Analysis and Word Frequency System

Demo

4.1 Cargo.toml

[package]
name = "text_analyzer"
version = "0.1.0"
edition = "2021"

[dependencies]
rayon = "1.8"

4.2 src/analyzer.rs

use std::collections::{BTreeMap, HashMap};
use std::fs;
use rayon::prelude::*;

pub struct TextAnalyzer {
    word_counts: HashMap<String, usize>,
    total_words: usize,
}

impl TextAnalyzer {
    pub fn new() -> Self {
        Self {
            word_counts: HashMap::new(),
            total_words: 0,
        }
    }

    /// Load text from a file and analyze it.
    /// Results from any earlier analysis are replaced.
    pub fn analyze_file(&mut self, path: &str) -> std::io::Result<()> {
        let content = fs::read_to_string(path)?;
        self.analyze_text(&content);
        Ok(())
    }

    /// Analyze a piece of text.
    pub fn analyze_text(&mut self, text: &str) {
        // Preprocess: lowercase, split into words, drop empty strings
        let words: Vec<String> = text
            .to_lowercase()
            .split(|c: char| !c.is_alphanumeric())
            .filter(|s| !s.is_empty())
            .map(|s| s.to_string())
            .collect();

        self.total_words = words.len();

        // Count word frequencies with an iterator fold
        self.word_counts = words
            .into_iter()
            .fold(HashMap::new(), |mut acc, word| {
                *acc.entry(word).or_insert(0) += 1;
                acc
            });
    }

    /// Parallel analysis (suited to large inputs).
    pub fn analyze_text_parallel(&mut self, text: &str) {
        // Preprocess (sequential, same as analyze_text)
        let words: Vec<String> = text
            .to_lowercase()
            .split(|c: char| !c.is_alphanumeric())
            .filter(|s| !s.is_empty())
            .map(|s| s.to_string())
            .collect();

        self.total_words = words.len();

        // Count in parallel: fold builds one partial map per chunk of work,
        // reduce merges the partial maps into the final result
        self.word_counts = words
            .par_iter()
            .fold(HashMap::new, |mut acc: HashMap<String, usize>, word| {
                *acc.entry(word.clone()).or_insert(0) += 1;
                acc
            })
            .reduce(HashMap::new, |mut acc, map| {
                for (word, count) in map {
                    *acc.entry(word).or_insert(0) += count;
                }
                acc
            });
    }

    /// Look up the count for a single word (case-insensitive).
    pub fn get_word_count(&self, word: &str) -> usize {
        self.word_counts.get(&word.to_lowercase()).copied().unwrap_or(0)
    }

    /// Get all frequencies, sorted by word.
    pub fn get_all_frequencies(&self) -> BTreeMap<&String, &usize> {
        // BTreeMap keeps its keys in sorted order
        self.word_counts.iter().collect()
    }

    /// Top K most frequent words.
    pub fn top_k_words(&self, k: usize) -> Vec<(String, usize)> {
        use std::cmp::Reverse;
        use std::collections::BinaryHeap;

        let mut heap = BinaryHeap::new();

        // Maintain the Top K in a min-heap (Reverse flips BinaryHeap's max-heap order).
        // Words tied at the cutoff count are kept in HashMap iteration order,
        // which is unspecified, so ties may differ between runs.
        for (word, &count) in &self.word_counts {
            if heap.len() < k {
                heap.push(Reverse((count, word.clone())));
            } else if let Some(&Reverse((min_count, _))) = heap.peek() {
                if count > min_count {
                    heap.pop();
                    heap.push(Reverse((count, word.clone())));
                }
            }
        }

        // Convert to a list ordered from highest to lowest count
        let mut result: Vec<(String, usize)> = heap
            .into_iter()
            .map(|Reverse((count, word))| (word, count))
            .collect();
        result.sort_by(|a, b| b.1.cmp(&a.1));
        result
    }

    /// Query words whose count lies in [min, max].
    pub fn words_by_frequency_range(&self, min: usize, max: usize) -> Vec<(String, usize)> {
        self.word_counts
            .iter()
            .filter(|&(_, &count)| (min..=max).contains(&count))
            .map(|(word, &count)| (word.clone(), count))
            .collect()
    }

    /// Compute summary statistics.
    pub fn get_statistics(&self) -> Statistics {
        let unique_words = self.word_counts.len();
        // Average frequency = total words / distinct words (0.0 when nothing was analyzed)
        let avg_frequency = if unique_words > 0 {
            self.total_words as f64 / unique_words as f64
        } else {
            0.0
        };

        Statistics {
            total_words: self.total_words,
            unique_words,
            avg_frequency,
        }
    }
}

#[derive(Debug)]
pub struct Statistics {
    pub total_words: usize,
    pub unique_words: usize,
    pub avg_frequency: f64,
}

4.3 src/main.rs

mod analyzer;

use analyzer::TextAnalyzer;
use std::io::{self, Write};

fn main() {
    println!("╔════════════════════════════════════╗");
    println!("║   Text Analysis & Word Frequency   ║");
    println!("╚════════════════════════════════════╝\n");

    let mut analyzer = TextAnalyzer::new();

    loop {
        show_menu();
        print!("Select an option (0-6): ");
        io::stdout().flush().unwrap();

        let mut input = String::new();
        io::stdin().read_line(&mut input).unwrap();
        let choice = input.trim();

        match choice {
            "1" => {
                print!("Enter the file path: ");
                io::stdout().flush().unwrap();
                let mut path = String::new();
                io::stdin().read_line(&mut path).unwrap();

                match analyzer.analyze_file(path.trim()) {
                    Ok(_) => println!("✓ File analysis complete!"),
                    Err(e) => println!("✗ Failed to read file: {}", e),
                }
            },
            "2" => {
                print!("Enter the word to look up: ");
                io::stdout().flush().unwrap();
                let mut word = String::new();
                io::stdin().read_line(&mut word).unwrap();

                let count = analyzer.get_word_count(word.trim());
                println!("\nThe word \"{}\" occurs {} time(s)", word.trim(), count);
            },
            "3" => {
                print!("Enter K: ");
                io::stdout().flush().unwrap();
                let mut k = String::new();
                io::stdin().read_line(&mut k).unwrap();

                if let Ok(k) = k.trim().parse::<usize>() {
                    let top_k = analyzer.top_k_words(k);
                    println!("\nTop {} most frequent words:", k);
                    for (i, (word, count)) in top_k.iter().enumerate() {
                        println!(" {}. {}: {} time(s)", i + 1, word, count);
                    }
                } else {
                    println!("✗ Invalid number");
                }
            },
            "4" => {
                let stats = analyzer.get_statistics();
                println!("\n=== Statistics ===");
                println!("Total words: {}", stats.total_words);
                println!("Distinct words: {}", stats.unique_words);
                println!("Average frequency: {:.2}", stats.avg_frequency);
            },
            "5" => {
                print!("Enter the minimum frequency: ");
                io::stdout().flush().unwrap();
                let mut min = String::new();
                io::stdin().read_line(&mut min).unwrap();

                print!("Enter the maximum frequency: ");
                io::stdout().flush().unwrap();
                let mut max = String::new();
                io::stdin().read_line(&mut max).unwrap();

                if let (Ok(min), Ok(max)) = (min.trim().parse::<usize>(), max.trim().parse::<usize>()) {
                    let words = analyzer.words_by_frequency_range(min, max);
                    // The two placeholders need their arguments, otherwise this does not compile
                    println!("\nWords with frequency in [{}, {}]:", min, max);
                    for (word, count) in words {
                        println!(" {}: {} time(s)", word, count);
                    }
                } else {
                    println!("✗ Invalid number");
                }
            },

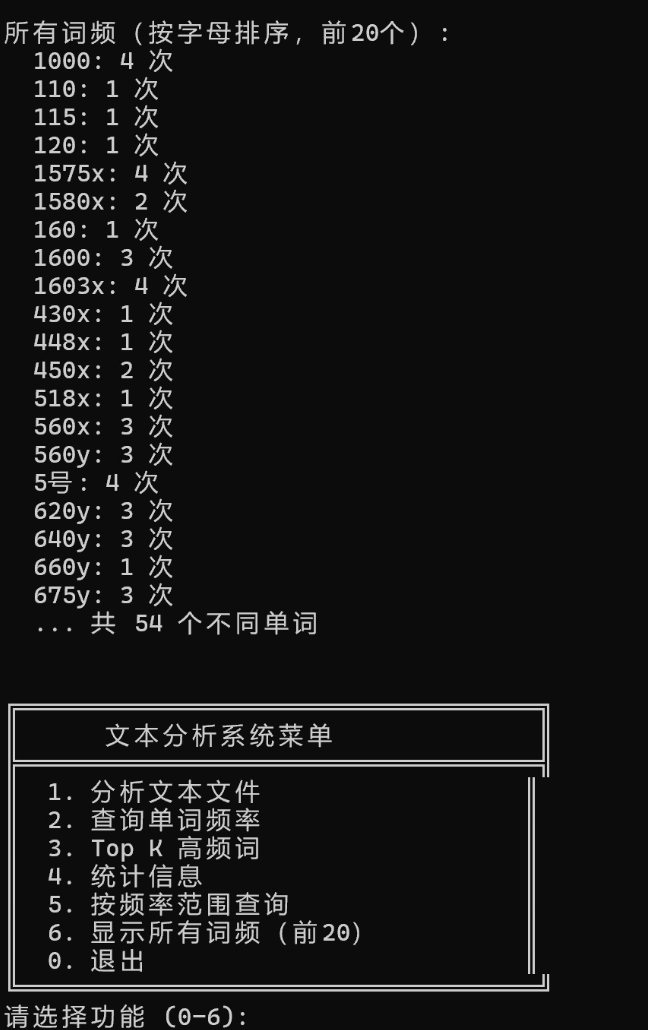

            "6" => {
                let all = analyzer.get_all_frequencies();
                println!("\nAll word frequencies (alphabetical order):");
                for (word, &count) in all.iter().take(20) {
                    println!(" {}: {} time(s)", word, count);
                }
                if all.len() > 20 {
                    println!(" ... {} distinct words in total", all.len());
                }
            },
            "0" => {
                println!("Thanks for using the tool. Goodbye!");
                break;
            },
            _ => println!("✗ Invalid choice, please try again"),
        }

        println!();
    }
}

fn show_menu() {
    println!("\n╔════════════════════════════════════╗");
    println!("║         Text Analysis Menu         ║");
    println!("╠════════════════════════════════════╣");
    println!("║  1. Analyze a text file            ║");
    println!("║  2. Query word frequency           ║");
    println!("║  3. Top K frequent words           ║");
    println!("║  4. Statistics                     ║");
    println!("║  5. Query by frequency range       ║");
    println!("║  6. Show all frequencies (first 20)║");
    println!("║  0. Exit                           ║");
    println!("╚════════════════════════════════════╝");
}

5. Technical Notes

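5.1 Using the collection types

- HashMap: stores the word counts (word_counts: HashMap<String, usize>) with O(1) average-case lookup

- BTreeMap: produces alphabetically sorted output (get_all_frequencies) and supports range queries over its keys

- BinaryHeap: implements Top K (a min-heap that keeps the K largest counts)

- Vec: holds result lists and grows dynamically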
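To make the division of labor concrete, here is a minimal, standard-library-only sketch of the four collections working together. The function names (count_words, top_k, words_in_range) are hypothetical and not part of the article's TextAnalyzer. Unlike top_k_words above, this variant breaks ties alphabetically, which is an assumption chosen here so that the result is deterministic.

```rust
use std::cmp::Reverse;
use std::collections::{BTreeMap, BinaryHeap, HashMap};
use std::ops::Bound;

/// Count words with a HashMap (average O(1) insert and lookup).
fn count_words(text: &str) -> HashMap<String, usize> {
    let mut counts = HashMap::new();
    for word in text.split_whitespace() {
        *counts.entry(word.to_lowercase()).or_insert(0) += 1;
    }
    counts
}

/// Keep the k best entries in a min-heap of size at most k.
/// "Best" means higher count first, then alphabetically smaller word.
fn top_k(counts: &HashMap<String, usize>, k: usize) -> Vec<(String, usize)> {
    let mut heap = BinaryHeap::new();
    for (word, &count) in counts {
        // The outer Reverse turns BinaryHeap (a max-heap) into a min-heap;
        // the inner Reverse makes alphabetically smaller words rank higher.
        heap.push(Reverse((count, Reverse(word.as_str()))));
        if heap.len() > k {
            heap.pop(); // drop the weakest candidate
        }
    }
    // Ascending order of Reverse(key) is descending order of key: best first.
    heap.into_sorted_vec()
        .into_iter()
        .map(|Reverse((count, Reverse(word)))| (word.to_string(), count))
        .collect()
}

/// Use a BTreeMap for a sorted view and a key range query: from <= word < to.
/// BTreeMap::range panics if `from` is greater than `to`.
fn words_in_range(counts: &HashMap<String, usize>, from: &str, to: &str) -> Vec<String> {
    let sorted: BTreeMap<&str, usize> =
        counts.iter().map(|(w, &c)| (w.as_str(), c)).collect();
    sorted
        .range::<str, _>((Bound::Included(from), Bound::Excluded(to)))
        .map(|(w, _)| w.to_string())
        .collect()
}

fn main() {
    let counts = count_words("rust is fast and rust is safe and rust is fun");
    println!("top 2: {:?}", top_k(&counts, 2));
    println!("words in [f, s): {:?}", words_in_range(&counts, "f", "s"));
}
```

The heap never holds more than k + 1 entries, which is where the O(n log k) bound for Top K comes from; the BTreeMap is built only when a sorted or range view is needed.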


5.2 Iterator chains

// Preprocessing chain: lowercase → split → filter → collect
text.to_lowercase()
    .split(|c: char| !c.is_alphanumeric())
    .filter(|s| !s.is_empty())
    .map(|s| s.to_string())
    .collect()

// Word counting: accumulate with fold
words.into_iter()
    .fold(HashMap::new(), |mut acc, word| {
        *acc.entry(word).or_insert(0) += 1;
        acc
    })
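The two chains above are excerpts, so here is a small self-contained sketch that wraps them in functions and runs them on a sample sentence. The function names tokenize and count are hypothetical; the chains themselves are the same as in analyze_text.

```rust
use std::collections::HashMap;

/// Lowercase the text, split on non-alphanumeric characters, drop empty pieces.
fn tokenize(text: &str) -> Vec<String> {
    text.to_lowercase()
        .split(|c: char| !c.is_alphanumeric())
        .filter(|s| !s.is_empty())
        .map(|s| s.to_string())
        .collect()
}

/// Fold the words into a frequency map.
fn count(words: Vec<String>) -> HashMap<String, usize> {
    words.into_iter().fold(HashMap::new(), |mut acc, word| {
        *acc.entry(word).or_insert(0) += 1;
        acc
    })
}

fn main() {
    let words = tokenize("Rust is fast. Rust provides zero-cost abstractions!");
    // The hyphen is not alphanumeric, so "zero-cost" becomes "zero" and "cost".
    println!("{:?}", words);

    let counts = count(words);
    println!("rust = {}", counts["rust"]);
}
```

One consequence of splitting on every non-alphanumeric character is that hyphenated words and contractions are broken apart; if that matters for the analysis, the split predicate is the place to adjust.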


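5.3 Parallel processing

// Use rayon to process large inputs in parallel:
// fold builds partial maps, reduce merges them into one
words.par_iter()
    .fold(HashMap::new, |mut acc, word| { /* ... */ })
    .reduce(HashMap::new, |mut acc, map| { /* merge */ })

6. Usage Examples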

Example 1: Analyze a file

Create a test file named test.txt:

Rust is a systems programming language. Rust is fast and safe.
Rust provides zero-cost abstractions.

Run the program:

Select an option (0-6): 1

Enter the file path: test.txt

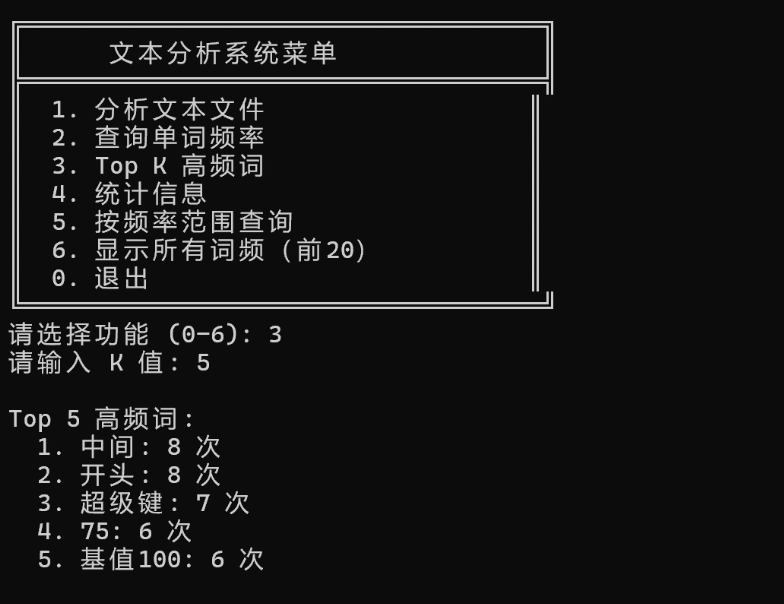

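✓ File analysis complete!

Example 2: Query the Top 5

Select an option (0-6): 3

Enter K: 5

Top 5 most frequent words:
 1. rust: 3 time(s)
 2. is: 2 time(s)
 3. a: 1 time(s)
 4. systems: 1 time(s)
 5. programming: 1 time(s)

Eleven words in test.txt occur exactly once, so which three of them fill positions 3 to 5, and in what order, can vary between runs: HashMap iteration order is unspecified and top_k_words does not break ties.

7. Suggested Extensions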

Extension 1: Stop-word filtering

use std::collections::HashSet;

fn filter_stopwords(words: Vec<String>) -> Vec<String> {
    // A small illustrative stop-word list
    let stopwords: HashSet<&str> = ["the", "a", "an", "is", "are", "and", "or"].iter().cloned().collect();
    words.into_iter()
        .filter(|w| !stopwords.contains(w.as_str()))
        .collect()
}

Extension 2: N-gram analysis

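fn ngrams(text: &str, n: usize) -> Vec<String> {
    // Character-level n-grams. slice::windows panics when n == 0, so handle that first.
    if n == 0 {
        return Vec::new();
    }
    text.chars()
        .collect::<Vec<_>>()
        .windows(n)
        .map(|w| w.iter().collect())
        .collect()
}

Extension 3: Batch processing of multiple files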

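use std::collections::HashMap;
use rayon::prelude::*;

// Must live in analyzer.rs (or word_counts needs a public accessor),
// because word_counts is a private field of TextAnalyzer.
fn analyze_multiple_files(paths: Vec<&str>) -> HashMap<String, usize> {
    paths.par_iter()
        .filter_map(|path| {
            let mut analyzer = TextAnalyzer::new();
            // Files that cannot be read are skipped silently
            analyzer.analyze_file(path).ok()?;
            Some(analyzer.word_counts)
        })
        .reduce(HashMap::new, |mut acc, map| {
            // Merge each file's counts into the combined map
            for (word, count) in map {
                *acc.entry(word).or_insert(0) += count;
            }
            acc
        })
}

8. Performance Optimization Summary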

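| Optimization | Technique | Effect |
|---|---|---|
| Large files | Parallelize with rayon | Roughly 2-4x faster per the author; the actual gain depends on core count and input size |
| Memory usage | Stream the input instead of loading it all at once (analyze_file above uses fs::read_to_string) | Lower memory footprint |
| Lookup | Store counts in a HashMap | O(1) average lookup |
| Top K | BinaryHeap as a min-heap | O(n log k) instead of O(n log n) |
| String handling | Use &str slices | Fewer clones |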
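The streaming row is the one technique in the table that the listing does not demonstrate, so here is a minimal sketch of it under stated assumptions. The function count_words_streaming is hypothetical, not part of the article's code. It reads from any BufRead source one line at a time, and it allocates a String only the first time a word is seen, which also illustrates the &str row.

```rust
use std::collections::HashMap;
use std::io::{self, BufRead, Cursor};

/// Count words from a buffered reader line by line, so that only the
/// current line and the frequency map are held in memory.
/// Returns the frequency map and the total number of words.
fn count_words_streaming<R: BufRead>(reader: R) -> io::Result<(HashMap<String, usize>, usize)> {
    let mut counts: HashMap<String, usize> = HashMap::new();
    let mut total: usize = 0;

    for line in reader.lines() {
        let line = line?.to_lowercase();
        let words = line
            .split(|c: char| !c.is_alphanumeric())
            .filter(|s| !s.is_empty());
        for word in words {
            // Look up by &str first; allocate a String only for new words.
            if let Some(n) = counts.get_mut(word) {
                *n += 1;
            } else {
                counts.insert(word.to_string(), 1);
            }
            total += 1;
        }
    }
    Ok((counts, total))
}

fn main() -> io::Result<()> {
    // Cursor stands in for a file here. For a real file, wrap it instead:
    // BufReader::new(File::open(path)?)
    let input = Cursor::new("Rust is fast.\nRust is safe.\n");
    let (counts, total) = count_words_streaming(input)?;
    println!("total = {}, unique = {}", total, counts.len()); // prints "total = 6, unique = 4"
    Ok(())
}
```

Because a newline is itself a non-alphanumeric separator, splitting per line yields the same words as splitting the whole file at once. Memory use is then bounded by the longest line plus the number of distinct words.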

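9. Testing and Verification

Create a test file and verify each feature:

# Run the project
cargo run

# Features to test
# 1. Analyze a file
# 2. Look up a word
# 3. Top K
# 4. Statistics

10. Summary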

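This project brings together:

- ✅ Several collection types: HashMap, BTreeMap, BinaryHeap, Vec

- ✅ Iterator-chain programming: map, filter, fold, collect

- ✅ Parallel processing: rayon for speeding up large-scale data processing

- ✅ Algorithm design: the Top K problem, range queries

- ✅ Error handling: the Result type and user-friendly messages

Project highlights:

- Concise, efficient, maintainable code

- A demonstration of Rust's functional programming style

- A suitable starting framework for text processing and data analysis

The project can serve as a foundation for more complex applications such as search engines, log analysis, and data mining.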

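This article is part of the Tencent Cloud self-media syndication program and is shared from the author's personal site/blog.

Originally published: 2025-12-16. In case of infringement, contact cloudcommunity@tencent.com for removal.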
