My trained model reports 80%+ accuracy for objects it was never trained on when I feed it an image


Asked 2019-04-02 07:07:18

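I am working on a Unity app that recognizes hand gestures. The images I used to train the model are 50x50 black-and-white images in which the hand is segmented by HSV values. The same segmentation is applied when testing the model, but here is the problem: when there is no hand in front of the camera, the segmentation still picks up something (anything the mobile camera sees), because HSV thresholding is not accurate. When such a no-hand image is fed to the model, it still reports 80%+ accuracy (confidence) and assigns it a random class.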
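For readers who want to see the segmentation step in isolation, here is a minimal sketch in plain Python. It is not the code from the app: the function name `mask_by_hsv_range`, the bounds, and the tiny 2x2 frame are assumptions made up for illustration. It keeps a grayscale value only where the matching HSV pixel lies inside the range, which is also why a frame without a hand can still contain foreground: any in-range pixel survives, whether or not it belongs to a hand.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[float, float, float]  # (H, S, V)


def mask_by_hsv_range(
    hsv: Sequence[Sequence[Pixel]],
    gray: Sequence[Sequence[int]],
    lower: Pixel,
    upper: Pixel,
) -> List[List[int]]:
    """Keep gray values whose HSV pixel lies inside [lower, upper]; zero the rest."""
    out = []
    for hsv_row, gray_row in zip(hsv, gray):
        out_row = []
        for pixel, g in zip(hsv_row, gray_row):
            # A pixel is foreground only if all three channels are in range.
            in_range = all(lo <= c <= hi for lo, c, hi in zip(lower, pixel, upper))
            out_row.append(g if in_range else 0)
        out.append(out_row)
    return out


# Hypothetical bounds and a 2x2 frame (values are illustrative only).
lower, upper = (0.0, 40.0, 60.0), (35.0, 255.0, 255.0)
hsv = [[(20.0, 120.0, 200.0), (120.0, 200.0, 200.0)],
       [(10.0, 30.0, 200.0), (30.0, 90.0, 100.0)]]
gray = [[180, 90], [200, 75]]

masked = mask_by_hsv_range(hsv, gray, lower, upper)
print(masked)
```

Two of the four pixels pass the range test here even though nothing says they belong to a hand, which mirrors the false foreground described above.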

Sample images and the code used to train the model are given below.

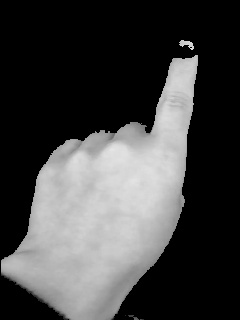

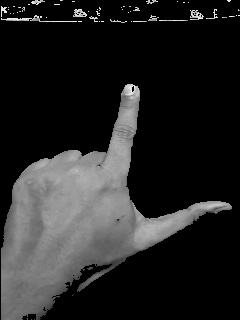

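I am using TensorflowSharp to load my model, and OpenCV for Unity for the OpenCV part. I have 4 gestures (4 classes), with 4-4.5k images per class and about 17k images in total. Sample images: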

Class 1

Class 2

Class 3

Class 4

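Let me know if you need any other information; any help will be appreciated.

- I have tried hand detection models so that the app can tell when there is no hand, but they are not accurate.

- I have tried having the user touch the screen where the hand is. This works fine, but once the hand is removed it starts detecting randomly again because of the HSV segmentation.

- I tried feature matching with SIFT and similar methods, but they are very expensive.

- I tried template matching, which from my perspective should have worked, but it gave some weird results.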

```csharp
// Assumes: using System.Diagnostics; using OpenCVForUnity; using TensorFlow;
using (var graph = new TFGraph())
{
    graph.Import(buffer);

    using (var session = new TFSession(graph))
    {
        Stopwatch sw = new Stopwatch();
        sw.Start();

        var runner = session.GetRunner();

        // Resize the touched region to the 50x50 input size, then build
        // an HSV copy for thresholding and a grayscale copy for the model.
        Mat gray = new Mat();
        Mat HSVMat = new Mat();
        Imgproc.resize(touchedRegionRgba, gray, new OpenCVForUnity.Size(50, 50));
        Imgproc.cvtColor(gray, HSVMat, Imgproc.COLOR_RGB2HSV_FULL);
        Imgproc.cvtColor(gray, gray, Imgproc.COLOR_RGBA2GRAY);

        // Zero out every pixel whose HSV value falls outside the detector's range.
        for (int i = 0; i < gray.rows(); i++)
        {
            for (int j = 0; j < gray.cols(); j++)
            {
                double[] Hvalue = HSVMat.get(i, j);

                if (!((detector.mLowerBound.val[0] <= Hvalue[0] && Hvalue[0] <= detector.mUpperBound.val[0]) &&
                      (detector.mLowerBound.val[1] <= Hvalue[1] && Hvalue[1] <= detector.mUpperBound.val[1]) &&
                      (detector.mLowerBound.val[2] <= Hvalue[2] && Hvalue[2] <= detector.mUpperBound.val[2])))
                {
                    gray.put(i, j, new byte[] { 0 });
                }
            }
        }

        var tensor = Util.ImageToTensorGrayScale(gray);

        //runner.AddInput(graph["conv1_input"][0], tensor);
        runner.AddInput(graph["zeropadding1_1_input"][0], tensor);

        //runner.Fetch(graph["outputlayer/Softmax"][0]);
        //runner.Fetch(graph["outputlayer/Sigmoid"][0]);
        runner.Fetch(graph["outputlayer/Softmax"][0]);

        var output = runner.Run();
        var vecResults = output[0].GetValue();
        float[,] results = (float[,])vecResults;

        sw.Stop();

        // Index of the predicted class.
        int result = Util.Quantized(results);

        //numberOfFingersText.text += $"Length={results.Length} Elapsed= {sw.ElapsedMilliseconds} ms, Result={result}, Acc={results[0, result]}";
    }
}
```

Edited model (model 1):

```python
# Assumes Keras 2.x; with TensorFlow use: from tensorflow.keras import models, layers
from keras import models, layers

model = models.Sequential()
model.add(layers.ZeroPadding2D((2, 2), batch_input_shape=(None, 50, 50, 1), name="zeropadding1_1"))
# 54x54 fed in due to zero padding
model.add(layers.Conv2D(8, (5, 5), activation='relu', name='conv1_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding1_2"))
model.add(layers.Conv2D(8, (5, 5), activation='relu', name='conv1_2'))
model.add(layers.MaxPooling2D((2, 2), strides=(2, 2), name="maxpool_1"))  # convert 50x50 to 25x25

# 25x25 fed in
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding2_1"))
model.add(layers.Conv2D(16, (5, 5), activation='relu', name='conv2_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding2_2"))
model.add(layers.Conv2D(16, (5, 5), activation='relu', name='conv2_2'))
model.add(layers.MaxPooling2D((5, 5), strides=(5, 5), name="maxpool_2"))  # convert 25x25 to 5x5

# 5x5 fed in
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding3_1"))
model.add(layers.Conv2D(40, (5, 5), activation='relu', name='conv3_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding3_2"))
model.add(layers.Conv2D(32, (5, 5), activation='relu', name='conv3_2'))
model.add(layers.Dropout(0.2))

# Fully connected head
model.add(layers.Flatten())
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dropout(0.2))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dropout(0.15))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dropout(0.1))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dense(512, activation='relu'))
model.add(layers.Dense(4, activation='softmax', name="outputlayer"))
```

Model 2 (I used a few more that I haven't mentioned):

```python
# Assumes Keras 2.x; with TensorFlow use: from tensorflow.keras import models, layers
from keras import models, layers

model = models.Sequential()
model.add(layers.ZeroPadding2D((2, 2), batch_input_shape=(None, 50, 50, 1), name="zeropadding1_1"))
# 54x54 fed in due to zero padding
model.add(layers.Conv2D(8, (5, 5), activation='relu', name='conv1_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding1_2"))
model.add(layers.Conv2D(8, (5, 5), activation='relu', name='conv1_2'))
model.add(layers.MaxPooling2D((2, 2), strides=(2, 2), name="maxpool_1"))  # convert 50x50 to 25x25

# 25x25 fed in
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding2_1"))
model.add(layers.Conv2D(16, (5, 5), activation='relu', name='conv2_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding2_2"))
model.add(layers.Conv2D(16, (5, 5), activation='relu', name='conv2_2'))
model.add(layers.MaxPooling2D((5, 5), strides=(5, 5), name="maxpool_2"))  # convert 25x25 to 5x5

# 5x5 fed in
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding3_1"))
model.add(layers.Conv2D(40, (5, 5), activation='relu', name='conv3_1'))
model.add(layers.ZeroPadding2D((2, 2), name="zeropadding3_2"))
model.add(layers.Conv2D(32, (5, 5), activation='relu', name='conv3_2'))
model.add(layers.Dropout(0.2))

# Fully connected head (tanh instead of relu, sigmoid output)
model.add(layers.Flatten())
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dropout(0.2))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dropout(0.15))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dropout(0.1))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dense(512, activation='tanh'))
model.add(layers.Dense(4, activation='sigmoid', name="outputlayer"))
```

Expected result: high accuracy for the actual 4 classes from the trained model, and low accuracy for everything else.

Actual result: high accuracy for the actual 4 classes, but also for every other image fed to the model.
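One thing worth keeping in mind when reading these numbers: a softmax output layer, like the one in model 1, always distributes a total probability of 1 across the four classes, so it has no way to answer "none of them". The sketch below is plain Python with made-up logits (the values in `no_hand_logits` are assumptions, not measurements from this model) and only illustrates that a modest gap between logits is enough to produce a score above 0.8.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for a frame with no hand. The only assumption is that
# one logit happens to be a few units larger than the others.
no_hand_logits = [0.3, 3.1, 0.9, 0.2]

probs = softmax(no_hand_logits)
best = max(range(len(probs)), key=probs.__getitem__)

# The winning score is high even though the input belongs to no class.
print(best, round(probs[best], 3))
```

The score printed here is a relative ranking among the four trained classes, not evidence that a hand is present.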

1 Answer

Stack Overflow user

Accepted answer

Posted 2019-04-02 11:23:29

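As far as I understand, the basic problem is that you cannot tell whether a hand is present in the image at all. You need to localize the hand.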

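- First, we need to detect whether a hand is present or not. You can try Siamese networks for this kind of task. I have used them successfully to detect skin abnormalities. You can refer to "One Shot Learning with Siamese Networks using Keras" by Harshvardhan https://link.medium.com/htBzNmUCyV and "Facial Similarity with Siamese Networks in PyTorch".

- The network gives a binary output. If a hand is present, you will see values close to one. Otherwise, you will see values close to zero.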

ML models such as YOLO are also used for object localization, but Siamese networks are simple and straightforward.

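Siamese networks use the same CNN for both inputs, which is why they are called Siamese, or conjoined. They measure the absolute error between the image embeddings and try to approximate a similarity function between the images.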
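The mechanism can be sketched without any ML framework. The code below is only an illustration under stated assumptions: `embed` is a hypothetical stand-in for the shared, trained CNN, and the weights and bias are hand-picked rather than learned. What it does show is the structure described above: one encoder applied to both inputs, the absolute difference of the two embeddings, and a sigmoid that turns the weighted distance into a score between 0 and 1.

```python
import math
from typing import List, Sequence


def embed(image: Sequence[float]) -> List[float]:
    """Hypothetical stand-in for the shared CNN (same weights for both inputs)."""
    mean = sum(image) / len(image)
    spread = max(image) - min(image)
    return [mean, spread]


def similarity(
    a: Sequence[float],
    b: Sequence[float],
    weights: Sequence[float],
    bias: float,
) -> float:
    """Sigmoid over the weighted absolute difference of the two embeddings."""
    ea, eb = embed(a), embed(b)  # the SAME encoder runs on both images
    l1 = [abs(x - y) for x, y in zip(ea, eb)]
    score = sum(w * d for w, d in zip(weights, l1)) + bias
    return 1.0 / (1.0 + math.exp(-score))


# Hand-picked (not learned) parameters: larger distance means lower similarity.
weights, bias = [-6.0, -6.0], 4.0

reference = [0.0, 0.0, 1.0, 1.0]  # flattened toy "hand" image
blank = [0.0, 0.0, 0.0, 0.0]      # flattened toy "no hand" image

same = similarity(reference, reference, weights, bias)
different = similarity(reference, blank, weights, bias)
print(round(same, 3), round(different, 3))
```

In a real setup the encoder and the final weights would be trained on pairs of images labelled as similar or dissimilar, as in the articles linked above.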

Once the hand has been detected properly, classification can be carried out.
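The resulting two-stage flow might look like the sketch below. All names here are hypothetical: `classify_if_hand`, `fake_hand_score`, and `fake_classifier` are placeholders that make the example runnable, and the 0.5 threshold is an assumption that would need tuning on real data.

```python
from typing import Callable, Optional, Sequence, Tuple

Image = Sequence[float]


def classify_if_hand(
    image: Image,
    hand_score: Callable[[Image], float],
    classify: Callable[[Image], Tuple[int, float]],
    threshold: float = 0.5,
) -> Optional[Tuple[int, float]]:
    """Run the gesture classifier only when the detector reports a hand."""
    if hand_score(image) < threshold:
        return None  # no hand: do not report any gesture class
    return classify(image)


# Hypothetical stand-ins so the sketch runs on its own.
def fake_hand_score(image: Image) -> float:
    # Pretend the detector score is the share of non-zero pixels.
    return sum(1 for p in image if p > 0) / len(image)


def fake_classifier(image: Image) -> Tuple[int, float]:
    return (2, 0.93)  # (class index, confidence), fixed for illustration


no_hand = classify_if_hand([0, 0, 0, 0], fake_hand_score, fake_classifier)
with_hand = classify_if_hand([9, 7, 0, 8], fake_hand_score, fake_classifier)
print(no_hand, with_hand)
```

Returning `None` for the no-hand case keeps the "no gesture" decision out of the four-class classifier entirely.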

Original page content provided by Stack Overflow.

Original link: https://stackoverflow.com/questions/55468817
