如何将Keras TensorBoard回调用于网格搜索

如何将Keras TensorBoard回调用于网格搜索

提问于 2017-08-02 08:00:58

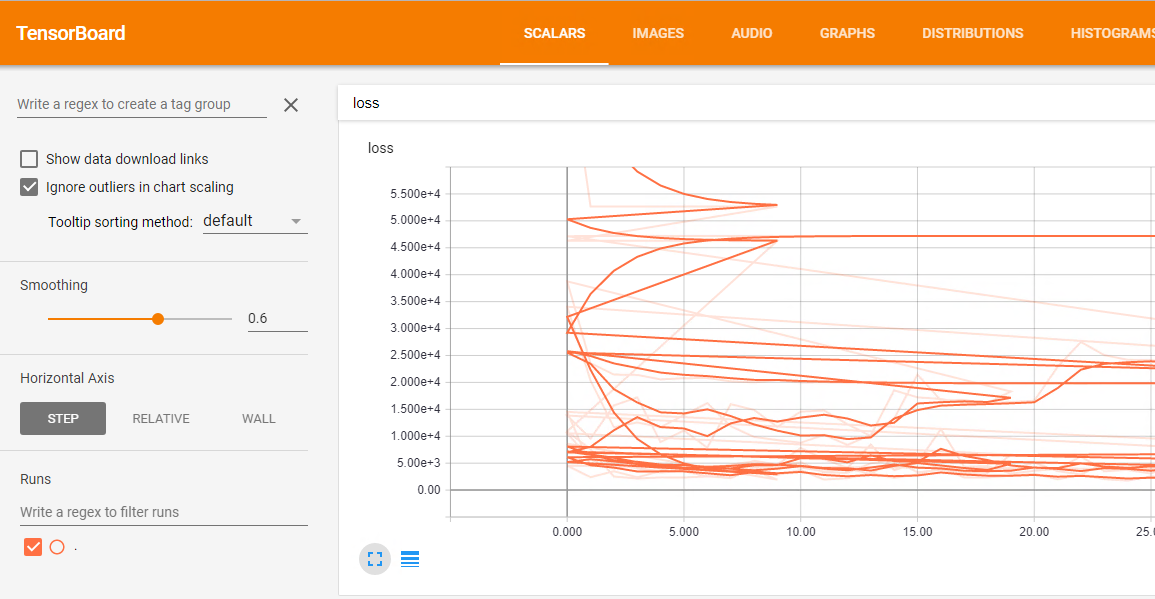

我正在使用Keras TensorBoard回调。我想运行一个网格搜索和可视化的结果,每一个模型在张量板。问题是,所有不同运行的结果都合并在一起,损失情节就像这样一团糟:

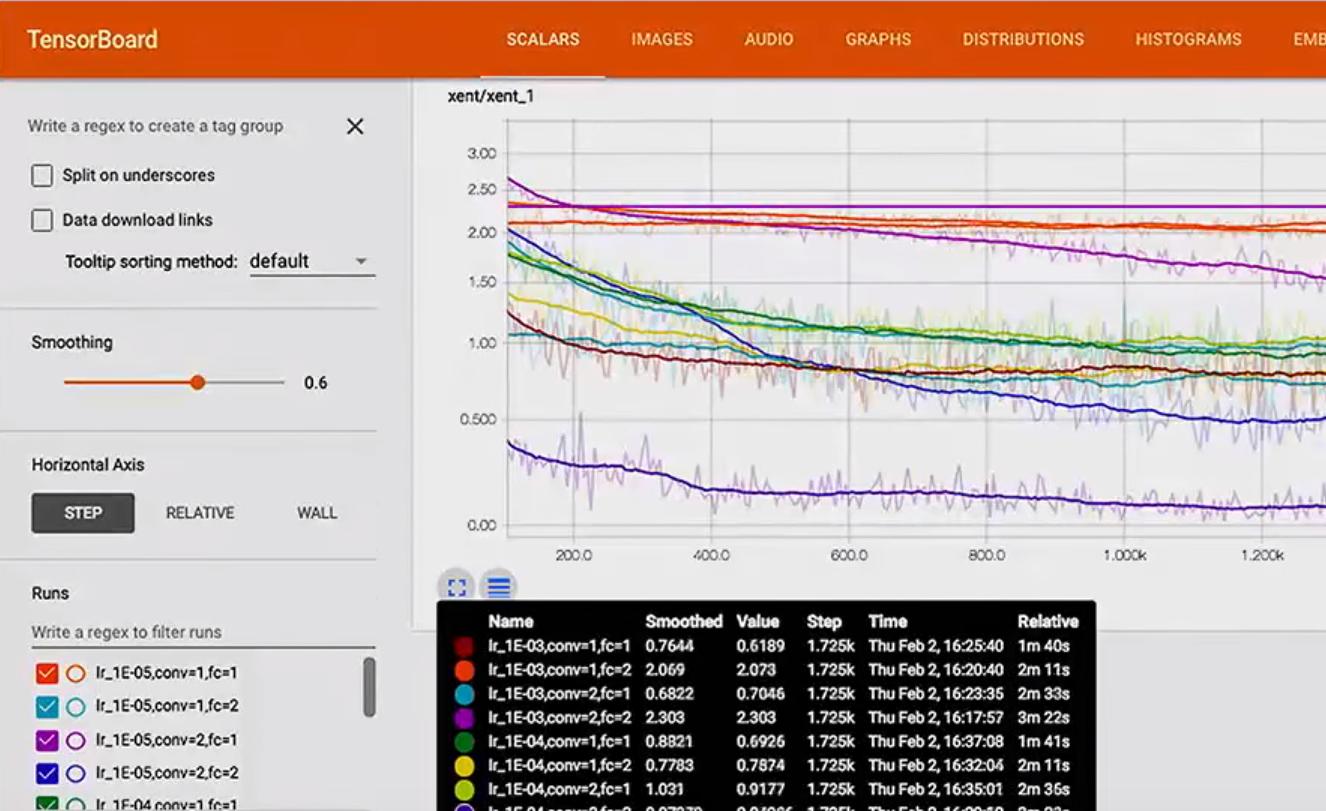

如何将每个运行重命名为具有类似于此的内容:

在这里,网格搜索的代码:

df = pd.read_csv('data/prepared_example.csv')

df = time_series.create_index(df, datetime_index='DATE', other_index_list=['ITEM', 'AREA'])

target = ['D']

attributes = ['S', 'C', 'D-10','D-9', 'D-8', 'D-7', 'D-6', 'D-5', 'D-4',

'D-3', 'D-2', 'D-1']

input_dim = len(attributes)

output_dim = len(target)

x = df[attributes]

y = df[target]

param_grid = {'epochs': [10, 20, 50],

'batch_size': [10],

'neurons': [[10, 10, 10]],

'dropout': [[0.0, 0.0], [0.2, 0.2]],

'lr': [0.1]}

estimator = KerasRegressor(build_fn=create_3_layers_model,

input_dim=input_dim, output_dim=output_dim)

tbCallBack = TensorBoard(log_dir='./Graph', histogram_freq=0, write_graph=True, write_images=False)

grid = GridSearchCV(estimator=estimator, param_grid=param_grid, n_jobs=-1, scoring=bug_fix_score,

cv=3, verbose=0, fit_params={'callbacks': [tbCallBack]})

grid_result = grid.fit(x.as_matrix(), y.as_matrix())回答 2

Stack Overflow用户

回答已采纳

发布于 2017-08-02 09:01:17

我不认为有任何方法可以将“每次运行”参数传递给GridSearchCV。也许最简单的方法是子类KerasRegressor来做您想做的事情。

class KerasRegressorTB(KerasRegressor):

def __init__(self, *args, **kwargs):

super(KerasRegressorTB, self).__init__(*args, **kwargs)

def fit(self, x, y, log_dir=None, **kwargs):

cbs = None

if log_dir is not None:

params = self.get_params()

conf = ",".join("{}={}".format(k, params[k])

for k in sorted(params))

conf_dir = os.path.join(log_dir, conf)

cbs = [TensorBoard(log_dir=conf_dir, histogram_freq=0,

write_graph=True, write_images=False)]

super(KerasRegressorTB, self).fit(x, y, callbacks=cbs, **kwargs)你会把它当作:

# ...

estimator = KerasRegressorTB(build_fn=create_3_layers_model,

input_dim=input_dim, output_dim=output_dim)

#...

grid = GridSearchCV(estimator=estimator, param_grid=param_grid,

n_jobs=1, scoring=bug_fix_score,

cv=2, verbose=0, fit_params={'log_dir': './Graph'})

grid_result = grid.fit(x.as_matrix(), y.as_matrix())更新:

由于交叉验证,GridSearchCV多次运行相同的模型(即相同的参数配置),因此前面的代码将在每次运行中放置多个跟踪。查看源代码(这里和这里),似乎没有检索“当前拆分id”的方法。同时,您不应该只检查现有文件夹并根据需要添加子补丁,因为作业可能会并行运行(至少,我不确定Keras/TF是否是这样)。你可以试试这样的东西:

import itertools

import os

class KerasRegressorTB(KerasRegressor):

def __init__(self, *args, **kwargs):

super(KerasRegressorTB, self).__init__(*args, **kwargs)

def fit(self, x, y, log_dir=None, **kwargs):

cbs = None

if log_dir is not None:

# Make sure the base log directory exists

try:

os.makedirs(log_dir)

except OSError:

pass

params = self.get_params()

conf = ",".join("{}={}".format(k, params[k])

for k in sorted(params))

conf_dir_base = os.path.join(log_dir, conf)

# Find a new directory to place the logs

for i in itertools.count():

try:

conf_dir = "{}_split-{}".format(conf_dir_base, i)

os.makedirs(conf_dir)

break

except OSError:

pass

cbs = [TensorBoard(log_dir=conf_dir, histogram_freq=0,

write_graph=True, write_images=False)]

super(KerasRegressorTB, self).fit(x, y, callbacks=cbs, **kwargs)为了兼容Python2,我使用了os调用,但是如果使用Python3,您可能会考虑使用更好的模块来处理路径和目录。

注意:我忘了前面提到它,但为了以防万一,请注意,传递write_graph=True将记录每次运行的图表,这取决于您的模型,可能意味着这个空间的很多(相对来说)。这同样适用于write_images,尽管我不知道这个特性所需要的空间。

Stack Overflow用户

发布于 2019-03-01 16:57:11

很简单,只需将日志保存到以dir形式连接的参数字符串分隔成dir:

下面是使用date作为run名称的示例:

from datetime import datetime

datetime_str = ('{date:%Y-%m-%d-%H:%M:%S}'.format(date=datetime.now()))

callbacks = [

ModelCheckpoint(model_filepath, monitor='val_loss', save_best_only=True, verbose=0),

TensorBoard(log_dir='./logs/'+datetime_str, histogram_freq=0, write_graph=True, write_images=True),

]

history = model.fit_generator(

generator=generator.batch_generator(is_train=True),

epochs=config.N_EPOCHS,

steps_per_epoch=100,

validation_data=generator.batch_generator(is_train=False),

validation_steps=10,

verbose=1,

shuffle=False,

callbacks=callbacks)页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/45454905

复制相关文章

相似问题