Poor performance with mxnet LinearRegressionOutput


Asked on 2016-12-19 07:25:20

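I have been unable to get reasonable performance from the mxnet LinearRegressionOutput layer.

The self-contained example below attempts a regression on a simple polynomial function (y = x1 + x2^2 + x3^3) with a small amount of random noise added.

It uses the mxnet regression example from the five-minute neural network tutorial (the URL is in the code comments), along with a slightly more complex network that includes one hidden layer.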

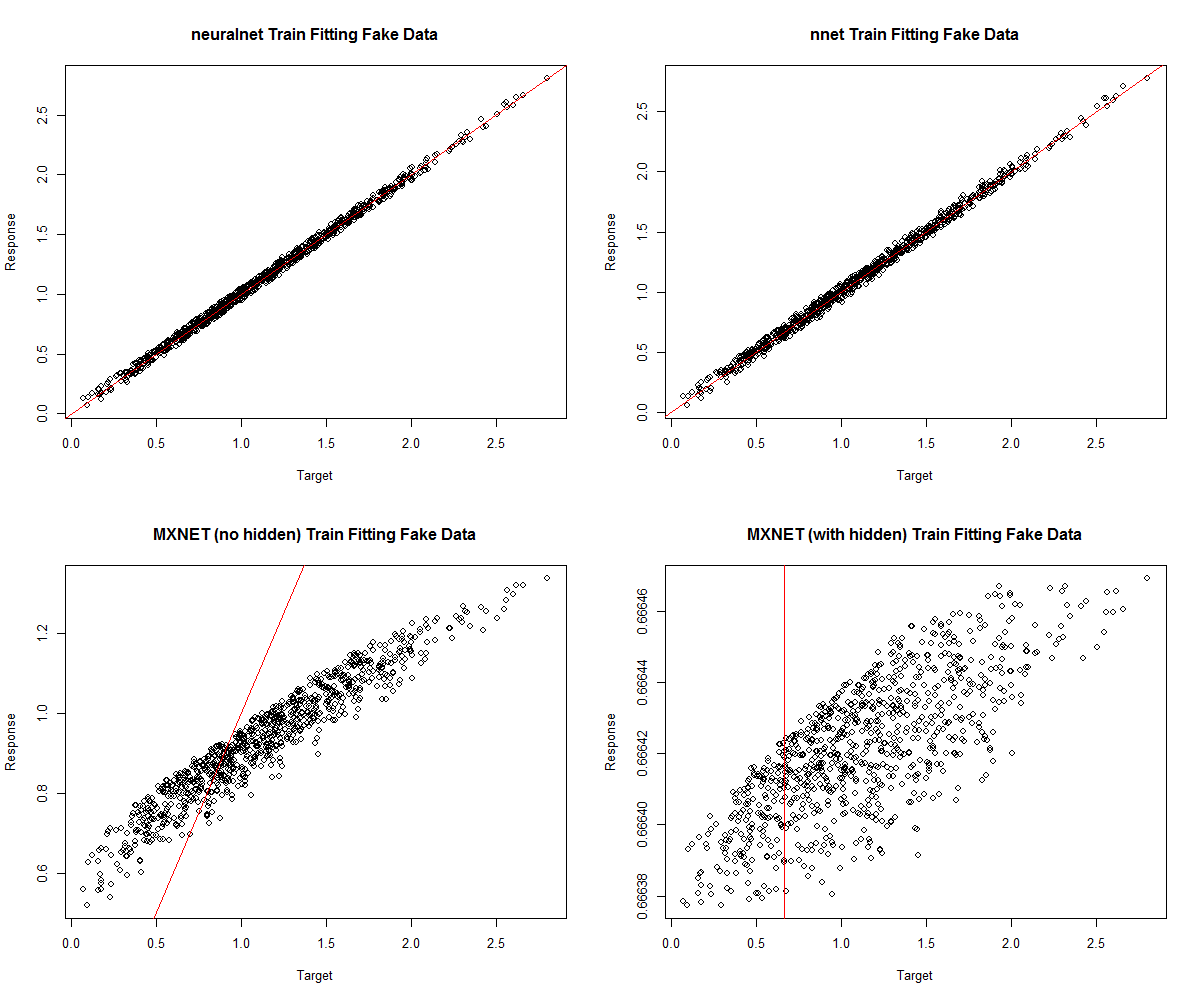

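The example below also trains regression networks with the neuralnet and nnet packages. As the plots show, both of these perform much better.

I realise that the usual answer to a poorly performing network is to do some hyperparameter tuning, but I have tried a range of values without any improvement in performance. So I have the following questions:

- Is there an error in my mxnet regression implementation?

- Does anyone have experience that could help me get reasonable performance from mxnet on a simple regression problem like the one considered here?

- Does anyone else have an mxnet regression example that performs well?

My set-up is as follows: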

MXNet version: 0.7

R `sessionInfo()`: R version 3.3.2 (2016-10-31)

Platform: x86_64-w64-mingw32/x64 (64-bit)

Running under: Windows 7 x64 (build 7601) Service Pack 1

The poor mxnet regression results shown in the figure above come from this reproducible example:

    ## SIMPLE REGRESSION PROBLEM
    # Check mxnet out-of-the-box performance VS neuralnet, and caret/nnet

    library(mxnet)
    library(neuralnet)
    library(nnet)
    library(caret)
    library(tictoc)
    library(reshape)

    # Data definitions
    nObservations <- 1000
    noiseLvl <- 0.1

    # Network config
    nHidden <- 3
    learnRate <- 2e-6
    momentum <- 0.9
    batchSize <- 20
    nRound <- 1000
    verbose <- FALSE
    array.layout = "rowmajor"

    # GENERATE DATA:
    df <- data.frame(x1=runif(nObservations),
                     x2=runif(nObservations),
                     x3=runif(nObservations))
    df$y <- df$x1 + df$x2^2 + df$x3^3 + noiseLvl*runif(nObservations)

    # normalize data columns
    # df <- scale(df)

    # Separate data into train/test
    test.ind = seq(1, nObservations, 10)    # 1 in 10 samples for testing
    train.x = data.matrix(df[-test.ind, -which(colnames(df) %in% c("y"))])
    train.y = df[-test.ind, "y"]
    test.x = data.matrix(df[test.ind, -which(colnames(df) %in% c("y"))])
    test.y = df[test.ind, "y"]

    # Define mxnet network, following 5-minute regression example from here:
    # http://mxnet-tqchen.readthedocs.io/en/latest//packages/r/fiveMinutesNeuralNetwork.html#regression
    data <- mx.symbol.Variable("data")
    label <- mx.symbol.Variable("label")
    fc1 <- mx.symbol.FullyConnected(data, num_hidden=1, name="fc1")
    lro1 <- mx.symbol.LinearRegressionOutput(data=fc1, label=label, name="lro")

    # Train MXNET model
    mx.set.seed(0)
    tic("mxnet training 1")
    mxModel1 <- mx.model.FeedForward.create(lro1, X=train.x, y=train.y,
                                            eval.data=list(data=test.x, label=test.y),
                                            ctx=mx.cpu(), num.round=nRound,
                                            array.batch.size=batchSize,
                                            learning.rate=learnRate, momentum=momentum,
                                            eval.metric=mx.metric.rmse,
                                            verbose=FALSE, array.layout=array.layout)
    toc()

    # Train network with a hidden layer
    fc1 <- mx.symbol.FullyConnected(data, num_hidden=nHidden, name="fc1")
    tanh1 <- mx.symbol.Activation(fc1, act_type="tanh", name="tanh1")
    fc2 <- mx.symbol.FullyConnected(tanh1, num_hidden=1, name="fc2")
    lro2 <- mx.symbol.LinearRegressionOutput(data=fc2, label=label, name="lro")
    tic("mxnet training 2")
    mxModel2 <- mx.model.FeedForward.create(lro2, X=train.x, y=train.y,
                                            eval.data=list(data=test.x, label=test.y),
                                            ctx=mx.cpu(), num.round=nRound,
                                            array.batch.size=batchSize,
                                            learning.rate=learnRate, momentum=momentum,
                                            eval.metric=mx.metric.rmse,
                                            verbose=FALSE, array.layout=array.layout)
    toc()

    # Train neuralnet model
    mx.set.seed(0)
    tic("neuralnet training")
    nnModel <- neuralnet(y~x1+x2+x3, data=df[-test.ind, ], hidden=c(nHidden),
                         linear.output=TRUE, stepmax=1e6)
    toc()

    # Train caret model
    mx.set.seed(0)
    tic("nnet training")
    nnetModel <- nnet(y~x1+x2+x3, data=df[-test.ind, ], size=nHidden, trace=F,
                      linout=TRUE)
    toc()

    # Check response VS targets on training data:
    par(mfrow=c(2,2))
    plot(train.y, compute(nnModel, train.x)$net.result,
         main="neuralnet Train Fitting Fake Data", xlab="Target", ylab="Response")
    abline(0,1, col="red")

    plot(train.y, predict(nnetModel, train.x),
         main="nnet Train Fitting Fake Data", xlab="Target", ylab="Response")
    abline(0,1, col="red")

    plot(train.y, predict(mxModel1, train.x, array.layout=array.layout),
         main="MXNET (no hidden) Train Fitting Fake Data", xlab="Target",
         ylab="Response")
    abline(0,1, col="red")

    plot(train.y, predict(mxModel2, train.x, array.layout=array.layout),
         main="MXNET (with hidden) Train Fitting Fake Data", xlab="Target",
         ylab="Response")
    abline(0,1, col="red")

1 Answer

Stack Overflow user

Accepted answer

Posted on 2016-12-21 02:15:25

I asked the same question on the mxnet GitHub (link), and 乌照 suggested using a different optimization approach, so the credit goes to them.

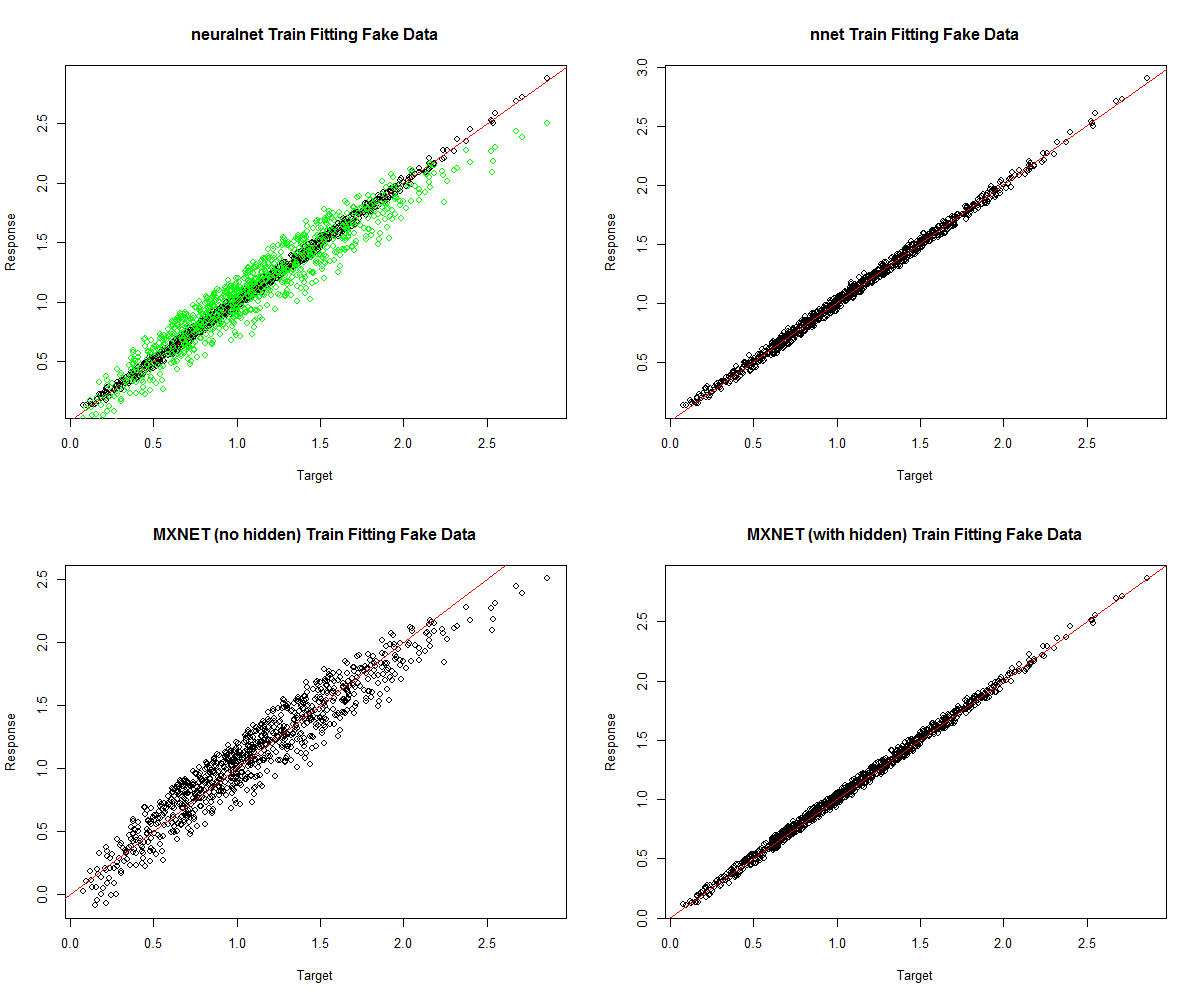

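Using the "rmsprop" optimizer, together with a larger batch size, allows mxnet to deliver performance comparable to the neuralnet and nnet tools on this simple regression task. I have also included the performance of a plain linear regression fitted with lm.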
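To give a rough feel for why the optimizer matters here, the following base-R sketch fits a purely linear model to the same kind of synthetic data with two hand-written update rules: SGD with momentum using the question's learning rate of 2e-6, and a textbook RMSProp update. It does not call mxnet and is not mxnet's implementation. The helper names (`grad`, `train`, `sgd_momentum`, `rmsprop`) and the RMSProp settings (`lr = 0.01`, `rho = 0.9`, `eps = 1e-8`) are assumptions chosen for illustration only.

```r
# Illustrative sketch only: hand-written optimizers on a linear model.
set.seed(0)
n <- 1000
X <- cbind(1, matrix(runif(n * 3), ncol = 3))   # first column is the bias term
y <- X[, 2] + X[, 3]^2 + X[, 4]^3 + 0.1 * runif(n)

rmse <- function(w) sqrt(mean((y - X %*% w)^2))

# Gradient of (half) the mean squared error on one mini-batch
grad <- function(w, idx) {
  Xb <- X[idx, , drop = FALSE]
  as.vector(t(Xb) %*% (Xb %*% w - y[idx])) / length(idx)
}

# Generic mini-batch loop; `update` returns the new weights and optimizer state
train <- function(update, n_epoch = 50, batch_size = 100) {
  w <- rep(0, ncol(X))
  state <- rep(0, ncol(X))
  for (epoch in seq_len(n_epoch)) {
    for (start in seq(1, n, by = batch_size)) {
      idx <- start:min(start + batch_size - 1, n)
      res <- update(w, state, grad(w, idx))
      w <- res$w
      state <- res$state
    }
  }
  w
}

# SGD with momentum, using the learning rate and momentum from the question
sgd_momentum <- function(w, state, g, lr = 2e-6, momentum = 0.9) {
  state <- momentum * state - lr * g
  list(w = w + state, state = state)
}

# Textbook RMSProp: each step is scaled by a running RMS of the gradient
# (lr, rho and eps are assumed values, not mxnet defaults)
rmsprop <- function(w, state, g, lr = 0.01, rho = 0.9, eps = 1e-8) {
  state <- rho * state + (1 - rho) * g^2
  list(w = w - lr * g / (sqrt(state) + eps), state = state)
}

rmse_sgd <- rmse(train(sgd_momentum))
rmse_rmsprop <- rmse(train(rmsprop))
cat("SGD + momentum RMSE:", rmse_sgd, "\n")
cat("RMSProp RMSE:       ", rmse_rmsprop, "\n")
```

With such a small learning rate the SGD weights barely move from their starting point in the same number of steps, whereas RMSProp rescales every step by the recent gradient magnitude, so its step size is largely independent of the raw gradient scale. A larger SGD learning rate would probably also work in this toy setting; the sketch only shows that the question's setting is far too small for it.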

The results and self-contained example code are included below. I hope this is helpful to others (or to my future self).

Root mean squared error (RMSE) on the training data for the five models:

$mxModel1

[1] 0.1404579862

$mxModel2

[1] 0.03263213499

$nnet

[1] 0.03222651138

$neuralnet

[1] 0.03054112057

$linearModel

[1] 0.1404421006

The plot above shows good/reasonable mxnet regression performance (linear regression results in green).
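One way to read these numbers: a network with a single fully connected layer and a linear regression output is itself a linear model, so its training RMSE cannot fall below that of an ordinary least-squares fit. That mxModel1 lands almost exactly on the lm value therefore suggests it has converged. The base-R sketch below illustrates that floor by computing the least-squares RMSE two ways (via `lm` and via the normal equations). It regenerates data in the same way as the answer's code, but the variable names `train`, `rmse_lm` and `rmse_ne` are introduced here for illustration, and the exact figures it prints are not guaranteed to match those above.

```r
# Illustrative sketch: least-squares RMSE as the floor for any linear model.
set.seed(0)
n <- 1000
df <- data.frame(x1 = runif(n), x2 = runif(n), x3 = runif(n))
df$y <- df$x1 + df$x2^2 + df$x3^3 + 0.1 * runif(n)

# Same 9-in-10 training split as in the answer's code
test.ind <- seq(1, n, 10)
train <- df[-test.ind, ]

# Ordinary least squares via lm
fit <- lm(y ~ ., data = train)
rmse_lm <- sqrt(mean(residuals(fit)^2))

# The same fit from the normal equations (X'X) w = X'y
X <- cbind(1, as.matrix(train[, c("x1", "x2", "x3")]))
w <- solve(crossprod(X), crossprod(X, train$y))
rmse_ne <- sqrt(mean((train$y - X %*% w)^2))

cat("lm RMSE:              ", rmse_lm, "\n")
cat("normal-equations RMSE:", rmse_ne, "\n")
```

The hidden-layer models can go below this floor because the tanh layer lets them capture the x2^2 and x3^3 curvature that a linear fit cannot.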

Finally, here is the code for this self-contained example:

    ## SIMPLE REGRESSION PROBLEM
    # Check mxnet out-of-the-box performance VS neuralnet, and caret/nnet

    library(mxnet)
    library(neuralnet)
    library(nnet)
    library(caret)
    library(tictoc)
    library(reshape)

    # Data definitions
    nObservations <- 1000
    noiseLvl <- 0.1

    # Network config
    nHidden <- 3
    batchSize <- 100
    nRound <- 400
    verbose <- FALSE
    array.layout = "rowmajor"
    optimizer <- "rmsprop"

    # GENERATE DATA:
    set.seed(0)
    df <- data.frame(x1=runif(nObservations),
                     x2=runif(nObservations),
                     x3=runif(nObservations))
    df$y <- df$x1 + df$x2^2 + df$x3^3 + noiseLvl*runif(nObservations)

    # normalize data columns
    # df <- scale(df)

    # Separate data into train/test
    test.ind = seq(1, nObservations, 10)    # 1 in 10 samples for testing
    train.x = data.matrix(df[-test.ind, -which(colnames(df) %in% c("y"))])
    train.y = df[-test.ind, "y"]
    test.x = data.matrix(df[test.ind, -which(colnames(df) %in% c("y"))])
    test.y = df[test.ind, "y"]

    # Define mxnet network, following 5-minute regression example from here:
    # http://mxnet-tqchen.readthedocs.io/en/latest//packages/r/fiveMinutesNeuralNetwork.html#regression
    data <- mx.symbol.Variable("data")
    label <- mx.symbol.Variable("label")
    fc1 <- mx.symbol.FullyConnected(data, num_hidden=1, name="fc1")
    lro1 <- mx.symbol.LinearRegressionOutput(data=fc1, label=label, name="lro")

    # Train MXNET model
    mx.set.seed(0)
    tic("mxnet training 1")
    mxModel1 <- mx.model.FeedForward.create(lro1, X=train.x, y=train.y,
                                            eval.data=list(data=test.x, label=test.y),
                                            ctx=mx.cpu(), num.round=nRound,
                                            array.batch.size=batchSize,
                                            eval.metric=mx.metric.rmse,
                                            verbose=verbose,
                                            array.layout=array.layout,
                                            optimizer=optimizer
                                            )
    toc()

    # Train network with a hidden layer
    fc1 <- mx.symbol.FullyConnected(data, num_hidden=nHidden, name="fc1")
    tanh1 <- mx.symbol.Activation(fc1, act_type="tanh", name="tanh1")
    fc2 <- mx.symbol.FullyConnected(tanh1, num_hidden=1, name="fc2")
    lro2 <- mx.symbol.LinearRegressionOutput(data=fc2, label=label, name="lro2")
    tic("mxnet training 2")
    mx.set.seed(0)
    mxModel2 <- mx.model.FeedForward.create(lro2, X=train.x, y=train.y,
                                            eval.data=list(data=test.x, label=test.y),
                                            ctx=mx.cpu(), num.round=nRound,
                                            array.batch.size=batchSize,
                                            eval.metric=mx.metric.rmse,
                                            verbose=verbose,
                                            array.layout=array.layout,
                                            optimizer=optimizer
                                            )
    toc()

    # Train neuralnet model
    set.seed(0)
    tic("neuralnet training")
    nnModel <- neuralnet(y~x1+x2+x3, data=df[-test.ind, ], hidden=c(nHidden),
                         linear.output=TRUE, stepmax=1e6)
    toc()

    # Train nnet model
    set.seed(0)
    tic("nnet training")
    nnetModel <- nnet(y~x1+x2+x3, data=df[-test.ind, ], size=nHidden, trace=FALSE,
                      linout=TRUE)
    toc()

    # Check response VS targets on training data:
    par(mfrow=c(2,2))
    plot(train.y, compute(nnModel, train.x)$net.result,
         main="neuralnet Train Fitting Fake Data", xlab="Target", ylab="Response")
    abline(0,1, col="red")

    # Plot linear model performance for reference (green points on the first panel)
    linearModel <- lm(y~., df[-test.ind, ])
    points(train.y, predict(linearModel, data.frame(train.x)), col="green")

    plot(train.y, predict(nnetModel, train.x),
         main="nnet Train Fitting Fake Data", xlab="Target", ylab="Response")
    abline(0,1, col="red")

    plot(train.y, predict(mxModel1, train.x, array.layout=array.layout),
         main="MXNET (no hidden) Train Fitting Fake Data", xlab="Target",
         ylab="Response")
    abline(0,1, col="red")

    plot(train.y, predict(mxModel2, train.x, array.layout=array.layout),
         main="MXNET (with hidden) Train Fitting Fake Data", xlab="Target",
         ylab="Response")
    abline(0,1, col="red")

    # Create and print table of results (RMSE on the training data):
    results <- list()
    rmse <- function(target, response) {
      return(sqrt(mean((target - response)^2)))
    }
    results$mxModel1 <- rmse(train.y, predict(mxModel1, train.x,
                                              array.layout=array.layout))
    results$mxModel2 <- rmse(train.y, predict(mxModel2, train.x,
                                              array.layout=array.layout))
    results$nnet <- rmse(train.y, predict(nnetModel, train.x))
    results$neuralnet <- rmse(train.y, compute(nnModel, train.x)$net.result)
    results$linearModel <- rmse(train.y, predict(linearModel, data.frame(train.x)))
    print(results)

Page content originally provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/41217664
