Keras NoneTypeError in a deep autoencoder-decoder structure / shape error

Asked 2021-04-23 07:35:53

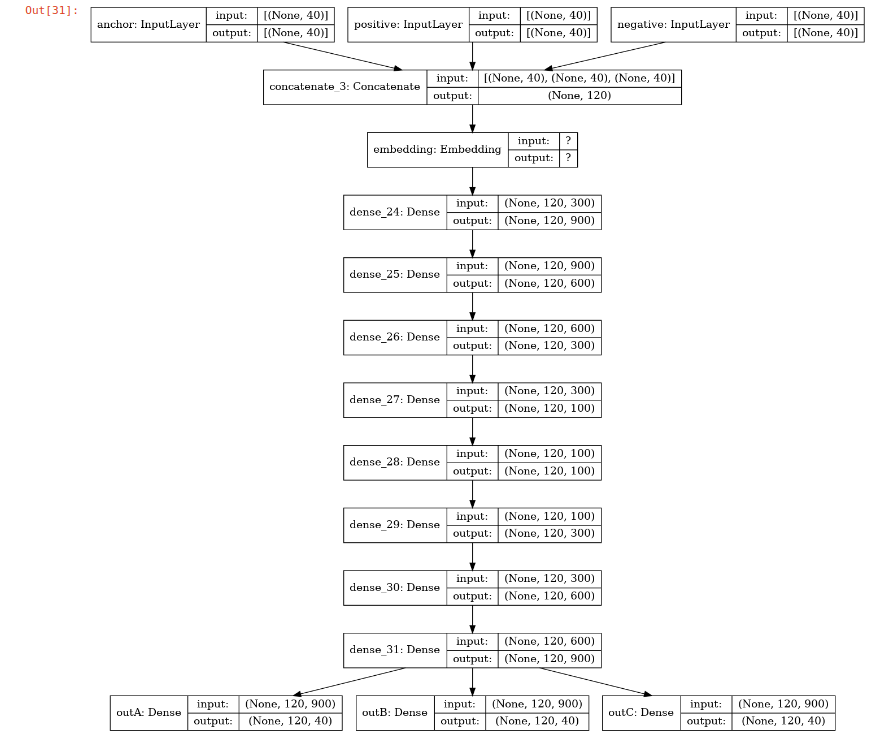

I am having trouble training my neural network. I defined the network as follows:

    shared = embedding_layer

    inputA = keras.Input(shape=(40, ), name="anchor")    # Sequence of 40 token ids
    inputP = keras.Input(shape=(40, ), name="positive")  # Sequence of 40 token ids
    inputN = keras.Input(shape=(40, ), name="negative")  # Sequence of 40 token ids

    concatenated = layers.concatenate([inputA, inputP, inputN])

    embedded_A = shared(concatenated)

    encoded = Dense(900, activation = "relu")(embedded_A)
    encoded = Dense(600, activation = "relu")(encoded)
    encoded = Dense(300, activation = "relu")(encoded)
    encoded = Dense(100, activation = "relu")(encoded)

    decoded = Dense(100, activation = "relu")(encoded)
    decoded = Dense(300, activation = "relu")(decoded)
    decoded = Dense(600, activation = "relu")(decoded)
    decoded = Dense(900, activation = "relu")(decoded)

    predictionsA = Dense(40, activation="sigmoid", name ='outA')(decoded)
    predictionsP = Dense(40, activation="sigmoid", name ='outB')(decoded)
    predictionsN = Dense(40, activation="sigmoid", name ='outC')(decoded)

    ml_model = keras.Model(
        inputs=[inputA, inputP, inputN],
        outputs=[predictionsA, predictionsP, predictionsN]
    )

    ml_model.compile(
        optimizer='adam',
        loss='mse'
    )

    ml_model.fit(
        {"anchor": anchor, "positive": positive, "negative": negative},
        {"outA": anchor, "outB": positive, 'outC': negative},
        epochs=2)

Schematically, it looks like this:

The embedding layer is defined as follows:

    embedding_m = model.syn0

    embedding_layer = Embedding(len(vocab),
                                300,
                                weights=[embedding_m],
                                input_length=40,
                                trainable=True)

What I feed into the network is three numpy arrays of shape (120000, 40), which look like this:

    array([[   2334,   23764,    7590, ..., 3000001, 3000001, 3000001],
           [3000000,    1245,    1124, ..., 3000001, 3000001, 3000001],
           [    481,     491,    5202, ..., 3000001, 3000001, 3000001],
           ...,
           [3000000,     125,   20755, ..., 3000001, 3000001, 3000001],
           [1217971,  168575,     239, ...,    9383,    1039,   87315],
           [  12990,      91,  258231, ..., 3000001, 3000001, 3000001]])

The input and the output are the same because I am building an autoencoder-decoder.

The error I get is:

    Dimensions must be equal, but are 120 and 32 for '{{node mean_squared_error/SquaredDifference}} = SquaredDifference[T=DT_FLOAT]' with input shapes: [32,120,40], [32,40].

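But I cannot figure out why this happens or how to fix it. Any ideas? I can provide more examples if needed. I suspect there is some kind of dimension error, because ideally I want the output to have shape (120000, 40), exactly the same as my input.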
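The suspected dimension mismatch can be checked by tracing tensor shapes through a scaled-down copy of the model. The following is a minimal sketch, not the original code: `VOCAB_SIZE`, `EMBED_DIM`, and the single 16-unit hidden layer are assumed stand-ins for `len(vocab)`, the 300-dimensional vectors, and the full encoder-decoder stack. It suggests that the extra axis of size 120 comes from embedding the concatenated (batch, 120) input, since `Dense` only transforms the last axis.

```python
from tensorflow import keras
from tensorflow.keras import layers

VOCAB_SIZE = 1000  # assumption: small stand-in for len(vocab)
EMBED_DIM = 8      # assumption: small stand-in for the 300-dim embedding

names = ("anchor", "positive", "negative")
inputs = [keras.Input(shape=(40,), dtype="int32", name=n) for n in names]

# Concatenating three (None, 40) inputs along the last axis gives (None, 120).
concatenated = layers.concatenate(inputs)

# Embedding adds a trailing feature axis: (None, 120) -> (None, 120, EMBED_DIM).
embedded = layers.Embedding(VOCAB_SIZE, EMBED_DIM)(concatenated)

# Dense acts on the last axis only, so the 120 axis is carried through.
hidden = layers.Dense(16, activation="relu")(embedded)
output = layers.Dense(40, activation="sigmoid", name="outA")(hidden)

shapes = {
    "concatenated": tuple(concatenated.shape),  # (None, 120)
    "embedded": tuple(embedded.shape),          # (None, 120, 8)
    "output": tuple(output.shape),              # (None, 120, 40)
}
for name, shape in shapes.items():
    print(name, shape)
```

An output of shape (None, 120, 40) cannot be subtracted element-wise from a (None, 40) target, which is consistent with the 120 and 32 (the batch size) reported in the error.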

2 Answers

Stack Overflow user

Accepted answer

Answered 2021-04-23 11:26:59

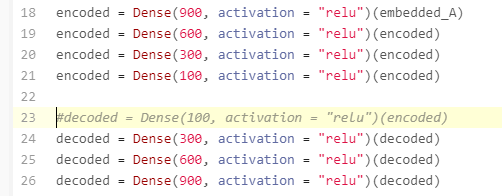

Here is a fixed version of the problematic encoder-decoder:

    import tensorflow as tf
    from tensorflow import keras
    from tensorflow.keras import layers
    from tensorflow.keras.layers import Dense  # use tf.keras consistently

    #shared = embedding_layer
    # Simulate the embedding layer with a Dense layer
    shared = Dense(1, activation="relu")

    inputA = keras.Input(shape=(40, ), name="anchor")    # Sequence of 40 values
    inputP = keras.Input(shape=(40, ), name="positive")  # Sequence of 40 values
    inputN = keras.Input(shape=(40, ), name="negative")  # Sequence of 40 values

    concatenated = layers.concatenate([inputA, inputP, inputN])

    embedded_A = shared(concatenated)

    encoded = Dense(900, activation = "relu")(embedded_A)
    encoded = Dense(600, activation = "relu")(encoded)
    encoded = Dense(300, activation = "relu")(encoded)
    encoded = Dense(100, activation = "relu")(encoded)

    #decoded = Dense(100, activation = "relu")(encoded)
    decoded = Dense(300, activation = "relu")(encoded)
    decoded = Dense(600, activation = "relu")(decoded)
    decoded = Dense(900, activation = "relu")(decoded)

    predictionsA = Dense(40, activation="sigmoid", name ='outA')(decoded)
    predictionsP = Dense(40, activation="sigmoid", name ='outB')(decoded)
    predictionsN = Dense(40, activation="sigmoid", name ='outC')(decoded)

    ml_model = keras.Model(
        inputs=[inputA, inputP, inputN],
        outputs=[predictionsA, predictionsP, predictionsN]
    )

    ml_model.compile(
        optimizer='adam',
        loss='mse'
    )

    # Simulate the training data
    anchor = tf.random.uniform((100, 40))
    positive = tf.random.uniform((100, 40))
    negative = tf.random.uniform((100, 40))

    ml_model.fit(
        {"anchor": anchor, "positive": positive, "negative": negative},
        {"outA": anchor, "outB": positive, 'outC': negative},
        epochs=2)

Stack Overflow user

Answered 2021-04-23 09:30:59

Remove the "decoded" line to fix the network structure.
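When `shared` is a real `Embedding` layer rather than the simulated `Dense(1)`, the embedding output is 3-D, so the output heads would presumably still have shape (batch, 120, 40). The following is a minimal sketch of one possible way to bring them back to (batch, 40), assuming a `Flatten` layer is placed after the embedding. It is not taken from either answer, and `VOCAB_SIZE`, `EMBED_DIM`, `N_SAMPLES`, and the reduced layer widths are hypothetical values chosen to keep the example small.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

VOCAB_SIZE = 1000  # assumption: small stand-in for len(vocab)
EMBED_DIM = 8      # assumption: small stand-in for the 300-dim embedding
N_SAMPLES = 64     # assumption: tiny dataset just to exercise fit()

names = ("anchor", "positive", "negative")
inputs = [keras.Input(shape=(40,), dtype="int32", name=n) for n in names]

concatenated = layers.concatenate(inputs)                          # (None, 120)
embedded = layers.Embedding(VOCAB_SIZE, EMBED_DIM)(concatenated)   # (None, 120, 8)
flat = layers.Flatten()(embedded)                                  # (None, 960)

# Reduced encoder/decoder widths; the original uses 900-600-300-100.
encoded = layers.Dense(32, activation="relu")(flat)
decoded = layers.Dense(64, activation="relu")(encoded)

outputs = [
    layers.Dense(40, activation="sigmoid", name=n)(decoded)
    for n in ("outA", "outB", "outC")
]

model = keras.Model(inputs=inputs, outputs=outputs)
model.compile(optimizer="adam", loss="mse")

# Random token ids; the targets mirror the question (the id arrays
# themselves). Only the shapes matter for this sketch.
rng = np.random.default_rng(0)
x = [rng.integers(0, VOCAB_SIZE, size=(N_SAMPLES, 40)).astype("int32")
     for _ in names]
y = [a.astype("float32") for a in x]

model.fit(x, y, epochs=1, batch_size=32, verbose=0)

output_shapes = [tuple(t.shape) for t in model.outputs]
pred_shapes = [p.shape for p in model.predict(x, verbose=0)]
print(output_shapes)
print(pred_shapes)
```

With the flattening step, each output head is 2-D and lines up with a (batch, 40) target, so the loss can be computed without the shape error.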

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/67225803
