Optimizing a Keras CNN

I am having trouble improving my CNN's accuracy and reducing its loss.

Here are some initial parameters:

batch_size = 32

image_shape = 150 # Sizes input to 150x150

EPOCHS = 250

STEPS_PER_EPOCH = 7

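IMAGES_IN_CLASS_FOLDERS > 100

I set the training and validation sets to the same images, but I augment the training images during preprocessing so that they differ from the validation images: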

# Image formatting - Preprocessing images into floating point tensors before being fed into the network
# Generator for our training data - Rescales the image, Flips Images Horizontally, Rotates it
train_image_generator = ImageDataGenerator(rescale=1./255, horizontal_flip=True, rotation_range=45)

# Generator for our validation data - Rescales the image
validation_image_generator = ImageDataGenerator(rescale=1./255)

# Applies scaling and resizing
train_data_gen = train_image_generator.flow_from_directory(batch_size=batch_size,
                                                           directory=training_Images,
                                                           shuffle=True,
                                                           target_size=(image_shape, image_shape), #(100,100)
                                                           class_mode='categorical')

val_data_gen = validation_image_generator.flow_from_directory(batch_size=batch_size,
                                                              directory=validate_Images,
                                                              shuffle=True,
                                                              target_size=(image_shape, image_shape),
                                                              class_mode='categorical')

In addition, I have a Sequential model. I have tried various configurations, such as Conv2D(32) -> Conv2D(64) -> Conv2D(128), but I am currently testing this model, without success:

# Defining our model
model = tf.keras.models.Sequential([
    # Old Method #
    tf.keras.layers.Conv2D(8, (2,2), activation='LeakyReLU', input_shape=(image_shape, image_shape, 3)),
    tf.keras.layers.Conv2D(16, (2,2), activation='LeakyReLU'),
    tf.keras.layers.Conv2D(32, (2,2), activation='LeakyReLU'),
    tf.keras.layers.MaxPooling2D(2,2),
    tf.keras.layers.Conv2D(40, (2,2), activation='LeakyReLU'),
    tf.keras.layers.Conv2D(56, (2,2), activation='LeakyReLU'),
    tf.keras.layers.Conv2D(64, (2,2), activation='LeakyReLU'),
    tf.keras.layers.MaxPooling2D(2,2),
    tf.keras.layers.Conv2D(96, (2,2), activation='LeakyReLU'),
    tf.keras.layers.Conv2D(128, (2,2), activation='LeakyReLU'),
    tf.keras.layers.MaxPooling2D(2,2),
    tf.keras.layers.Flatten(),
    tf.keras.layers.Dense(16, activation='softmax'),
    #tf.keras.layers.Dense(128, activation='relu'),
    #tf.keras.layers.Dense(512, activation='relu'),
    tf.keras.layers.Dense(120)
    # End Old Method #
])

I have tried different Conv2D layers and different activation functions. Here is model.compile:

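model.compile(optimizer=SGD(lr=0.01),
              loss=tf.keras.losses.CategoricalCrossentropy(),
              metrics=['accuracy'])

I am using the SGD optimizer. I also tried Adam, but the results were similar. The loss decreases over time, but it seems to settle into a certain range of values and plateau, with no increase in accuracy.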

model.fit:

history = model.fit(
    train_data_gen,
    steps_per_epoch=stepForEpoch,
    epochs=EPOCHS,
    validation_data=val_data_gen,
    validation_steps=stepForEpoch
)

Can anyone give me some advice, or point me in the right direction, on how to improve accuracy and further reduce the loss? Thanks!

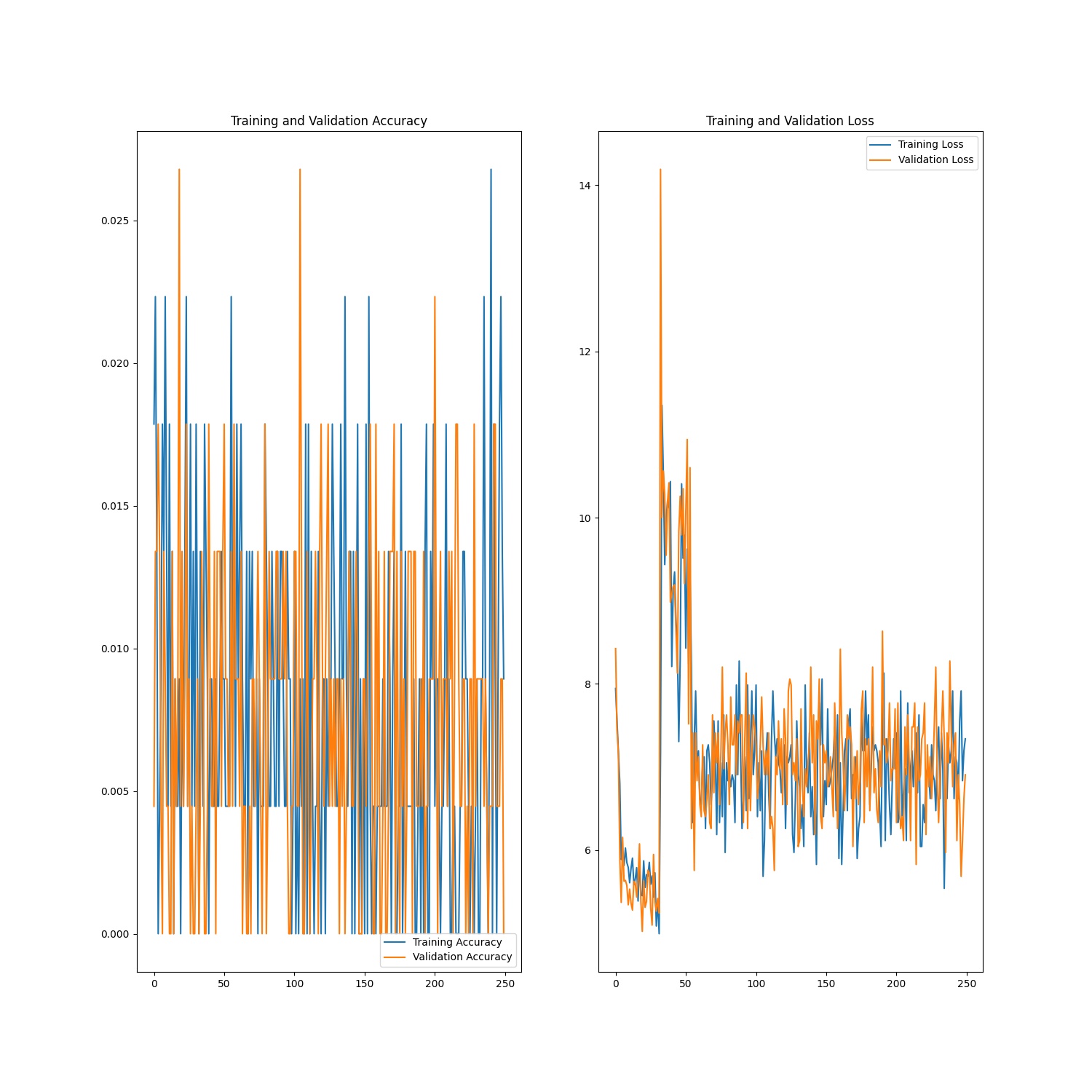

Result image

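Final update, 06/23/2021: my model is improving significantly, not only with more epochs but also with more STEPS_PER_EPOCH:

By dividing the number of images by the batch size (IMAGES_IN_DATASET (20700) / BATCH_SIZE (32) = 677 STEPS_PER_EPOCH) and choosing 100 epochs for testing, I got an accuracy increase of about +10%, and the loss keeps decreasing as the MSE improves.

ACCURACY_INCREASE = 10%

MSE_IMPROVEMENT = -0.0004

ACCURACY_LOSS_IMPROVEMENT = -1.1

Thanks to user Dr. @Reda El Hail.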
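The STEPS_PER_EPOCH calculation from the update can be sketched in plain Python. This is only a minimal illustration: the helper name `steps_per_epoch` is hypothetical, and rounding up (so that a final partial batch still counts as a step) is an assumption, not something the post states. With the figures quoted above the division gives 647 rather than 677, so one of the quoted numbers is presumably approximate.

```python
import math


def steps_per_epoch(num_images, batch_size):
    """Number of batches needed to see every image once (hypothetical helper).

    Rounds up so that a final, smaller batch still counts as a step.
    """
    return math.ceil(num_images / batch_size)


# Figures quoted in the update: 20700 images, batch size 32.
full_pass_steps = steps_per_epoch(20700, 32)  # 647

# The original setting, STEPS_PER_EPOCH = 7 with batch_size = 32,
# showed the network only this many images per epoch:
images_seen_originally = 7 * 32  # 224

print(full_pass_steps, images_seen_originally)
```

Seen this way, the original configuration covered only a small fraction of the dataset in each epoch, which may help explain why raising the step count improved the results.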

1 Answer

Stack Overflow user

Posted on 2021-06-22 17:33:59

To summarize the discussion in the comments: the error comes from the last layer, tf.keras.layers.Dense(120), which has no activation function set.

For a classification task, it should be tf.keras.layers.Dense(120, activation='softmax').
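A minimal sketch of what this fix could look like in a complete model follows. It is not the asker's final network: the layer sizes, the 3x3 kernels, and the ReLU hidden activations are assumptions chosen to keep the example small, and only the 120-unit softmax output, the 150x150 input size, and the SGD/CategoricalCrossentropy setup are taken from the question.

```python
import numpy as np
import tensorflow as tf

NUM_CLASSES = 120   # from Dense(120) in the question
IMAGE_SHAPE = 150   # from image_shape in the question


def build_model(num_classes=NUM_CLASSES, image_shape=IMAGE_SHAPE):
    """Small illustrative CNN: ReLU in hidden layers, softmax only at the output."""
    return tf.keras.models.Sequential([
        tf.keras.Input(shape=(image_shape, image_shape, 3)),
        tf.keras.layers.Conv2D(32, (3, 3), activation='relu'),
        tf.keras.layers.MaxPooling2D(2, 2),
        tf.keras.layers.Conv2D(64, (3, 3), activation='relu'),
        tf.keras.layers.MaxPooling2D(2, 2),
        tf.keras.layers.Flatten(),
        # Hidden dense layer uses ReLU (an assumption), not softmax.
        tf.keras.layers.Dense(128, activation='relu'),
        # Output layer: one unit per class, softmax turns scores into probabilities.
        tf.keras.layers.Dense(num_classes, activation='softmax'),
    ])


model = build_model()
model.compile(
    optimizer=tf.keras.optimizers.SGD(learning_rate=0.01),
    # The default from_logits=False expects probabilities, which softmax provides.
    loss=tf.keras.losses.CategoricalCrossentropy(),
    metrics=['accuracy'],
)

# Sanity check on dummy input: each row of the output should sum to 1.
dummy_batch = np.zeros((2, IMAGE_SHAPE, IMAGE_SHAPE, 3), dtype='float32')
probs = model.predict(dummy_batch, verbose=0)
print(probs.shape, probs.sum(axis=1))
```

An alternative that should behave equivalently is to leave the last layer without an activation and construct the loss with `from_logits=True`. What matters is that the loss and the output layer agree on whether the outputs are probabilities or raw scores.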

As @Snoopy pointed out: using softmax in hidden layers makes no sense. It should only be used in the output layer.
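One way to see why softmax is a poor fit for a hidden layer is the pure-Python sketch below. It is an illustration rather than part of the original answer, and the `softmax` helper is written here for the example. Softmax forces its outputs to be positive and sum to 1, and it depends only on the differences between its inputs, so very different pre-activations can collapse to the same output.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


# Two pre-activation vectors that differ by a constant offset of 100.
small = softmax([1.0, 2.0, 3.0])
large = softmax([101.0, 102.0, 103.0])

# Both produce the same output, and each output sums to 1.
print(small)
print(large)
print(sum(small))
```

In the question's model, the Dense(16, activation='softmax') layer therefore passes only a 16-way probability distribution to the final layer, which discards information. That is useful at the output, where a distribution over classes is exactly what is wanted, but limiting in the middle of a network.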

https://stackoverflow.com/questions/68087196
