如何使用PyBullet将PyBullet模拟坐标投影到渲染的帧像素坐标?

如何使用PyBullet将PyBullet模拟坐标投影到渲染的帧像素坐标?

提问于 2021-11-01 22:54:59

如何将PyBullet中的对象位置转换为像素坐标,并使用PyBullet和OpenCV在帧上画一条线?

我们希望这样做,因为PyBullet本机addUserDebugLine()函数在直接模式下不可用。

import pybullet as p

import numpy as np

import time

import pybullet_data

import cv2

VIDEO_RESOLUTION = (1280, 720)

MY_COLORS = [(255,0,0), (0,255,0), (0,0,255)]

def capture_frame(base_pos=[0,0,0], _cam_dist=3, _cam_yaw=45, _cam_pitch=-45):

_render_width, _render_height = VIDEO_RESOLUTION

view_matrix = p.computeViewMatrixFromYawPitchRoll(

cameraTargetPosition=base_pos,

distance=_cam_dist,

yaw=_cam_yaw,

pitch=_cam_pitch,

roll=0,

upAxisIndex=2)

proj_matrix = p.computeProjectionMatrixFOV(

fov=90, aspect=float(_render_width) / _render_height,

nearVal=0.01, farVal=100.0)

(_, _, px, _, _) = p.getCameraImage(

width=_render_width, height=_render_height, viewMatrix=view_matrix,

projectionMatrix=proj_matrix, renderer=p.ER_TINY_RENDERER) # ER_BULLET_HARDWARE_OPENGL)

rgb_array = np.array(px, dtype=np.uint8)

rgb_array = np.reshape(rgb_array, (_render_height, _render_width, 4))

rgb_array = rgb_array[:, :, :3]

return rgb_array, view_matrix, proj_matrix

def render():

frame, vmat, pmat = capture_frame()

p1, cubeOrn = p.getBasePositionAndOrientation(1)

p2, cubeOrn = p.getBasePositionAndOrientation(2)

frame, view_matrix, proj_matrix = capture_frame()

frame = cv2.resize(frame, VIDEO_RESOLUTION)

points = {}

# reshape matrices

my_order = 'C'

pmat = np.array(proj_matrix).reshape((4,4), order=my_order)

vmat = np.array(view_matrix).reshape((4,4), order=my_order)

fmat = vmat.T @ pmat.T

# compute origin from origin point in simulation

origin = np.array([0,0,0,1])

frame_origin = (fmat @ origin)[:3]*np.array([1280, 640, 0]) + np.array([640, 360, 0])

# define unit vectors

unit_vectors = [ np.array([1,0,0,1]),

np.array([0,1,0,1]),

np.array([0,0,1,1]) ]

for col_id, unit_vector in enumerate(unit_vectors):

cur_point = (fmat @ unit_vector)[:3]*np.array([1280, 640, 0]) + np.array([640, 360, 0])

cv2.line(frame, (640,360), (int(cur_point[0]),int(cur_point[1])), color=MY_COLORS[col_id], thickness=2)

cv2.imwrite("my_rendering.jpg", frame)

print(p1,p2)

if __name__ == '__main__':

physicsClient = p.connect(p.DIRECT)#or p.DIRECT for non-graphical version

p.setAdditionalSearchPath(pybullet_data.getDataPath()) #optionally

p.setGravity(0,0,-10)

planeId = p.loadURDF("plane.urdf")

startPos = [1,0,0.2]

startOrientation = p.getQuaternionFromEuler([0,0,0])

boxId = p.loadURDF("r2d2.urdf",startPos, startOrientation)

startPos = [0,2,0.2]

boxId = p.loadURDF("r2d2.urdf",startPos, startOrientation)

#set the center of mass frame (loadURDF sets base link frame) startPos/Ornp.resetBasePositionAndOrientation(boxId, startPos, startOrientation)

for i in range (2400):

if i == 2399:

render()

p.stepSimulation()

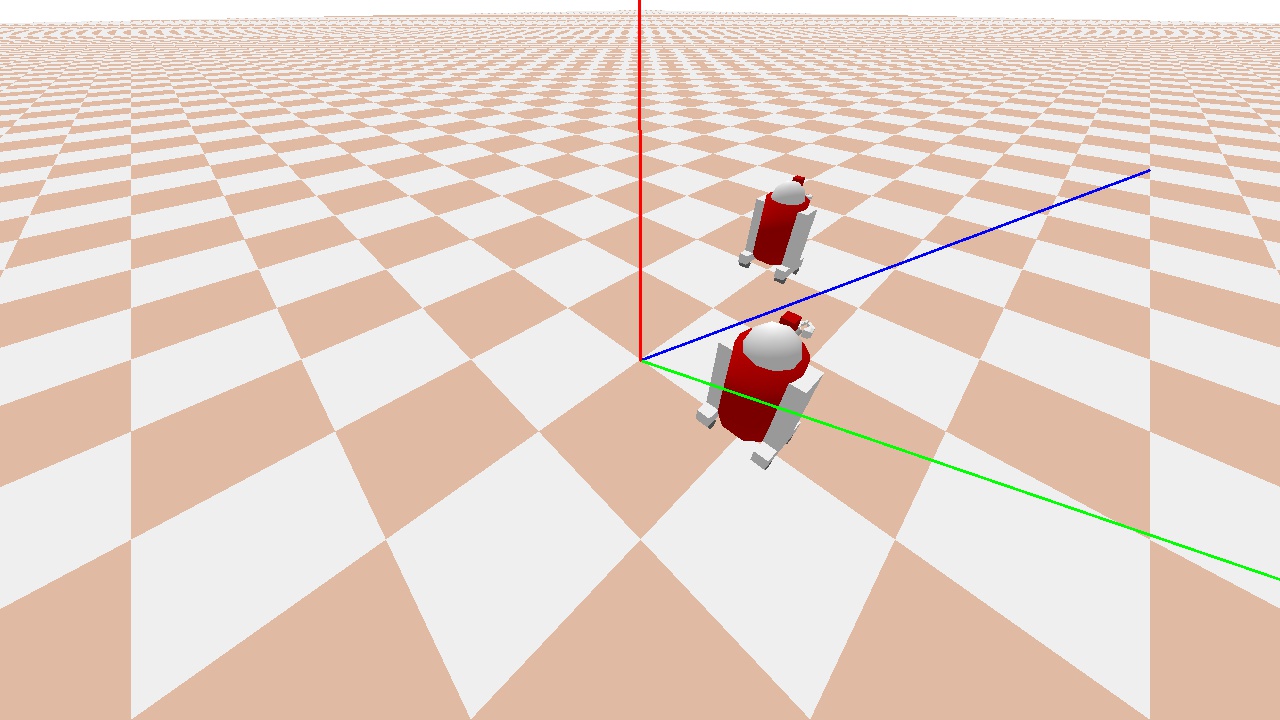

p.disconnect()预期的输出将是以下帧,但原点坐标帧绘制正确。例如X、Y和Z轴分别是红色、蓝色和绿色。

由于这两个R2D2机器人分别位于1,0,0和0,1,0,0,我们可以看到坐标帧是关闭的。(见下图)

我们尝试了以下几点:

matrices

- changing的

- 变换矩阵的

- ,而不是按计算fmat的顺序,例如pmat @ vmat,而不是vmat @ pmat等,

。

任何帮助都是非常感谢的。

回答 1

Stack Overflow用户

回答已采纳

发布于 2021-11-15 22:31:28

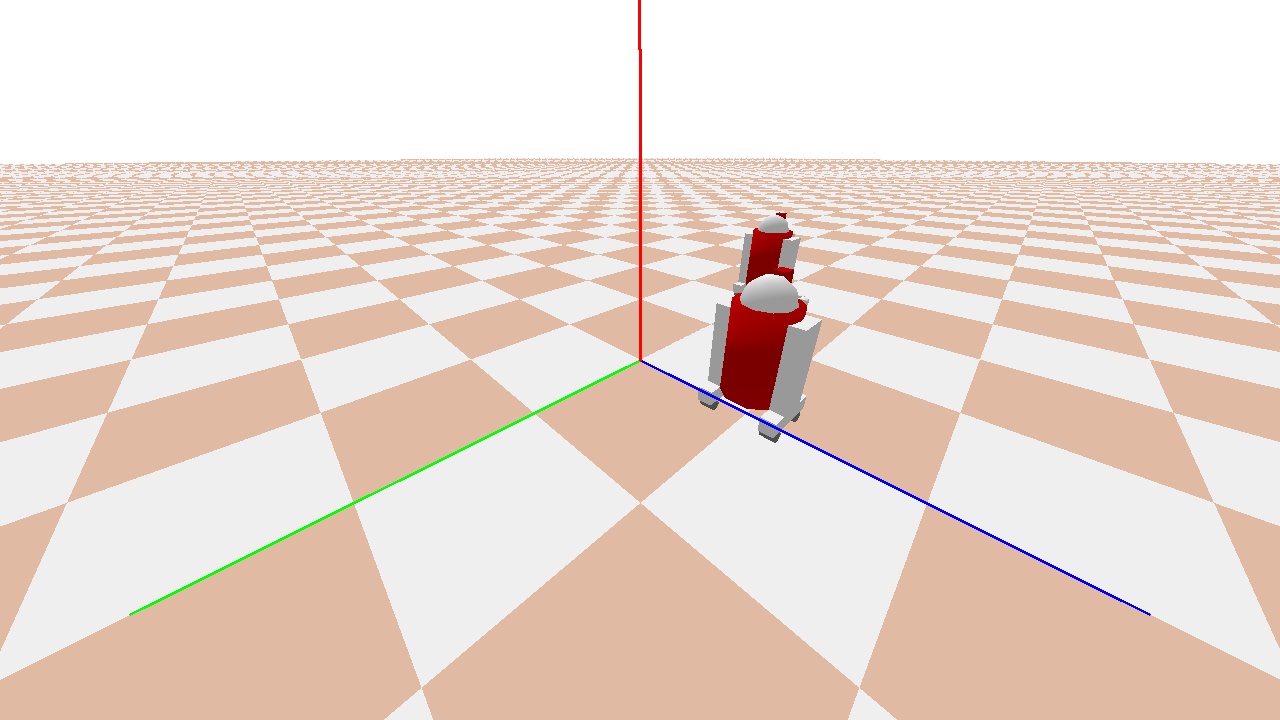

经过大量的摆弄,我想出了一个解决办法。玩了一会儿,我到了一个点,它看起来几乎没有问题,除了轴的旋转给偏航角。因此,我对computeViewMatrixFromYawPitchRoll进行了第二次调用,但是使用了相反的偏航,以便计算轴的转换。不幸的是,我不知道为什么这样做.但很管用!注意: base_pos、_cam_dist、_cam_yaw和_cam_pitch已被替换为render()注意:向上的方向也被颠倒了(不要问为什么.(-)一个相当混乱的解释,我必须承认.

import pybullet as p

import numpy as np

import time

import pybullet_data

import cv2

import os

VIDEO_RESOLUTION = (1280, 720)

MY_COLORS = [(255,0,0), (0,255,0), (0,0,255)]

K=np.array([[1280,0,0],[0,720,0],[0,0,1]])

def capture_frame(base_pos, _cam_dist, _cam_yaw, _cam_pitch):

_render_width, _render_height = VIDEO_RESOLUTION

view_matrix = p.computeViewMatrixFromYawPitchRoll(

cameraTargetPosition=base_pos,

distance=_cam_dist,

yaw=_cam_yaw,

pitch=_cam_pitch,

roll=0,

upAxisIndex=2)

proj_matrix = p.computeProjectionMatrixFOV(

fov=90, aspect=float(_render_width) / _render_height,

nearVal=0.01, farVal=100.0)

(_, _, px, _, _) = p.getCameraImage(

width=_render_width, height=_render_height, viewMatrix=view_matrix,

projectionMatrix=proj_matrix, renderer=p.ER_TINY_RENDERER) # ER_BULLET_HARDWARE_OPENGL)

rgb_array = np.array(px, dtype=np.uint8)

rgb_array = np.reshape(rgb_array, (_render_height, _render_width, 4))

rgb_array = rgb_array[:, :, :3]

return rgb_array, view_matrix, proj_matrix

def render():

p1, cubeOrn = p.getBasePositionAndOrientation(1)

p2, cubeOrn = p.getBasePositionAndOrientation(2)

base_pos=[0,0,0]

_cam_dist=3

_cam_yaw=45

_cam_pitch=-30

frame, view_matrix, proj_matrix = capture_frame(base_pos, _cam_dist, _cam_yaw, _cam_pitch)

frame = cv2.resize(frame, VIDEO_RESOLUTION)

points = {}

# inverse transform

view_matrix = p.computeViewMatrixFromYawPitchRoll(

cameraTargetPosition=base_pos,

distance=_cam_dist,

yaw=-_cam_yaw,

pitch=_cam_pitch,

roll=0,

upAxisIndex=2)

my_order = 'C'

pmat = np.array(proj_matrix).reshape((4,4), order=my_order)

vmat = np.array(view_matrix).reshape((4,4), order=my_order)

fmat = pmat @ vmat.T

# compute origin from origin point in simulation

origin = np.array([0,0,0,1])

frame_origin = (fmat @ origin)[:3]*np.array([1280, 720, 0]) + np.array([640, 360, 0])

# define unit vectors

unit_vectors = [ np.array([1,0,0,1]),

np.array([0,1,0,1]),

np.array([0,0,-1,1]) ]

for col_id, unit_vector in enumerate(unit_vectors):

cur_point = (fmat @ unit_vector)[:3]*np.array([1280, 720, 0]) + np.array([640, 360, 0])

cv2.line(frame, (640,360), (int(cur_point[0]),int(cur_point[1])), color=MY_COLORS[col_id], thickness=2)

cv2.imwrite("my_rendering.jpg", frame)

print(p1,p2)

if __name__ == '__main__':

physicsClient = p.connect(p.DIRECT)#or p.DIRECT for non-graphical version

#physicsClient = p.connect(p.GUI)#or p.DIRECT for non-graphical version

p.setAdditionalSearchPath(pybullet_data.getDataPath()) #optionally

p.setGravity(0,0,-10)

planeId = p.loadURDF("plane.urdf")

#arrows = p.loadURDF("arrows.urdf")

startPos = [1,0,0.2]

startOrientation = p.getQuaternionFromEuler([0,0,0])

boxId = p.loadURDF("r2d2.urdf",startPos, startOrientation)

startPos = [0,2,0.2]

boxId = p.loadURDF("r2d2.urdf",startPos, startOrientation)

#set the center of mass frame (loadURDF sets base link frame) startPos/Ornp.resetBasePositionAndOrientation(boxId, startPos, startOrientation)

for i in range (2400):

if i == 2399:

render()

p.stepSimulation()

p.disconnect()结果是:致以最良好的问候。

页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/69803623

复制相关文章

相似问题