TensorFlow gradient tape returns None


Asked on 2022-03-31 17:18:19

I am trying to compute gradients in TensorFlow using a gradient tape.

Description:

- A - tf.constant

- X - tf.Variable

- Y - tf.Variable

Functions:

- get_regularization_loss - computes the L1/L2 penalty

- construct_loss_function - computes the loss

- get_gradients - computes the loss and the gradients w.r.t. X and Y

Currently, I am getting None as the gradient for both X and Y. Any suggestions on what might be wrong?
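The code below calls get_L1_penalty and get_L2_penalty without showing them. For the snippets to run, something like the following is assumed. These are hypothetical definitions (alpha times the sum of absolute values, and alpha times the sum of squares, over the block passed in), not the asker's code:

```python
import tensorflow as tf


def get_L1_penalty(X_block, alpha):
    """Hypothetical L1 penalty: alpha * sum(|x|) over the given block."""
    return alpha * tf.reduce_sum(tf.abs(X_block))


def get_L2_penalty(X_block, alpha):
    """Hypothetical L2 penalty: alpha * sum(x^2) over the given block."""
    return alpha * tf.reduce_sum(tf.square(X_block))
```

Both return ordinary tensors, so a gradient tape can differentiate them with respect to the variable the block was sliced from.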

```python
import tensorflow as tf

# get_L1_penalty / get_L2_penalty are defined elsewhere (not shown in the question).


def get_regularization_loss(X, loss_info):
    """L1/L2 penalty on the sub-matrix of X described by loss_info."""
    penalty = loss_info['penalty_type']
    alpha = loss_info['alpha']

    # Extract sub-matrix bounds
    X_00, X_10, X_01, X_11 = loss_info['X_start_row'], loss_info['X_end_row'], loss_info['X_start_col'], loss_info['X_end_col']

    if penalty == 'L2':
        loss_regularization_X = get_L2_penalty(X[X_00:X_10, X_01:X_11], alpha)
    elif penalty == 'L1':
        loss_regularization_X = get_L1_penalty(X[X_00:X_10, X_01:X_11], alpha)
    else:
        loss_regularization_X = tf.Variable(0, dtype=tf.float64)

    return loss_regularization_X


def construct_loss_function(A, X, Y, loss_info):
    """Loss between a block of A and the product of a block of X and a block of Y."""
    # Extract sub-matrix bounds
    A_00, A_10, A_01, A_11 = loss_info['A_start_row'], loss_info['A_end_row'], loss_info['A_start_col'], loss_info['A_end_col']
    X_00, X_10, X_01, X_11 = loss_info['X_start_row'], loss_info['X_end_row'], loss_info['X_start_col'], loss_info['X_end_col']
    Y_00, Y_10, Y_01, Y_11 = loss_info['Y_start_row'], loss_info['Y_end_row'], loss_info['Y_start_col'], loss_info['Y_end_col']

    loss_name = loss_info['loss']

    if loss_name == 'binary_crossentropy':
        # exp(z) / (1 + exp(z)) is the logistic sigmoid of z, i.e. a probability
        exp_value = tf.math.exp(tf.matmul(X[X_00:X_10, X_01:X_11], Y[Y_00:Y_10, Y_01:Y_11]))
        log_odds = exp_value / (1 + exp_value)
        loss = tf.reduce_sum(tf.keras.losses.binary_crossentropy(A[A_00:A_10, A_01:A_11], log_odds))
    else:
        loss = tf.Variable(0, dtype=tf.float64)

    return loss


def get_gradients(A, X, Y, Z_loss_list, X_loss_list, Y_loss_list):
    """Total loss and the gradients of the total loss w.r.t. X and Y."""
    # Running totals kept in tf.Variable objects and updated with assign()
    Z_loss = tf.Variable(0, dtype=tf.float64)
    X_loss = tf.Variable(0, dtype=tf.float64)
    Y_loss = tf.Variable(0, dtype=tf.float64)

    with tf.GradientTape(persistent=True) as tape:
        tape.watch(X)
        tape.watch(Y)

        # Data loss over every block of A
        for loss_info in Z_loss_list:
            Z_loss.assign(Z_loss + construct_loss_function(A, X, Y, loss_info))

        # Regularization on blocks of X and Y
        for loss_info in X_loss_list:
            X_loss.assign(X_loss + get_regularization_loss(X, loss_info))

        for loss_info in Y_loss_list:
            Y_loss.assign(Y_loss + get_regularization_loss(Y, loss_info))

        loss = X_loss + Y_loss + Z_loss

    return_dictionary = {
        'total_loss': loss,
        'Z_loss': Z_loss,
        'loss_regularization_X': X_loss,
        'loss_regularization_Y': Y_loss,
        'gradients': tape.gradient(loss, {'X': X, 'Y': Y}),
    }

    return return_dictionary
```
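A minimal, self-contained check of the accumulation pattern used in get_gradients. It assumes toy 2x2 float64 matrices and a hypothetical block_loss (sigmoid cross-entropy on fixed slices) standing in for construct_loss_function, and compares summing into a tf.Variable with assign(), as in the question, against summing ordinary tensors:

```python
import tensorflow as tf

# Toy data, chosen only for illustration (assumption, not from the question).
A = tf.constant([[1.0, 0.0], [0.0, 1.0]], dtype=tf.float64)
X = tf.Variable(tf.ones((2, 2), dtype=tf.float64))
Y = tf.Variable(tf.ones((2, 2), dtype=tf.float64))


def block_loss(A, X, Y):
    # Hypothetical stand-in for construct_loss_function: sigmoid cross-entropy
    # between A[0:1, 0:1] and the logits X[0:1, :] @ Y[:, 0:1].
    logits = tf.matmul(X[0:1, :], Y[:, 0:1])
    return tf.reduce_sum(
        tf.nn.sigmoid_cross_entropy_with_logits(labels=A[0:1, 0:1], logits=logits))


def gradients_with_assign(A, X, Y):
    # Pattern from the question: accumulate into a tf.Variable via assign().
    total = tf.Variable(0.0, dtype=tf.float64)
    with tf.GradientTape() as tape:
        total.assign(total + block_loss(A, X, Y))
        loss = total + 0.0
    return tape.gradient(loss, {"X": X, "Y": Y})


def gradients_with_tensors(A, X, Y):
    # Accumulate with ordinary tensor addition so the tape can trace back to X and Y.
    with tf.GradientTape() as tape:
        loss = tf.constant(0.0, dtype=tf.float64)
        loss += block_loss(A, X, Y)
    return tape.gradient(loss, {"X": X, "Y": Y})


print(gradients_with_assign(A, X, Y))
print(gradients_with_tensors(A, X, Y))
```

In this sketch the assign() version returns None for both X and Y: Variable.assign is not a differentiable op, so the tape has no path from the accumulator's later value back to X and Y. The tensor version returns gradients even though the loss is computed on slices of X and Y; entries outside the slices simply get zero gradient. Trainable variables are also watched automatically inside the tape, so the tape.watch calls are not required for X and Y.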

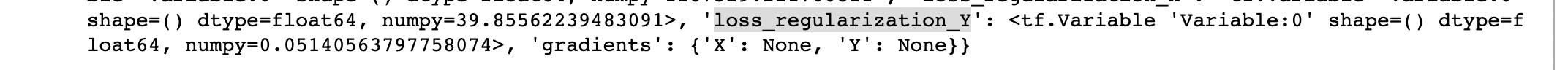

print(get_gradients(A, X, Y, Z_loss_list, X_loss_list, Y_loss_list))产出-

1 answer

Stack Overflow user

Posted on 2022-04-01 12:38:16

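Try using all the values of the X and Y tensors in these lines:

```python
exp_value = tf.math.exp(tf.matmul(X[X_00:X_10, X_01:X_11], Y[Y_00:Y_10, Y_01:Y_11]))
loss_regularization_X = get_L2_penalty(X[X_00:X_10, X_01:X_11], alpha)
```

Instead of slicing X and Y, you can fill the other values with large negative numbers so that they do not affect the value of the loss, and then use the whole X variable.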
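The answer's idea is to stop slicing and let the whole variables enter the loss while keeping entries outside the block from contributing. The sketch below pursues the same goal with a 0/1 mask rather than the large negative padding the answer describes (a swapped-in technique). It assumes A has the same shape as X @ Y and that each block uses the full shared (inner) dimension, so the block of X @ Y equals X[r0:r1, :] @ Y[:, c0:c1]. block_mask, masked_bce_loss and masked_l2_penalty are hypothetical helpers, and tf.nn.sigmoid_cross_entropy_with_logits stands in for the sigmoid followed by binary_crossentropy in the question:

```python
import tensorflow as tf


def block_mask(shape, row_start, row_end, col_start, col_end, dtype=tf.float64):
    """1.0 inside [row_start:row_end, col_start:col_end], 0.0 elsewhere."""
    ones = tf.ones((row_end - row_start, col_end - col_start), dtype=dtype)
    paddings = [[row_start, shape[0] - row_end], [col_start, shape[1] - col_end]]
    return tf.pad(ones, paddings)  # zero-padding around the block of ones


def masked_bce_loss(A, X, Y, mask):
    """Element-wise sigmoid cross-entropy on the full X @ Y, summed over masked entries."""
    logits = tf.matmul(X, Y)  # whole variables, no slicing
    per_entry = tf.nn.sigmoid_cross_entropy_with_logits(labels=A, logits=logits)
    return tf.reduce_sum(mask * per_entry)


def masked_l2_penalty(X, mask, alpha):
    """L2 penalty (alpha * sum of squares) restricted to the masked entries of X."""
    return alpha * tf.reduce_sum(mask * tf.square(X))


# Usage with toy values (illustration only)
A = tf.constant([[1.0, 0.0, 1.0], [0.0, 1.0, 0.0]], dtype=tf.float64)
X = tf.Variable(0.5 * tf.ones((2, 2), dtype=tf.float64))
Y = tf.Variable(tf.ones((2, 3), dtype=tf.float64))
out_mask = block_mask((2, 3), 0, 1, 0, 2)  # rows 0:1, cols 0:2 of X @ Y
x_mask = block_mask((2, 2), 0, 1, 0, 2)    # rows 0:1 of X

with tf.GradientTape() as tape:
    loss = masked_bce_loss(A, X, Y, out_mask) + masked_l2_penalty(X, x_mask, 2.0)
grads = tape.gradient(loss, [X, Y])
```

With bounds taken from a loss_info dict, each mask would be built once per block and the masked terms summed as ordinary tensors inside the tape. Entries outside a mask then receive zero gradient, just as with slicing.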

Page content provided by Stack Overflow. Translation supported by the Tencent Cloud Xiaowei IT-domain engine.

Original link:

https://stackoverflow.com/questions/71696739
