Democratizing AI Compute, Part 3: How did CUDA succeed?

User9732312

Published 2026-03-18 20:48:34

Chris Lattner, founder of Modular and creator of LLVM, Clang, Swift, and MLIR

如果我们希望作为一个生态系统取得进步,就需要理解CUDA是如何变得如此强大的。理论上存在替代方案——AMD的ROCm、Intel的oneAPI、基于SYCL的框架——但实践中,CUDA仍是GPU计算领域无可争议的王者。

这一切是如何发生的?

答案不仅在于技术卓越性——尽管这起到了作用。CUDA是一个通过卓越执行力、深度战略投资、持续投入、生态系统锁定,当然还有一点运气构建起来的开发者平台。

本文剖析了CUDA如此成功的原因,揭示了NVIDIA战略的多层布局——从早期押注通用并行计算,到与PyTorch和TensorFlow等AI框架的紧密耦合。归根结底,CUDA的统治地位不仅是软件的胜利,更是长期平台思维战略的典范。

CUDA的早期发展 构建计算平台的一个关键挑战在于吸引开发者学习并投入其中,而若仅能针对小众硬件,则难以形成发展势头。在《Acquired》播客的一期精彩访谈中,黄仁勋提到,NVIDIA早期的一项核心策略是保持其GPU的跨代兼容性。这使得他们能够利用已广泛普及的游戏GPU用户群(这些显卡最初为运行基于DirectX的PC游戏而售出),同时让开发者得以通过低价桌面电脑学习CUDA,并逐步扩展到性能更强、价格更高的硬件中。

CUDA的早期崛起与AI软件浪潮的把握 如今看来,这一策略似乎显而易见,但在当时却是一个大胆的赌注:NVIDIA没有为不同用例(笔记本电脑、台式机、物联网、数据中心等)开发独立优化的产品线,而是打造了一条统一的GPU产品线。这意味着需要接受某些权衡(例如功耗或成本效率的不足),但换来的回报是构建了一个统一的生态系统——开发者对CUDA的投入可以无缝扩展到从游戏GPU到高性能数据中心加速器的所有硬件。这种策略与苹果维护和推动iPhone产品线发展的方式十分相似。

这种策略带来了双重好处:

降低进入门槛 —— 开发者可以使用已有的GPU学习CUDA,轻松进行实验和适配。 形成网络效应 —— 随着开发者数量的增加,更多软件和库被开发出来,平台价值持续提升。

早期的用户基础使得CUDA从游戏领域扩展到科学计算、金融、AI和高性能计算(HPC)。一旦CUDA在这些领域站稳脚跟,其相对于替代方案的优势便愈发明显:NVIDIA的持续投入确保CUDA始终处于GPU性能的最前沿,而竞争对手却难以构建可匹敌的生态系统。

抓住AI浪潮,乘势而上 CUDA的统治地位因深度学习的爆发而巩固。2012年,点燃现代AI革命的神经网络AlexNet,正是通过两块NVIDIA GeForce GTX 580 GPU完成训练。这一突破不仅证明GPU在深度学习中速度更快,更表明GPU是AI进步的核心工具,并推动CUDA迅速成为深度学习的默认计算后端。

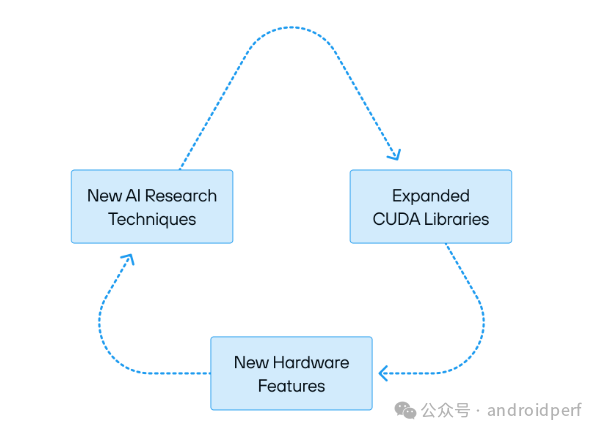

随着深度学习框架的兴起(尤其是谷歌2015年推出的TensorFlow和Meta 2016年推出的PyTorch),NVIDIA抓住机会,大力优化其CUDA库(High-Level CUDA Libraries),以确保这些框架在其硬件上以最高效率运行。NVIDIA没有让AI框架团队自行处理底层CUDA性能调优,而是主动承担重任,持续完善cuDNN和TensorRT。

这一策略不仅显著提升了PyTorch和TensorFlow在NVIDIA GPU上的运行速度,还通过减少与谷歌和Meta的协调成本,使NVIDIA得以实现硬件与软件的深度整合。每一代新硬件推出时,都会伴随新版本的CUDA,以充分释放硬件的新能力。渴望速度和效率的AI社区非常乐意将这一责任交给NVIDIA——这直接导致这些框架与NVIDIA硬件深度绑定。

为何谷歌和Meta放任CUDA一家独大? 实际上,谷歌和Meta并未专注于构建广泛的AI硬件生态系统——他们的核心目标是利用AI推动收入增长、优化产品并解锁新研究。其顶尖工程师优先处理高影响力的内部项目以提升公司核心指标。例如,这些公司选择自主研发TPU芯片,将精力集中在优化自家第一方硬件上。因此,将GPU的主导权交给NVIDIA是合理的选择。

替代硬件厂商面临艰巨挑战——它们试图在缺乏同等硬件整合专注度的情况下,复刻NVIDIA庞大且不断扩张的CUDA库生态系统。竞争对手不仅举步维艰,更陷入无休止的循环:始终在NVIDIA硬件上追逐最新的AI进展。这种情况甚至影响了谷歌和Meta的芯片自研项目,催生了XLA、PyTorch 2等众多方案。尽管曾抱有期待,但如今可见,尚无硬件创新者能真正匹敌CUDA平台的能力。

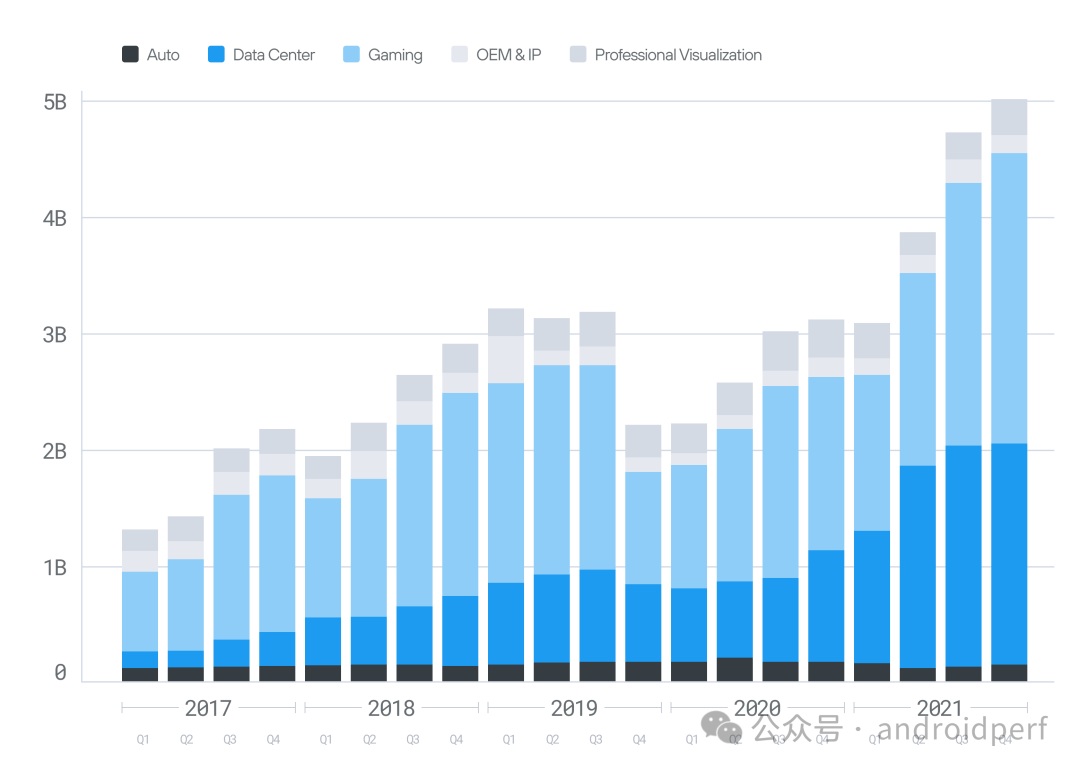

NVIDIA通过每一代硬件的迭代持续扩大优势。突然,在2022年底,ChatGPT横空出世,生成式AI和GPU计算由此进入主流视野。

生成式AI浪潮 几乎一夜之间,AI算力需求激增——它成为价值数十亿美元的产业、消费级应用和公司竞争战略的基石。科技巨头和风投机构向AI研究初创公司及资本性支出(CapEx)建设投入了数十亿美元,而这些资金最终几乎全部流向了唯一能应对算力需求爆发的NVIDIA。

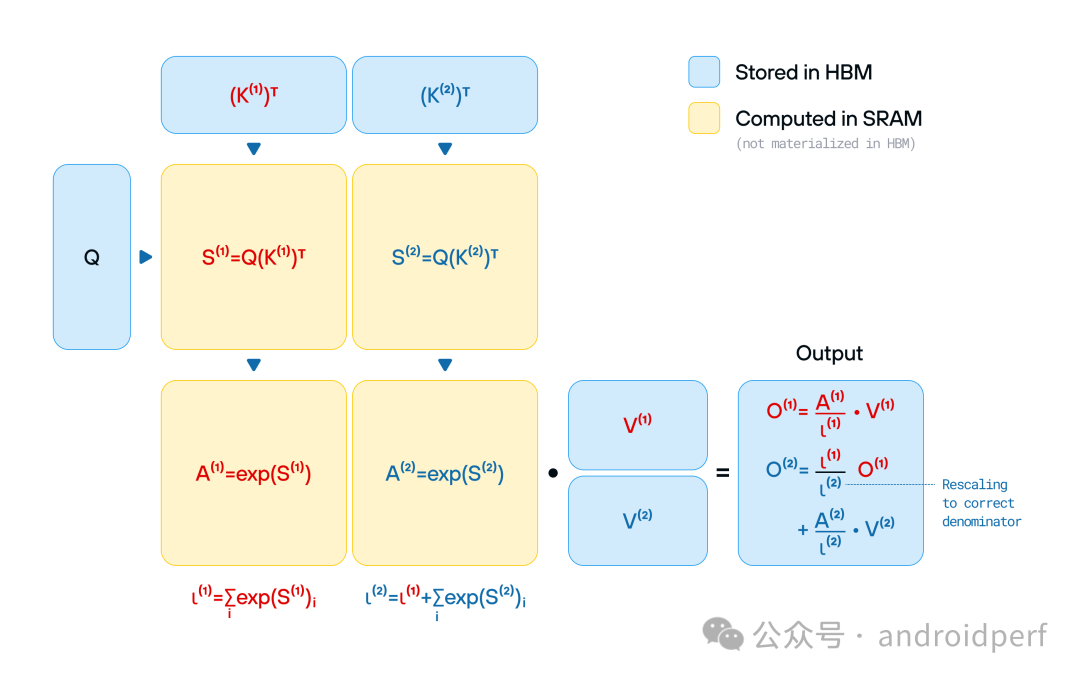

随着AI算力需求的激增,企业面临一个残酷现实:训练和部署生成式AI模型的成本极其高昂。任何效率提升(无论多微小)都能在规模化中转化为巨额成本节省。由于NVIDIA硬件早已在数据中心占据主导地位,AI公司面临一个严峻选择:优化CUDA代码,或被市场淘汰。几乎瞬间,整个行业转向编写针对CUDA的专用代码。结果如何?如今,AI的突破不再仅由模型和算法驱动——它们更依赖于从CUDA优化代码中榨取每一滴效率的能力。

以FlashAttention-3为例:这项前沿优化技术大幅降低了Transformer模型的运行成本,但它专为Hopper架构GPU设计,通过确保最佳性能仅在其最新硬件上可用,进一步强化了NVIDIA的生态锁定效应。后续的研究创新也沿袭了这一路径,例如当DeepSeek直接深入至PTX汇编层,在最低硬件层级上实现完全控制。随着NVIDIA新一代Blackwell架构的临近,行业或将迎来新一轮从底层开始的全面代码重构。

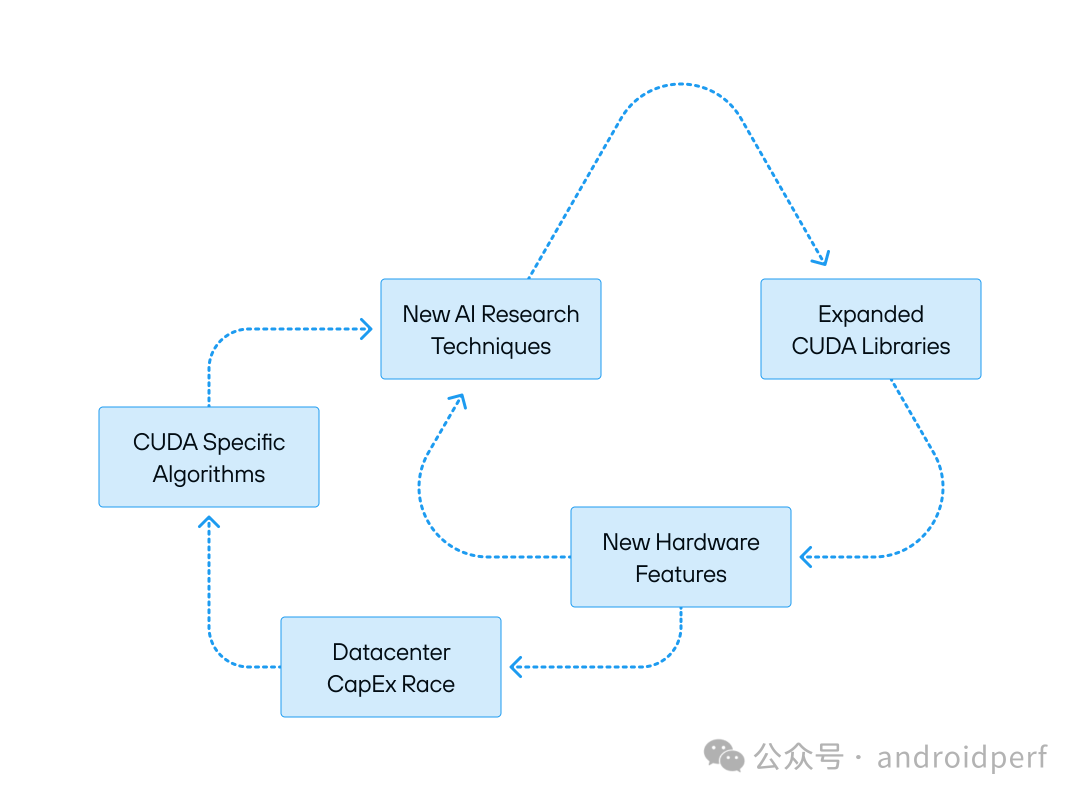

强化CUDA主导地位的循环动力 这一系统正在加速自我强化。生成式AI已成为一股失控的力量,催生出对算力的无尽需求,而NVIDIA掌控着所有筹码。其庞大的硬件装机量确保大多数AI研究基于CUDA开展,这反过来又推动了对NVIDIA平台优化的持续投入。每一代新硬件的推出都带来性能提升与效率革新,但也迫使行业重写软件、进行新一轮优化,并加深对NVIDIA技术栈的依赖。未来似乎已成定局:CUDA对AI计算的掌控只会愈发收紧。

但CUDA并非完美 巩固CUDA主导地位的力量,也正逐渐成为瓶颈——技术挑战、效率损耗以及更广泛创新的阻碍。这种垄断地位真的在服务AI研究社区吗?CUDA究竟造福开发者,还是仅仅利好NVIDIA?

If we as an ecosystem hope to make progress, we need to understand how the CUDA software empire became so dominant. On paper, alternatives exist—AMD’s ROCm, Intel’s oneAPI, SYCL-based frameworks—but in practice, CUDA remains the undisputed king of GPU compute.

How did this happen?

The answer isn’t just about technical excellence—though that plays a role. CUDA is a developer platform built through brilliant execution, deep strategic investment, continuity, ecosystem lock-in, and, of course, a little bit of luck.

This post breaks down why CUDA has been so successful, exploring the layers of NVIDIA’s strategy—from its early bets on generalizing parallel compute to the tight coupling of AI frameworks like PyTorch and TensorFlow. Ultimately, CUDA’s dominance is not just a triumph of software but a masterclass in long-term platform thinking.

The Early Growth of CUDA

A key challenge of building a compute platform is attracting developers to learn and invest in it, and it is hard to gain momentum if you can only target niche hardware. In a great episode of the Acquired podcast, Jensen Huang shares that a key early NVIDIA strategy was to keep its GPUs compatible across generations. This enabled NVIDIA to leverage its install base of already widespread gaming GPUs, which were sold for running DirectX-based PC games. Furthermore, it enabled developers to learn CUDA on low-priced desktop PCs and scale into more powerful hardware that commanded high prices.

This might seem obvious now, but at the time it was a bold bet: instead of creating separate product lines optimized for different use-cases (laptops, desktops, IoT, datacenter, etc.), NVIDIA built a single contiguous GPU product line. This meant accepting trade-offs—such as power or cost inefficiencies—but in return, it created a unified ecosystem where every developer’s investment in CUDA could scale seamlessly from gaming GPUs to high-performance datacenter accelerators. This strategy is quite analogous to how Apple maintains and drives its iPhone product line forward.

The benefits of this approach were twofold:

Lowering Barriers to Entry – Developers could learn CUDA using the GPUs they already had, making it easy to experiment and adopt.

Creating a Network Effect – As more developers started using CUDA, more software and libraries were created, making the platform even more valuable.

This early install base allowed CUDA to grow beyond gaming into scientific computing, finance, AI, and high-performance computing (HPC). Once CUDA gained traction in these fields, its advantages over alternatives became clear: NVIDIA’s continued investment ensured that CUDA was always at the cutting edge of GPU performance, while competitors struggled to build a comparable ecosystem.
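Part of what makes this cross-generation compatibility work in practice is how CUDA packages compiled code. A fat binary can carry native machine code (cubin) for specific GPU architectures. It can also carry PTX, a virtual instruction set that the driver can JIT-compile for GPUs newer than the ones the code was built for.

The sketch below encodes a simplified version of the compatibility rules documented in NVIDIA's CUDA C++ Programming Guide. It ignores architecture-specific targets such as `sm_90a` and other special cases. The `fatbin` list format and the function names are hypothetical, chosen only for illustration.

```python
"""Simplified model of CUDA's documented cubin and PTX compatibility rules.

Assumption: architecture-specific targets (e.g. sm_90a), which are
deliberately not forward compatible, are ignored here.
"""
from dataclasses import dataclass


@dataclass(frozen=True, order=True)
class ComputeCapability:
    major: int
    minor: int


def cubin_runs_on(built_for: ComputeCapability, device: ComputeCapability) -> bool:
    # Native code built for X.y runs only on X.z with z >= y:
    # compatible across later minor revisions, never across major revisions.
    return built_for.major == device.major and device.minor >= built_for.minor


def ptx_runs_on(built_for: ComputeCapability, device: ComputeCapability) -> bool:
    # PTX for X.y can be JIT-compiled by the driver for any device
    # of equal or greater compute capability.
    return device >= built_for


def can_run(fatbin: list[tuple[str, ComputeCapability]], device: ComputeCapability) -> bool:
    """Return True if any image in a (hypothetical) fat-binary listing runs on `device`.

    Each entry is ("cubin", cc) or ("ptx", cc).
    """
    for kind, cc in fatbin:
        if kind == "cubin" and cubin_runs_on(cc, device):
            return True
        if kind == "ptx" and ptx_runs_on(cc, device):
            return True
    return False
```

For instance, `nvcc -gencode arch=compute_80,code=sm_80 -gencode arch=compute_80,code=compute_80` embeds both an `sm_80` cubin and `compute_80` PTX. In this model, that binary runs natively on a compute capability 8.6 GPU and through JIT compilation on a 9.0 GPU. A cubin-only build would not run on 9.0.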

Catching and Riding the Wave of AI Software

CUDA’s dominance was cemented with the explosion of deep learning. In 2012, AlexNet, the neural network that kickstarted the modern AI revolution, was trained using two NVIDIA GeForce GTX 580 GPUs. This breakthrough not only demonstrated that GPUs were faster at deep learning—it proved they were essential for AI progress and led to CUDA’s rapid adoption as the default compute backend for deep learning.

As deep learning frameworks emerged—most notably TensorFlow (Google, 2015) and PyTorch (Meta, 2016)—NVIDIA seized the opportunity and invested heavily in optimizing its High-Level CUDA Libraries to ensure these frameworks ran as efficiently as possible on its hardware. Rather than leaving AI framework teams to handle low-level CUDA performance tuning themselves, NVIDIA took on the burden by aggressively refining cuDNN and TensorRT as we discussed in Part 2.

This move not only made PyTorch and TensorFlow significantly faster on NVIDIA GPUs. It also allowed NVIDIA to tightly integrate its hardware and software (a process known as “hardware/software co-design”), because that work now required less coordination with Google and Meta. Each major new generation of hardware would come out with a new version of CUDA that exploited the new capabilities of the hardware. The AI community, eager for speed and efficiency, was more than willing to delegate this responsibility to NVIDIA, which directly led to these frameworks being tied to NVIDIA hardware.

But why did Google and Meta let this happen? The reality is that Google and Meta weren’t singularly focused on building a broad AI hardware ecosystem. They were focused on using AI to drive revenue, improve their products, and unlock new research. Their top engineers prioritized high-impact internal projects that moved internal company metrics. For example, these companies decided to build their own proprietary accelerators, such as Google’s TPU, pouring their effort into optimizing for their own first-party hardware. It made sense to give NVIDIA the reins for GPUs.

Makers of alternative hardware faced an uphill battle: they were trying to replicate the vast, ever-expanding NVIDIA CUDA library ecosystem without the same level of consolidated hardware focus. Rival hardware vendors weren’t just struggling; they were trapped in an endless cycle, always chasing the next AI advancement on NVIDIA hardware. This affected Google’s and Meta’s in-house chip projects as well, spawning numerous projects, including XLA and PyTorch 2. We will dive deeper into these in subsequent articles, but despite some hopes, we can see today that nothing has enabled hardware innovators to match the capabilities of the CUDA platform.

With each generation of its hardware, NVIDIA widened the gap. Then suddenly, in late 2022, ChatGPT exploded onto the scene, and with it, GenAI and GPU compute went mainstream.

Capitalizing on the Generative AI Surge

Almost overnight, demand for AI compute skyrocketed—it became the foundation for billion-dollar industries, consumer applications, and competitive corporate strategy. Big tech and venture capital firms poured billions into AI research startups and CapEx buildouts—money that ultimately funneled straight to NVIDIA, the only player capable of meeting the exploding demand for compute.

As demand for AI compute surged, companies faced a stark reality: training and deploying GenAI models is incredibly expensive. Every efficiency gain—no matter how small—translated into massive savings at scale. With NVIDIA’s hardware already entrenched in data centers, AI companies faced a serious choice: optimize for CUDA or fall behind. Almost overnight, the industry pivoted to writing CUDA-specific code. The result? AI breakthroughs are no longer driven purely by models and algorithms—they now hinge on the ability to extract every last drop of efficiency from CUDA-optimized code.
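To make “massive savings at scale” concrete, here is a back-of-the-envelope calculation. Every input (fleet size, hourly price, and the size of the efficiency gain) is a hypothetical placeholder, not a figure from this series. The point is only that savings grow linearly with the size of the fleet.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def annual_savings_usd(num_gpus: int, usd_per_gpu_hour: float, fraction_saved: float) -> float:
    """Yearly cost avoided when an optimization cuts the GPU-hours a fully
    utilized fleet needs for the same workload by `fraction_saved` (0.01 = 1%)."""
    annual_cost = num_gpus * usd_per_gpu_hour * HOURS_PER_YEAR
    return annual_cost * fraction_saved


# Hypothetical fleet: 10,000 GPUs at $2.00 per GPU-hour, busy all year.
for pct in (1, 5, 10):
    saved = annual_savings_usd(10_000, 2.00, pct / 100)
    print(f"{pct:>2}% fewer GPU-hours -> ${saved:,.0f} saved per year")
```

With these made-up inputs, trimming just 1% of GPU-hours is worth about $1.75 million per year. That figure grows in direct proportion to the fleet.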

Take FlashAttention-3, for example: this cutting-edge optimization slashed the cost of running transformer models, but it was built exclusively for Hopper GPUs, reinforcing NVIDIA’s lock-in by ensuring the best performance was only available on its latest hardware. Subsequent research innovations followed the same trajectory. For example, DeepSeek went directly to PTX assembly, gaining far more direct control over the hardware than CUDA C++ provides. With the new NVIDIA Blackwell architecture on the horizon, we can look forward to the industry rewriting everything from scratch again.
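FlashAttention's central trick, described in the FlashAttention papers, is to compute exact attention block by block with an “online” softmax. This means the full matrix of attention scores never has to be written out to slow GPU memory.

The sketch below shows only that math, for a single query vector in plain Python. It is an illustration of the idea, not FlashAttention-3. Much of what ties FlashAttention-3 to Hopper is how it maps this loop onto Hopper-specific hardware features, such as the Tensor Memory Accelerator (TMA) and warpgroup matrix-multiply (WGMMA) instructions, which a portable sketch cannot capture. The function names are hypothetical.

```python
import math


def naive_attention(q, keys, values):
    """Reference: softmax(q . K^T / sqrt(d)) V for one query, storing every score."""
    d = len(q)
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dv = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total for j in range(dv)]


def tiled_attention(q, keys, values, block_size=4):
    """Same result, visiting keys/values one block at a time (online softmax).

    Only a running max, a running denominator, and an unnormalized output
    accumulator are kept, so the full score vector is never stored.
    """
    d = len(q)
    dv = len(values[0])
    running_max = -math.inf  # largest score seen so far
    denom = 0.0              # sum of exp(score - running_max)
    acc = [0.0] * dv         # sum of exp(score - running_max) * value
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial sums to the new maximum (exp(-inf) == 0.0 on the first block).
        scale = math.exp(running_max - new_max)
        denom *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            denom += w
            for j in range(dv):
                acc[j] += w * v[j]
        running_max = new_max
    return [a / denom for a in acc]
```

For any block size, `tiled_attention(q, keys, values, block_size=b)` returns the same vector as `naive_attention(q, keys, values)`, up to floating-point rounding. The design point is that the memory held at each step depends on the block size, not on the number of keys.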

The Reinforcing Cycles That Power CUDA’s Grip

This system is accelerating and self-reinforcing. Generative AI has become a runaway force, driving an insatiable demand for compute, and NVIDIA holds all the cards. The biggest install base ensures that most AI research happens in CUDA, which in turn drives investment into optimizing NVIDIA’s platform.

Every new generation of NVIDIA hardware brings new features and new efficiencies, but it also demands new software rewrites, new optimizations, and deeper reliance on NVIDIA’s stack. The future seems inevitable: a world where CUDA’s grip on AI compute only tightens.

Except CUDA isn't perfect.

The same forces that entrench CUDA’s dominance are also becoming a bottleneck—technical challenges, inefficiencies, and barriers to broader innovation. Does this dominance actually serve the AI research community? Is CUDA good for developers, or just good for NVIDIA?

Let’s take a step back: We looked at what CUDA is and why it is so successful, but is it actually good? We’ll explore this in Part 4—stay tuned and let us know if you find this series useful, or have suggestions/requests!

This article is part of the Tencent Cloud self-media syndication program and was shared from a WeChat public account.

Originally published: 2025-05-05. For copyright concerns, contact cloudcommunity@tencent.com to request removal.
