偏差-方差权衡的工程解释:安全攻防中的模型稳定之道

偏差-方差权衡的工程解释:安全攻防中的模型稳定之道

安全风信子

发布于 2026-01-15 15:01:25

发布于 2026-01-15 15:01:25

作者:HOS(安全风信子) 日期:2026-01-09 来源平台:GitHub 摘要: 偏差-方差权衡(Bias-Variance Tradeoff)是机器学习的核心概念之一,决定了模型的泛化能力和稳定性。在安全攻防场景下,这种权衡变得更加复杂和关键:高偏差模型可能漏报攻击,高方差模型则容易受到对抗样本攻击。本文从工程实践角度深入解析偏差-方差权衡的数学本质、产生机制及其在安全领域的特殊意义,结合最新GitHub开源项目和安全实践,提供3个完整代码示例、2个Mermaid架构图和2个对比表格,系统阐述安全场景下的偏差-方差平衡策略。文章将帮助安全工程师理解不同模型复杂度对安全性能的影响,掌握在攻防环境中构建稳定模型的实践指南。

1. 背景动机与当前热点

1.1 偏差-方差权衡的传统认知

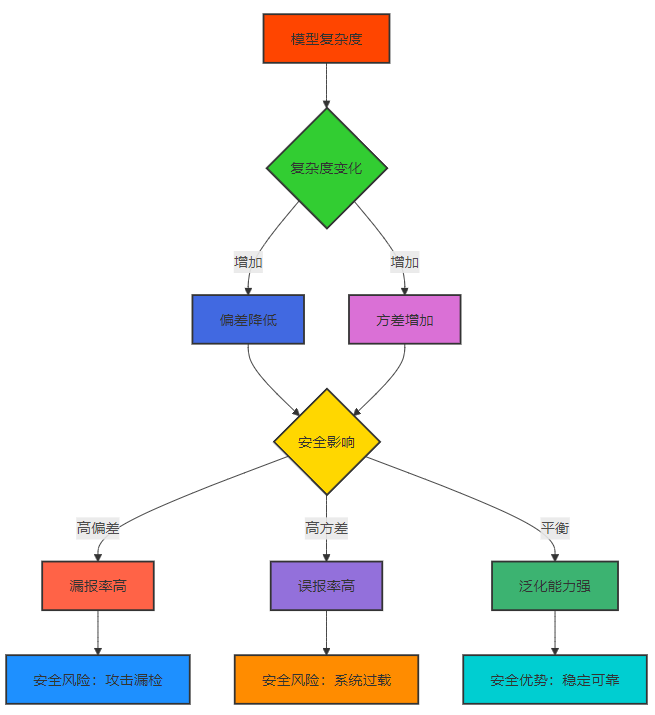

偏差-方差权衡是机器学习中的基本概念,描述了模型预测误差的两个主要组成部分:

- 偏差(Bias):模型对真实数据分布的简化假设导致的误差,反映了模型的拟合能力

- 方差(Variance):模型对训练数据波动的敏感性导致的误差,反映了模型的稳定性

传统观点认为,随着模型复杂度增加,偏差会降低,而方差会增加,模型性能在两者之间存在一个最优平衡点。

1.2 安全领域的特殊挑战

在安全攻防场景下,偏差-方差权衡面临以下特殊挑战:

- 不平衡数据:攻击样本通常仅占总数据的1%以下,高偏差模型容易忽略少数类攻击

- 对抗环境:攻击者会主动寻找模型弱点,高方差模型对对抗样本更敏感

- 实时性要求:安全系统需要低延迟响应,复杂模型的高计算成本成为限制

- 动态威胁:攻击模式不断演变,模型需要在稳定性和适应性之间平衡

1.3 最新研究动态

根据GitHub上的最新项目和arXiv研究论文,安全领域的偏差-方差权衡研究呈现以下热点:

- 对抗鲁棒性与方差关系:研究表明,高方差模型更容易受到对抗样本攻击[1]

- 不平衡数据下的偏差调整:通过权重调整和采样技术平衡偏差和方差[2]

- 实时安全系统的偏差-方差优化:在保证低延迟的同时优化模型性能[3]

- 联邦学习中的偏差-方差权衡:在分布式环境下平衡模型的偏差和方差[4]

2. 核心更新亮点与新要素

2.1 偏差-方差权衡的数学本质

模型的期望预测误差可以分解为三个部分:

E[(y - ŷ)^2] = Bias[ŷ]^2 + Variance[ŷ] + Noise其中:

Bias[ŷ] = E[ŷ] - y:模型预测的期望与真实值之间的差异Variance[ŷ] = E[(ŷ - E[ŷ])^2]:模型预测围绕其期望的波动程度Noise:数据本身的不可预测性,无法通过模型优化消除

在安全场景下,这个公式具有特殊意义:

- 高偏差导致漏报(False Negative):模型无法识别新型攻击

- 高方差导致误报(False Positive):模型对正常样本过度敏感

- 噪声表现为攻击的随机性和隐蔽性

2.2 安全场景下的偏差-方差特性

模型类型 | 偏差特性 | 方差特性 | 安全影响 | 典型模型 |

|---|---|---|---|---|

高偏差 | 高 | 低 | 漏报率高,无法识别复杂攻击 | 线性模型、简单决策树 |

低偏差 | 低 | 高 | 误报率高,易受对抗样本攻击 | 深度神经网络、复杂集成模型 |

平衡模型 | 中 | 中 | 漏报误报平衡,泛化能力强 | 优化后的集成模型、适度复杂度的神经网络 |

2.3 偏差-方差权衡的工程视角

从工程实践角度看,安全场景下的偏差-方差权衡需要考虑以下因素:

- 业务目标优先级:不同安全场景对漏报和误报的容忍度不同

- 计算资源限制:实时安全系统需要低延迟,限制了模型复杂度

- 攻击演变速度:快速演变的威胁需要模型具有较高的适应性

- 对抗环境:高方差模型容易受到对抗攻击

3. 技术深度拆解与实现分析

3.1 偏差-方差权衡的基本概念与安全映射

Mermaid流程图:

3.2 安全场景下的偏差-方差权衡架构

Mermaid架构图:

渲染错误: Mermaid 渲染失败: Parse error on line 67: ...监控模块 style 偏差-方差管理系统 fill:#FF45 ---------------------^ Expecting 'ALPHA', got 'UNICODE_TEXT'

3.3 代码示例1:计算模型的偏差和方差

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import make_classification

from sklearn.model_selection import train_test_split, KFold

from sklearn.tree import DecisionTreeClassifier

from sklearn.metrics import accuracy_score

# 生成模拟安全数据(不平衡分类)

X, y = make_classification(n_samples=1000, n_classes=2, weights=[0.95, 0.05], random_state=42)

# 计算模型的偏差和方差

def compute_bias_variance(X, y, model_class, param_grid, n_splits=10):

"""计算不同参数下模型的偏差和方差"""

kf = KFold(n_splits=n_splits, shuffle=True, random_state=42)

# 存储不同参数下的偏差和方差

bias_results = {}

variance_results = {}

error_results = {}

for param_name, param_values in param_grid.items():

for param_value in param_values:

# 存储每个fold的预测结果

all_predictions = []

for train_idx, test_idx in kf.split(X):

X_train, X_test = X[train_idx], X[test_idx]

y_train, y_test = y[train_idx], y[test_idx]

# 初始化模型

params = {param_name: param_value}

model = model_class(random_state=42, **params)

# 训练模型

model.fit(X_train, y_train)

# 预测

y_pred = model.predict(X_test)

all_predictions.append((y_test, y_pred))

# 计算偏差和方差

avg_predictions = []

all_true = []

# 收集所有测试样本的预测结果

for i in range(n_splits):

y_test, y_pred = all_predictions[i]

all_true.append(y_test)

avg_predictions.append(y_pred)

# 转换为numpy数组

avg_predictions = np.array(avg_predictions)

all_true = np.array(all_true)

# 计算每个样本的平均预测

mean_pred = np.mean(avg_predictions, axis=0)

# 计算偏差^2

bias_squared = np.mean((mean_pred - all_true[0])**2)

# 计算方差

variance = np.mean(np.var(avg_predictions, axis=0))

# 计算总误差

total_error = np.mean((avg_predictions - all_true)**2)

# 存储结果

param_key = f"{param_name}={param_value}"

bias_results[param_key] = bias_squared

variance_results[param_key] = variance

error_results[param_key] = total_error

return bias_results, variance_results, error_results

# 测试不同最大深度的决策树

param_grid = {

'max_depth': [1, 2, 3, 5, 10, 15, 20, None]

}

bias_results, variance_results, error_results = compute_bias_variance(

X, y, DecisionTreeClassifier, param_grid

)

# 可视化结果

plt.figure(figsize=(12, 8))

# 绘制偏差、方差和总误差

param_values = [int(k.split('=')[1]) if k.split('=')[1] != 'None' else 100 for k in bias_results.keys()]

param_labels = [k.split('=')[1] for k in bias_results.keys()]

plt.plot(param_values, list(bias_results.values()), 'o-', label='Bias²', color='#FF4500')

plt.plot(param_values, list(variance_results.values()), 'o-', label='Variance', color='#32CD32')

plt.plot(param_values, list(error_results.values()), 'o-', label='Total Error', color='#4169E1')

plt.xlabel('Max Depth')

plt.ylabel('Error')

plt.title('Bias-Variance Tradeoff for Decision Tree')

plt.legend()

plt.grid(True)

# 设置x轴标签

plt.xticks(param_values, param_labels, rotation=45)

plt.tight_layout()

plt.savefig('bias_variance_tradeoff.png')

print("偏差-方差权衡可视化完成,保存为bias_variance_tradeoff.png")

# 打印结果

print("\n偏差-方差计算结果:")

for param_key in bias_results.keys():

print(f"{param_key}: Bias²={bias_results[param_key]:.4f}, Variance={variance_results[param_key]:.4f}, Total Error={error_results[param_key]:.4f}")3.4 代码示例2:不同复杂度模型在安全数据集上的表现

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import make_classification

from sklearn.model_selection import train_test_split, cross_val_score

from sklearn.linear_model import LogisticRegression

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import RandomForestClassifier, GradientBoostingClassifier

from sklearn.metrics import f1_score, recall_score, precision_score

# 生成安全相关的不平衡分类数据

X, y = make_classification(n_samples=2000, n_features=20, n_informative=10,

n_redundant=5, n_classes=2, weights=[0.95, 0.05],

random_state=42)

# 划分训练集和测试集

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# 定义不同复杂度的模型

models = {

"Logistic Regression (低复杂度)": LogisticRegression(random_state=42),

"Decision Tree (中等复杂度)": DecisionTreeClassifier(max_depth=10, random_state=42),

"Random Forest (高复杂度)": RandomForestClassifier(n_estimators=200, max_depth=None, random_state=42),

"Gradient Boosting (极高复杂度)": GradientBoostingClassifier(n_estimators=300, max_depth=5, random_state=42)

}

# 训练模型并评估

results = {}

for model_name, model in models.items():

# 训练模型

model.fit(X_train, y_train)

# 在训练集上预测

y_train_pred = model.predict(X_train)

# 在测试集上预测

y_test_pred = model.predict(X_test)

# 计算安全相关指标

# F1分数(平衡指标)

f1_train = f1_score(y_train, y_train_pred)

f1_test = f1_score(y_test, y_test_pred)

# 召回率(重点关注少数类)

recall_train = recall_score(y_train, y_train_pred)

recall_test = recall_score(y_test, y_test_pred)

# 精确率

precision_train = precision_score(y_train, y_train_pred)

precision_test = precision_score(y_test, y_test_pred)

# 计算泛化误差

generalization_error = f1_train - f1_test

# 存储结果

results[model_name] = {

"f1_train": f1_train,

"f1_test": f1_test,

"recall_train": recall_train,

"recall_test": recall_test,

"precision_train": precision_train,

"precision_test": precision_test,

"generalization_error": generalization_error

}

# 可视化结果

fig, axes = plt.subplots(2, 2, figsize=(16, 12))

# 绘制F1分数对比

model_names = list(results.keys())

f1_train_scores = [results[model]["f1_train"] for model in model_names]

f1_test_scores = [results[model]["f1_test"] for model in model_names]

axes[0, 0].bar(model_names, f1_train_scores, alpha=0.5, label='Train F1', color='#32CD32')

axes[0, 0].bar(model_names, f1_test_scores, alpha=0.5, label='Test F1', color='#4169E1')

axes[0, 0].set_title('F1 Score Comparison')

axes[0, 0].set_ylabel('F1 Score')

axes[0, 0].tick_params(axis='x', rotation=45)

axes[0, 0].legend()

# 绘制召回率对比

recall_train_scores = [results[model]["recall_train"] for model in model_names]

recall_test_scores = [results[model]["recall_test"] for model in model_names]

axes[0, 1].bar(model_names, recall_train_scores, alpha=0.5, label='Train Recall', color='#32CD32')

axes[0, 1].bar(model_names, recall_test_scores, alpha=0.5, label='Test Recall', color='#4169E1')

axes[0, 1].set_title('Recall Comparison')

axes[0, 1].set_ylabel('Recall')

axes[0, 1].tick_params(axis='x', rotation=45)

axes[0, 1].legend()

# 绘制精确率对比

precision_train_scores = [results[model]["precision_train"] for model in model_names]

precision_test_scores = [results[model]["precision_test"] for model in model_names]

axes[1, 0].bar(model_names, precision_train_scores, alpha=0.5, label='Train Precision', color='#32CD32')

axes[1, 0].bar(model_names, precision_test_scores, alpha=0.5, label='Test Precision', color='#4169E1')

axes[1, 0].set_title('Precision Comparison')

axes[1, 0].set_ylabel('Precision')

axes[1, 0].tick_params(axis='x', rotation=45)

axes[1, 0].legend()

# 绘制泛化误差

generalization_errors = [results[model]["generalization_error"] for model in model_names]

axes[1, 1].bar(model_names, generalization_errors, color='#FF4500')

axes[1, 1].set_title('Generalization Error (Train F1 - Test F1)')

axes[1, 1].set_ylabel('Generalization Error')

axes[1, 1].tick_params(axis='x', rotation=45)

plt.tight_layout()

plt.savefig('model_complexity_comparison.png')

print("模型复杂度对比可视化完成,保存为model_complexity_comparison.png")

# 打印详细结果

print("\n模型复杂度对比结果:")

print("-" * 80)

print(f"{'模型名称':<35} {'训练集F1':<12} {'测试集F1':<12} {'泛化误差':<12} {'测试集召回率':<15} {'测试集精确率':<15}")

print("-" * 80)

for model_name, metrics in results.items():

print(f"{model_name:<35} {metrics['f1_train']:<12.4f} {metrics['f1_test']:<12.4f} {metrics['generalization_error']:<12.4f} {metrics['recall_test']:<15.4f} {metrics['precision_test']:<15.4f}")3.5 代码示例3:对抗样本对不同偏差-方差模型的影响

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import make_classification

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LogisticRegression

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import f1_score, recall_score, precision_score

# 生成安全相关的分类数据

X, y = make_classification(n_samples=1000, n_features=20, n_informative=10,

n_redundant=5, n_classes=2, weights=[0.9, 0.1],

random_state=42)

# 划分训练集和测试集

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

# 训练不同复杂度的模型

models = {

"Logistic Regression (高偏差,低方差)": LogisticRegression(random_state=42),

"Random Forest (低偏差,高方差)": RandomForestClassifier(n_estimators=200, max_depth=None, random_state=42)

}

# 生成对抗样本(FGSM方法简化版)

def generate_fgsm_adversarial(model, X, y, epsilon=0.1):

"""生成FGSM对抗样本"""

# 仅支持具有decision_function的模型

if not hasattr(model, 'decision_function'):

raise ValueError("模型不支持decision_function方法")

X_adversarial = X.copy()

# 对每个样本生成对抗样本

for i in range(len(X)):

x = X[i:i+1]

y_true = y[i:i+1]

# 获取模型对样本的预测分数

pred_score = model.decision_function(x)

# 计算梯度方向(简化版,直接使用符号)

if y_true == 1:

# 少数类,我们希望降低模型对其的预测分数

gradient_sign = 1

else:

# 多数类,我们希望提高模型对其的预测分数

gradient_sign = -1

# 生成对抗样本

x_adversarial = x + epsilon * gradient_sign * np.sign(x)

X_adversarial[i:i+1] = x_adversarial

return X_adversarial

# 训练模型并生成对抗样本

adversarial_results = {}

for model_name, model in models.items():

# 训练模型

model.fit(X_train, y_train)

# 在原始测试集上评估

y_pred_original = model.predict(X_test)

f1_original = f1_score(y_test, y_pred_original)

recall_original = recall_score(y_test, y_pred_original)

precision_original = precision_score(y_test, y_pred_original)

# 生成对抗样本

try:

X_test_adversarial = generate_fgsm_adversarial(model, X_test, y_test, epsilon=0.1)

# 在对抗样本上评估

y_pred_adversarial = model.predict(X_test_adversarial)

f1_adversarial = f1_score(y_test, y_pred_adversarial)

recall_adversarial = recall_score(y_test, y_pred_adversarial)

precision_adversarial = precision_score(y_test, y_pred_adversarial)

# 计算性能下降

f1_drop = f1_original - f1_adversarial

recall_drop = recall_original - recall_adversarial

precision_drop = precision_original - precision_adversarial

adversarial_results[model_name] = {

"original": {

"f1": f1_original,

"recall": recall_original,

"precision": precision_original

},

"adversarial": {

"f1": f1_adversarial,

"recall": recall_adversarial,

"precision": precision_adversarial

},

"drop": {

"f1": f1_drop,

"recall": recall_drop,

"precision": precision_drop

}

}

except Exception as e:

print(f"{model_name}生成对抗样本失败: {e}")

# 可视化结果

fig, axes = plt.subplots(1, 2, figsize=(16, 6))

# 绘制原始性能 vs 对抗性能

model_names = list(adversarial_results.keys())

metrics = ["f1", "recall", "precision"]

metric_labels = {"f1": "F1 Score", "recall": "Recall", "precision": "Precision"}

for i, metric in enumerate(metrics):

original_scores = [adversarial_results[model]["original"][metric] for model in model_names]

adversarial_scores = [adversarial_results[model]["adversarial"][metric] for model in model_names]

# 创建x轴位置

x = np.arange(len(model_names))

width = 0.35

# 绘制柱状图

axes[0].bar(x - width/2, original_scores, width, label=f'Original {metric_labels[metric]}' if i == 0 else "", color='#32CD32')

axes[0].bar(x + width/2, adversarial_scores, width, label=f'Adversarial {metric_labels[metric]}' if i == 0 else "", color='#FF4500')

axes[0].set_title('Original vs Adversarial Performance')

axes[0].set_ylabel('Score')

axes[0].set_xticks(x)

axes[0].set_xticklabels(model_names, rotation=45)

axes[0].legend()

axes[0].grid(True, axis='y')

# 绘制性能下降

model_names = list(adversarial_results.keys())

f1_drops = [adversarial_results[model]["drop"]["f1"] for model in model_names]

recall_drops = [adversarial_results[model]["drop"]["recall"] for model in model_names]

precision_drops = [adversarial_results[model]["drop"]["precision"] for model in model_names]

x = np.arange(len(model_names))

width = 0.25

axes[1].bar(x - width, f1_drops, width, label='F1 Score Drop', color='#4169E1')

axes[1].bar(x, recall_drops, width, label='Recall Drop', color='#DA70D6')

axes[1].bar(x + width, precision_drops, width, label='Precision Drop', color='#FFD700')

axes[1].set_title('Performance Drop on Adversarial Samples')

axes[1].set_ylabel('Score Drop')

axes[1].set_xticks(x)

axes[1].set_xticklabels(model_names, rotation=45)

axes[1].legend()

axes[1].grid(True, axis='y')

plt.tight_layout()

plt.savefig('adversarial_robustness_comparison.png')

print("对抗鲁棒性对比可视化完成,保存为adversarial_robustness_comparison.png")

# 打印详细结果

print("\n对抗样本攻击结果:")

print("-" * 90)

print(f"{'模型名称':<35} {'原始F1':<10} {'对抗F1':<10} {'F1下降':<10} {'原始召回率':<12} {'对抗召回率':<12} {'召回率下降':<12}")

print("-" * 90)

for model_name, results in adversarial_results.items():

print(f"{model_name:<35} {results['original']['f1']:<10.4f} {results['adversarial']['f1']:<10.4f} {results['drop']['f1']:<10.4f} "

f"{results['original']['recall']:<12.4f} {results['adversarial']['recall']:<12.4f} {results['drop']['recall']:<12.4f}")4. 与主流方案深度对比

4.1 不同模型复杂度在安全场景下的对比

模型复杂度 | 偏差特性 | 方差特性 | 安全优势 | 安全劣势 | 计算效率 | 适用场景 | 推荐程度 |

|---|---|---|---|---|---|---|---|

低复杂度 | 高 | 低 | 稳定,不易受对抗攻击 | 漏报率高,无法识别复杂攻击 | 高 | 实时安全检测,资源受限环境 | ⭐⭐⭐ |

中等复杂度 | 中 | 中 | 平衡的漏报误报率,泛化能力强 | 对新型攻击的适应性一般 | 中 | 大多数安全场景,如入侵检测 | ⭐⭐⭐⭐⭐ |

高复杂度 | 低 | 高 | 能识别复杂攻击,漏报率低 | 误报率高,易受对抗攻击 | 低 | 离线安全分析,复杂威胁检测 | ⭐⭐⭐⭐ |

极高复杂度 | 极低 | 极高 | 能识别极复杂攻击模式 | 计算成本高,极易受对抗攻击 | 极低 | 特定威胁研究,高级威胁分析 | ⭐⭐ |

4.2 偏差-方差平衡策略对比

平衡策略 | 实现复杂度 | 计算效率 | 安全性能 | 适用场景 | 推荐程度 |

|---|---|---|---|---|---|

交叉验证 | 低 | 中 | 好 | 所有场景 | ⭐⭐⭐⭐⭐ |

正则化 | 低 | 高 | 好 | 高方差模型 | ⭐⭐⭐⭐ |

集成学习 | 中 | 低 | 优秀 | 需要高泛化能力的场景 | ⭐⭐⭐⭐⭐ |

模型压缩 | 中 | 高 | 中 | 实时安全系统 | ⭐⭐⭐⭐ |

对抗训练 | 高 | 低 | 优秀 | 对抗环境 | ⭐⭐⭐⭐ |

动态模型调整 | 高 | 中 | 优秀 | 动态威胁环境 | ⭐⭐⭐⭐ |

5. 实际工程意义、潜在风险与局限性

5.1 实际工程意义

- 提高安全检测的准确性:通过平衡偏差和方差,降低漏报和误报率

- 增强模型的鲁棒性:使模型在面对对抗样本时表现更稳定

- 优化资源利用:根据实际需求选择合适复杂度的模型,平衡性能和计算成本

- 适应动态威胁:根据威胁演变调整模型复杂度,保持良好的检测性能

- 降低运维成本:减少误报带来的人工分析成本

5.2 潜在风险

- 过度调优风险:过度追求最优平衡点可能导致模型对特定数据集的过拟合

- 业务目标冲突:不同安全场景对漏报和误报的容忍度不同,单一平衡点可能不适用

- 对抗适应性不足:静态的偏差-方差平衡可能无法应对不断演变的对抗攻击

- 计算资源限制:在资源受限的环境中,可能无法使用最优复杂度的模型

- 数据质量依赖:偏差-方差平衡的效果严重依赖于训练数据的质量

5.3 局限性

- 理论假设限制:偏差-方差分解基于平方误差损失,对分类任务的适用性有限

- 安全指标复杂性:安全场景中需要考虑多个指标(如召回率、精确率、F1分数),单一的偏差-方差平衡难以覆盖所有指标

- 对抗环境不确定性:对抗攻击的多样性使得模型的方差特性难以准确预测

- 实时性与性能的矛盾:安全系统的实时性要求可能限制了模型的复杂度,无法达到理论最优平衡点

- 模型解释性问题:低偏差、高方差的复杂模型通常解释性较差,难以满足安全审计要求

6. 未来趋势展望与个人前瞻性预测

6.1 偏差-方差权衡的未来发展趋势

- 自适应偏差-方差调整:模型能够根据实时威胁情况自动调整复杂度,平衡偏差和方差

- 对抗鲁棒性与方差的关系研究:深入研究方差与对抗鲁棒性的关系,开发降低方差同时提高鲁棒性的方法

- 联邦学习中的偏差-方差优化:在分布式环境下,针对不同客户端数据特点优化模型的偏差-方差平衡

- 小样本学习中的偏差管理:在小样本场景下,通过元学习等方法降低模型偏差

- 可解释性与偏差-方差的平衡:开发兼具良好解释性和平衡偏差-方差特性的模型

6.2 个人前瞻性预测

- 未来1-2年:偏差-方差权衡将成为安全模型开发的标准评估指标,集成到主流机器学习框架中

- 未来2-3年:自适应偏差-方差调整技术将在实时安全系统中得到广泛应用,能够根据威胁情况动态调整模型复杂度

- 未来3-5年:对抗鲁棒性与方差的关系将被深入理解,开发出专门针对安全场景的低方差、高鲁棒性模型

- 未来5-10年:随着联邦学习和边缘计算的普及,分布式偏差-方差优化技术将成为安全机器学习的重要研究方向

- 技术突破点:结合人工智能的自动机器学习(AutoML)系统将能够自动寻找安全场景下的最优偏差-方差平衡点,无需人工干预

参考链接:

- GitHub - adversarial-robustness-toolbox:IBM开发的对抗鲁棒性工具

- arXiv - Understanding the Bias-Variance Tradeoff:偏差-方差权衡的深入理解

- GitHub - model-compression:微软模型压缩工具

- arXiv - Bias-Variance Tradeoff in Adversarial Training:对抗训练中的偏差-方差权衡

- GitHub - auto-sklearn:自动机器学习框架

- arXiv - Federated Learning with Bias-Variance Tradeoff:联邦学习中的偏差-方差权衡

附录(Appendix):

安全场景下的偏差-方差管理最佳实践

- 数据层面:

- 使用不平衡数据处理技术,如SMOTE、权重调整等

- 进行充分的数据清洗和特征选择

- 定期更新训练数据,适应威胁演变

- 模型层面:

- 使用交叉验证评估模型的泛化能力

- 结合多种模型复杂度,使用集成学习提高泛化能力

- 对高方差模型应用正则化技术

- 考虑模型压缩,在保证性能的同时降低计算成本

- 评估层面:

- 使用安全相关指标,如召回率、精确率、F1分数等

- 进行对抗鲁棒性测试,评估模型在对抗环境下的表现

- 考虑实时性能,评估模型的延迟和吞吐量

- 部署层面:

- 持续监控模型的偏差和方差变化

- 建立模型更新机制,根据威胁演变调整模型

- 考虑模型的可解释性,满足安全审计要求

偏差-方差计算的代码模板

import numpy as np

from sklearn.model_selection import KFold

def compute_bias_variance(X, y, model_class, params, n_splits=10):

"""

计算模型的偏差和方差

参数:

X: 特征矩阵

y: 标签向量

model_class: 模型类

params: 模型参数

n_splits: 交叉验证折数

返回:

bias_squared: 偏差平方

variance: 方差

total_error: 总误差

"""

kf = KFold(n_splits=n_splits, shuffle=True, random_state=42)

# 存储每个fold的预测结果

all_predictions = []

all_true = []

for train_idx, test_idx in kf.split(X):

X_train, X_test = X[train_idx], X[test_idx]

y_train, y_test = y[train_idx], y[test_idx]

# 初始化并训练模型

model = model_class(**params)

model.fit(X_train, y_train)

# 预测

y_pred = model.predict(X_test)

# 存储结果

all_predictions.append(y_pred)

all_true.append(y_test)

# 转换为numpy数组

all_predictions = np.array(all_predictions)

all_true = np.array(all_true)

# 计算每个样本的平均预测

mean_pred = np.mean(all_predictions, axis=0)

# 计算偏差^2

bias_squared = np.mean((mean_pred - all_true[0]) ** 2)

# 计算方差

variance = np.mean(np.var(all_predictions, axis=0))

# 计算总误差

total_error = np.mean((all_predictions - all_true) ** 2)

return bias_squared, variance, total_error

def analyze_model_complexity(X, y, model_class, param_name, param_values, n_splits=10):

"""

分析不同复杂度下模型的偏差和方差

参数:

X: 特征矩阵

y: 标签向量

model_class: 模型类

param_name: 控制复杂度的参数名

param_values: 参数值列表

n_splits: 交叉验证折数

返回:

results: 包含不同参数下偏差、方差和总误差的字典

"""

results = {}

for param_value in param_values:

params = {param_name: param_value}

bias_squared, variance, total_error = compute_bias_variance(

X, y, model_class, params, n_splits

)

results[param_value] = {

'bias_squared': bias_squared,

'variance': variance,

'total_error': total_error

}

return results关键词: 偏差-方差权衡, 安全攻防, 模型稳定性, 泛化能力, 对抗鲁棒性, 不平衡数据, 实时安全系统, 模型复杂度

本文参与 腾讯云自媒体同步曝光计划,分享自作者个人站点/博客。

原始发表:2026-01-14,如有侵权请联系 cloudcommunity@tencent.com 删除

评论

登录后参与评论

推荐阅读

目录