DeepSearcher in Practice

happywei

Published 2025-05-14 16:55:32

git clone https://github.com/zilliztech/deep-searcher.git

cd /root/deep-searcher

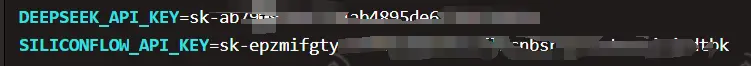

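Once the steps below are complete, run the script from the repository root:

(llm) [root@localhost deep-searcher]# python deepsearch.py

Create a .env file to configure the api_key: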

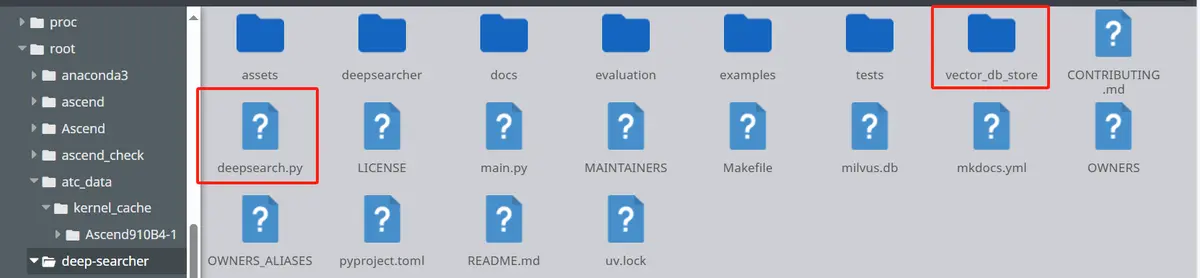
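Because the script loads the .env file with `load_dotenv()`, a quick pre-flight check can catch a missing key before any provider is initialized. The sketch below is illustrative only: the variable name `SILICONFLOW_API_KEY` is an assumption based on the SiliconFlow provider used in this walkthrough, and `missing_keys` is a hypothetical helper, not part of DeepSearcher.

```python
import os

# Assumption: the SiliconFlow provider reads its key from this variable;
# adjust the name to whatever your provider actually expects.
REQUIRED_KEYS = ("SILICONFLOW_API_KEY",)


def missing_keys(env=None, required=REQUIRED_KEYS):
    """Return the names in `required` that are absent or empty in `env`."""
    if env is None:
        env = os.environ  # default to the real process environment
    return [name for name in required if not env.get(name)]
```

Calling `missing_keys()` right after `load_dotenv()` returns an empty list when everything is configured, and the names of the missing variables otherwise.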

Create the vector_db_store folder and the deepsearch.py file.
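The folder can also be created from Python instead of by hand. This is a minimal sketch using only the standard library; `ensure_data_dir` and `sample.txt` are hypothetical names introduced for illustration, and the demonstration runs in a temporary directory rather than in /root/deep-searcher.

```python
import tempfile
from pathlib import Path


def ensure_data_dir(project_root):
    """Create <project_root>/vector_db_store if needed and return its path."""
    data_dir = Path(project_root) / "vector_db_store"
    data_dir.mkdir(parents=True, exist_ok=True)  # no error if it already exists
    return data_dir


# Demonstration in a throwaway directory; in this walkthrough the project
# root would be /root/deep-searcher.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = ensure_data_dir(tmp)
    # sample.txt is a hypothetical placeholder for a document to be indexed.
    (demo_dir / "sample.txt").write_text("placeholder document", encoding="utf-8")
    demo_files = sorted(p.name for p in demo_dir.iterdir())
```

Any documents placed in this folder are what the loading step in the script reads.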

The contents of deepsearch.py are as follows:

import os

from dotenv import load_dotenv

load_dotenv()

from deepsearcher.configuration import Configuration, init_config

from deepsearcher.online_query import query

config = Configuration()

# Customize your config here;

# for more options, see the Configuration Details section of the DeepSearcher README.

config.set_provider_config("llm", "SiliconFlow", {"model": "Qwen/Qwen3-8B"})

# config.set_provider_config("llm", "custom", {

# "api_base": "http://localhost:8000/v1/chat/completions", # OpenAI-compatible endpoint path that vLLM exposes by default

# "api_key": "EMPTY", # a local deployment usually does not need an API key

# "model": "/data01/downloadModel/Qwen", # name of your locally deployed model; match the --served-model-name passed to `vllm serve`

# })

config.set_provider_config("embedding", "SiliconflowEmbedding", {"model": "BAAI/bge-m3"})

config.set_provider_config("vector_db", "Milvus", {"uri": "./milvus.db", "token": ""})

init_config(config=config)

# Load your local data

from deepsearcher.offline_loading import load_from_local_files

load_from_local_files(paths_or_directory="/root/deep-searcher/vector_db_store")

# (Optional) Load from web crawling (`FIRECRAWL_API_KEY` env variable required)

# from deepsearcher.offline_loading import load_from_website

# load_from_website(urls=website_url)

# Query

result = query("总结一下科比的社会评价")  # Your question here; this one asks for a summary of public opinion on Kobe Bryant
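The script stores the return value of `query` in `result` but never prints it. The sketch below shows one defensive way to pull out the answer text. It assumes, without confirmation from this article, that `query` returns either a plain string or a tuple whose first element is the answer; `extract_answer` is a hypothetical helper, not a DeepSearcher function.

```python
def extract_answer(result):
    """Return the answer text from a query() result of uncertain shape."""
    # Assumption: a non-empty tuple result carries the answer as its first element.
    if isinstance(result, tuple) and result:
        return str(result[0])
    # Otherwise treat the whole result as the answer.
    return str(result)
```

Adding `print(extract_answer(result))` after the `query` call would then display the answer regardless of which of the two shapes comes back.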

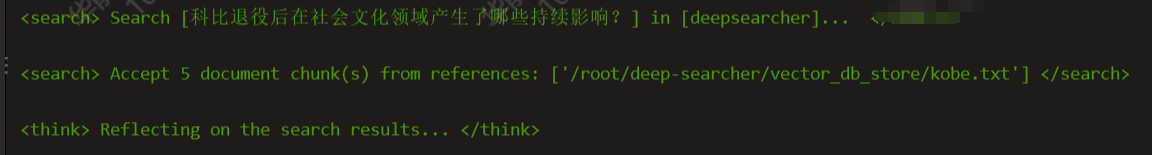

Partial output:

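Original-content notice: this article is published on the Tencent Cloud Developer Community with the author's authorization and may not be reproduced without permission.

In case of infringement, contact cloudcommunity@tencent.com for removal.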
