【大模型部署实战】VLLM+OpenWebUI实现DeepSeek模型部署,文末有福利

【大模型部署实战】VLLM+OpenWebUI实现DeepSeek模型部署,文末有福利

AI浩

发布于 2025-03-17 15:44:41

发布于 2025-03-17 15:44:41

摘要

vLLM(Very Large Language Model Serving)是由加州大学伯克利分校团队开发的高性能、低延迟大语言模型(LLM)推理和服务框架。其核心创新在于PagedAttention技术,通过将注意力键值(KV)缓存分页管理,显著提升显存利用率并降低碎片化问题,使吞吐量比传统框架(如Hugging Face Transformers)提升24倍。该框架支持连续批处理、动态显存分配和多GPU并行推理,能够高效处理8k+长上下文请求,并兼容OpenAI API接口,开发者可快速部署Hugging Face模型。通过集成FP8、AWQ等量化技术,vLLM在保证推理精度的同时大幅降低资源消耗,目前已成为企业级AI部署(如DeepSeek-R1 671B模型分布式集群)的首选方案。

中文文档:https://vllm.hyper.ai/docs/

vLLM 核心特性

- 最先进的服务吞吐量

- 通过 PagedAttention 技术实现内存优化,吞吐量比传统框架(如 Hugging Face Transformers)提升 24 倍2,4,5,9。

- PagedAttention 内存管理

- 将注意力键值(KV)缓存分页存储,减少显存碎片化,支持更大上下文长度(如 8k+)2,4,5,9。

- 连续批处理(Continuous Batching)

- 动态合并请求,最大化 GPU 利用率,支持高并发推理场景7,9。

- CUDA/HIP 图优化

- 预编译 GPU 操作序列,减少重复启动开销,加速模型执行速度7,9。

- 多精度量化支持

- 兼容 GPTQ、AWQ、INT4/8、FP8 等量化技术,降低显存占用并提升推理速度4,7,9。

- 优化的 CUDA 内核

- 集成 FlashAttention 和 FlashInfer,提升注意力计算效率4,9。

- 推测性解码(Speculative Decoding)

- 预生成潜在 token 并验证,减少实时文本生成的延迟4,7。

- 分块预填充(Chunked Prefill)

- 将长序列拆分为块处理,降低内存峰值占用5,7。

vLLM 灵活性与易用性

- 模型生态集成

- 无缝支持 Hugging Face 模型库,支持 LLaMA、GPT、DeepSeek 等主流架构2,9。

- 多样化解码算法

- 支持并行采样、束搜索(Beam Search)等算法,提升生成多样性4,7。

- 分布式推理能力

- 支持张量并行(Tensor Parallelism)和流水线并行(Pipeline Parallelism)3,9。

- 流式输出支持

- 实时返回生成结果,适用于聊天机器人等交互场景5,9。

- OpenAI 兼容 API

- 提供与 OpenAI API 一致的接口,便于迁移现有服务1,7。

- 跨硬件支持

- 适配 NVIDIA/AMD/Intel GPU、TPU 及 AWS Neuron 加速器3,9。

- 前缀缓存优化

- 重用相同前缀请求的预计算键值,减少冗余计算7。

- 多 LoRA 微调支持

- 同时部署基础模型的多个微调版本,提升资源利用率7,9。

VLLM安装

新建虚拟环境,命令如下:

conda create --name vllm python=3.9

然后,激活虚拟环境,命令如下:

conda activate vllm

安装pytorch,命令如下:

conda install pytorch==2.5.1 torchvision==0.20.1 torchaudio==2.5.1 pytorch-cuda=12.1 -c pytorch -c nvidia

其他的应该不用安装。关于pytorch的版本,其实现在不用严格指定,即使本地没有安装cuda,pytorch也会自动安装的。

下载模型

我们去魔搭社区下载模型。官网:https://www.modelscope.cn/my/overview 安装modelscope,执行命令:

pip install modelscope

新建脚本,将下面的复制进去并运行。

#模型下载

from modelscope import snapshot_download

model_dir = snapshot_download('deepseek-ai/DeepSeek-R1-Distill-Qwen-1.5B')

等这个代码执行完成就得到了/DeepSeek-R1-Distill-Qwen-1.5B模型文件。

VLLM部署

有两种部署方法,第一种使用vllm serve,我们使用1.5B的模型举例,执行命令:

vllm serve deepseek/DeepSeek-R1-Distill-Qwen-1.5B --tensor-parallel-size 1 --max-model-len 32768 --enforce-eager --port 11111 --api-key token-abc123

测试代码:

from openai import OpenAI

client = OpenAI(

base_url="http://localhost:11111/v1",

api_key="token-abc123",

)

completion = client.chat.completions.create(

model="deepseek/DeepSeek-R1-Distill-Qwen-1.5B",

messages=[

{"role": "user", "content": "Hello!"}

]

)

print(completion.choices[0].message)

测试结果:

ChatCompletionMessage(content='Alright, the user said "Hello!" and I should respond warmly.\n\nI\'ll greet them and offer my help.\n\nMake sure to keep it friendly and open-ended.\n</think>\n\nHello! How can I assist you today?', refusal=None, role='assistant', audio=None, function_call=None, tool_calls=[], reasoning_content=None)

第二种,使用vllm.entrypoints.openai.api_server,执行命令:

python -m vllm.entrypoints.openai.api_server --model deepseek/DeepSeek-R1-Distill-Qwen-1.5B --served-model-name "openchat"

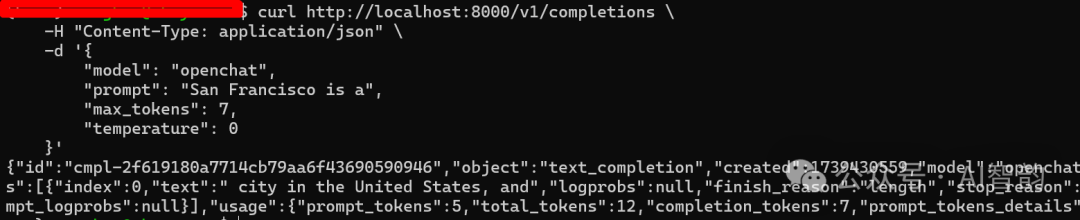

另起一个终端,在命令行里输入:

curl http://localhost:8000/v1/completions \

-H "Content-Type: application/json" \

-d '{

"model": "openchat",

"prompt": "San Francisco is a",

"max_tokens": 7,

"temperature": 0

}'

返回结果:

{"id":"cmpl-50e672d51948481bb573112f2b0562e5","object":"text_completion","created":1739353907,"model":"openchat","choices":[{"index":0,"text":" city in the United States, and","logprobs":null,"finish_reason":"length","stop_reason":null,"prompt_logprobs":null}],"usage":{"prompt_tokens":5,"total_tokens":12,"completion_tokens":7,"prompt_tokens_details":null}}

在这里插入图片描述

有时候,有些显卡被占用了,需要指定显卡部署,执行命令:

export CUDA_VISIBLE_DEVICES=4,5&&vllm serve deepseek-ai/DeepSeek-R1-Distill-Qwen-32B --dtype auto --api-key token-abc123 --tensor-parallel-size 2 --port 11112 --cpu-offload-gb 20还有一种情况,显卡被占用了,还有一些空间,这时候就要通过--gpu-memory-utilization,来控制显存的占用,执行命令如下:

export CUDA_VISIBLE_DEVICES=4,5&&vllm serve deepseek-ai/DeepSeek-R1-Distill-Qwen-32B --dtype auto --api-key token-abc123 --tensor-parallel-size 2 --port 11112 --cpu-offload-gb 20 --gpu-memory-utilization 0.6本文参与 腾讯云自媒体同步曝光计划,分享自微信公众号。

原始发表:2025-03-16,如有侵权请联系 cloudcommunity@tencent.com 删除

评论

登录后参与评论

推荐阅读

目录