Need help finding two separate contours instead of one combined contour in MICR code

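I am running OCR on bank cheques and detecting the MICR code with the pyimagesearch tutorial. The code in this tutorial detects group contours and character contours from a reference image that contains the symbols.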

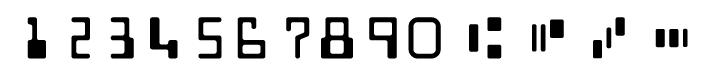

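In the tutorial, when finding the contours of the symbol below, the code uses Python's built-in iterator to loop over the contours (here there are 3 separate contours) and combines them into a single character for recognition.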

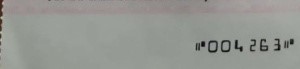

However, in the cheque dataset I am using, the symbols are low resolution.

The actual bottom line of the cheque looks like this:

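Because of this, contour 2 and contour 3 are found as a single contour. The iterator, which expects three parts per symbol, therefore also consumes the character that follows the symbol above (here '0') and builds an incorrect template to match against the reference symbol. You can see this in the `extract_digits_and_symbols` code below.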

I know that noise in the image is a factor, but is it possible to reduce the noise and still find accurate contours to detect the symbol?

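Before the `cv2.findContours` step I tried noise-reduction techniques such as `cv2.fastNlMeansDenoising` and `cv2.GaussianBlur`, but contours 2 and 3 are still detected as a single contour rather than two separate ones. I also tried changing the `cv2.findContours` parameters.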

Below is the working code that iterates over the characters, to show how the built-in Python iterator is used:

```python
import cv2
import numpy as np


def extract_digits_and_symbols(image, charCnts, minW=5, minH=10):
    # grab the internal Python iterator for the list of character
    # contours, then initialize the character ROI and location
    # lists, respectively
    charIter = iter(charCnts)
    rois = []
    locs = []

    # keep looping over the character contours until we reach the end
    # of the list
    while True:
        try:
            # grab the next character contour from the list, compute
            # its bounding box, and initialize the ROI
            c = next(charIter)
            (cX, cY, cW, cH) = cv2.boundingRect(c)
            roi = None

            # check to see if the width and height are sufficiently
            # large, indicating that we have found a digit
            if cW >= minW and cH >= minH:
                # extract the ROI
                roi = image[cY:cY + cH, cX:cX + cW]
                rois.append(roi)
                cv2.imshow('roi', roi)
                cv2.waitKey(0)
                locs.append((cX, cY, cX + cW, cY + cH))

            # otherwise, we are examining one of the special symbols
            else:
                # MICR symbols include three separate parts, so we
                # need to grab the next two parts from our iterator,
                # followed by initializing the bounding box
                # coordinates for the symbol
                parts = [c, next(charIter), next(charIter)]
                (sXA, sYA, sXB, sYB) = (np.inf, np.inf, -np.inf, -np.inf)

                # loop over the parts
                for p in parts:
                    # compute the bounding box for the part, then
                    # update our bookkeeping variables
                    # (disabled debugging code:)
                    # c = next(charIter)
                    # (cX, cY, cW, cH) = cv2.boundingRect(c)
                    # roi = image[cY:cY+cH, cX:cX+cW]
                    # cv2.imshow('symbol', roi)
                    # cv2.waitKey(0)
                    # roi = None
                    (pX, pY, pW, pH) = cv2.boundingRect(p)
                    sXA = min(sXA, pX)
                    sYA = min(sYA, pY)
                    sXB = max(sXB, pX + pW)
                    sYB = max(sYB, pY + pH)

                # extract the ROI
                roi = image[sYA:sYB, sXA:sXB]
                cv2.imshow('symbol', roi)
                cv2.waitKey(0)
                rois.append(roi)
                locs.append((sXA, sYA, sXB, sYB))

        # we have reached the end of the iterator; gracefully break
        # from the loop
        except StopIteration:
            break

    # return a tuple of the ROIs and locations
    return (rois, locs)
```

Edit: contours 2 and 3, not contours 1 and 2.

Answer 1

Stack Overflow user

Posted on 2019-08-22 14:32:25

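Try to find the right threshold instead of using `cv2.THRESH_OTSU`. It seems it should be possible to find a suitable threshold from the examples provided. If you cannot find a threshold that works for all images, you can try a morphological closing on the thresholded result with a structuring element that is 1 pixel wide.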

Edit (steps):

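For the threshold you need to find a suitable value by hand; for your image, a threshold value of 100 seems to work:

```python
import cv2 as cv
import numpy as np

i = cv.imread('image.png')
g = cv.cvtColor(i, cv.COLOR_BGR2GRAY)
_, tt = cv.threshold(g, 100, 255, cv.THRESH_BINARY_INV)
```

As for the closing variant:

```python
_, t = cv.threshold(g, 0, 255, cv.THRESH_BINARY_INV | cv.THRESH_OTSU)
kernel = np.ones((12, 1), np.uint8)
c = cv.morphologyEx(t, cv.MORPH_OPEN, kernel)
```

Note that I used `import cv2 as cv`. I also used opening instead of closing because, as in the example, the colors are inverted during thresholding (`THRESH_BINARY_INV`), so the symbols are white on black.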

https://stackoverflow.com/questions/57604850
