Increasing the FPS of a Raspberry Pi 3 with a 720p USB camera


Asked 2019-04-09 08:24:57

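I wrote some Python code that opens a USB camera and grabs frames from it. I use this code for HTTP streaming. For JPEG encoding I use the libjpeg-turbo library. I'm running a 64-bit OS on: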

product: Raspberry Pi 3 Model B Rev 1.2

serial: 00000000f9307746

width: 64 bits

capabilities: smp cp15_barrier setend swp

I ran some tests at different resolutions:

| Resolution | FPS | Encode time (s) |
|---|---|---|
| 640 x 480 | ~35 | ~0.01 |
| 1280 x 720 | ~17 | ~0.028 |

Here is my code:

import time
import os
import re
from threading import Thread

import numpy as np
import uvc
from turbojpeg import TurboJPEG, TJPF_GRAY, TJSAMP_GRAY

jpeg = TurboJPEG("/opt/libjpeg-turbo/lib64/libturbojpeg.so")
camera = None


class ProcessJPG(Thread):
    # Encodes one frame to JPEG on a separate thread
    def __init__(self, data):
        super(ProcessJPG, self).__init__()
        self.jpeg_data = None
        self.data = data

    def run(self):
        self.jpeg_data = jpeg.encode(self.data)


class GetFrame(Thread):
    # Grabs one frame from the camera on a separate thread
    def __init__(self):
        super(GetFrame, self).__init__()
        self.frame = None

    def run(self):
        self.frame = camera.get_frame()


dev_list = uvc.device_list()
print("devices: ", dev_list)

camera = uvc.Capture(dev_list[1]['uid'])
camera.frame_size = camera.frame_sizes[2]  # set 1280 x 720
camera.frame_rate = camera.frame_rates[0]  # set 30 fps

_fps = -1          # with -1 the FPS is recomputed after every frame
count_to_fps = 0
_real_fps = 0
cfps_time = time.time()

while True:
    if camera:
        t = GetFrame()
        t.start()
        t.join()
        frame = t.frame
        timestamp = frame.timestamp
        img = frame.img

        t_start = time.time()
        t = ProcessJPG(img)
        t.start()
        t.join()
        jpg = t.jpeg_data
        t_end = time.time()
        print(t_end - t_start)  # encode time in seconds

        count_to_fps += 1
        if count_to_fps >= _fps:
            t_to_fps = time.time() - cfps_time
            _real_fps = 1.0 / t_to_fps
            cfps_time = time.time()
            count_to_fps = 0
            print("FPS, ", _real_fps)

The encoding line is: jpeg.encode(self.data)
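In the loop above, each Thread is started and then immediately joined, so grabbing and encoding still run one after the other; the threads add overhead without adding parallelism. Below is a minimal sketch of a two-stage pipeline in which one thread grabs while another encodes, connected by a bounded queue. `run_pipeline`, `fake_grab` and `fake_encode` are hypothetical names, and the stubs use `time.sleep` with assumed costs (30 ms for capture plus decode is a made-up figure; 28 ms is the table's 720p encode time) so the sketch runs without a camera.

```python
import queue
import threading
import time


def run_pipeline(frames, grab, encode, maxsize=2):
    """Run `grab` and `encode` on separate threads joined by a bounded queue.

    `grab` stands in for camera.get_frame() and `encode` for jpeg.encode();
    both are caller-supplied callables, so this sketch has no camera dependency.
    Returns the encoded results in capture order.
    """
    q = queue.Queue(maxsize=maxsize)  # small bound caps memory and latency
    sentinel = object()               # marks the end of the stream
    results = []

    def producer():
        for _ in range(frames):
            q.put(grab())  # blocks while the queue is full
        q.put(sentinel)

    def consumer():
        while True:
            item = q.get()
            if item is sentinel:
                break
            results.append(encode(item))

    workers = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for w in workers:
        w.start()  # both stages run at the same time
    for w in workers:
        w.join()   # join once, after all frames are done
    return results


if __name__ == "__main__":
    frame_ids = iter(range(10**6))

    def fake_grab():
        time.sleep(0.03)  # assumed capture + decode cost (hypothetical)
        return next(frame_ids)

    def fake_encode(frame):
        time.sleep(0.028)  # the ~28 ms 720p encode time from the table
        return frame

    start = time.perf_counter()
    out = run_pipeline(10, fake_grab, fake_encode)
    print("%d frames in %.2f s" % (len(out), time.perf_counter() - start))
```

A pipeline can never run faster than its slowest stage, and real calls only overlap like the sleeping stubs do if the underlying library code releases Python's GIL, which this sketch does not verify for pyuvc or PyTurboJPEG.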

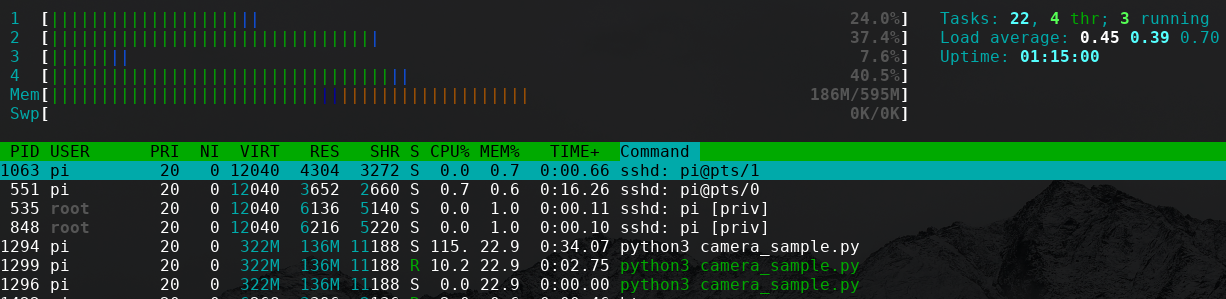

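My question is: is it possible to increase the FPS at 1280 x 720 (e.g. to 30 FPS), or should I use a more powerful device? When I watch htop during the computation, the CPU is not at 100%.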
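As a quick budget check for the 30 FPS target, the sketch below uses only the target rate and the ~0.028 s encode time measured in the table; the function names are illustrative.

```python
def frame_budget_ms(target_fps):
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / target_fps


def headroom_ms(target_fps, stage_times_s):
    """Budget left after running the given serial stages (times in seconds)."""
    return frame_budget_ms(target_fps) - 1000.0 * sum(stage_times_s)


print("%.1f ms per frame at 30 FPS" % frame_budget_ms(30))
print("%.1f ms left after a 28 ms encode" % headroom_ms(30, [0.028]))
```

At 30 FPS each frame gets about 33.3 ms, so after a 28 ms encode only about 5.3 ms remain for capture and any decoding if the steps run serially.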

Edit: camera formats:

[video4linux2,v4l2 @ 0xa705c0] Raw : yuyv422 : YUYV 4:2:2 : 640x480 1280x720 960x544 800x448 640x360 424x240 352x288 320x240 800x600 176x144 160x120 1280x800

[video4linux2,v4l2 @ 0xa705c0] Compressed: mjpeg : Motion-JPEG : 640x480 1280x720 960x544 800x448 640x360 800x600 416x240 352x288 176x144 320x240 160x120
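The format list offers 1280x720 both as raw yuyv422 and as mjpeg. A short calculation shows how much data uncompressed 720p produces: YUYV 4:2:2 stores two pixels in four bytes (2 bytes per pixel). The helper name is illustrative.

```python
def raw_bandwidth(width, height, fps, bytes_per_pixel=2):
    """Bytes per second for uncompressed frames; YUYV 4:2:2 uses 2 bytes/pixel."""
    return width * height * bytes_per_pixel * fps


bps = raw_bandwidth(1280, 720, 30)
print("%d bytes/s = %.1f Mbit/s" % (bps, bps * 8 / 1e6))
```

This gives 55,296,000 bytes/s, about 442 Mbit/s, which is over 90% of USB 2.0's nominal 480 Mbit/s signalling rate (the Raspberry Pi 3's ports are USB 2.0) before any protocol overhead. That helps explain why 720p at 30 FPS is typically delivered as MJPEG.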

Answer 1

Stack Overflow user

Accepted answer

Posted 2019-04-24 18:53:23

It is possible; you don't need more powerful hardware.

* The Capture instance will always grab MJPEG-compressed frames from the camera.

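When your code accesses the .img attribute, jpeg2yuv is called (see here and here) to decode the frame. You then re-encode it with jpeg.encode(). Instead, use frame.jpeg_buffer after capture and don't touch .img at all.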
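Since the question mentions HTTP streaming, one way to send the camera's JPEG bytes without decoding or re-encoding is an MJPEG-over-HTTP stream using `multipart/x-mixed-replace`. This is an assumption: the question does not say which streaming format the server uses. The sketch below only builds one multipart part; `mjpeg_part` is a hypothetical helper. It calls `bytes()` so that any buffer-protocol object (bytes, memoryview, a NumPy uint8 array) is accepted, because the exact type returned by `jpeg_buffer` is not confirmed here (the answer's code prints it).

```python
def mjpeg_part(jpeg_bytes, boundary=b"frame"):
    """Wrap one JPEG in a part of a multipart/x-mixed-replace HTTP body."""
    data = bytes(jpeg_bytes)  # copy any buffer-protocol object into bytes
    headers = b"".join([
        b"--", boundary, b"\r\n",
        b"Content-Type: image/jpeg\r\n",
        b"Content-Length: ", str(len(data)).encode("ascii"), b"\r\n",
        b"\r\n",  # blank line ends the part headers
    ])
    return headers + data + b"\r\n"
```

The server would send `Content-Type: multipart/x-mixed-replace; boundary=frame` once in the response headers, then write `mjpeg_part(frame.jpeg_buffer)` for each captured frame.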

I tried pyuvc with a Logitech C310 on an RPi2 and made a simplified example:

import uvc
import time


def main():
    dev_list = uvc.device_list()
    cap = uvc.Capture(dev_list[0]["uid"])
    cap.frame_mode = (1280, 720, 30)
    tlast = time.time()
    for x in range(100):
        frame = cap.get_frame_robust()
        jpeg = frame.jpeg_buffer
        print("%s (%d bytes)" % (type(jpeg), len(jpeg)))
        #img = frame.img
        tnow = time.time()
        print("%.3f" % (tnow - tlast))
        tlast = tnow
    cap = None


main()

I get 0.033 s per frame, which works out to ~30 FPS at 8% CPU. If I uncomment the #img = frame.img line, it rises to ~0.054 s per frame, or ~18 FPS, at 99% CPU (the decode time limits the capture rate).
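The figures above are per-frame intervals: 1/0.033 is about 30 FPS and 1/0.054 is about 18.5 FPS. Averaging over several frames gives a steadier reading than the question's loop, where `_fps = -1` makes it recompute FPS after every single frame. A minimal sketch, assuming timestamps in seconds are passed in explicitly (for example from `time.time()`); `FpsMeter` is a hypothetical name.

```python
from collections import deque


class FpsMeter:
    """Frames per second averaged over the last `window` frame intervals."""

    def __init__(self, window=30):
        self.stamps = deque(maxlen=window + 1)  # n+1 timestamps give n intervals

    def tick(self, t):
        """Record a frame timestamp in seconds; return current FPS or None."""
        self.stamps.append(t)
        if len(self.stamps) < 2:
            return None  # need at least one interval
        span = self.stamps[-1] - self.stamps[0]
        return (len(self.stamps) - 1) / span if span > 0 else None


meter = FpsMeter(window=10)
for i in range(20):
    fps = meter.tick(i * 0.033)  # simulated 0.033 s frame interval
print("%.1f" % fps)  # 30.3
```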

Original page content provided by Stack Overflow. Translation support provided by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/55588207
