How to exclude test dependencies with sbt-assembly


Asked 2019-04-02 08:18:21

I have an sbt project that I am trying to build into a single jar with the sbt-assembly plugin.

build.sbt:

name := "project-name"

version := "0.1"

scalaVersion := "2.11.12"

val sparkVersion = "2.4.0"

libraryDependencies ++= Seq(
  "org.scalatest" %% "scalatest" % "3.0.5" % "test",
  "org.apache.spark" %% "spark-core" % sparkVersion % "provided",
  "org.apache.spark" %% "spark-sql" % sparkVersion % "provided",
  "org.apache.spark" %% "spark-streaming" % sparkVersion % "provided",
  "com.holdenkarau" %% "spark-testing-base" % "2.3.1_0.10.0" % "test",
  // spark-hive dependencies for DataFrameSuiteBase. https://github.com/holdenk/spark-testing-base/issues/143
  "org.apache.spark" %% "spark-hive" % sparkVersion % "provided",
  "com.amazonaws" % "aws-java-sdk" % "1.11.513" % "provided",
  "com.amazonaws" % "aws-java-sdk-sqs" % "1.11.513" % "provided",
  "com.amazonaws" % "aws-java-sdk-s3" % "1.11.513" % "provided",
  //"org.apache.hadoop" % "hadoop-aws" % "3.1.1"
  "org.json" % "json" % "20180813"
)

assemblyOption in assembly := (assemblyOption in assembly).value.copy(includeScala = false)

assemblyMergeStrategy in assembly := {
  case PathList("META-INF", xs @ _*) => MergeStrategy.discard
  case x => MergeStrategy.first
}

test in assembly := {}

// https://github.com/holdenk/spark-testing-base
fork in Test := true

javaOptions ++= Seq("-Xms512M", "-Xmx2048M", "-XX:MaxPermSize=2048M", "-XX:+CMSClassUnloadingEnabled")

parallelExecution in Test := false

When I build the project with sbt assembly, the resulting jar contains the /org/junit/... and /org/opentest4j/... files.
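To see exactly which of these entries end up in the assembled jar (and to re-check after each build change), the jar can be scanned like any zip archive. The following is a small standalone Scala sketch built on java.util.zip.ZipFile; the object name JarTestClassCheck and the default jar path (sbt-assembly's usual name-assembly-version.jar under target/scala-2.11/) are assumptions for illustration, not part of the original post.

```scala
import java.util.zip.ZipFile

// Hypothetical helper: lists jar entries that live under test-only package prefixes.
object JarTestClassCheck {
  // Prefixes of the test classes reported in the question.
  val TestPrefixes: Seq[String] = Seq("org/junit/", "org/opentest4j/")

  /** Names of all entries in the jar at `jarPath` that start with one of `prefixes`, in archive order. */
  def findEntries(jarPath: String, prefixes: Seq[String] = TestPrefixes): List[String] = {
    val zip = new ZipFile(jarPath)
    try {
      val entries = zip.entries()
      val found = List.newBuilder[String]
      while (entries.hasMoreElements) {
        val name = entries.nextElement().getName
        if (prefixes.exists(p => name.startsWith(p))) found += name
      }
      found.result()
    } finally {
      zip.close() // always release the file handle
    }
  }

  def main(args: Array[String]): Unit = {
    // Assumed default sbt-assembly output path; pass the real jar path as the first argument.
    val jar = args.headOption.getOrElse("target/scala-2.11/project-name-assembly-0.1.jar")
    val hits = findEntries(jar)
    if (hits.isEmpty) println(s"No org/junit or org/opentest4j entries in $jar")
    else hits.foreach(println)
  }
}
```

Run it with the assembly jar as its only argument: it prints every matching entry, or a single confirmation line when none are found.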

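Is there a way to keep these test-related files out of the final jar?

I tried replacing this line:

"org.scalatest" %% "scalatest" % "3.0.5" % "test"

with this one:

"org.scalatest" %% "scalatest" % "3.0.5" % "provided"

I also wonder how these files end up in the jar at all, since junit is not referenced in build.sbt (although the project does contain junit tests).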
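One way to find out which dependency brings junit in transitively (not something used in the original post) is sbt's dependency tree. A minimal sketch, assuming sbt 1.4 or later, where the tree tasks ship with sbt itself and are enabled with a single line:

```scala
// project/plugins.sbt
// Assumes sbt 1.4+; older sbt versions need the separate sbt-dependency-graph plugin instead.
addDependencyTreePlugin
```

After reloading, sbt dependencyTree prints the Compile dependency tree and sbt "Test / dependencyTree" prints the Test one; searching either output for junit shows which direct dependency pulls it in.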

Update:

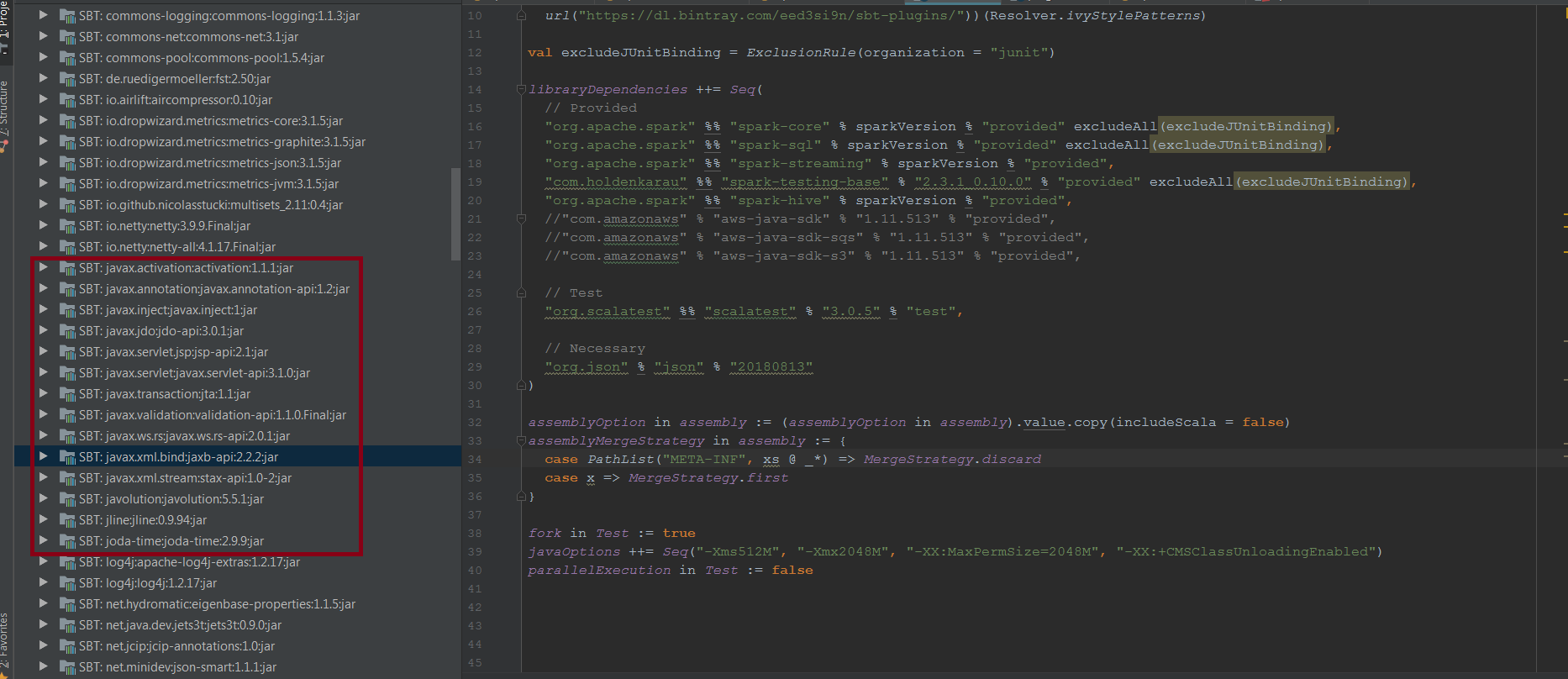

name := "project-name"

version := "0.1"

scalaVersion := "2.11.12"

val sparkVersion = "2.4.0"

val excludeJUnitBinding = ExclusionRule(organization = "junit")

libraryDependencies ++= Seq(
  // Provided
  "org.apache.spark" %% "spark-core" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-sql" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-streaming" % sparkVersion % "provided",
  "com.holdenkarau" %% "spark-testing-base" % "2.3.1_0.10.0" % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-hive" % sparkVersion % "provided",
  "com.amazonaws" % "aws-java-sdk" % "1.11.513" % "provided",
  "com.amazonaws" % "aws-java-sdk-sqs" % "1.11.513" % "provided",
  "com.amazonaws" % "aws-java-sdk-s3" % "1.11.513" % "provided",
  // Test
  "org.scalatest" %% "scalatest" % "3.0.5" % "test",
  // Necessary
  "org.json" % "json" % "20180813"
)

excludeDependencies += excludeJUnitBinding

// https://stackoverflow.com/questions/25144484/sbt-assembly-deduplication-found-error
assemblyOption in assembly := (assemblyOption in assembly).value.copy(includeScala = false)

assemblyMergeStrategy in assembly := {
  case PathList("META-INF", xs @ _*) => MergeStrategy.discard
  case x => MergeStrategy.first
}

// https://github.com/holdenk/spark-testing-base
fork in Test := true

javaOptions ++= Seq("-Xms512M", "-Xmx2048M", "-XX:MaxPermSize=2048M", "-XX:+CMSClassUnloadingEnabled")

parallelExecution in Test := false

1 Answer

Stack Overflow user

Accepted answer

Answered 2019-04-02 09:37:34

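To exclude certain transitive dependencies of a dependency, use the excludeAll or exclude methods.

The exclude method should be used when a pom will be published for the project. It requires the organization and module name to exclude.

For example:

libraryDependencies +=
  "log4j" % "log4j" % "1.2.15" exclude("javax.jms", "jms")

The excludeAll method is more flexible, but because it cannot be represented in a pom.xml, it should only be used when a pom does not need to be generated.

For example: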

libraryDependencies +=
  "log4j" % "log4j" % "1.2.15" excludeAll(
    ExclusionRule(organization = "com.sun.jdmk"),
    ExclusionRule(organization = "com.sun.jmx"),
    ExclusionRule(organization = "javax.jms")
  )

In some cases a transitive dependency should be excluded from all dependencies. This can be done by adding ExclusionRules to excludeDependencies (sbt 0.13.8 and later).

excludeDependencies ++= Seq(
  ExclusionRule("commons-logging", "commons-logging")
)

The JUnit jar files are downloaded as part of the following dependencies:

"org.apache.spark" %% "spark-core" % sparkVersion % "provided" // (junit)
"org.apache.spark" %% "spark-sql" % sparkVersion % "provided" // (junit)
"com.holdenkarau" %% "spark-testing-base" % "2.3.1_0.10.0" % "test" // (org.junit)

To exclude the junit files, update your dependencies as follows:

val excludeJUnitBinding = ExclusionRule(organization = "junit")

libraryDependencies ++= Seq(
  "org.scalatest" %% "scalatest" % "3.0.5" % "test",
  "org.apache.spark" %% "spark-core" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-sql" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-streaming" % sparkVersion % "provided",
  "com.holdenkarau" %% "spark-testing-base" % "2.3.1_0.10.0" % "test" excludeAll(excludeJUnitBinding)
)

Update: please update your build.sbt as follows:

resolvers += Resolver.url("bintray-sbt-plugins",
  url("https://dl.bintray.com/eed3si9n/sbt-plugins/"))(Resolver.ivyStylePatterns)

val sparkVersion = "2.4.0" // as in the question's build.sbt

val excludeJUnitBinding = ExclusionRule(organization = "junit")

libraryDependencies ++= Seq(
  // Provided
  "org.apache.spark" %% "spark-core" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-sql" % sparkVersion % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-streaming" % sparkVersion % "provided",
  "com.holdenkarau" %% "spark-testing-base" % "2.3.1_0.10.0" % "provided" excludeAll(excludeJUnitBinding),
  "org.apache.spark" %% "spark-hive" % sparkVersion % "provided",
  //"com.amazonaws" % "aws-java-sdk" % "1.11.513" % "provided",
  //"com.amazonaws" % "aws-java-sdk-sqs" % "1.11.513" % "provided",
  //"com.amazonaws" % "aws-java-sdk-s3" % "1.11.513" % "provided",
  // Test
  "org.scalatest" %% "scalatest" % "3.0.5" % "test",
  // Necessary
  "org.json" % "json" % "20180813"
)

assemblyOption in assembly := (assemblyOption in assembly).value.copy(includeScala = false)

assemblyMergeStrategy in assembly := {
  case PathList("META-INF", xs @ _*) => MergeStrategy.discard
  case x => MergeStrategy.first
}

fork in Test := true

javaOptions ++= Seq("-Xms512M", "-Xmx2048M", "-XX:MaxPermSize=2048M", "-XX:+CMSClassUnloadingEnabled")

parallelExecution in Test := false

plugin.sbt (in the project/ directory):

addSbtPlugin("com.eed3si9n" % "sbt-assembly" % "0.13.0")

I tried this, and it did not download the junit jar files.
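The question also mentions org/opentest4j classes, which belong to the JUnit 5 family rather than to the junit:junit (JUnit 4) artifact that ExclusionRule(organization = "junit") targets. If those classes are still in the jar after the change above, one option is to widen the project-wide exclusions. This is a sketch: the organizations below are the usual Maven group IDs for JUnit 5 and opentest4j, assumed rather than confirmed from this build's dependency tree.

```scala
// build.sbt: project-wide exclusions via excludeDependencies (sbt 0.13.8+).
// Assumption: the extra classes come from JUnit 5 / opentest4j artifacts;
// check the dependency tree to confirm which organizations are actually present.
excludeDependencies ++= Seq(
  ExclusionRule(organization = "junit"),              // JUnit 4 (junit:junit)
  ExclusionRule(organization = "org.junit.jupiter"),  // JUnit 5 Jupiter
  ExclusionRule(organization = "org.junit.platform"), // JUnit 5 Platform
  ExclusionRule(organization = "org.opentest4j")      // org/opentest4j classes
)
```

Because excludeDependencies applies to every configuration, this also removes these libraries from the Test classpath; if the project's own JUnit tests need them, a per-dependency excludeAll on the offending artifacts is the narrower choice.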

Page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/55469980
