How do I plot a learning curve from a skopt/BayesSearchCV search?

Asked 2019-03-06 01:22:33

I am having trouble plotting a learning curve from a scikit-optimize search. Here is what I have tried:

    from sklearn.ensemble import RandomForestRegressor
    from skopt.space import Real, Integer, Categorical
    from skopt.utils import use_named_args
    from skopt import BayesSearchCV
    from skopt.plots import plot_convergence

    rf = RandomForestRegressor(random_state=7, n_jobs=4)

    def RunSKOpt(X_train, y_train):
        hyper_parameters = {"n_estimators": (5, 500),
                            "max_depth": Categorical([3, None]),
                            "min_samples_split": (2, 10),
                            "min_samples_leaf": (1, 10)
                            }
        search = BayesSearchCV(rf,
                               hyper_parameters,
                               n_iter=40,
                               n_jobs=4,
                               cv=10,
                               verbose=1,
                               return_train_score=False
                               )
        return search

    search = RunSKOpt(X_train, y_train)
    search.fit(X_train, y_train)
    plot_convergence(search)

The plot is empty. Please tell me what I am doing wrong.

Charles

1 Answer

Stack Overflow user

Answered 2019-05-29 18:18:22

Taken directly from this GitHub issue thread: https://github.com/scikit-optimize/scikit-optimize/issues/751

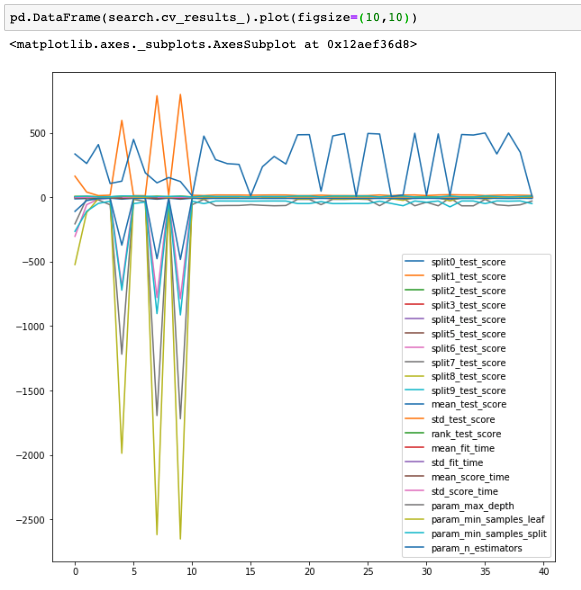

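BayesSearchCV is not intended to be used for plotting convergence plots. You can, however, take the cv_results_ attribute of *SearchCV, convert it to pandas (this should just be a matter of creating a DataFrame out of the cv_results_ attribute), and then visualize the estimator's performance across the different iterations. The attribute is similar to the one on GridSearchCV.

Here is an example: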
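The example that followed in the original answer is not preserved here. As a stand-in, the following is a minimal sketch of the approach described above, not the answerer's own code. It assumes that the rows of cv_results_ appear in the order in which the parameter settings were evaluated and that a higher mean_test_score is better. The names convergence_frame, plot_search_progress and example_cv_results are hypothetical, and the scores in example_cv_results are made-up numbers used only so the sketch runs without a fitted search.

```python
import pandas as pd


def convergence_frame(cv_results):
    """Turn a *SearchCV ``cv_results_`` dict into a per-iteration score table."""
    # cv_results_ is a dict of equal-length arrays, one entry per sampled
    # parameter setting. ASSUMPTION: entries are in evaluation order.
    df = pd.DataFrame(cv_results)
    out = pd.DataFrame({
        "iteration": list(range(1, len(df) + 1)),
        "mean_test_score": df["mean_test_score"].astype(float),
    })
    # Running maximum: the best cross-validated score found so far.
    # ASSUMPTION: the scorer follows the "higher is better" convention.
    out["best_so_far"] = out["mean_test_score"].cummax()
    return out


def plot_search_progress(cv_results, ax=None):
    """Plot per-iteration and best-so-far scores with matplotlib."""
    import matplotlib.pyplot as plt  # imported lazily; only needed for plotting

    frame = convergence_frame(cv_results)
    if ax is None:
        _, ax = plt.subplots()
    ax.plot(frame["iteration"], frame["mean_test_score"], "o-",
            label="mean CV score")
    ax.plot(frame["iteration"], frame["best_so_far"], "--",
            label="best so far")
    ax.set_xlabel("iteration")
    ax.set_ylabel("mean_test_score")
    ax.legend()
    return ax


# Hypothetical stand-in for ``search.cv_results_`` (made-up scores).
example_cv_results = {
    "mean_test_score": [0.61, 0.58, 0.70, 0.66, 0.73],
    "std_test_score": [0.05, 0.06, 0.04, 0.05, 0.03],
}
example_frame = convergence_frame(example_cv_results)
```

With the fitted search from the question, calling plot_search_progress(search.cv_results_) followed by matplotlib's plt.show() should draw both curves. The best_so_far line plays roughly the role of a convergence plot, although it tracks the cross-validated score rather than the objective value that plot_convergence reads from an optimizer result.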

Original page content provided by Stack Overflow. Translation support provided by the Tencent Cloud Xiaowei IT-domain engine.

Original link:

https://stackoverflow.com/questions/55014090
