What sequence of steps does crawler4j follow to fetch data?


Asked 2018-11-17 13:31:21

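I would like to learn:

- How does crawler4j work?

- Does it fetch a web page, then download its content and extract it?

- What about the .db and .cvs files and their structure?

In general, what sequence does it follow?

Please, I would like a descriptive answer.

Thanks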

1 Answer

Stack Overflow user

Accepted answer

Posted 2018-12-07 13:27:55

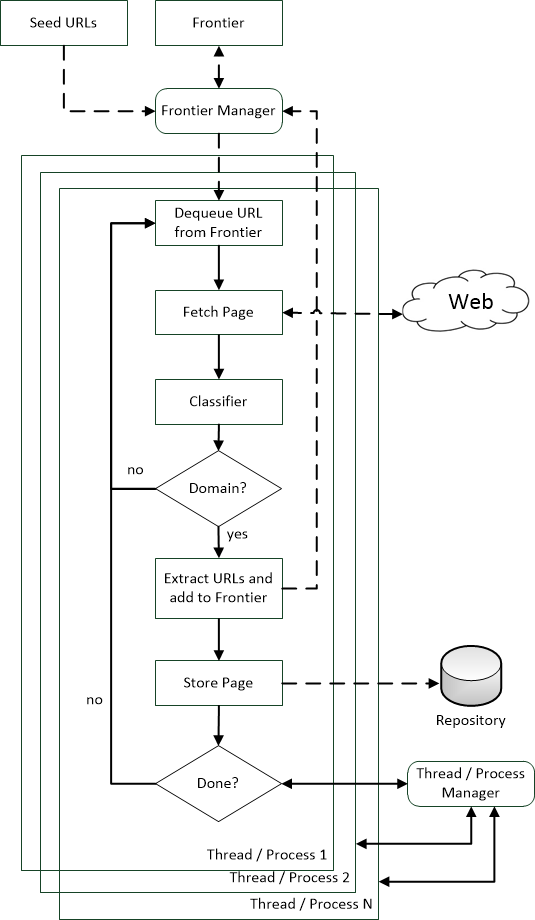

General Crawler Process

The process for a typical multi-threaded crawler is as follows:

- We have a queue data structure called the `frontier`. Newly discovered URLs (or starting points, so-called seeds) are added to this data structure. In addition, each URL is assigned a unique ID so that the crawler can determine whether a given URL was visited before.
- Crawler threads then take URLs from the `frontier` and schedule them for later processing.
- The actual processing starts:
  - The `robots.txt` for the given URL is determined and parsed to honour exclusion criteria and be a polite web crawler (configurable).
  - Next, the thread checks for politeness, i.e. the time to wait before visiting the same host of a URL again.
  - The actual URL is visited by the crawler and the content is downloaded (this can be literally anything).
  - If we have HTML content, this content is parsed and potential new URLs are extracted and added to the frontier (in `crawler4j` this can be controlled via `shouldVisit(...)`).
- The whole process is repeated until no new URLs are added to the `frontier`.
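The loop described above can be sketched in plain Java. This is a minimal, single-threaded illustration and not crawler4j's actual implementation: the class `FrontierSketch`, the method `crawl`, and the in-memory `web` map (which stands in for downloading and parsing pages) are all hypothetical names chosen for the example. The `robots.txt` and politeness checks are only marked by a comment, and the `shouldVisit` predicate merely mimics the role that `shouldVisit(...)` plays in crawler4j.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Queue;
import java.util.function.Predicate;

public class FrontierSketch {

    /** Crawls an in-memory link graph and returns the URLs in visit order. */
    public static List<String> crawl(Map<String, List<String>> web,
                                     List<String> seeds,
                                     Predicate<String> shouldVisit) {
        Queue<String> frontier = new ArrayDeque<>();    // URLs waiting to be processed
        Map<String, Integer> docIds = new HashMap<>();  // URL -> unique ID, also the "seen" set
        List<String> visited = new ArrayList<>();

        // Seeds are the starting points of the crawl.
        for (String seed : seeds) {
            schedule(seed, frontier, docIds);
        }

        // Repeat until no URLs are left in the frontier.
        while (!frontier.isEmpty()) {
            String url = frontier.poll();

            // A real crawler would consult robots.txt and wait for the
            // politeness delay of the URL's host at this point.

            // Looking up the map stands in for download + HTML parsing.
            List<String> outgoingLinks = web.get(url);
            if (outgoingLinks == null) {
                continue; // nothing could be fetched for this URL
            }
            visited.add(url);

            // Newly discovered URLs go back into the frontier if the filter accepts them.
            for (String link : outgoingLinks) {
                if (shouldVisit.test(link)) {
                    schedule(link, frontier, docIds);
                }
            }
        }
        return visited;
    }

    /** Adds a URL to the frontier only if it has not been assigned an ID yet. */
    private static void schedule(String url, Queue<String> frontier,
                                 Map<String, Integer> docIds) {
        if (docIds.containsKey(url)) {
            return; // already seen, do not visit twice
        }
        docIds.put(url, docIds.size() + 1);
        frontier.add(url);
    }

    public static void main(String[] args) {
        Map<String, List<String>> web = new HashMap<>();
        web.put("http://a.example/", List.of("http://a.example/1", "http://b.example/"));
        web.put("http://a.example/1", List.of("http://a.example/", "http://a.example/2"));
        web.put("http://a.example/2", List.of());
        web.put("http://b.example/", List.of("http://a.example/2"));

        // Stay on a.example only, similar in spirit to a shouldVisit(...) filter.
        List<String> order = crawl(web, List.of("http://a.example/"),
                url -> url.startsWith("http://a.example/"));
        System.out.println(order);
    }
}
```

In this sketch the ID map doubles as the "already seen" check, so a page that is linked from several places is still scheduled only once. A multi-threaded crawler would additionally need a thread-safe frontier and per-host timing for the politeness delay.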

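General (Focused) Crawler Architecture

Apart from the implementation details of crawler4j, a more or less general (focused) crawler architecture (on a single server/PC) looks like the one shown in the image at the top of this answer.

Disclaimer: The image is my own work. Please respect this by referencing this post.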


Original link:

https://stackoverflow.com/questions/53351712
