tm bigrams workaround still producing unigrams

Asked 2018-08-10 16:00:17

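I am trying to use tm's DocumentTermMatrix function to produce a matrix with bigrams instead of unigrams. I have tried to use the examples outlined here and here in my function (three attempts follow):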

# Attempt 1: textcnt() comes from the tau package
make_dtm = function(main_df, stem=F){
  tokenize_ngrams = function(x, n=2) return(rownames(as.data.frame(unclass(textcnt(x,method="string",n=n)))))
  decisions = Corpus(VectorSource(main_df$CaseTranscriptText))
  decisions.dtm = DocumentTermMatrix(decisions, control = list(tokenize=tokenize_ngrams,
                                                               stopwords=T,
                                                               tolower=T,
                                                               removeNumbers=T,
                                                               removePunctuation=T,
                                                               stemming = stem))
  return(decisions.dtm)
}

# Attempt 2: NGramTokenizer() and Weka_control() come from the RWeka package
make_dtm = function(main_df, stem=F){
  BigramTokenizer = function(x) NGramTokenizer(x, Weka_control(min = 2, max = 2))
  decisions = Corpus(VectorSource(main_df$CaseTranscriptText))
  decisions.dtm = DocumentTermMatrix(decisions, control = list(tokenize=BigramTokenizer,
                                                               stopwords=T,
                                                               tolower=T,
                                                               removeNumbers=T,
                                                               removePunctuation=T,
                                                               stemming = stem))
  return(decisions.dtm)
}

make_dtm = function(main_df, stem=F){

BigramTokenizer = function(x) unlist(lapply(ngrams(words(x), 2), paste, collapse = " "), use.names = FALSE)

decisions = Corpus(VectorSource(main_df$CaseTranscriptText))

decisions.dtm = DocumentTermMatrix(decisions, control = list(tokenize=BigramTokenizer,

stopwords=T,

tolower=T,

removeNumbers=T,

removePunctuation=T,

stemming = stem))

return(decisions.dtm)

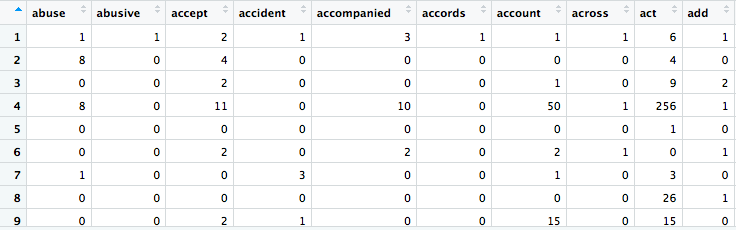

}然而,不幸的是,这三个函数版本中的每一个都产生了完全相同的输出:带有unigram的DTM,而不是bigram(为了简单起见包含的图像):

For your convenience, here is a subset of the data I am working with:

x = data.frame("CaseName" = c("Attorney General's Reference (No.23 of 2011)", "Attorney General's Reference (No.31 of 2016)", "Joseph Hill & Co Solicitors, Re"),
               "CaseID"= c("[2011]EWCACrim1496", "[2016]EWCACrim1386", "[2013]EWCACrim775"),
               "CaseTranscriptText" = c("sanchez 2011 02187 6 appeal criminal division 8 2011 2011 ewca crim 14962011 wl 844075 wales wednesday 8 2011 attorney general reference 23 2011 36 criminal act 1988 representation qc general qc appeared behalf attorney general",
                                        "attorney general reference 31 2016 201601021 2 appeal criminal division 20 2016 2016 ewca crim 13862016 wl 05335394 dbe honour qc sitting cacd wednesday 20 th 2016 reference attorney general 36 criminal act 1988 representation",
                                        "matter wasted costs against company solicitors 201205544 5 appeal criminal division 21 2013 2013 ewca crim 7752013 wl 2110641 date 21 05 2013 appeal honour pawlak 20111354 hearing date 13 th 2013 representation toole respondent qc appellants"))

1 Answer

Stack Overflow user

Accepted answer

Posted 2018-08-10 17:49:28

There are a few problems with your code. I will focus only on the last function you created, since I don't use the tau or RWeka packages.

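1. To use a tokenizer you need to specify tokenizer = ..., not tokenize = ...

2. Instead of Corpus, you need VCorpus.

3. After adjusting this in your function make_dtm, I was not happy with the results. Not everything specified in the control options is processed correctly. I created a second function, make_dtm_adjusted, so you can see the difference between the two.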
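One plausible reason for point 3 is ordering. As far as I understand tm's termFreq documentation, the stopwords option in control is applied to the tokens after the tokenizer has run, so a list of single-word stopwords never matches a two-word token. Treat that as an assumption to verify against your tm version. The base-R sketch below only illustrates the ordering effect; make_bigrams and stop_list are hypothetical names, not part of tm.

```r
# Base-R sketch of the ordering effect (hypothetical helpers, not tm internals).

# Build bigrams by pairing each word with its successor.
make_bigrams <- function(txt) {
  w <- strsplit(txt, "\\s+")[[1]]
  w <- w[nzchar(w)]
  if (length(w) < 2) return(character(0))
  paste(head(w, -1), tail(w, -1))
}

stop_list <- c("the", "of")  # toy stopword list, for illustration only
txt <- "the attorney general of wales"

# Filter AFTER tokenizing: no bigram equals a single stopword,
# so nothing is removed.
after <- make_bigrams(txt)
after <- after[!after %in% stop_list]

# Filter BEFORE tokenizing: stopwords are dropped first,
# then pairs are formed from the remaining words.
w <- strsplit(txt, "\\s+")[[1]]
before <- make_bigrams(paste(w[!w %in% stop_list], collapse = " "))

print(after)
print(before)
```

Cleaning the corpus with tm_map before building the matrix corresponds to the "before" case.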

# OP's function adjusted to make it work
library(tm)  # attaches NLP, which provides ngrams() and words()

make_dtm = function(main_df, stem=F){
  BigramTokenizer = function(x) unlist(lapply(ngrams(words(x), 2), paste, collapse = " "), use.names = FALSE)
  decisions = VCorpus(VectorSource(main_df$CaseTranscriptText))
  decisions.dtm = DocumentTermMatrix(decisions, control = list(tokenizer=BigramTokenizer,
                                                               stopwords=T,
                                                               tolower=T,
                                                               removeNumbers=T,
                                                               removePunctuation=T,
                                                               stemming = stem))
  return(decisions.dtm)
}

# improved function: clean the corpus first, then tokenize into bigrams
make_dtm_adjusted = function(main_df, stem=F){
  BigramTokenizer = function(x) unlist(lapply(ngrams(words(x), 2), paste, collapse = " "), use.names = FALSE)
  decisions = VCorpus(VectorSource(main_df$CaseTranscriptText))
  decisions <- tm_map(decisions, content_transformer(tolower))
  decisions <- tm_map(decisions, removeNumbers)
  decisions <- tm_map(decisions, removePunctuation)
  # specifying your own stopword list is better as you can use stopwords("smart")
  # or your own list
  decisions <- tm_map(decisions, removeWords, stopwords("english"))
  decisions <- tm_map(decisions, stripWhitespace)
  decisions.dtm = DocumentTermMatrix(decisions, control = list(stemming = stem,
                                                               tokenizer=BigramTokenizer))
  return(decisions.dtm)
}
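A quick way to confirm that the matrix really holds bigrams is to look at its terms: each one should contain a space. The sketch below assumes the tm package is installed and uses two made-up documents rather than the OP's data. It follows the tokenizer spelling used in this answer; as far as I recall, tm's termFreq documentation lists the option as tokenize, so check which name your version accepts.

```r
library(tm)  # attaches NLP, which provides ngrams() and words()

BigramTokenizer <- function(x) {
  unlist(lapply(ngrams(words(x), 2), paste, collapse = " "), use.names = FALSE)
}

# Two toy documents (made up for this check, not the OP's data)
docs <- VCorpus(VectorSource(c("attorney general reference",
                               "criminal appeal division")))

dtm <- DocumentTermMatrix(docs, control = list(tokenizer = BigramTokenizer))

# If bigrams were produced, every term contains a space
terms <- Terms(dtm)
print(terms)
print(all(grepl(" ", terms, fixed = TRUE)))
```

The same check can be run on the result of make_dtm_adjusted(x) with the sample data from the question.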

Original link:

https://stackoverflow.com/questions/51790212
