BeautifulSoup scraping format

Asked 2018-07-20 04:11:09

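This is my first time using BeautifulSoup, and I am trying to scrape store location data from a local convenience store.

However, I am running into problems removing blank lines when the data is passed into a CSV file. I have tried .replace('\n','') and .strip(), and neither worked.

I am also having trouble splitting data that is scraped and contained in the same sibling.

I have added the script below: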

    from bs4 import BeautifulSoup
    from requests import get
    import urllib.request
    import sched, time
    import csv

    url = 'http://www.cheers.com.sg/web/store_location.jsp'
    response = get(url)
    soup = BeautifulSoup(response.text, 'html.parser')
    #print (soup.prettify())

    #open a file for writing
    location_data = open('data/soupdata.csv', 'w', newline='')

    #create the csv writer object
    csvwriter = csv.writer(location_data)

    cheers = soup.find('div' , id="store_container")

    count = 0

    #Loop for Header tags
    for paragraph in cheers.find_all('b'):
        header1 = paragraph.text.replace(':' , '')
        header2 = paragraph.find_next('b').text.replace(':' , '')
        header3 = paragraph.find_next_siblings('b')[1].text.replace(':' , '')
        if count == 0:
            csvwriter.writerow([header1, header2, header3])
            count += 1
        break

    for paragraph in cheers.find_all('br'):
        brnext = paragraph.next_sibling.strip()
        brnext1 = paragraph.next_sibling
        test1 = brnext1.next_sibling.next_sibling
        print(test1)
        csvwriter.writerow([brnext, test1])

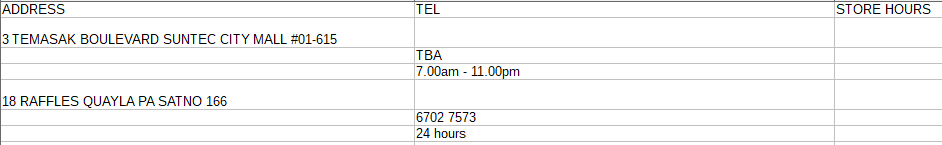

location_data.close()产生的产出样本:

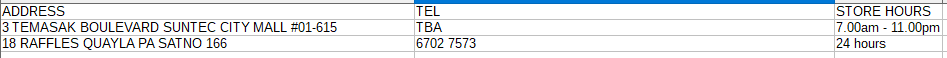

输出应该是什么样子的示例:

How can I achieve this?

Thanks in advance.

2 Answers

Stack Overflow user

Accepted answer

Posted 2018-07-20 05:08:06

You only need to change the way you extract the address, telephone number, and store hours.
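As a rough illustration of that change, here is a minimal sketch run on a made-up fragment. The markup, the address, and the phone number are assumptions invented for the example, and the real page may be structured differently. It shows why the address needs two `next_sibling` hops (a `<br/>` tag sits between the label and its text) while the other two fields need only one, and it strips each value, which the script in this answer does not do.

```python
from bs4 import BeautifulSoup

# Hypothetical fragment imitating one store entry (not copied from the real page).
html = """
<div class="store_col">
<b>Address:</b><br/>
1 Example Road, Singapore 000001<br/>
<b>Telephone:</b> 6000 0000<br/>
<b>Store Hours:</b> 24 hours<br/>
</div>
"""

soup = BeautifulSoup(html, "html.parser")
labels = soup.find_all("b")

# "Address:" is followed by a <br/> tag, so the text is the second sibling.
address = str(labels[0].next_sibling.next_sibling).strip()

# The other labels are followed directly by their text node.
tel = str(labels[1].next_sibling).strip()
hours = str(labels[2].next_sibling).strip()

print([address, tel, hours])
```

Applied to the live page, the same navigation looks like this: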

    import csv
    from bs4 import BeautifulSoup
    from requests import get

    url = 'http://www.cheers.com.sg/web/store_location.jsp'
    response = get(url)
    soup = BeautifulSoup(response.text, 'html.parser')
    # print (soup.prettify())

    # open a file for writing
    location_data = open('data/soupdata.csv', 'w', newline='')

    # create the csv writer object
    csvwriter = csv.writer(location_data)

    cheers = soup.find('div', id="store_container")

    count = 0

    # Loop for Header tags
    for paragraph in cheers.find_all('b'):
        header1 = paragraph.text.replace(':', '')
        header2 = paragraph.find_next('b').text.replace(':', '')
        header3 = paragraph.find_next_siblings('b')[1].text.replace(':', '')
        if count == 0:
            csvwriter.writerow([header1, header2, header3])
            count += 1
        break

    # Each store is a div holding exactly three <b> labels
    for paragraph in cheers.find_all('div'):
        label = paragraph.find_all('b')
        if len(label) == 3:
            print(label)
            address = label[0].next_sibling.next_sibling
            tel = label[1].next_sibling
            hours = label[2].next_sibling
            csvwriter.writerow([address, tel, hours])

    location_data.close()

Stack Overflow user

Posted 2018-07-20 07:03:46

To make it a bit more organized, you can try the following. I used .select() instead of find_all().
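To make the difference concrete, here is a small sketch comparing the two lookup styles on an invented fragment. The HTML and its values are assumptions made up for the example, and only the `store_container` id and `store_col` class are taken from this thread. It suggests that a single CSS selector can express the same nesting as a `find()` followed by `find_all()`, and that `b:nth-of-type(2)` picks the second `<b>` label inside a store block.

```python
from bs4 import BeautifulSoup

# Hypothetical markup with two store entries (values are placeholders).
html = """
<div id="store_container">
  <div class="store_col"><b>Address:</b><br/>1 Example Road<br/><b>Telephone:</b> 6000 0000<br/><b>Store Hours:</b> 24 hours</div>
  <div class="store_col"><b>Address:</b><br/>2 Sample Street<br/><b>Telephone:</b> 6111 1111<br/><b>Store Hours:</b> 7am - 11pm</div>
</div>
"""

soup = BeautifulSoup(html, "html.parser")

# find()/find_all(): narrow down by tag name and attributes in two steps.
container = soup.find("div", id="store_container")
cols_find = container.find_all("div", class_="store_col")

# select(): one CSS selector describes the same nesting.
cols_select = soup.select("#store_container .store_col")

# Both approaches should yield the same store blocks.
same_blocks = list(cols_find) == list(cols_select)

# nth-of-type(2) selects the second <b> in the block, i.e. the telephone label.
second_tel = cols_select[1].select_one("b:nth-of-type(2)").next_sibling.strip()

print(same_blocks, second_tel)
```

The full script for the live page: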

    import csv
    from bs4 import BeautifulSoup
    import requests

    url = 'http://www.cheers.com.sg/web/store_location.jsp'
    response = requests.get(url)
    soup = BeautifulSoup(response.text, 'html.parser')

    with open("output.csv","w",newline="") as infile:
        writer = csv.writer(infile)
        writer.writerow(["Address","Telephone","Store hours"])

        # One .store_col block per store
        for items in soup.select("#store_container .store_col"):
            addr = items.select_one("b").next_sibling.next_sibling
            tel = items.select_one("b:nth-of-type(2)").next_sibling
            store = items.select_one("b:nth-of-type(3)").next_sibling
            writer.writerow([addr,tel,store])
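On the blank-line problem from the question: neither script above strips the extracted strings before writing them. One plausible cause, offered as an assumption and not verified against the live page, is that the text nodes carry leading or trailing newlines, which `csv.writer` preserves inside quoted cells so that rows appear to contain empty lines. The sketch below uses only the standard library and hypothetical raw values to show one way to normalize each field before writing. The helper name `clean` is invented for the example.

```python
import csv
import io


def clean(field):
    """Collapse runs of whitespace (including newlines) into single spaces."""
    return " ".join(str(field).split())


# Hypothetical raw values, shaped like text nodes pulled from a parse tree.
raw_rows = [
    ["\n1 Example Road,\n Singapore 000001 ", " 6000 0000", " 24 hours\n"],
    ["\n2 Sample Street ", " 6111 1111", " 7am - 11pm\n"],
]

# Write to an in-memory buffer so the example does not touch the file system.
buffer = io.StringIO(newline="")
writer = csv.writer(buffer)
writer.writerow(["Address", "Telephone", "Store hours"])
for row in raw_rows:
    writer.writerow([clean(value) for value in row])

csv_text = buffer.getvalue()
print(csv_text)
```

In either script, the same helper could wrap each value passed to `writerow`, for example `writer.writerow([clean(addr), clean(tel), clean(store)])`.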

Original link (content from Stack Overflow):

https://stackoverflow.com/questions/51434949
