Streaming with Druid, Kafka, and Superset

Asked 2018-04-25 15:00:58

I am testing a data streaming setup with Kafka, Druid, and Superset.

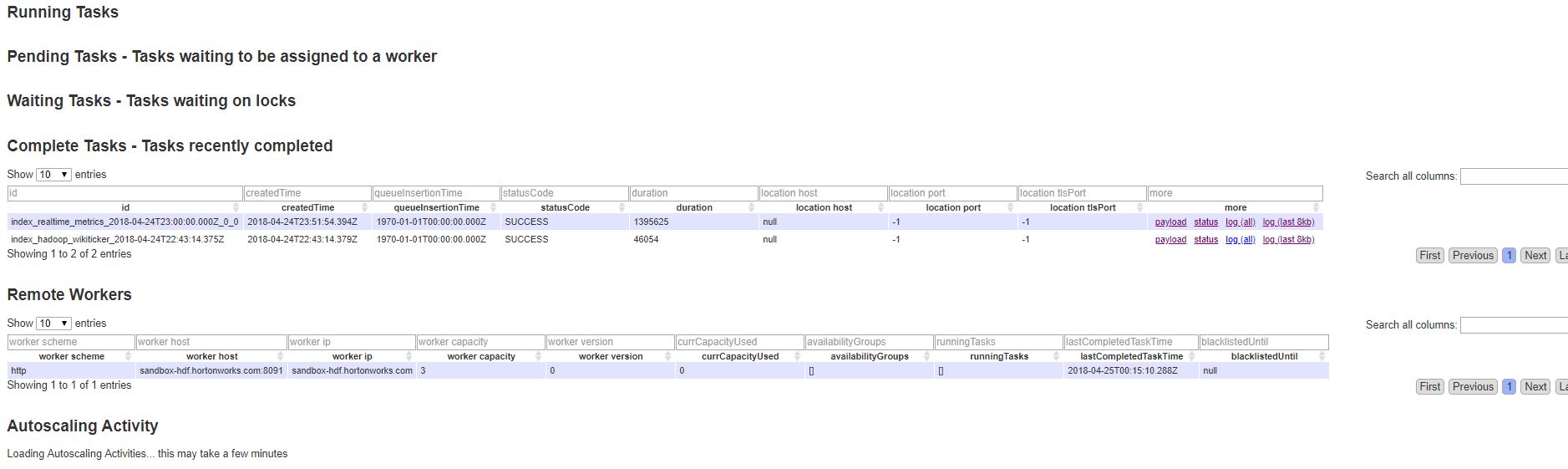

I have some data in Druid (see picture 1).

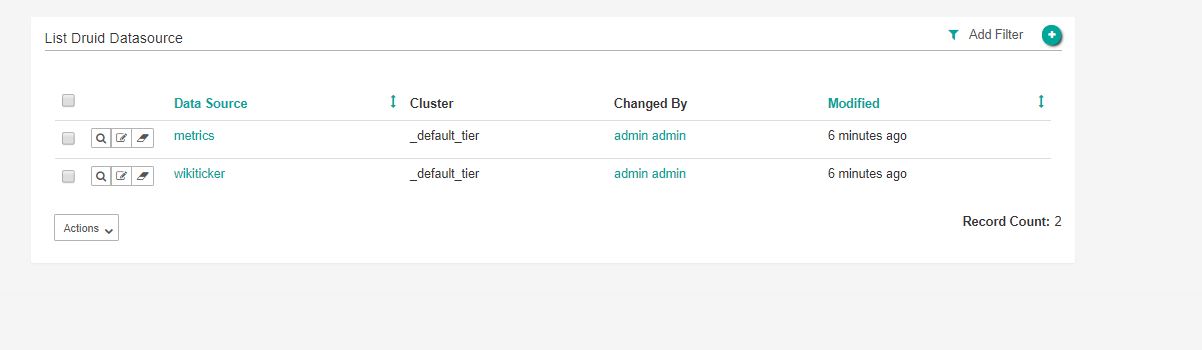

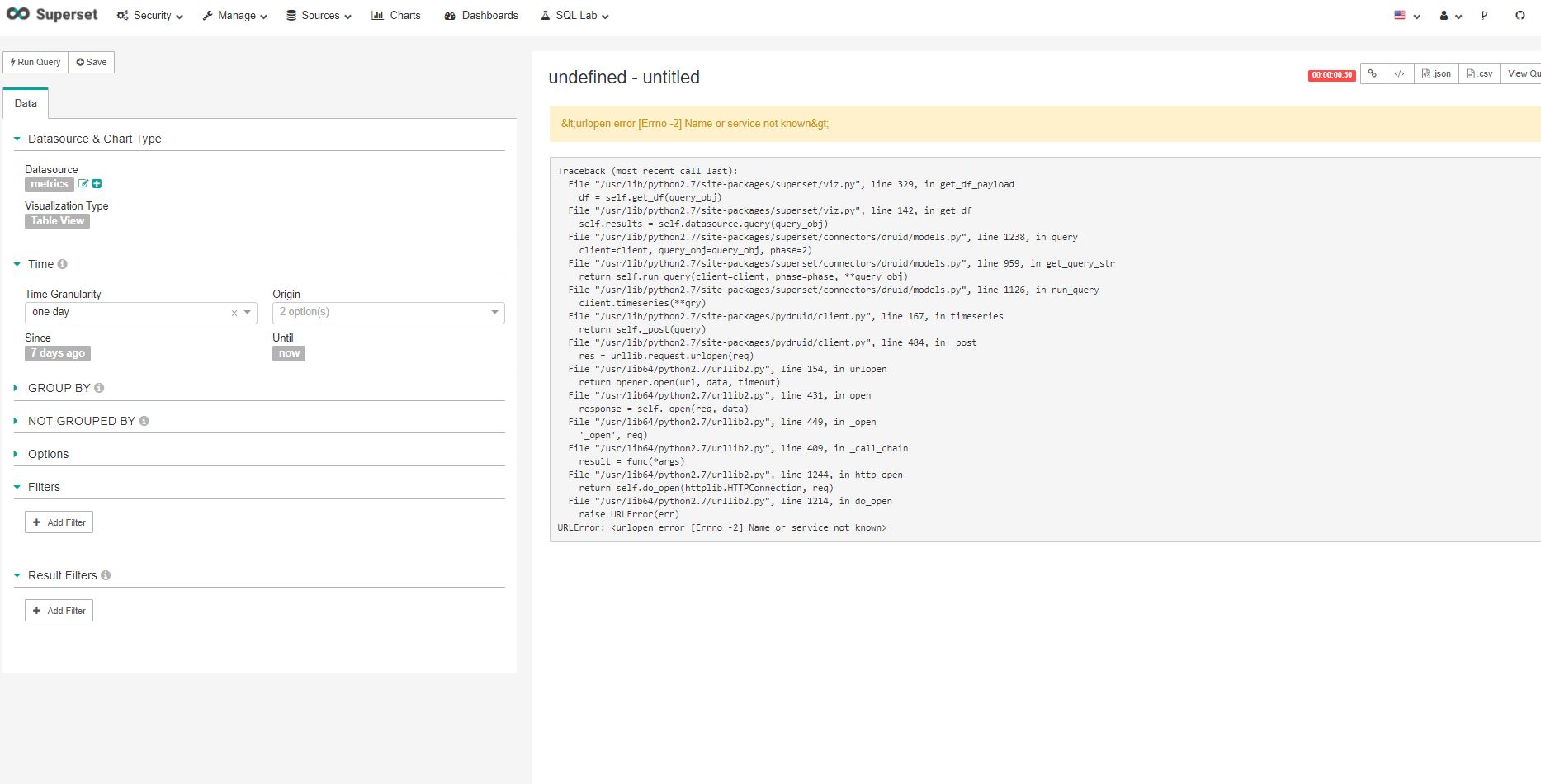

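After that, I can generate the Druid data source in Superset via the "Refresh Druid Metadata" option (see picture 2). The problem is that when I try to query the data, I get the following error message: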

    URLError: <urlopen error [Errno -2] Name or service not known>

    Traceback (most recent call last):
      File "/usr/lib/python2.7/site-packages/superset/viz.py", line 329, in get_df_payload
        df = self.get_df(query_obj)
      File "/usr/lib/python2.7/site-packages/superset/viz.py", line 142, in get_df
        self.results = self.datasource.query(query_obj)
      File "/usr/lib/python2.7/site-packages/superset/connectors/druid/models.py", line 1238, in query
        client=client, query_obj=query_obj, phase=2)
      File "/usr/lib/python2.7/site-packages/superset/connectors/druid/models.py", line 959, in get_query_str
        return self.run_query(client=client, phase=phase, **query_obj)
      File "/usr/lib/python2.7/site-packages/superset/connectors/druid/models.py", line 1126, in run_query
        client.timeseries(**qry)
      File "/usr/lib/python2.7/site-packages/pydruid/client.py", line 167, in timeseries
        return self._post(query)
      File "/usr/lib/python2.7/site-packages/pydruid/client.py", line 484, in _post
        res = urllib.request.urlopen(req)
      File "/usr/lib64/python2.7/urllib2.py", line 154, in urlopen
        return opener.open(url, data, timeout)
      File "/usr/lib64/python2.7/urllib2.py", line 431, in open
        response = self._open(req, data)
      File "/usr/lib64/python2.7/urllib2.py", line 449, in _open
        '_open', req)
      File "/usr/lib64/python2.7/urllib2.py", line 409, in _call_chain
        result = func(*args)
      File "/usr/lib64/python2.7/urllib2.py", line 1244, in http_open
        return self.do_open(httplib.HTTPConnection, req)
      File "/usr/lib64/python2.7/urllib2.py", line 1214, in do_open
        raise URLError(err)
    URLError: <urlopen error [Errno -2] Name or service not known>

See also picture 3.

Do you know what the problem is?

I feed Kafka through NiFi, and in SAM I have a Kafka source hooked up to a Druid sink.

Thanks!

- Picture 1
- Picture 2
- Picture 3: no data in Superset

2 Answers

Stack Overflow user

Accepted answer

Posted 2018-05-07 10:34:36

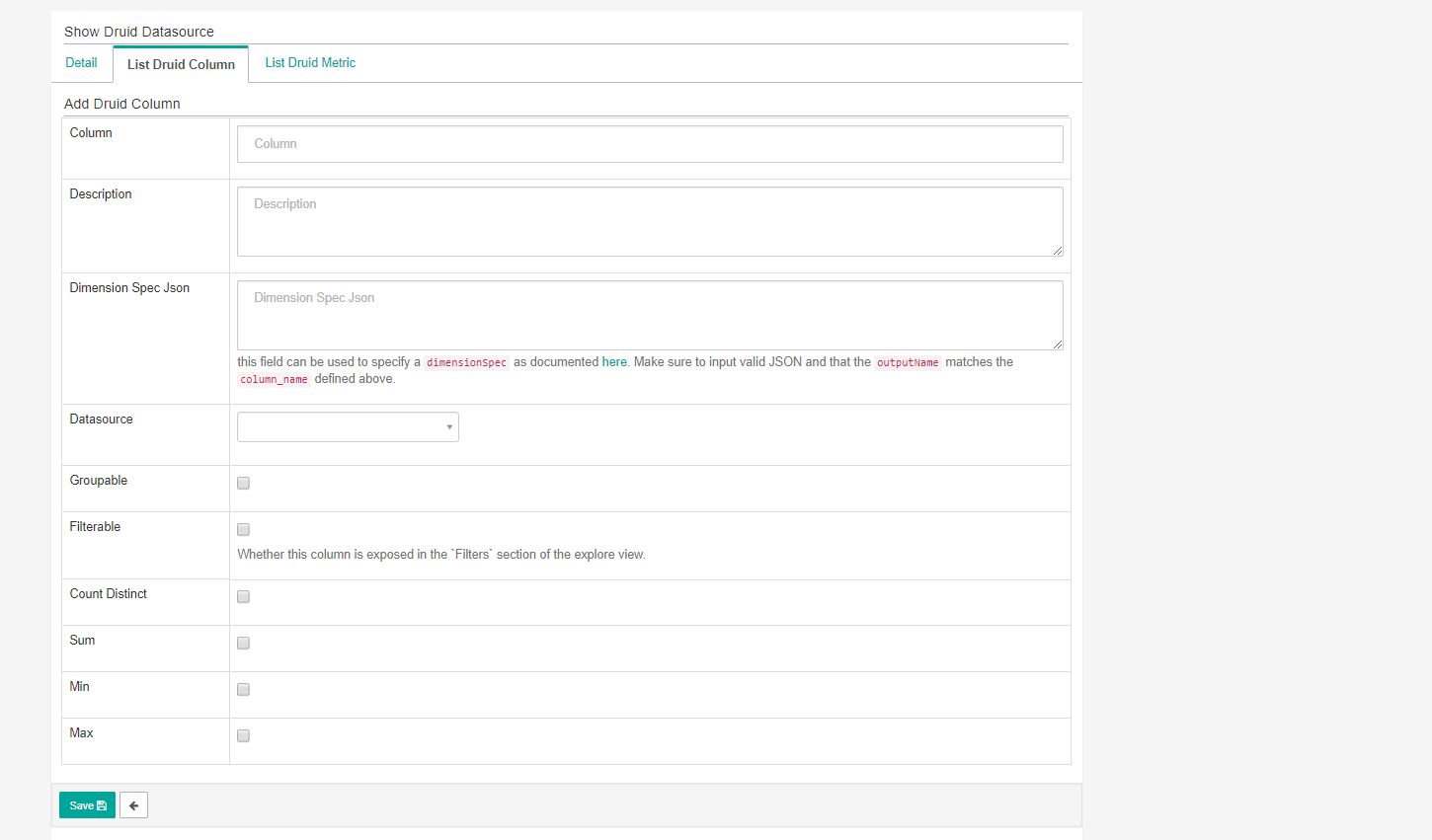

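Problem solved. The issue was that the broker host was not defined in the cluster configuration in the Superset UI. I set it to the value localhost, and now it is up and running.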
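For anyone hitting the same error: `[Errno -2] Name or service not known` is what Linux reports when a host name cannot be resolved, which fits a blank broker host field. Below is a minimal sketch (Python 3, standard library only) for checking whether a configured host resolves before pointing Superset at it. The function name `broker_host_resolves` is hypothetical, and the default port 8082 is an assumption based on Druid's usual broker default; adjust both to your setup.

```python
import socket


def broker_host_resolves(host, port=8082):
    """Return True if `host` resolves to an address, False otherwise.

    An empty host is treated as unresolvable, mirroring the case where
    the broker host field in the cluster configuration was left blank.
    The default port 8082 is an assumption, not taken from the question.
    """
    if not host:
        return False
    try:
        # getaddrinfo performs the same name lookup that urlopen relies on.
        socket.getaddrinfo(host, port)
    except socket.gaierror:
        # Resolution failed; urllib reports this kind of failure as
        # "URLError: <urlopen error [Errno -2] Name or service not known>".
        return False
    return True
```

Calling `broker_host_resolves("")` returns `False`, while a host that the Superset machine can resolve returns `True`. Resolution succeeding only shows the name is known; it does not prove that a broker is listening on that port.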

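Stack Overflow user

Posted 2018-05-03 06:47:35

It seems Superset is having trouble connecting to your broker node. Check your cluster health, in particular the broker and coordinator node logs.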
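Besides reading the logs, a quick reachability check can be scripted. The sketch below (Python 3, standard library only) assumes each Druid process exposes its `/status` HTTP endpoint and that you know the host and port of your broker and coordinator; the helper names `druid_status_url` and `fetch_druid_status` are hypothetical, and the ports in the usage note are only Druid's usual defaults, which may differ in your deployment.

```python
import json
import urllib.error
import urllib.request


def druid_status_url(host, port):
    """Build the URL of a Druid process's /status endpoint."""
    return "http://{}:{}/status".format(host, port)


def fetch_druid_status(host, port, timeout=5):
    """Return the parsed JSON from /status, or None if the node is unreachable.

    Host and port are supplied by the caller; nothing here is specific
    to the deployment described in the question.
    """
    url = druid_status_url(host, port)
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return json.loads(resp.read().decode("utf-8"))
    except (urllib.error.URLError, OSError, ValueError):
        # Covers DNS failures, refused connections, timeouts,
        # and responses that are not valid JSON.
        return None
```

For example, `fetch_druid_status("localhost", 8082)` would probe a broker on its usual default port and `fetch_druid_status("localhost", 8081)` a coordinator. A `None` result from the machine running Superset suggests the same connectivity problem Superset is hitting.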

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/50025523
