CMSampleBuffer frame converted to vImage has wrong colors

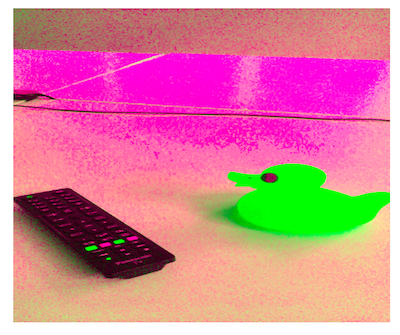

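I'm trying to convert the CMSampleBuffer from the camera output to vImage and then apply some processing. Unfortunately, even without any further editing, the frame I get from the buffer has wrong colors (see the image above).

Implementation (memory management and error handling are not considered):

Configuring the video output device: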

videoDataOutput = AVCaptureVideoDataOutput()

videoDataOutput.videoSettings = [String(kCVPixelBufferPixelFormatTypeKey): kCVPixelFormatType_32BGRA]

videoDataOutput.alwaysDiscardsLateVideoFrames = true

videoDataOutput.setSampleBufferDelegate(self, queue: captureQueue)

videoConnection = videoDataOutput.connection(withMediaType: AVMediaTypeVideo)

captureSession.sessionPreset = AVCaptureSessionPreset1280x720

let videoDevice = AVCaptureDevice.defaultDevice(withMediaType: AVMediaTypeVideo)

guard let videoDeviceInput = try? AVCaptureDeviceInput(device: videoDevice) else {
    return
}

Creating the vImage from the CMSampleBuffer received from the camera:

// Convert `CMSampleBuffer` to `CVImageBuffer`

guard let pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer) else { return }

var buffer: vImage_Buffer = vImage_Buffer()

buffer.data = CVPixelBufferGetBaseAddress(pixelBuffer)

buffer.rowBytes = CVPixelBufferGetBytesPerRow(pixelBuffer)

buffer.width = vImagePixelCount(CVPixelBufferGetWidth(pixelBuffer))

buffer.height = vImagePixelCount(CVPixelBufferGetHeight(pixelBuffer))

let vformat = vImageCVImageFormat_CreateWithCVPixelBuffer(pixelBuffer)

let bitmapInfo:CGBitmapInfo = CGBitmapInfo(rawValue: CGImageAlphaInfo.last.rawValue | CGBitmapInfo.byteOrder32Little.rawValue)

var cgFormat = vImage_CGImageFormat(bitsPerComponent: 8,
                                    bitsPerPixel: 32,
                                    colorSpace: nil,
                                    bitmapInfo: bitmapInfo,
                                    version: 0,
                                    decode: nil,
                                    renderingIntent: .defaultIntent)

// Create vImage

vImageBuffer_InitWithCVPixelBuffer(&buffer, &cgFormat, pixelBuffer, vformat!.takeRetainedValue(), cgColor, vImage_Flags(kvImageNoFlags))

Converting the buffer to a UIImage:

For testing, the CVPixelBuffer is exported to a UIImage, but adding it to the video buffer gives the same result.

var dstPixelBuffer: CVPixelBuffer?

let status = CVPixelBufferCreateWithBytes(nil, Int(buffer.width), Int(buffer.height),
                                          kCVPixelFormatType_32BGRA, buffer.data,
                                          Int(buffer.rowBytes), releaseCallback,
                                          nil, nil, &dstPixelBuffer)

let destCGImage = vImageCreateCGImageFromBuffer(&buffer, &cgFormat, nil, nil, numericCast(kvImageNoFlags), nil)?.takeRetainedValue()

// create a UIImage

let exportedImage = destCGImage.flatMap { UIImage(cgImage: $0, scale: 0.0, orientation: UIImageOrientation.right) }

DispatchQueue.main.async {
    self.previewView.image = exportedImage
}

2 Answers

Stack Overflow user

Posted on 2018-01-11 15:51:58

Try setting the color space on your CV image format:

let vformat = vImageCVImageFormat_CreateWithCVPixelBuffer(pixelBuffer).takeRetainedValue()

vImageCVImageFormat_SetColorSpace(vformat, CGColorSpaceCreateDeviceRGB())

...and update your call to vImageBuffer_InitWithCVPixelBuffer to reflect the fact that vformat is now a managed reference:

let error = vImageBuffer_InitWithCVPixelBuffer(&buffer, &cgFormat, pixelBuffer, vformat, nil, vImage_Flags(kvImageNoFlags))

Finally, you can remove the following lines, since vImageBuffer_InitWithCVPixelBuffer does this work for you:

// buffer.data = CVPixelBufferGetBaseAddress(pixelBuffer)

// buffer.rowBytes = CVPixelBufferGetBytesPerRow(pixelBuffer)

// buffer.width = vImagePixelCount(CVPixelBufferGetWidth(pixelBuffer))

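// buffer.height = vImagePixelCount(CVPixelBufferGetHeight(pixelBuffer))

Note that you don't need to lock the Core Video pixel buffer: if you check the headerdoc, it says "It is not necessary to lock the CVPixelBuffer before calling this function".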
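The steps in this answer can be pulled together into one function. The following is only a sketch under assumptions: the helper name `makeVImageBuffer(from:)` is hypothetical, the alpha-first bitmap info is borrowed from the other answer on this page, and the code has been written against the Accelerate signatures used in the question rather than tested on a live capture session.

```swift
#if canImport(Accelerate)  // Apple platforms only
import Accelerate
import CoreGraphics
import CoreVideo
import Foundation

// Hypothetical helper: returns a vImage_Buffer initialized from the frame, or nil on failure.
func makeVImageBuffer(from pixelBuffer: CVPixelBuffer) -> vImage_Buffer? {
    // Describe BGRA8888 as alpha-first, 32-bit little-endian.
    let bitmapInfo = CGBitmapInfo(rawValue: CGImageAlphaInfo.first.rawValue
                                          | CGBitmapInfo.byteOrder32Little.rawValue)
    var cgFormat = vImage_CGImageFormat(bitsPerComponent: 8,
                                        bitsPerPixel: 32,
                                        colorSpace: nil,
                                        bitmapInfo: bitmapInfo,
                                        version: 0,
                                        decode: nil,
                                        renderingIntent: .defaultIntent)

    // Build the Core Video format description and give it an explicit color space.
    guard let unmanagedFormat = vImageCVImageFormat_CreateWithCVPixelBuffer(pixelBuffer) else {
        return nil
    }
    let cvFormat = unmanagedFormat.takeRetainedValue()
    guard vImageCVImageFormat_SetColorSpace(cvFormat, CGColorSpaceCreateDeviceRGB()) == kvImageNoError else {
        return nil
    }

    // With kvImageNoFlags, vImage allocates buffer.data itself,
    // so width, height, rowBytes and data are not set by hand.
    var buffer = vImage_Buffer()
    let error = vImageBuffer_InitWithCVPixelBuffer(&buffer, &cgFormat, pixelBuffer,
                                                   cvFormat, nil, vImage_Flags(kvImageNoFlags))
    guard error == kvImageNoError else { return nil }
    return buffer
}
#endif
```

Because the buffer's memory is allocated by vImage in this configuration, the caller would be responsible for calling `free(buffer.data)` once the frame has been processed.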

Stack Overflow user

Posted on 2017-09-11 12:44:38

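The call to vImageBuffer_InitWithCVPixelBuffer is modifying the contents of your vImage_Buffer and CVPixelBuffer, which is a bit naughty because in your (linked) code you promise not to modify the pixels when you lock the buffer with:

CVPixelBufferLockBaseAddress(pixelBuffer, .readOnly)

The correct way to initialize CGBitmapInfo for BGRA8888 is alpha first, 32-bit little endian. This is not obvious, but it is covered in the header comments for vImage_CGImageFormat in vImage_Utilities.h:

let bitmapInfo = CGBitmapInfo(rawValue: CGImageAlphaInfo.first.rawValue | CGImageByteOrderInfo.order32Little.rawValue)

What I don't understand is why vImageBuffer_InitWithCVPixelBuffer is modifying your buffer, since cgFormat (desiredFormat) should match vformat. The function is meant to modify the buffer, though, so perhaps you should copy the data first.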
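To see why "alpha first, 32-bit little endian" corresponds to BGRA in memory, it can help to look at the bytes directly. The sketch below is plain Swift with no Apple frameworks; the helper names `packARGB`, `packRGBA` and `littleEndianBytes` are made up for illustration and are not part of vImage or Core Graphics.

```swift
// Pack 8-bit components into a 32-bit word with alpha in the most significant byte (alpha first).
func packARGB(a: UInt8, r: UInt8, g: UInt8, b: UInt8) -> UInt32 {
    return UInt32(a) << 24 | UInt32(r) << 16 | UInt32(g) << 8 | UInt32(b)
}

// Pack the same components with alpha in the least significant byte (alpha last).
func packRGBA(r: UInt8, g: UInt8, b: UInt8, a: UInt8) -> UInt32 {
    return UInt32(r) << 24 | UInt32(g) << 16 | UInt32(b) << 8 | UInt32(a)
}

// The bytes of a word in the order they sit in memory when stored little-endian.
func littleEndianBytes(of word: UInt32) -> [UInt8] {
    return withUnsafeBytes(of: word.littleEndian) { Array($0) }
}

// Alpha first + little endian: memory order is B, G, R, A, which is BGRA8888.
let alphaFirstBytes = littleEndianBytes(of: packARGB(a: 0xFF, r: 0x11, g: 0x22, b: 0x33))
print(alphaFirstBytes)  // [51, 34, 17, 255], i.e. 0x33, 0x22, 0x11, 0xFF

// Alpha last + little endian: memory order is A, B, G, R, which does not match a BGRA buffer.
let alphaLastBytes = littleEndianBytes(of: packRGBA(r: 0x11, g: 0x22, b: 0x33, a: 0xFF))
print(alphaLastBytes)   // [255, 51, 34, 17], i.e. 0xFF, 0x33, 0x22, 0x11
```

The second layout is what the question's `CGImageAlphaInfo.last` combination describes, so every channel of a BGRA frame would be read from the wrong byte, which is consistent with the shifted colors in the screenshot.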

https://stackoverflow.com/questions/46140785
