Can I run a notebook in cluster deploy mode?

Asked 2017-09-01 09:29:55

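Context: the cluster is configured as follows:

- Everything runs in Docker.

- node1: Spark master

- node2: JupyterHub (I also run the notebooks here)

- node3-7: Spark worker nodes

- I can telnet and ping from the worker nodes to node2 and vice versa.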

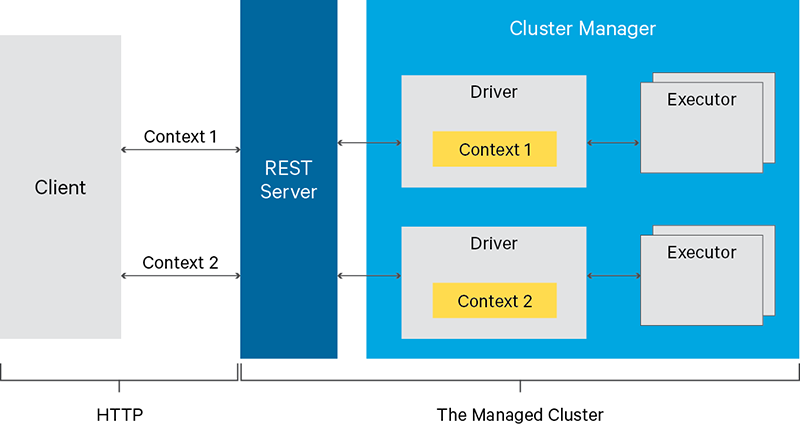

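Problem: I am trying to create a Spark session in a PySpark Jupyter notebook that runs in cluster deploy mode. I want the driver to run on a node other than the one running the Jupyter notebook. Right now I can run jobs on the cluster, but only with the driver running on node2.

After digging into this, I found this Stack Overflow post, which claims that if you run an interactive shell with Spark, you can only do so in local deploy mode (that is, client mode, where the driver is located on the machine you are working on). The post goes on to say that something like Jupyter will not work in cluster deploy mode either, but I cannot find any documentation that confirms this. Can someone confirm whether JupyterHub can run in cluster mode?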

My attempt to run a Spark session in cluster deploy mode:

    from pyspark.sql import SparkSession

    # "<node 3 ip>" is a placeholder for the address of node3
    spark = SparkSession.builder\
        .enableHiveSupport()\
        .config("spark.local.ip", "<node 3 ip>")\
        .config("spark.driver.host", "<node 3 ip>")\
        .config('spark.submit.deployMode', 'cluster')\
        .getOrCreate()

Error:

    /usr/spark/python/pyspark/sql/session.py in getOrCreate(self)
        167 for key, value in self._options.items():
        168 sparkConf.set(key, value)
    --> 169 sc = SparkContext.getOrCreate(sparkConf)
        170 # This SparkContext may be an existing one.
        171 for key, value in self._options.items():

    /usr/spark/python/pyspark/context.py in getOrCreate(cls, conf)
        308 with SparkContext._lock:
        309 if SparkContext._active_spark_context is None:
    --> 310 SparkContext(conf=conf or SparkConf())
        311 return SparkContext._active_spark_context
        312

    /usr/spark/python/pyspark/context.py in __init__(self, master, appName, sparkHome, pyFiles, environment, batchSize, serializer, conf, gateway, jsc, profiler_cls)
        113 """
        114 self._callsite = first_spark_call() or CallSite(None, None, None)
    --> 115 SparkContext._ensure_initialized(self, gateway=gateway, conf=conf)
        116 try:
        117 self._do_init(master, appName, sparkHome, pyFiles, environment, batchSize, serializer,

    /usr/spark/python/pyspark/context.py in _ensure_initialized(cls, instance, gateway, conf)
        257 with SparkContext._lock:
        258 if not SparkContext._gateway:
    --> 259 SparkContext._gateway = gateway or launch_gateway(conf)
        260 SparkContext._jvm = SparkContext._gateway.jvm
        261

    /usr/spark/python/pyspark/java_gateway.py in launch_gateway(conf)
         93 callback_socket.close()
         94 if gateway_port is None:
    ---> 95 raise Exception("Java gateway process exited before sending the driver its port number")
         96
         97 # In Windows, ensure the Java child processes do not linger after Python has exited.

    Exception: Java gateway process exited before sending the driver its port number

3 Answers

Stack Overflow user

Accepted answer

Posted 2017-09-01 09:46:34

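Currently, standalone mode does not support cluster mode for Python applications.

Even if it did, cluster mode is not applicable to interactive environments.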
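As a rough Python rendering of the two restrictions stated here (the Scala excerpt that follows this sketch is the check the answer quotes), the rule could be written as below. The function name and parameters are hypothetical and are not part of Spark; the only Spark-specific assumption is that standalone master URLs start with `spark://`.

```python
from typing import Optional


def deploy_mode_error(master: str, deploy_mode: str,
                      is_python_app: bool, is_shell: bool) -> Optional[str]:
    """Return an error message for an unsupported combination, else None.

    Hypothetical helper: it only mirrors the two restrictions described
    in the answer and does not call Spark.
    """
    if deploy_mode != "cluster":
        # Client mode is not affected by either restriction.
        return None
    if is_shell:
        # Interactive shells (and notebook kernels built on them) need a local driver.
        return "Cluster deploy mode is not applicable to Spark shells."
    if is_python_app and master.startswith("spark://"):
        # Standalone masters use spark:// URLs.
        return ("Cluster deploy mode is not supported for Python "
                "applications on standalone clusters.")
    return None


# The asker's setup: a standalone master and an interactive PySpark session.
print(deploy_mode_error("spark://node1:7077", "cluster",
                        is_python_app=True, is_shell=True))
```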

    case (_, CLUSTER) if isShell(args.primaryResource) =>
      error("Cluster deploy mode is not applicable to Spark shells.")
    case (_, CLUSTER) if isSqlShell(args.mainClass) =>
      error("Cluster deploy mode is not applicable to Spark SQL shell.")

Stack Overflow user

Posted 2019-09-09 21:30:50

- 间接使用纱线的一种“支持”方式--

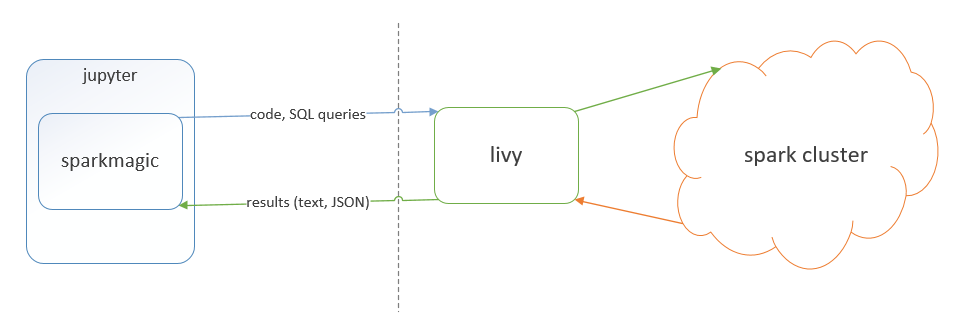

Jupyter中的集群模式是通过阿帕奇·利维。

基本上,Livy是Spark集群的REST服务。

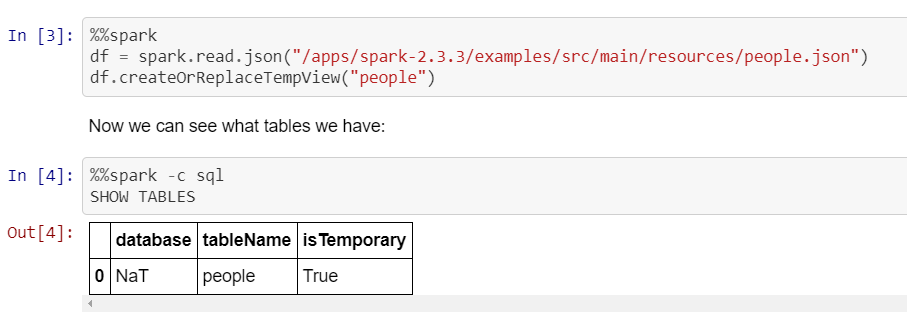

Jupyter有一个扩展"火花魔法“,允许将Livy与Jupyter集成。

带有Jupyter绑定的Spark-magic示例(驱动程序在纱线集群中运行,而在本例中不是本地运行,如上文所述):

- 在木星中使用

YARN-cluster模式的另一种方法是使用Jupyter Enterprise Gatewayhttps://jupyter-enterprise-gateway.readthedocs.io/en/latest/kernel-yarn-cluster-mode.html#configuring-kernels-for-yarn-cluster-mode - 还有一些商业选项通常使用我上面列出的方法之一。例如,我们的一些用户使用IBM (也称为IBM )使用上面的第一个选项

Apache Livy。
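To make the "REST service" point concrete, here is a minimal sketch of what talking to Livy from Python could look like. It assumes a Livy server at the hypothetical address `http://livy-host:8998` (8998 is Livy's default port) and only builds the HTTP requests for creating a PySpark session and submitting a statement; nothing is sent. The helper names are illustrative and are not part of Livy or sparkmagic.

```python
import json
import urllib.request

LIVY_URL = "http://livy-host:8998"  # assumption: hypothetical host name


def build_session_request(livy_url: str, kind: str = "pyspark") -> urllib.request.Request:
    """Build (but do not send) a POST /sessions request."""
    body = json.dumps({"kind": kind}).encode("utf-8")
    return urllib.request.Request(
        url=f"{livy_url}/sessions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def build_statement_request(livy_url: str, session_id: int, code: str) -> urllib.request.Request:
    """Build (but do not send) a POST /sessions/{id}/statements request."""
    body = json.dumps({"code": code}).encode("utf-8")
    return urllib.request.Request(
        url=f"{livy_url}/sessions/{session_id}/statements",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


session_req = build_session_request(LIVY_URL)
statement_req = build_statement_request(LIVY_URL, 0, "spark.range(10).count()")
print(session_req.get_method(), session_req.full_url)
print(statement_req.get_method(), statement_req.full_url)
```

Passing either request to `urllib.request.urlopen` would send it. As far as I understand, where the driver ends up is decided by the Livy server's own Spark configuration rather than by the notebook, which is why this route does not hit the shell restriction.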

Stack Overflow user

Posted 2017-09-01 09:46:41

I am no expert on PySpark, but have you tried changing the kernel.json file of your pyspark kernel?

Maybe you could add the deploy-mode cluster option there.

    "env": {
        "SPARK_HOME": "/your_dir/spark",
        "PYTHONPATH": "/your_dir/spark/python:/your_dir/spark/python/lib/py4j-0.9-src.zip",
        "PYTHONSTARTUP": "/your_dir/spark/python/pyspark/shell.py",
        "PYSPARK_SUBMIT_ARGS": "--master local[*] pyspark-shell"
    }

You would change this line:

    "PYSPARK_SUBMIT_ARGS": "--master local[*] pyspark-shell"

to use the cluster master IP and --deploy-mode cluster.

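I am not sure what this would change, but maybe it works; I would like to know too!

Good luck

Edit: I found this, which might help you, although it is from 2015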
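For reference, the kernel.json change suggested in this answer could be sketched as follows. The helper `with_submit_args` and the `<master ip>` placeholder are hypothetical; 7077 is the default port of a standalone master, and `--master` and `--deploy-mode` are standard spark-submit options. Note that, per the accepted answer, Spark rejects cluster deploy mode for shells, so this shows only the shape of the edit and is not expected to make a `pyspark-shell` kernel run its driver remotely.

```python
import json


def with_submit_args(kernel_spec: dict, master_url: str, deploy_mode: str) -> dict:
    """Return a copy of a kernel spec with PYSPARK_SUBMIT_ARGS rewritten.

    Hypothetical helper: the input dict is left unmodified.
    """
    updated = dict(kernel_spec)
    env = dict(updated.get("env", {}))
    env["PYSPARK_SUBMIT_ARGS"] = (
        f"--master {master_url} --deploy-mode {deploy_mode} pyspark-shell"
    )
    updated["env"] = env
    return updated


spec = {
    "env": {
        "SPARK_HOME": "/your_dir/spark",
        "PYSPARK_SUBMIT_ARGS": "--master local[*] pyspark-shell",
    }
}

# "<master ip>" is a placeholder, as in the answer.
new_spec = with_submit_args(spec, "spark://<master ip>:7077", "cluster")
print(json.dumps(new_spec, indent=2))
```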

The original content of this page is provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/45997150
