How would I get information from a list of links and then dump it into a JSON object?


Asked 2017-07-07 04:40:03

I'm new to both Python and BeautifulSoup. Any help is greatly appreciated.

I know how to build one company's listing information, but only after clicking on its link.

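import requests
from bs4 import BeautifulSoup

url = "http://data-interview.enigmalabs.org/companies/"
r = requests.get(url)
soup = BeautifulSoup(r.content, "html.parser")

links = soup.find_all("a")
link_list = []

# Print each link's target and text
for link in links:
    print(link.get("href"), link.text)

g_data = soup.find_all("div", {"class": "table-responsive"})

# Store every link; list.append() returns None, so printing its result only prints "None"
for link in links:
    link_list.append(link)

Can anyone show how to first scrape the links and then build a JSON of all the company listing data for the site?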
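Collecting the links first and then visiting each one in a loop is what replaces clicking through by hand. Below is a minimal sketch of the link-collection step that runs offline on a hypothetical HTML snippet (the markup and the `/companies/...` paths are assumptions, not the real site's structure). It uses the standard library's `html.parser` instead of BeautifulSoup only so that it has no third-party dependencies, and resolves each relative `href` against the site root with `urljoin`.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

BASE = "http://data-interview.enigmalabs.org/"


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag, resolved to an absolute URL."""

    def __init__(self, base):
        super().__init__()
        self.base = base
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:  # skip <a> tags that have no href
                self.links.append(urljoin(self.base, href))


def collect_links(html, base=BASE):
    parser = LinkCollector(base)
    parser.feed(html)
    parser.close()
    return parser.links


# Hypothetical listing-page markup, for illustration only
sample = """
<table>
  <tr><td><a href="/companies/Dickens-Tillman">Dickens-Tillman</a></td></tr>
  <tr><td><a href="/companies/Klein-Powlowski">Klein-Powlowski</a></td></tr>
  <tr><td><a>no link here</a></td></tr>
</table>
"""

links = collect_links(sample)
print(links)
```

Each absolute URL in `links` can then be fetched with `requests.get(url)` and its detail table parsed, with no manual clicking involved.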

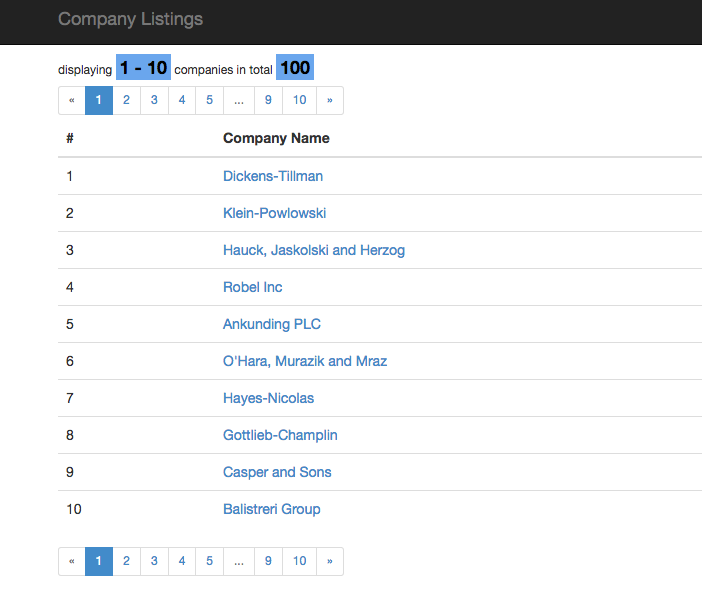

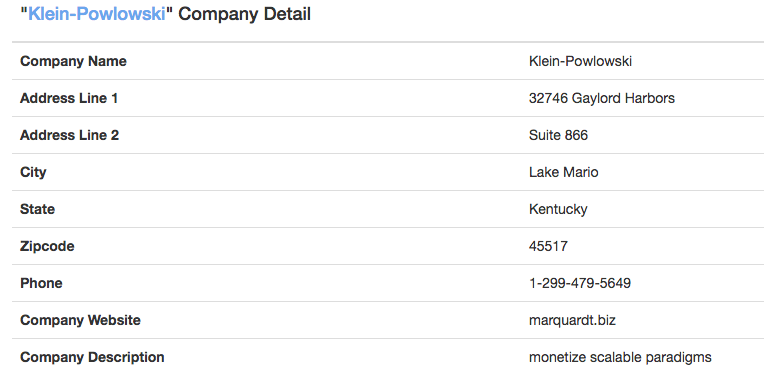

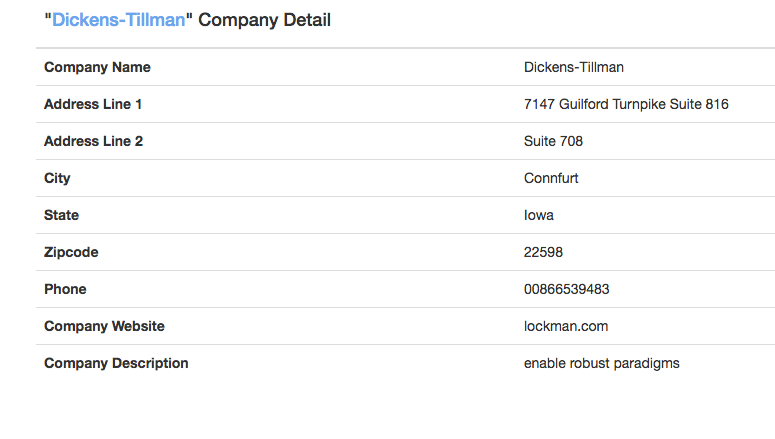

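I have attached sample images for better visualization.

How can I scrape the site and build a JSON like the example below without having to click each individual link?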

Example of the expected output:

all_listing = [
    {"Dickens-Tillman": {'Company Detail': {
        'Company Name': 'Dickens-Tillman',
        'Address Line 1 ': '7147 Guilford Turnpike Suit816',
        'Address Line 2 ': 'Suite 708',
        'City': 'Connfurt',
        'State': 'Iowa',
        'Zipcode ': '22598',
        'Phone': '00866539483',
        'Company Website ': 'lockman.com',
        'Company Description': 'enable robust paradigms'}}},
    {"Klein-Powlowski": {'Company Detail': {
        'Company Name': 'Klein-Powlowski',
        'Address Line 1 ': '32746 Gaylord Harbors',
        'Address Line 2 ': 'Suite 866',
        'City': 'Lake Mario',
        'State': 'Kentucky',
        'Zipcode ': '45517',
        'Phone': '1-299-479-5649',
        'Company Website ': 'marquardt.biz',
        'Company Description': 'monetize scalable paradigms'}}}]

print(all_listing)
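A scraper that stores one flat dict per company (`data[name][label] = value`) can be reshaped into the nested list above with a small post-processing step. This is a sketch under stated assumptions: `to_listing` is a hypothetical helper name, the sample `data` (including the lowercase slug keys) is made up for illustration, and the outer key prefers the scraped `'Company Name'` value, falling back to the dict key when that label is missing.

```python
import json


def to_listing(data):
    """Reshape {key: {label: value}} into [{name: {'Company Detail': {...}}}]."""
    listing = []
    for key, details in data.items():
        # Prefer the scraped company name; fall back to the original dict key
        name = details.get("Company Name", key)
        listing.append({name: {"Company Detail": dict(details)}})
    return listing


# Hypothetical scraped data in a flat {key: {label: value}} format
data = {
    "dickens-tillman": {"Company Name": "Dickens-Tillman", "City": "Connfurt", "State": "Iowa"},
    "klein-powlowski": {"Company Name": "Klein-Powlowski", "City": "Lake Mario", "State": "Kentucky"},
}

all_listing = to_listing(data)
# json.dumps emits real JSON (double quotes); print(all_listing) shows a Python repr instead
print(json.dumps(all_listing, indent=4))
```

Printing the result with `json.dumps` rather than `print(all_listing)` matters if the output is meant to be valid JSON, since Python's repr uses single-quoted strings.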

Answer 1

Stack Overflow user

Posted 2017-07-13 22:19:18

This is my final solution to the question I asked.

import json
from os.path import basename as bn
from urllib.parse import urljoin  # Python 3 location of urlparse.urljoin

import bs4
import requests

links = []
data = {}
base = 'http://data-interview.enigmalabs.org/'

# Approach
# 1. On each listing page, collect the links
# 2. Iterate over each link in the list
# 3. Before moving on, check the list of links: if it is correct, move on; if not, review step 2, then step 1
# 4. Write the correct data to a JSON file

def bs(r):
    # Fetch base + r and return the first <table> on that page
    return bs4.BeautifulSoup(requests.get(urljoin(base, r)).content, 'html.parser').find('table')

for i in range(1, 11):
    print('Collecting page %d' % i)
    # Collect the href of every "a" tag in the table on each listing page
    links += [a['href'] for a in bs('companies?page=%d' % i).find_all('a')]

# Now that all links are collected into a list, iterate over each link.
# All the info is within an HTML table, so collect every row into data.
for link in links:
    print('Processing %s' % link)
    name = bn(link)
    data[name] = {}
    for row in bs(link).find_all('tr'):
        desc, cont = row.find_all('td')
        data[name][desc.text] = cont.text

print(json.dumps(data))

# Final step: write all the data, formatted, to a file
json_data = json.dumps(data, indent=4)
with open("solution.json", "w") as f:
    f.write(json_data)

Page content provided by Stack Overflow; translation support provided by Tencent Cloud Xiaowei's IT-domain engine.
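The answer unpacks each row with `desc, cont = row.find_all('td')`, which raises `ValueError` on any row that does not contain exactly two `<td>` cells (for example, a header row made of `<th>` cells). A defensive variant simply skips such rows. The sketch below assumes the same two-column label/value layout the answer relies on; it runs on hypothetical markup and uses the standard library's `html.parser` in place of BeautifulSoup so it needs no extra packages. Stripping surrounding whitespace from each cell is an extra choice of this sketch, not something the answer does.

```python
from html.parser import HTMLParser


class TwoColumnTable(HTMLParser):
    """Record the text of every <td> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row being parsed
        self._cell = None  # text chunks of the cell being parsed

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None

    def handle_data(self, text):
        if self._cell is not None:
            self._cell.append(text)


def parse_detail_table(html):
    parser = TwoColumnTable()
    parser.feed(html)
    parser.close()
    # Keep only label/value rows instead of crashing on header or malformed rows
    return {row[0]: row[1] for row in parser.rows if len(row) == 2}


# Hypothetical detail-page markup, for illustration only
sample = """
<table>
  <tr><th>Field</th><th>Value</th></tr>
  <tr><td>Company Name</td><td> Dickens-Tillman </td></tr>
  <tr><td>City</td><td>Connfurt</td></tr>
</table>
"""

details = parse_detail_table(sample)
print(details)
```

With BeautifulSoup the same guard is a length check before unpacking: `cells = row.find_all('td')`, then only use `cells[0]` and `cells[1]` when `len(cells) == 2`.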

Original link:

https://stackoverflow.com/questions/44962661

