Python中基于印象的n-图分析

Python中基于印象的n-图分析

提问于 2017-04-20 19:29:14

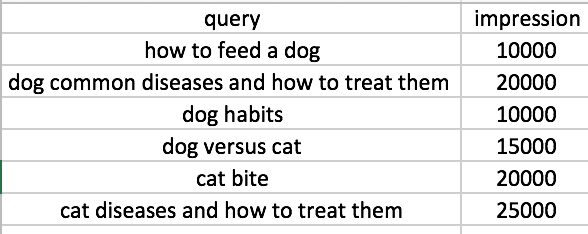

我的示例数据集是这样的:

我的目标是了解一个单词、两个单词、三个单词、四个单词、五个单词和六个单词有多少印象。我以前运行N-g算法,但它只返回计数。这是我现在的n克密码。

def find_ngrams(text, n):

word_vectorizer = CountVectorizer(ngram_range=(n,n), analyzer='word')

sparse_matrix = word_vectorizer.fit_transform(text)

frequencies = sum(sparse_matrix).toarray()[0]

ngram =

pd.DataFrame(frequencies,index=word_vectorizer.get_feature_names(),columns=

['frequency'])

ngram = ngram.sort_values(by=['frequency'], ascending=[False])

return ngram

one = find_ngrams(df['query'],1)

bi = find_ngrams(df['query'],2)

tri = find_ngrams(df['query'],3)

quad = find_ngrams(df['query'],4)

pent = find_ngrams(df['query'],5)

hexx = find_ngrams(df['query'],6)我想我需要做的是: 1.将查询拆分成一个单词到6个单词。2.把印象附加到分词上。3.重组所有的分词,并对印象进行总结。

以第二个查询“狗常见疾病及如何治疗”为例。

(1) 1-gram: dog, common, diseases, and, how, to, treat, them;

(2) 2-gram: dog common, common diseases, diseases and, and how, how to, to treat, treat them;

(3) 3-gram: dog common diseases, common diseases and, diseases and how, and how to, how to treat, to treat them;

(4) 4-gram: dog common diseases and, common diseases and how, diseases and how to, and how to treat, how to treat them;

(5) 5-gram: dog common diseases and how, the queries into one word, diseases and how to treat, and how to treat them;

(6) 6-gram: dog common diseases and how to, common diseases and how to treat, diseases and how to treat them;回答 2

Stack Overflow用户

回答已采纳

发布于 2017-04-20 20:28:10

这是一种方法!不是最有效的,但是,我们不要过早地进行优化。其思想是使用apply获得一个新的pd.DataFrame,为所有的ngram提供新的列,将其与旧的dataframe连接起来,并进行一些叠加和分组。

import pandas as pd

df = pd.DataFrame({

"squery": ["how to feed a dog", "dog habits", "to cat or not to cat", "dog owners"],

"count": [1000, 200, 100, 150]

})

def n_grams(txt):

grams = list()

words = txt.split(' ')

for i in range(len(words)):

for k in range(1, len(words) - i + 1):

grams.append(" ".join(words[i:i+k]))

return pd.Series(grams)

counts = df.squery.apply(n_grams).join(df)

counts.drop("squery", axis=1).set_index("count").unstack()\

.rename("ngram").dropna().reset_index()\

.drop("level_0", axis=1).groupby("ngram")["count"].sum()最后一个表达式将返回一个pd.Series,如下所示。

ngram

a 1000

a dog 1000

cat 200

cat or 100

cat or not 100

cat or not to 100

cat or not to cat 100

dog 1350

dog habits 200

dog owners 150

feed 1000

feed a 1000

feed a dog 1000

habits 200

how 1000

how to 1000

how to feed 1000

how to feed a 1000

how to feed a dog 1000

not 100

not to 100

not to cat 100

or 100

or not 100

or not to 100

or not to cat 100

owners 150

to 1200

to cat 200

to cat or 100

to cat or not 100

to cat or not to 100

to cat or not to cat 100

to feed 1000

to feed a 1000

to feed a dog 1000Spiffy方法

这个可能会更有效一些,但它仍然可以实现CountVectorizer中的密集的n-g向量。它将每列上的一个与印象数相乘,然后在各列上相加,得到每克印象的总数。它给出了与上面相同的结果。需要注意的一点是,具有重复ngram的查询也计算双倍。

import numpy as np

from sklearn.feature_extraction.text import CountVectorizer

cv = CountVectorizer(ngram_range=(1, 5))

ngrams = cv.fit_transform(df.squery)

mask = np.repeat(df['count'].values.reshape(-1, 1), repeats = len(cv.vocabulary_), axis = 1)

index = list(map(lambda x: x[0], sorted(cv.vocabulary_.items(), key = lambda x: x[1])))

pd.Series(np.multiply(mask, ngrams.toarray()).sum(axis = 0), name = "counts", index = index)Stack Overflow用户

发布于 2017-04-20 20:50:30

像这样的事怎么样:

def find_ngrams(input, n):

# from http://locallyoptimal.com/blog/2013/01/20/elegant-n-gram-generation-in-python/

return zip(*[input[i:] for i in range(n)])

def impressions_by_ngrams(data, ngram_max):

from collections import defaultdict

result = [defaultdict(int) for n in range(ngram_max)]

for query, impressions in data:

words = query.split()

for n in range(ngram_max):

for ngram in find_ngrams(words, n + 1):

result[n][ngram] += impressions

return result示例:

>>> data = [('how to feed a dog', 10000),

... ('see a dog run', 20000)]

>>> ngrams = impressions_by_ngrams(data, 3)

>>> ngrams[0] # unigrams

defaultdict(<type 'int'>, {('a',): 30000, ('how',): 10000, ('run',): 20000, ('feed',): 10000, ('to',): 10000, ('see',): 20000, ('dog',): 30000})

>>> ngrams[1][('a', 'dog')] # impressions for bigram 'a dog'

30000页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/43528296

复制相关文章

相似问题