Implementing a decision tree

Asked 2016-10-03 08:10:14

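I am learning how to implement a simple decision tree in C#. Can someone explain what it looks like in pseudocode, or point me to a simple tutorial for implementing it in C#?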

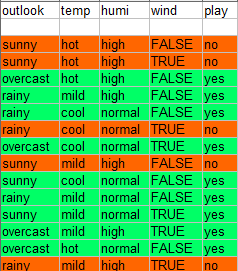

I have this dataset:

(Source: http://storm.cis.fordham.edu/~gweiss/data-mining/weka-data/weather.nominal.arff)

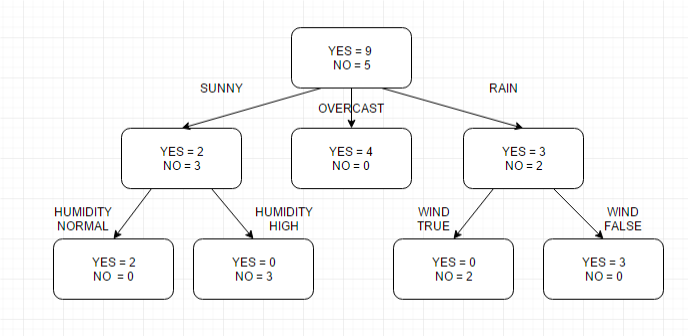

I drew a graphical decision tree.

(Sorry for my English.)

My idea is simply this:

if outlook = "overcast" then yes

if outlook = "sunny" and humidity = "normal" then yes

if outlook = "sunny" and humidity = "high" then no

if outlook = "rain" and wind = "true" then no

if outlook = "rain" and wind = "false" then yes

I really don't know how to continue from here.
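The rules above describe one fixed tree, so they can be written directly as nested conditionals. The C# sketch below is illustrative only. `HandWrittenTree` and `Play` are hypothetical names. The attribute values are assumed to be spelled as in weather.nominal.arff: outlook `sunny`/`overcast`/`rainy`, humidity `high`/`normal`, and windy `TRUE`/`FALSE`. In that dataset, all four overcast days have play = yes, so the overcast branch returns true.

```csharp
// Hypothetical hand-written version of the tree from the question.
// Values follow weather.nominal.arff: outlook is "sunny", "overcast" or "rainy",
// humidity is "high" or "normal", windy is "TRUE" or "FALSE".
public static class HandWrittenTree
{
    // Returns true when the tree predicts play = yes.
    public static bool Play(string outlook, string humidity, string windy)
    {
        switch (outlook)
        {
            case "overcast":
                return true;                 // every overcast day is "yes"
            case "sunny":
                return humidity == "normal"; // sunny days split on humidity
            case "rainy":
                return windy == "FALSE";     // rainy days split on windy
            default:
                throw new System.ArgumentException($"Unknown outlook: {outlook}");
        }
    }
}
```

For example, `HandWrittenTree.Play("sunny", "high", "FALSE")` returns `false`. Hard-coding only works for a tree you have already drawn. An algorithm such as ID3 builds the tree from the table instead.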

2 answers

Stack Overflow user

Posted 2016-10-03 08:32:12

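To partially answer the question: the general concept of a decision tree is described here. To implement a decision tree of the kind shown above, you can declare a class whose members match the columns of the table in the question. Based on that type, you then need a tree data structure that does not limit the number of children per node. The actual data is contained only in the leaves, but it is still best to make every member of the base type nullable. That way, each node sets only the members that are fixed to a specific value for its children and leaves the rest null. In addition, each node should record how many of its examples have the value no and how many have the value yes.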
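The structure described above can be sketched in C# as follows. This is one possible reading of the answer, not its author's code, and every type and member name is hypothetical. `WeatherRecord` mirrors the table's columns with nullable members. `TreeNode` holds an unbounded list of children, a `WeatherRecord` in which only the tested attribute is set, and the yes/no counts.

```csharp
using System.Collections.Generic;

public enum Outlook { Sunny, Overcast, Rainy }
public enum Temperature { Hot, Mild, Cool }
public enum Humidity { High, Normal }

// One row of the weather table. Every member is nullable, so a tree node
// can reuse this type and set only the attribute value(s) it tests.
public class WeatherRecord
{
    public Outlook? Outlook { get; set; }
    public Temperature? Temperature { get; set; }
    public Humidity? Humidity { get; set; }
    public bool? Windy { get; set; }
    public bool? Play { get; set; } // the class label (yes/no)
}

// A tree node with any number of children.
public class TreeNode
{
    // Attribute values fixed at this node; members that are not tested stay null.
    public WeatherRecord Condition { get; } = new WeatherRecord();
    public List<TreeNode> Children { get; } = new List<TreeNode>();

    // How many training examples reaching this node are "yes" / "no".
    public int YesCount { get; set; }
    public int NoCount { get; set; }

    public bool IsLeaf => Children.Count == 0;

    // Adds a child and returns it, so branches can be built fluently.
    public TreeNode AddChild(TreeNode child)
    {
        Children.Add(child);
        return child;
    }
}
```

For the weather data, the root would hold 9 yes and 5 no. Its child for outlook = sunny would have `Condition.Outlook = Outlook.Sunny`, with `YesCount = 2` and `NoCount = 3`.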

Stack Overflow user

Posted 2016-12-08 11:01:35

If you are building a decision tree based on the ID3 algorithm, you can refer to this pseudocode:

    ID3 (Examples, Target_Attribute, Attributes)
        Create a root node for the tree
        If all examples are positive, return the single-node tree Root, with label = +.
        If all examples are negative, return the single-node tree Root, with label = -.
        If the set of predicting attributes is empty, return the single-node tree Root,
            with label = most common value of the target attribute in the examples.
        Otherwise begin
            A ← the attribute that best classifies examples.
            Decision tree attribute for Root = A.
            For each possible value vi of A,
                Add a new tree branch below Root, corresponding to the test A = vi.
                Let Examples(vi) be the subset of examples that have the value vi for A.
                If Examples(vi) is empty
                    then below this new branch add a leaf node with label = most common target value in the examples
                Else below this new branch add the subtree ID3 (Examples(vi), Target_Attribute, Attributes - {A})
        End
        Return Root

If you want to learn more about the ID3 algorithm, follow the link: ID3 algorithm
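The ID3 pseudocode can be turned into a compact C# sketch under a few assumptions. Each example is a `Dictionary<string, string>` that maps attribute names to values, and the target (for example `play`) is one of its keys. "Best classifies" is measured by information gain, the criterion ID3 normally uses. `Node`, `Id3.Build`, `Id3.Gain` and `Id3.Classify` are hypothetical names. The possible values of each attribute are collected once from the full training set, so the empty-subset branch of the pseudocode can actually occur.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// A leaf has Label set; an internal node tests Attribute and has one child per value.
public class Node
{
    public string Label;
    public string Attribute;
    public Dictionary<string, Node> Children = new Dictionary<string, Node>();
}

public static class Id3
{
    // H(S) = -sum_i p_i * log2(p_i), over the target values in S.
    public static double Entropy(List<Dictionary<string, string>> examples, string target)
    {
        double total = examples.Count;
        return examples.GroupBy(e => e[target])
                       .Select(g => g.Count() / total)
                       .Sum(p => -p * Math.Log(p, 2));
    }

    // Gain(S, A) = H(S) - sum_v |S_v| / |S| * H(S_v).
    public static double Gain(List<Dictionary<string, string>> examples, string target, string attribute)
    {
        double total = examples.Count;
        double remainder = examples.GroupBy(e => e[attribute])
                                   .Sum(g => g.Count() / total * Entropy(g.ToList(), target));
        return Entropy(examples, target) - remainder;
    }

    static string MostCommon(List<Dictionary<string, string>> examples, string target) =>
        examples.GroupBy(e => e[target]).OrderByDescending(g => g.Count()).First().Key;

    // Entry point: records every possible value of each attribute, then recurses.
    public static Node Build(List<Dictionary<string, string>> examples, string target, List<string> attributes)
    {
        var domains = attributes.ToDictionary(
            a => a, a => examples.Select(e => e[a]).Distinct().ToList());
        return Build(examples, target, attributes, domains);
    }

    static Node Build(List<Dictionary<string, string>> examples, string target,
                      List<string> attributes, Dictionary<string, List<string>> domains)
    {
        var root = new Node();
        var labels = examples.Select(e => e[target]).Distinct().ToList();
        if (labels.Count == 1) { root.Label = labels[0]; return root; } // all + or all -
        if (attributes.Count == 0) { root.Label = MostCommon(examples, target); return root; }

        // A <- the attribute with the highest information gain.
        string a = attributes.OrderByDescending(x => Gain(examples, target, x)).First();
        root.Attribute = a;
        var remaining = attributes.Where(x => x != a).ToList(); // Attributes - {A}
        foreach (string v in domains[a])
        {
            var subset = examples.Where(e => e[a] == v).ToList(); // Examples(vi)
            root.Children[v] = subset.Count == 0
                ? new Node { Label = MostCommon(examples, target) }
                : Build(subset, target, remaining, domains);
        }
        return root;
    }

    // Follows the branch matching each tested value until it reaches a leaf.
    public static string Classify(Node node, Dictionary<string, string> example)
    {
        while (node.Label == null) node = node.Children[example[node.Attribute]];
        return node.Label;
    }
}
```

The sketch can be run on the 14 rows of weather.nominal.arff, with target `play` and attributes `outlook`, `temperature`, `humidity` and `windy`. It should pick `outlook` at the root (gain ≈ 0.247 bits), `humidity` under `sunny` and `windy` under `rainy`, with `overcast` as a pure `yes` leaf. `Classify` throws `KeyNotFoundException` for an attribute value never seen in training. You may want to fall back to the node's majority label instead.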

Original page content provided by Stack Overflow; translation support provided by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/39827088
