Error when creating a word cloud in R (Error in simple_triplet_matrix: 'i, j, v' different lengths)

I have the following R code to fetch recent tweets about a local mayoral candidate and create a word cloud:

```r
library(twitteR)
library(ROAuth)
require(RCurl)
library(stringr)
library(tm)
library(ggmap)
library(plyr)
library(dplyr)
library(SnowballC)
library(wordcloud)

(...)

setup_twitter_oauth(...)

N = 10000  # Number of tweets
S = 200    # 200 km radius from Natal (covers the whole Natal area)
candidate = 'Carlos+Eduardo'

# Lists so I can add more cities in future code
lats = c(-5.7792569)
lons = c(-35.200916)

# Gets the tweets from every city
result = do.call(
  rbind,
  lapply(
    1:length(lats),
    function(i) searchTwitter(
      candidate,
      lang="pt-br",
      n=N,
      resultType="recent",
      geocode=paste(lats[i], lons[i], paste0(S,"km"), sep=",")
    )
  )
)

# Get the latitude and longitude of each tweet,
# the tweet itself, how many times it was retweeted and favorited,
# the date and time it was tweeted, etc., and build a data frame.
result_lat = sapply(result, function(x) as.numeric(x$getLatitude()))
result_lat = sapply(result_lat, function(z) ifelse(length(z) != 0, z, NA))
result_lon = sapply(result, function(x) as.numeric(x$getLongitude()))
result_lon = sapply(result_lon, function(z) ifelse(length(z) != 0, z, NA))
result_date = lapply(result, function(x) x$getCreated())
result_date = sapply(result_date,
  function(x) strftime(x, format="%d/%m/%Y %H:%M:%S", tz="UTC")
)
result_text = sapply(result, function(x) x$getText())
result_text = unlist(result_text)
is_retweet = sapply(result, function(x) x$getIsRetweet())
retweeted = sapply(result, function(x) x$getRetweeted())
retweet_count = sapply(result, function(x) x$getRetweetCount())
favorite_count = sapply(result, function(x) x$getFavoriteCount())
favorited = sapply(result, function(x) x$getFavorited())

tweets = data.frame(
  cbind(
    tweet = result_text,
    date = result_date,
    lat = result_lat,
    lon = result_lon,
    is_retweet = is_retweet,
    retweeted = retweeted,
    retweet_count = retweet_count,
    favorite_count = favorite_count,
    favorited = favorited
  )
)

# Word Cloud
# Text stemming requires the package 'SnowballC'.
# https://cran.r-project.org/web/packages/SnowballC/index.html

# Create corpus
corpus = Corpus(VectorSource(tweets$tweet))
corpus = tm_map(corpus, removePunctuation)
corpus = tm_map(corpus, removeWords, stopwords('portuguese'))
corpus = tm_map(corpus, stemDocument)
wordcloud(corpus, max.words = 50, random.order = FALSE)
```

But I get these errors:

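```
Error in simple_triplet_matrix(i = i, j = j, v = as.numeric(v), nrow = length(allTerms),  :
  'i, j, v' different lengths
In addition: Warning messages:
1: In doRppAPICall("search/tweets", n, params = params, retryOnRateLimit = retryOnRateLimit,  :
  10000 tweets were requested but the API can only return 518
  # I'm fine with this; I can't get more tweets than exist
2: In mclapply(unname(content(x)), termFreq, control) :
  all scheduled cores encountered errors in user code
3: In simple_triplet_matrix(i = i, j = j, v = as.numeric(v), nrow = length(allTerms),  :
  NAs introduced by coercion
```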

This is the first time I've built a word cloud; I followed tutorials like this one.

Is there a way to fix this? Another thing: the class of tweets$tweet is "factor". Should I convert it to something else? If so, how do I do that?
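On the factor question: in R versions before 4.0.0, `data.frame()` defaults to `stringsAsFactors = TRUE`, and `cbind()` has already coerced every column (including the counts and logicals) to character, so every column ends up as a factor. A minimal sketch, with made-up vectors standing in for `result_text` and `retweet_count`, showing both converting the existing columns and building the data frame without `cbind()`:

```r
# Made-up example vectors standing in for result_text and retweet_count
result_text   <- c("Carlos Eduardo em Natal", "Debate hoje")
retweet_count <- c(3, 10)

# Same pattern as the question: cbind() builds a character matrix,
# and stringsAsFactors = TRUE (the default before R 4.0.0) makes factors
tweets_old <- data.frame(cbind(tweet = result_text,
                               retweet_count = retweet_count),
                         stringsAsFactors = TRUE)
print(class(tweets_old$tweet))  # "factor"

# Option 1: convert the existing factor columns back
tweets_old$tweet <- as.character(tweets_old$tweet)
# as.numeric() on a factor returns level codes, so go through character
tweets_old$retweet_count <- as.numeric(as.character(tweets_old$retweet_count))

# Option 2: drop cbind() so each column keeps its own type
tweets <- data.frame(tweet = result_text,
                     retweet_count = retweet_count,
                     stringsAsFactors = FALSE)
str(tweets)
```

Option 2 is usually simpler, since it avoids the round trip through character for the numeric and logical columns as well.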

2 Answers

Stack Overflow user

Posted on 2016-09-30 16:21:20

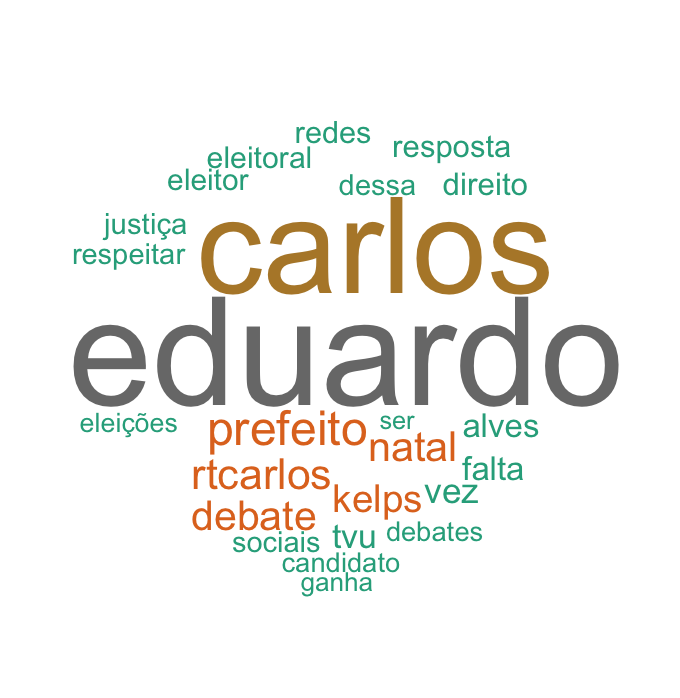

I followed this tutorial, which defines a function to "clean" the text and creates a TermDocumentMatrix (instead of using stemDocument) before building the word cloud. It works now.
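The tutorial's actual cleaning function isn't shown, so the sketch below uses a hypothetical `clean_text()` helper and made-up example tweets. It follows the approach described above: clean the raw text first, build a `TermDocumentMatrix`, and pass words plus frequencies (rather than a corpus) to `wordcloud()`. The `iconv()` step, which drops invalid multibyte bytes, is an assumption: malformed characters in tweet text are one commonly reported trigger of the `'i, j, v' different lengths` error, but the answer does not say this was the cause here.

```r
library(tm)
library(wordcloud)

# Hypothetical cleaning helper; the tutorial's own function may differ
clean_text <- function(x) {
  x <- iconv(x, from = "UTF-8", to = "UTF-8", sub = "")  # drop invalid bytes
  x <- gsub("http\\S+", "", x)      # remove URLs
  x <- gsub("@\\w+", "", x)         # remove @mentions
  x <- gsub("[[:punct:]]", " ", x)  # replace punctuation with spaces
  x <- gsub("[[:digit:]]", "", x)   # remove numbers
  x <- tolower(x)
  x <- gsub("\\s+", " ", x)         # collapse repeated whitespace
  trimws(x)
}

# Made-up example tweets; in the question this would be
# as.character(tweets$tweet)
tweet_text <- c("Carlos Eduardo visita Natal http://t.co/abc",
                "@fulano Carlos Eduardo no debate em Natal!",
                "Debate hoje: Carlos Eduardo e a proposta para Natal")

corpus <- Corpus(VectorSource(clean_text(tweet_text)))
corpus <- tm_map(corpus, removeWords, stopwords("portuguese"))

# Term frequencies, most frequent first
tdm  <- TermDocumentMatrix(corpus)
freq <- sort(rowSums(as.matrix(tdm)), decreasing = TRUE)

# min.freq defaults to 3, so lower it for small samples
wordcloud(names(freq), freq, min.freq = 1,
          max.words = 50, random.order = FALSE)
```

Passing `names(freq)` and `freq` keeps the term counting under your control, so a malformed document shows up while the text is being cleaned rather than deep inside `wordcloud()`.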

Stack Overflow user

Posted on 2016-09-24 17:55:55

I think the problem is that wordcloud is not defined for tm corpus objects. Install the quanteda package and try this:

```r
plot(quanteda::corpus(corpus), max.words = 50, random.order = FALSE)
```

https://stackoverflow.com/questions/39668845
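The call above relies on the quanteda API as it was in 2016. In current quanteda (version 3 and later), word-cloud plotting is in the separate quanteda.textplots package and takes a document-feature matrix. A sketch under that assumption, using made-up example tweets:

```r
library(quanteda)
library(quanteda.textplots)

# Made-up example tweets; in the question this would be
# as.character(tweets$tweet)
tweet_text <- c("Carlos Eduardo visita Natal http://t.co/abc",
                "@fulano Carlos Eduardo no debate em Natal!",
                "Debate hoje: Carlos Eduardo e a proposta para Natal")

# Tokenize, dropping punctuation and URLs, then Portuguese stopwords
toks <- tokens(corpus(tweet_text), remove_punct = TRUE, remove_url = TRUE)
toks <- tokens_remove(toks, stopwords("pt"))

# dfm() lowercases features by default
d <- dfm(toks)

# min_count defaults to 3, so lower it for small samples
textplot_wordcloud(d, max_words = 50, min_count = 1, random_order = FALSE)
```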
