Scraping patent data from the Indian patent website

Asked 2016-09-06 19:44:12

I am trying to write a web scraper for the Indian patent search website to get data about patents. Here is the code I have so far.

# import the necessary modules
import urllib2

# import the Beautiful Soup functions to parse the data
from bs4 import BeautifulSoup

# the website you are trying to scrape
patentsite = "http://ipindiaservices.gov.in/publicsearch/"

# query the website and return the HTML to the variable 'page'
page = urllib2.urlopen(patentsite)

# parse the HTML in the 'page' variable and store it in Beautiful Soup format
soup = BeautifulSoup(page)

print soup

Unfortunately, either the Indian patent website is not robust, or I don't know how to go any further with this.

This is the output of the code above.

<!--
###################################################################
## ##
## ##
## SIDDHAST.COM ##
## ##
## ##
###################################################################
--><!DOCTYPE HTML>
<html>
<head>
<meta content="IE=edge" http-equiv="X-UA-Compatible"/>
<meta charset="utf-8"/>
<title>:: InPASS - Indian Patent Advanced Search System ::</title>
<link href="resources/ipats-all.css" rel="stylesheet"/>
<script src="app.js" type="text/javascript"></script>
<link href="resources/app.css" rel="stylesheet"/>
</head>
<body></body>
</html>

What I want is this: given a company name, the scraper should fetch all the patents for that company. Once that part works I would like to do other things, such as supplying a set of inputs that the scraper uses to look up patents. But I am stuck at this point and cannot move forward.

Any tips on how to get this data would be greatly appreciated.

1 Answer

Stack Overflow user

Accepted answer

Answered 2016-09-06 23:22:29

你只需请求就可以做到这一点。这个帖子是用一个param http://ipindiaservices.gov.in/publicsearch/resources/webservices/search.php来写的,_rc_是我们用_time.time创建的一个时间戳。

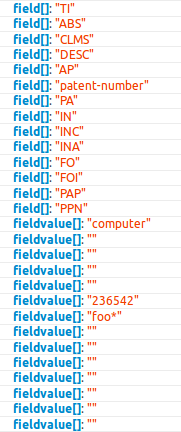

"field[]"中的每个值应该与"fieldvalue[]"中的每个值相匹配,然后再匹配到"operator[]",无论您选择*AND* *OR*还是*NOT*,每个键后的[]指定我们传递的是一个值数组,如果没有这样的操作,什么都不会起作用。

import requests
from time import time

data = {
    "publication_type_published": "on",
    "publication_type_granted": "on",
    "fieldDate": "APD",
    "datefieldfrom": "19120101",
    "datefieldto": "20160906",
    "operatordate": " AND ",
    "field[]": ["PA"],  # claims, description, patent-number codes go here
    "fieldvalue[]": ["chris*"],  # matching values for the fields above go here
    "operator[]": [" AND "],  # matching logic for the fields above goes here
    "page": "1",  # gives you next page results
    "start": "0",  # not sure what effect this actually has
    "limit": "25",  # not sure how this relates, as len(r.json()[u'record']) stays 25 regardless
}

# _dc is the current timestamp with the decimal point removed
post = "http://ipindiaservices.gov.in/publicsearch/resources/webservices/search.php?_dc={}".format(
    str(time()).replace(".", ""))

with requests.Session() as s:
    # visit the search page first, then post the query as an XHR request
    s.get("http://ipindiaservices.gov.in/publicsearch/")
    s.headers.update({"X-Requested-With": "XMLHttpRequest"})
    r = s.post(post, data=data)
    print(r.json())

The output will look like the following. I can't add all of it because there is too much data to post:

{u'success': True, u'record': [{u'Publication_Status': u'Published', u'appDate': u'2016/06/16', u'pubDate': u'2016/08/31', u'title': u'ACTUATOR FOR DEPLOYABLE IMPLANT', u'sourceID': u'inpat', u'abstract': u'\n Systems and methods are provided for usin.............

If you use the record key, you get a list of dicts like the following:

{u'Publication_Status': u'Published', u'appDate': u'2015/01/27', u'pubDate': u'2015/06/26', u'title': u'CORRUGATED PALLET', u'sourceID': u'inpat', u'abstract': u'\n A corrugated paperboard pallet is produced from two flat blanks which comprise a pallet top and a pallet bottom. The two blanks are each folded to produce only two parallel vertically extending double thickness ribs three horizontal panels two vertical side walls and two horizontal flaps. The ribs of the pallet top and pallet bottom lock each other from opening in the center of the pallet by intersecting perpendicularly with notches in the ribs. The horizontal flaps lock the ribs from opening at the edges of the pallet by intersecting perpendicularly with notches and the vertical sidewalls include vertical flaps that open inward defining fork passages whereby the vertical flaps lock said horizontal flaps from opening.\n ', u'Assignee': u'OLVEY Douglas A., SKETO James L., GUMBERT Sean G., DANKO Joseph J., GABRYS Christopher W., ', u'field_of_invention': u'FI10', u'publication_no': u'26/2015', u'patent_no': u'', u'application_no': u'642/DELNP/2015', u'UCID': u'WVJ4NVVIYzFLcUQvVnJsZGczcVRmSS96Vkh3NWsrS1h3Qk43S2xHczJ2WT0%3D', u'Publication_Type': u'A'}

That is your patent information.
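To pull only a few fields out of each record, a minimal sketch along these lines might help. The key names (`success`, `record`, `title`, `application_no`, `pubDate`, `Assignee`) come from the sample output above. The helper name `summarize_records`, the choice of fields, and the comma-splitting of `Assignee` are illustrative assumptions, and `sample` is a trimmed copy of the record shown, not a live response.

```python
def summarize_records(response):
    """Return a compact list of dicts from a parsed search response.

    `response` is assumed to be the dict returned by r.json(), shaped like
    the sample output: {"success": bool, "record": [{...}, ...]}.
    """
    if not response.get("success"):
        return []
    summaries = []
    for rec in response.get("record", []):
        # In the sample output, Assignee is a comma-separated string with a
        # trailing separator; split it and drop the empty pieces.
        # Assumption: individual names never contain a comma themselves.
        assignees = [a.strip() for a in rec.get("Assignee", "").split(",") if a.strip()]
        summaries.append({
            "title": rec.get("title", ""),
            "application_no": rec.get("application_no", ""),
            "published": rec.get("pubDate", ""),
            "assignees": assignees,
        })
    return summaries


# Trimmed copy of the record shown above, used only for illustration.
sample = {
    "success": True,
    "record": [{
        "title": "CORRUGATED PALLET",
        "application_no": "642/DELNP/2015",
        "pubDate": "2015/06/26",
        "Assignee": "OLVEY Douglas A., SKETO James L., ",
    }],
}

for row in summarize_records(sample):
    print(row["application_no"], row["title"], row["assignees"])
```

With a real response you would pass `r.json()` in place of `sample`.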

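If we choose a few values in the browser, you can see that the entries in fieldvalue[], field[], and operator[] line up. AND is the default, so that is what you see for each option.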
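One way to keep the three arrays aligned is to build them from a single list of criteria. The sketch below is only an illustration: `build_search_payload` is a hypothetical helper, the fixed keys and default dates are copied from the `data` dict earlier in the answer, `"PA"` is the only field code the answer shows, and the second search value is made up.

```python
ALLOWED_OPERATORS = ("AND", "OR", "NOT")


def build_search_payload(criteria, date_from="19120101", date_to="20160906"):
    """Build form data from (field, value) or (field, value, operator) tuples."""
    fields, values, operators = [], [], []
    for criterion in criteria:
        field, value = criterion[0], criterion[1]
        # AND is the default when no operator is given.
        operator = criterion[2].strip().upper() if len(criterion) > 2 else "AND"
        if operator not in ALLOWED_OPERATORS:
            raise ValueError("unsupported operator: {!r}".format(operator))
        fields.append(field)
        values.append(value)
        # The answer's payload pads the operator with spaces, e.g. " AND ".
        operators.append(" {} ".format(operator))
    return {
        "publication_type_published": "on",
        "publication_type_granted": "on",
        "fieldDate": "APD",
        "datefieldfrom": date_from,
        "datefieldto": date_to,
        "operatordate": " AND ",
        "field[]": fields,
        "fieldvalue[]": values,
        "operator[]": operators,
        "page": "1",
        "start": "0",
        "limit": "25",
    }


# "smith*" is an invented value, used only to show a second criterion.
payload = build_search_payload([("PA", "chris*"), ("PA", "smith*", "OR")])
print(payload["field[]"], payload["fieldvalue[]"], payload["operator[]"])
```

Because every criterion contributes exactly one entry to each list, the positions cannot drift apart.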

So figure out the codes, pick what you want, and post it.
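Getting every patent for a company means walking through the result pages. The sketch below shows one possible loop. `iter_all_records` and `fetch_page` are hypothetical names: `fetch_page(page)` stands for any callable that posts the form data with `"page"` set to the given number and returns the parsed JSON. Stopping at the first empty `record` list is an assumption about how the service behaves past the last page, not something the answer confirms, so `max_pages` acts as a safety cap.

```python
def iter_all_records(fetch_page, max_pages=50):
    """Yield records page by page until a page fails or comes back empty."""
    for page in range(1, max_pages + 1):
        response = fetch_page(page)
        records = response.get("record") or []
        # Assumption: an unsuccessful or empty page means there is nothing more.
        if not response.get("success") or not records:
            return
        for record in records:
            yield record


# Offline stand-in for the real request so the sketch can run without network.
def fake_fetch(page):
    pages = {1: [{"title": "A"}, {"title": "B"}], 2: [{"title": "C"}]}
    return {"success": True, "record": pages.get(page, [])}


titles = [rec["title"] for rec in iter_all_records(fake_fetch)]
print(titles)
```

In a real run, `fetch_page` might copy the form data, set its `"page"` entry, and return `s.post(post, data=data).json()` from inside the session shown earlier.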

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/39356677
