Spark SQL: Why are there two jobs for one query?

Asked 2016-06-10 19:37:55

Experiment

I tried the following snippet on Spark 1.6.1.

val soDF = sqlContext.read.parquet("/batchPoC/saleOrder") // This has 45 files

soDF.registerTempTable("so")

sqlContext.sql("select dpHour, count(*) as cnt from so group by dpHour order by cnt").write.parquet("/out/")

The physical plan is:

== Physical Plan ==

Sort [cnt#59L ASC], true, 0

+- ConvertToUnsafe

+- Exchange rangepartitioning(cnt#59L ASC,200), None

+- ConvertToSafe

+- TungstenAggregate(key=[dpHour#38], functions=[(count(1),mode=Final,isDistinct=false)], output=[dpHour#38,cnt#59L])

+- TungstenExchange hashpartitioning(dpHour#38,200), None

+- TungstenAggregate(key=[dpHour#38], functions=[(count(1),mode=Partial,isDistinct=false)], output=[dpHour#38,count#63L])

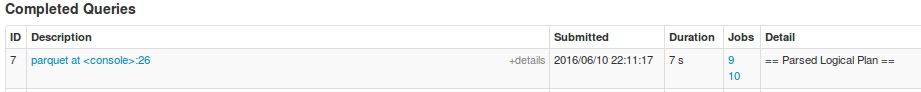

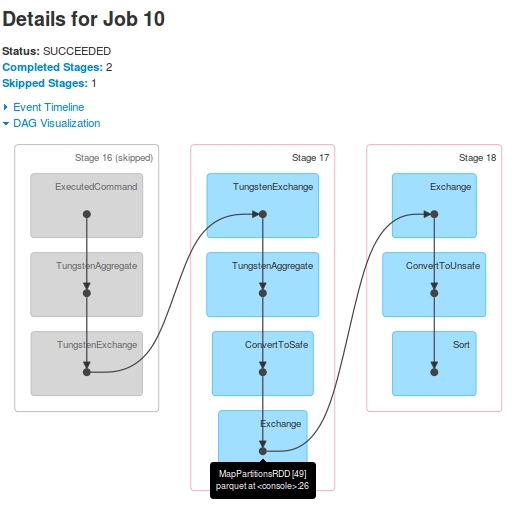

+- Scan ParquetRelation[dpHour#38] InputPaths: hdfs://hdfsNode:8020/batchPoC/saleOrder对于这个查询,我有两个作业:Job 9和Job 10

对于Job 9,DAG是:

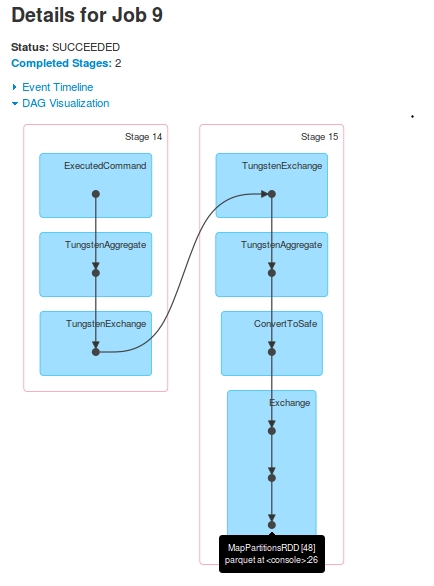

对于Job 10,DAG是:

Observations

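- Apparently, there are two jobs for one query.
- Stage-16 (marked as Stage-14 in Job 9) is skipped in Job 10.
- The last RDD of Stage-15, RDD[48], is the same as the last RDD of Stage-17, RDD[49]. How? I saw in the logs that after Stage-15 executed, RDD[48] was registered as RDD[49].
- Stage-17 shows up in the driver logs but was never executed on the executors. The driver logs show task execution, but when I looked at the YARN container logs, there was no evidence of any task being received from Stage-17.

Logs supporting these observations (driver logs only; I lost the executor logs in a later crash). They show that RDD[49] is registered before Stage-17 starts: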

16/06/10 22:11:22 INFO TaskSetManager: Finished task 196.0 in stage 15.0 (TID 1121) in 21 ms on slave-1 (199/200)

16/06/10 22:11:22 INFO TaskSetManager: Finished task 198.0 in stage 15.0 (TID 1123) in 20 ms on slave-1 (200/200)

16/06/10 22:11:22 INFO YarnScheduler: Removed TaskSet 15.0, whose tasks have all completed, from pool

16/06/10 22:11:22 INFO DAGScheduler: ResultStage 15 (parquet at <console>:26) finished in 0.505 s

16/06/10 22:11:22 INFO DAGScheduler: Job 9 finished: parquet at <console>:26, took 5.054011 s

16/06/10 22:11:22 INFO ParquetRelation: Using default output committer for Parquet: org.apache.parquet.hadoop.ParquetOutputCommitter

16/06/10 22:11:22 INFO FileOutputCommitter: File Output Committer Algorithm version is 1

16/06/10 22:11:22 INFO DefaultWriterContainer: Using user defined output committer class org.apache.parquet.hadoop.ParquetOutputCommitter

16/06/10 22:11:22 INFO FileOutputCommitter: File Output Committer Algorithm version is 1

16/06/10 22:11:22 INFO SparkContext: Starting job: parquet at <console>:26

16/06/10 22:11:22 INFO DAGScheduler: Registering RDD 49 (parquet at <console>:26)

16/06/10 22:11:22 INFO DAGScheduler: Got job 10 (parquet at <console>:26) with 25 output partitions

16/06/10 22:11:22 INFO DAGScheduler: Final stage: ResultStage 18 (parquet at <console>:26)

16/06/10 22:11:22 INFO DAGScheduler: Parents of final stage: List(ShuffleMapStage 17)

16/06/10 22:11:22 INFO DAGScheduler: Missing parents: List(ShuffleMapStage 17)

16/06/10 22:11:22 INFO DAGScheduler: Submitting ShuffleMapStage 17 (MapPartitionsRDD[49] at parquet at <console>:26), which has no missing parents

16/06/10 22:11:22 INFO MemoryStore: Block broadcast_25 stored as values in memory (estimated size 17.4 KB, free 512.3 KB)

16/06/10 22:11:22 INFO MemoryStore: Block broadcast_25_piece0 stored as bytes in memory (estimated size 8.9 KB, free 521.2 KB)

16/06/10 22:11:22 INFO BlockManagerInfo: Added broadcast_25_piece0 in memory on 172.16.20.57:44944 (size: 8.9 KB, free: 517.3 MB)

16/06/10 22:11:22 INFO SparkContext: Created broadcast 25 from broadcast at DAGScheduler.scala:1006

16/06/10 22:11:22 INFO DAGScheduler: Submitting 200 missing tasks from ShuffleMapStage 17 (MapPartitionsRDD[49] at parquet at <console>:26)

16/06/10 22:11:22 INFO YarnScheduler: Adding task set 17.0 with 200 tasks

16/06/10 22:11:23 INFO TaskSetManager: Starting task 0.0 in stage 17.0 (TID 1125, slave-1, partition 0,NODE_LOCAL, 1988 bytes)

16/06/10 22:11:23 INFO TaskSetManager: Starting task 1.0 in stage 17.0 (TID 1126, slave-2, partition 1,NODE_LOCAL, 1988 bytes)

16/06/10 22:11:23 INFO TaskSetManager: Starting task 2.0 in stage 17.0 (TID 1127, slave-1, partition 2,NODE_LOCAL, 1988 bytes)

16/06/10 22:11:23 INFO TaskSetManager: Starting task 3.0 in stage 17.0 (TID 1128, slave-2, partition 3,NODE_LOCAL, 1988 bytes)

16/06/10 22:11:23 INFO TaskSetManager: Starting task 4.0 in stage 17.0 (TID 1129, slave-1, partition 4,NODE_LOCAL, 1988 bytes)

16/06/10 22:11:23 INFO TaskSetManager: Starting task 5.0 in stage 17.0 (TID 1130, slave-2, partition 5,NODE_LOCAL, 1988 bytes)

Questions

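- Why two jobs? What is the intention behind breaking one DAG into two jobs?
- Job 10's DAG looks complete for executing the query. Is Job 9 doing anything specific?
- Why is Stage-17 not skipped? It looks like dummy tasks are created; do they serve any purpose?
- Later, I tried another, simpler query. Unexpectedly, it created 3 jobs:

sqlContext.sql("select dpHour from so").write.parquet("/out2/")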

1 Answer

Stack Overflow user

Accepted answer

Posted on 2016-06-11 18:35:24

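When you use the high-level DataFrame/Dataset APIs, you leave it up to Spark to determine the execution plan, including how the work is chunked into jobs and stages. This depends on many factors, such as execution parallelism and cached/persisted data structures. In future versions of Spark, as the optimizer becomes more sophisticated, you may see even more jobs per query, for example when some data sources are sampled to parameterize cost-based execution optimization.

For example, I have often, but not always, seen writing generate separate jobs from processing that involves shuffles.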
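Sampling is not only a future concern. As a hedged reading of the plan in the question (the answer itself does not state this): the `Exchange rangepartitioning(cnt#59L ASC,200)` step needs range boundaries before it can shuffle, and Spark's `RangePartitioner` obtains them by sampling the keys of its input, which runs as a job of its own. That would be consistent with a first job that ends after the final aggregate and a second job that reuses the earlier shuffle output, then sorts and writes. The toy below is plain Scala with no Spark dependency; `RangeBoundsSketch`, `rangeBounds`, and `partitionOf` are hypothetical names, and the boundary selection is a simplification, not Spark's actual sampling algorithm.

```scala
// Toy model of range partitioning: boundaries must be computed from the data
// (pass 1) before any record can be routed to a partition (pass 2).
// Hypothetical names; this is not Spark's implementation.
object RangeBoundsSketch {

  /** Pass 1: derive at most (numPartitions - 1) ascending, distinct upper bounds. */
  def rangeBounds(keys: Seq[Long], numPartitions: Int): Vector[Long] = {
    val sorted = keys.distinct.sorted.toVector
    if (sorted.isEmpty || numPartitions <= 1) Vector.empty
    else {
      val step = sorted.length.toDouble / numPartitions
      (1 until numPartitions)
        .map(i => sorted(math.min((i * step).toInt, sorted.length - 1)))
        .distinct
        .toVector
    }
  }

  /** Pass 2: a key goes to the first partition whose upper bound is >= the key. */
  def partitionOf(key: Long, bounds: Vector[Long]): Int = {
    val idx = bounds.indexWhere(key <= _)
    if (idx == -1) bounds.length else idx
  }

  def main(args: Array[String]): Unit = {
    // 24 distinct keys (made-up data), 200 requested partitions.
    val keys = (1 to 24).map(_ * 10L)
    val bounds = rangeBounds(keys, 200)
    println(s"bounds = ${bounds.length}, partitions = ${bounds.length + 1}")
  }
}
```

With only 24 distinct keys, the sketch can produce at most 24 bounds and therefore 25 partitions, however many were requested. If the query in the question yields roughly 24 groups (one per hour is a guess about `dpHour`), that would fit the log line "Got job 10 ... with 25 output partitions" despite the requested 200.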

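Bottom line: if you are using the high-level APIs, it rarely pays to dig into the specific chunking unless you have to do extremely detailed optimization on huge data volumes. Job startup costs are extremely low compared to processing and output.

If, on the other hand, you are curious about Spark internals, read the optimizer code and engage on the Spark developer mailing list.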
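Before reading optimizer code, it can help to watch what a query actually submits. The sketch below is meant for a Spark 1.6 `spark-shell`, where `sc` and `sqlContext` are predefined. It assumes the temp table `so` from the question is already registered, and the output path `/out-job-demo/` is a hypothetical placeholder. It prints the plans and records each submitted job with its stage IDs through a `SparkListener`.

```scala
import org.apache.spark.scheduler.{SparkListener, SparkListenerJobStart}
import scala.collection.mutable.ArrayBuffer

// Collect (jobId, stageIds) for every job submitted from now on.
val seenJobs = ArrayBuffer.empty[(Int, Seq[Int])]

sc.addSparkListener(new SparkListener {
  override def onJobStart(jobStart: SparkListenerJobStart): Unit =
    seenJobs.synchronized {
      seenJobs += ((jobStart.jobId, jobStart.stageIds))
    }
})

val query = sqlContext.sql(
  "select dpHour, count(*) as cnt from so group by dpHour order by cnt")

// Print the logical and physical plans for the query.
query.explain(true)

// Hypothetical output path; it must not already exist.
query.write.parquet("/out-job-demo/")

// Listener events are delivered asynchronously, so allow them a moment to arrive.
Thread.sleep(1000)
seenJobs.synchronized {
  seenJobs.foreach { case (jobId, stageIds) =>
    println(s"job $jobId -> stages ${stageIds.mkString(", ")}")
  }
}
```

Comparing the printed job list with the Jobs tab of the Spark UI shows which stages belong to which job, and which stage IDs appear in a later job only to be skipped.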

Original page content provided by Stack Overflow.

Original link: https://stackoverflow.com/questions/37755963
