高偏置卷积神经网络不改进的多层/多滤波器

高偏置卷积神经网络不改进的多层/多滤波器

提问于 2016-05-17 06:47:11

我正在使用TensorFlow训练一个卷积神经网络,将建筑物的图像分类为5级。

Training dataset:

Class 1 - 3000 images

Class 2 - 3000 images

Class 3 - 3000 images

Class 4 - 3000 images

Class 5 - 3000 images我从一个非常简单的架构开始:

Input image - 256 x 256 x 3

Convolutional layer 1 - 128 x 128 x 16 (3x3 filters, 16 filters, stride=2)

Convolutional layer 2 - 64 x 64 x 32 (3x3 filters, 32 filters, stride=2)

Convolutional layer 3 - 32 x 32 x 64 (3x3 filters, 64 filters, stride=2)

Max-pooling layer - 16 x 16 x 64 (2x2 pooling)

Fully-connected layer 1 - 1 x 1024

Fully-connected layer 2 - 1 x 64

Output - 1 x 5我的网络的其他细节:

Cost-function: tf.softmax_cross_entropy_with_logits

Optimizer: Adam optimizer (Learning rate=0.01, Epsilon=0.1)

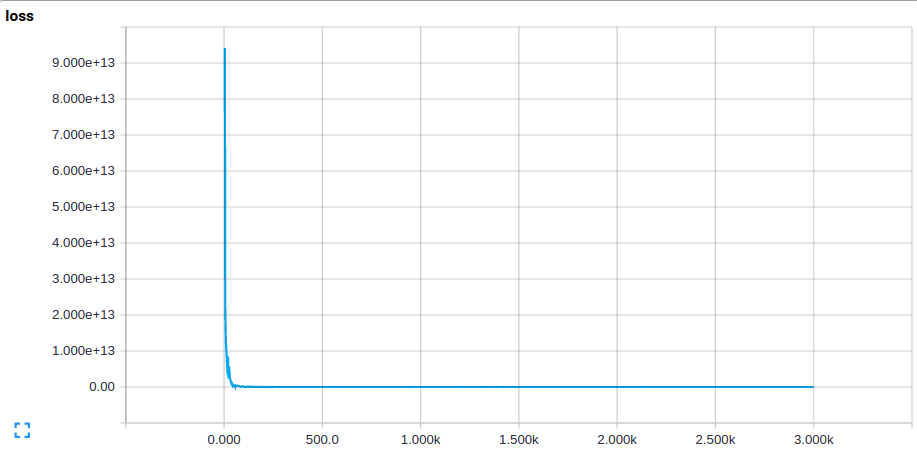

Mini-batch size: 5我的成本函数的起始值很高,约为10^10,然后迅速下降到大约1.6 (经过几百次迭代后),并在此值下饱和(无论我训练网络的时间长短)。测试集上的成本函数值是相同的.这个值相当于预测每个类的概率大致相等,并对所有图像进行相同的预测。我的预测是这样的:

[0.191877 0.203651 0.194455 0.200043 0.203081]

在训练集和测试集上的一个高误差表明高偏差,即欠拟合的。我通过添加层和增加过滤器的数量增加了网络的复杂性,我的最新网络是这样的(层数和过滤器大小类似于AlexNet):

Input image - 256 x 256 x 3

Convolutional layer 1 - 64 x 64 x 64 (11x11 filters, 64 filters, stride=4)

Convolutional layer 2 - 32 x 32 x 128 (5x5 filters, 128 filters, stride=2)

Convolutional layer 3 - 16 x 16 x 256 (3x3 filters, 256 filters, stride=2)

Convolutional layer 4 - 8 x 8 x 512 (3x3 filters, 512 filters, stride=2)

Convolutional layer 5 - 8 x 8 x 256 (3x3 filters, 256 filters, stride=1)

Fully-connected layer 1 - 1 x 4096

Fully-connected layer 2 - 1 x 4096

Fully-connected layer 3 - 1 x 4096

Dropout layer (0.5 probability)

Output - 1 x 5然而,我的成本函数是,仍然是饱和在大约1.6,并作出同样的预测.

我的问题是:

- 我应该尝试修复高偏置网络的其他解决方案吗?我已经(现在仍在)尝试不同的学习速度和权值的初始化--但都没有结果。

- 是因为我的训练场太小了吗?一个小的训练集合不是会导致一个高方差的网络吗?它适应于训练图像,训练误差小,但测试误差大。

- 有没有可能这些图像中没有明显的特征?然而,考虑到其他CNN可以区分不同品种的狗,这似乎是不可能的。

- 作为一个健全的检查,我正在训练我的网络在一个非常小的数据集(50张图像),我是期待它过度适合。然而,看起来不像,它看起来也会出现同样的问题。

代码:

import tensorflow as tf

sess = tf.Session()

BATCH_SIZE = 50

MAX_CAPACITY = 300

TRAINING_STEPS = 3001

# To get the list of image filenames and labels from the text file

def read_labeled_image_list(list_filename):

f = open(list_filename,'r')

filenames = []

labels = []

for line in f:

filename, label = line[:-1].split(' ')

filenames.append(filename)

labels.append(int(label))

return filenames,labels

# To get images and labels in batches

def add_to_batch(image,label):

image_batch,label_batch = tf.train.batch([image,label],batch_size=BATCH_SIZE,num_threads=1,capacity=MAX_CAPACITY)

return image_batch, tf.reshape(label_batch,[BATCH_SIZE])

# To decode a single image and its label

def read_image_with_label(input_queue):

""" Image """

# Read

file_contents = tf.read_file(input_queue[0])

example = tf.image.decode_png(file_contents)

# Reshape

my_image = tf.cast(example,tf.float32)

my_image = tf.reshape(my_image,[256,256,3])

# Normalisation

my_image = my_image/255

my_mean = tf.reduce_mean(my_image)

# Centralisation

my_image = my_image - my_mean

""" Label """

label = input_queue[1]-1

return add_to_batch(my_image,label)

# Network

def inference(x):

""" Layer 1: Convolutional """

# Initialise variables

W_conv1 = tf.Variable(tf.truncated_normal([11,11,3,64],stddev=0.0001),name='W_conv1')

b_conv1 = tf.Variable(tf.constant(0.1,shape=[64]),name='b_conv1')

# Convolutional layer

h_conv1 = tf.nn.relu(tf.nn.conv2d(x,W_conv1,strides=[1,4,4,1],padding='SAME') + b_conv1)

""" Layer 2: Convolutional """

# Initialise variables

W_conv2 = tf.Variable(tf.truncated_normal([5,5,64,128],stddev=0.0001),name='W_conv2')

b_conv2 = tf.Variable(tf.constant(0.1,shape=[128]),name='b_conv2')

# Convolutional layer

h_conv2 = tf.nn.relu(tf.nn.conv2d(h_conv1,W_conv2,strides=[1,2,2,1],padding='SAME') + b_conv2)

""" Layer 3: Convolutional """

# Initialise variables

W_conv3 = tf.Variable(tf.truncated_normal([3,3,128,256],stddev=0.0001),name='W_conv3')

b_conv3 = tf.Variable(tf.constant(0.1,shape=[256]),name='b_conv3')

# Convolutional layer

h_conv3 = tf.nn.relu(tf.nn.conv2d(h_conv2,W_conv3,strides=[1,2,2,1],padding='SAME') + b_conv3)

""" Layer 4: Convolutional """

# Initialise variables

W_conv4 = tf.Variable(tf.truncated_normal([3,3,256,512],stddev=0.0001),name='W_conv4')

b_conv4 = tf.Variable(tf.constant(0.1,shape=[512]),name='b_conv4')

# Convolutional layer

h_conv4 = tf.nn.relu(tf.nn.conv2d(h_conv3,W_conv4,strides=[1,2,2,1],padding='SAME') + b_conv4)

""" Layer 5: Convolutional """

# Initialise variables

W_conv5 = tf.Variable(tf.truncated_normal([3,3,512,256],stddev=0.0001),name='W_conv5')

b_conv5 = tf.Variable(tf.constant(0.1,shape=[256]),name='b_conv5')

# Convolutional layer

h_conv5 = tf.nn.relu(tf.nn.conv2d(h_conv4,W_conv5,strides=[1,1,1,1],padding='SAME') + b_conv5)

""" Layer X: Pooling

# Pooling layer

h_pool1 = tf.nn.max_pool(h_conv3,ksize=[1,2,2,1],strides=[1,2,2,1],padding='SAME')"""

""" Layer 6: Fully-connected """

# Initialise variables

W_fc1 = tf.Variable(tf.truncated_normal([8*8*256,4096],stddev=0.0001),name='W_fc1')

b_fc1 = tf.Variable(tf.constant(0.1,shape=[4096]),name='b_fc1')

# Multiplication layer

h_conv5_reshaped = tf.reshape(h_conv5,[-1,8*8*256])

h_fc1 = tf.nn.relu(tf.matmul(h_conv5_reshaped, W_fc1) + b_fc1)

""" Layer 7: Fully-connected """

# Initialise variables

W_fc2 = tf.Variable(tf.truncated_normal([4096,4096],stddev=0.0001),name='W_fc2')

b_fc2 = tf.Variable(tf.constant(0.1,shape=[4096]),name='b_fc2')

# Multiplication layer

h_fc2 = tf.nn.relu(tf.matmul(h_fc1, W_fc2) + b_fc2)

""" Layer 8: Fully-connected """

# Initialise variables

W_fc3 = tf.Variable(tf.truncated_normal([4096,4096],stddev=0.0001),name='W_fc3')

b_fc3 = tf.Variable(tf.constant(0.1,shape=[4096]),name='b_fc3')

# Multiplication layer

h_fc3 = tf.nn.relu(tf.matmul(h_fc2, W_fc3) + b_fc3)

""" Layer 9: Dropout layer """

# Keep/drop nodes with 50% chance

h_dropout = tf.nn.dropout(h_fc3,0.5)

""" Readout layer: Softmax """

# Initialise variables

W_softmax = tf.Variable(tf.truncated_normal([4096,5],stddev=0.0001),name='W_softmax')

b_softmax = tf.Variable(tf.constant(0.1,shape=[5]),name='b_softmax')

# Multiplication layer

y_conv = tf.nn.relu(tf.matmul(h_dropout,W_softmax) + b_softmax)

""" Summaries """

tf.histogram_summary('W_conv1',W_conv1)

tf.histogram_summary('W_conv2',W_conv2)

tf.histogram_summary('W_conv3',W_conv3)

tf.histogram_summary('W_conv4',W_conv4)

tf.histogram_summary('W_conv5',W_conv5)

tf.histogram_summary('W_fc1',W_fc1)

tf.histogram_summary('W_fc2',W_fc2)

tf.histogram_summary('W_fc3',W_fc3)

tf.histogram_summary('W_softmax',W_softmax)

tf.histogram_summary('b_conv1',b_conv1)

tf.histogram_summary('b_conv2',b_conv2)

tf.histogram_summary('b_conv3',b_conv3)

tf.histogram_summary('b_conv4',b_conv4)

tf.histogram_summary('b_conv5',b_conv5)

tf.histogram_summary('b_fc1',b_fc1)

tf.histogram_summary('b_fc2',b_fc2)

tf.histogram_summary('b_fc3',b_fc3)

tf.histogram_summary('b_softmax',b_softmax)

return y_conv

# Training

def cost_function(y_label,y_conv):

# Reshape y_label to one-hot vectors

sparse_labels = tf.reshape(y_label,[BATCH_SIZE,1])

indices = tf.reshape(tf.range(BATCH_SIZE),[BATCH_SIZE,1])

concated = tf.concat(1,[indices,sparse_labels])

dense_labels = tf.sparse_to_dense(concated,[BATCH_SIZE,5],1.0,0.0)

# Cross-entropy

cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(y_conv,dense_labels))

# Accuracy

y_prob = tf.nn.softmax(y_conv)

correct_prediction = tf.equal(tf.argmax(dense_labels,1), tf.argmax(y_prob,1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Add to summary

tf.scalar_summary('loss',cost)

tf.scalar_summary('accuracy',accuracy)

return cost, accuracy

def main ():

# To get list of filenames and labels

filename = '/labels/filenames_with_labels_server.txt'

image_list, label_list = read_labeled_image_list(filename)

images = tf.convert_to_tensor(image_list, dtype=tf.string)

labels = tf.convert_to_tensor(label_list,dtype=tf.int32)

# To create the queue

input_queue = tf.train.slice_input_producer([images,labels],shuffle=True,capacity=MAX_CAPACITY)

# To train network

image,label = read_image_with_label(input_queue)

y_conv = inference(image)

loss,acc = cost_function(label,y_conv)

train_step = tf.train.AdamOptimizer(learning_rate=0.001,epsilon=0.1).minimize(loss)

# To write and merge summaries

writer = tf.train.SummaryWriter('/SummaryLogs/log', sess.graph)

merged = tf.merge_all_summaries()

# To save variables

saver = tf.train.Saver()

""" Run session """

sess.run(tf.initialize_all_variables())

tf.train.start_queue_runners(sess=sess)

print('Running...')

for step in range(1,TRAINING_STEPS):

loss_val,acc_val,_,summary_str = sess.run([loss,acc,train_step,merged])

writer.add_summary(summary_str,step)

print "Step %d, Loss %g, Accuracy %g"%(step,loss_val,acc_val)

if(step == 1):

save_path = saver.save(sess,'/SavedVariables/model',global_step=step)

print "Initial model saved: %s"%save_path

save_path = saver.save(sess,'/SavedVariables/model-final')

print "Final model saved: %s"%save_path

""" Close session """

print('Finished')

sess.close()

if __name__ == '__main__':

main()编辑:

在做了一些修改后,我设法让网络适应于一个由50张图片组成的小训练集。

更改:

- 使用Xavier初始化初始化权值

- 将偏差初始化为零

- 不对图像进行规范化,即不除以255个

- 通过减去平均像素值(在整个训练集上计算)来集中图像。在这种情况下,平均数是114。

在此鼓舞下,我开始在整个训练集上训练我的网络,但又遇到了同样的问题。这些是产出:

Step 1, Loss 1.37815, Accuracy 0.4

y_conv (before softmax):

[[ 0.30913264 0. 1.20176554 0. 0. ]

[ 0. 0. 1.23200822 0. 0. ]

[ 0. 0. 0. 0. 0. ]

[ 0. 0. 1.65852785 0.01910716 0. ]

[ 0. 0. 0.94612855 0. 0.10457891]]

y_prob (after softmax):

[[ 0.1771856 0.130069 0.43260741 0.130069 0.130069 ]

[ 0.13462381 0.13462381 0.46150482 0.13462381 0.13462381]

[ 0.2 0.2 0.2 0.2 0.2 ]

[ 0.1078648 0.1078648 0.56646001 0.1099456 0.1078648 ]

[ 0.14956713 0.14956713 0.38524282 0.14956713 0.16605586]]很快就变成:

Step 39, Loss 1.60944, Accuracy 0.2

y_conv (before softmax):

[[ 0. 0. 0. 0. 0.]

[ 0. 0. 0. 0. 0.]

[ 0. 0. 0. 0. 0.]

[ 0. 0. 0. 0. 0.]

[ 0. 0. 0. 0. 0.]]

y_prob (after softmax):

[[ 0.2 0.2 0.2 0.2 0.2]

[ 0.2 0.2 0.2 0.2 0.2]

[ 0.2 0.2 0.2 0.2 0.2]

[ 0.2 0.2 0.2 0.2 0.2]

[ 0.2 0.2 0.2 0.2 0.2]]显然,所有零的y_conv不是一个好兆头。从直方图上看,初始化后的权重变量不会改变,只有偏差变量会发生变化。

回答 1

Stack Overflow用户

发布于 2016-05-19 01:23:07

这与其说是一个“完整的”答案,不如说是一个,如果您面临类似的问题,可以尝试“答案”。

我设法让我的网络通过以下更改开始学习一些东西:

- Xavier权值初始化

- 零偏差初始化

- 不将图像归一化为0,1

- 从图像中减去平均像素值(在整个训练集上计算)

- 在计算ReLU的最后一层中没有

y_conv

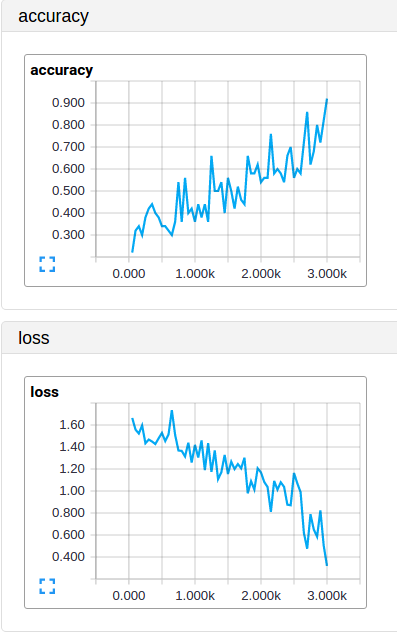

经过3000次的训练,训练的大小为50幅(约10个历元):

在测试集上,它的表现不太好,因为我的训练集非常小,而且我的网络过于合适;这是预料中的,所以我对此并不感到惊讶。至少现在我知道,我必须专注于获得更大的训练集,增加更多的正规化或简化我的网络。

页面原文内容由Stack Overflow提供。腾讯云小微IT领域专用引擎提供翻译支持

原文链接:

https://stackoverflow.com/questions/37268974

复制相关文章

相似问题