OpenCV 3.0: Tracking rectangle problem with SURF detection and the FlannBased matcher

Asked on 2016-01-12 15:38:46

I am trying to implement SURF detection and tracking using the FlannBased matcher. The detection part of my code works, but the tracking does not.

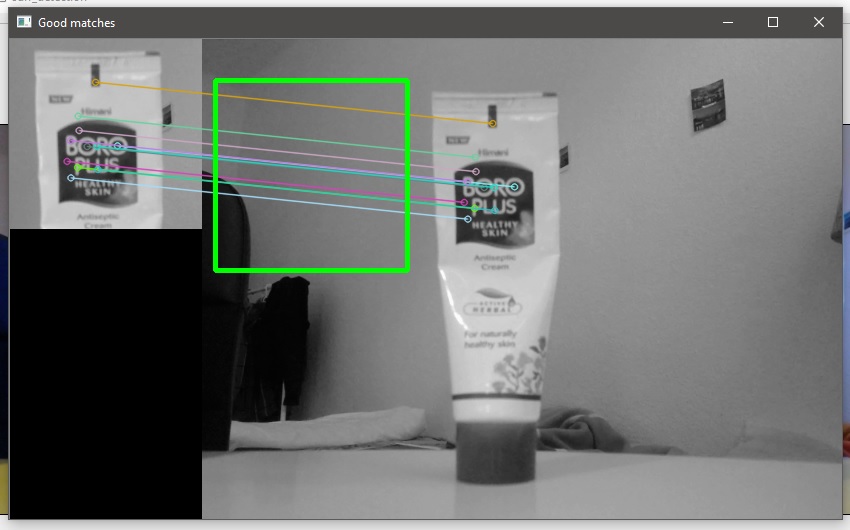

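As you can see in the image above, the tracking rectangle is not placed on the correct object. The rectangle also stays still even when I move the camera. I don't know where I went wrong.

Here is the code I have implemented: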

void surf_detection::surf_detect(){
    UMat img_extractor, snap_extractor;

    if (crop_image_.empty())
        cv_snapshot.copyTo(dst);
    else
        crop_image_.copyTo(dst);
    //dst = QImagetocv(crop_image_);
    imshow("dst", dst);

    Ptr<SURF> detector = SURF::create(minHessian);
    Ptr<DescriptorExtractor> extractor = SURF::create(minHessian);

    cvtColor(dst, src, CV_BGR2GRAY);
    cvtColor(frame, gray_image, CV_BGR2GRAY);

    detector->detect(src, keypoints_1);
    //printf("Object: %d keypoints detected\n", (int)keypoints_1.size());
    detector->detect(gray_image, keypoints_2);
    //printf("Object: %d keypoints detected\n", (int)keypoints_1.size());

    extractor->compute(src, keypoints_1, img_extractor);
    // printf("Object: %d descriptors extracted\n", img_extractor.rows);
    extractor->compute(gray_image, keypoints_2, snap_extractor);

    std::vector<Point2f> scene_corners(4);
    std::vector<Point2f> obj_corners(4);
    obj_corners[0] = (cvPoint(0, 0));
    obj_corners[1] = (cvPoint(src.cols, 0));
    obj_corners[2] = (cvPoint(src.cols, src.rows));
    obj_corners[3] = (cvPoint(0, src.rows));

    vector<DMatch> matches;
    matcher.match(img_extractor, snap_extractor, matches);

    double max_dist = 0; double min_dist = 100;

    //-- Quick calculation of max and min distances between keypoints
    for (int i = 0; i < img_extractor.rows; i++)
    {
        double dist = matches[i].distance;
        if (dist < min_dist) min_dist = dist;
        if (dist > max_dist) max_dist = dist;
    }
    //printf("-- Max dist : %f \n", max_dist);
    //printf("-- Min dist : %f \n", min_dist);

    vector< DMatch > good_matches;
    for (int i = 0; i < img_extractor.rows; i++)
    {
        if (matches[i].distance <= max(2 * min_dist, 0.02))
        {
            good_matches.push_back(matches[i]);
        }
    }

    UMat img_matches;
    drawMatches(src, keypoints_1, gray_image, keypoints_2,
        good_matches, img_matches, Scalar::all(-1), Scalar::all(-1),
        vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);

    if (good_matches.size() >= 4){
        for (int i = 0; i<good_matches.size(); i++){
            //get the keypoints from good matches
            obj.push_back(keypoints_1[good_matches[i].queryIdx].pt);
            scene.push_back(keypoints_2[good_matches[i].trainIdx].pt);
        }
    }

    H = findHomography(obj, scene, CV_RANSAC);
    perspectiveTransform(obj_corners, scene_corners, H);

    line(img_matches, scene_corners[0], scene_corners[1], Scalar(0, 255, 0), 4);
    line(img_matches, scene_corners[1], scene_corners[2], Scalar(0, 255, 0), 4);
    line(img_matches, scene_corners[2], scene_corners[3], Scalar(0, 255, 0), 4);
    line(img_matches, scene_corners[3], scene_corners[0], Scalar(0, 255, 0), 4);

    imshow("Good matches", img_matches);
}

1 Answer

Stack Overflow user

Accepted answer

Answered on 2016-01-12 16:28:50

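Your matches are correct; you are simply drawing the result in the wrong place. The corners in scene_corners are expressed in the gray_image coordinate system, but you draw them in the img_matches coordinate system. drawMatches places src on the left of img_matches and gray_image to its right, so every gray_image point appears shifted right by src.cols pixels in img_matches.

So, basically, you need to translate them by the width of src: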

line(img_matches, scene_corners[0] + Point2f(src.cols,0), scene_corners[1] + Point2f(src.cols,0), Scalar(0, 255, 0), 4);
line(img_matches, scene_corners[1] + Point2f(src.cols,0), scene_corners[2] + Point2f(src.cols,0), Scalar(0, 255, 0), 4);
line(img_matches, scene_corners[2] + Point2f(src.cols,0), scene_corners[3] + Point2f(src.cols,0), Scalar(0, 255, 0), 4);
line(img_matches, scene_corners[3] + Point2f(src.cols,0), scene_corners[0] + Point2f(src.cols,0), Scalar(0, 255, 0), 4);

See also this related answer.
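The coordinate shift in the answer can also be written once as a small helper instead of being repeated in every line call. The following is only a minimal sketch: Point2 is a hypothetical stand-in for cv::Point2f so that the snippet compiles without OpenCV, and toCanvasCoords and objectWidth are illustrative names that do not appear in the original code (objectWidth plays the role of src.cols).

```cpp
#include <array>
#include <cassert>

// Hypothetical stand-in for cv::Point2f, so the sketch builds without OpenCV.
struct Point2 {
    float x;
    float y;
};

// Move four corners from scene-image coordinates into the coordinates of a
// side-by-side canvas whose left part is the object image.
// objectWidth corresponds to src.cols in the question (an assumed mapping).
std::array<Point2, 4> toCanvasCoords(const std::array<Point2, 4>& sceneCorners,
                                     int objectWidth) {
    assert(objectWidth >= 0);
    std::array<Point2, 4> shifted = sceneCorners;
    for (Point2& p : shifted) {
        // Only x changes: the scene image starts objectWidth pixels to the right.
        p.x += static_cast<float>(objectWidth);
    }
    return shifted;
}
```

With OpenCV types, the same idea would be to shift scene_corners once and then draw the four edges in a loop over i, connecting corner i to corner (i + 1) % 4, which keeps the offset in a single place.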

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/34747804