How to find the shortest dependency path between two words in Python?

I am trying to find the dependency path between two words in Python, given a dependency tree.

For the sentence:

Robots in popular culture are there to remind us of the awesomeness of unbound human agency.

I used practnlptools (https://github.com/biplab-iitb/practNLPTools) to get the dependency parse, shown below:

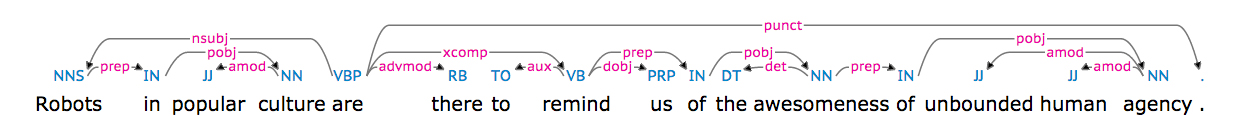

```
nsubj(are-5, Robots-1)
xsubj(remind-8, Robots-1)
amod(culture-4, popular-3)
prep_in(Robots-1, culture-4)
root(ROOT-0, are-5)
advmod(are-5, there-6)
aux(remind-8, to-7)
xcomp(are-5, remind-8)
dobj(remind-8, us-9)
det(awesomeness-12, the-11)
prep_of(remind-8, awesomeness-12)
amod(agency-16, unbound-14)
amod(agency-16, human-15)
prep_of(awesomeness-12, agency-16)
```

The parse can also be visualized (picture taken from https://demos.explosion.ai/displacy/).

The path length between "Robots" and "are" is 1, and the path length between "Robots" and "awesomeness" would be 4.

My question is: given the dependency parse above, how can I get the dependency path, or the dependency path length, between two words?

From what I have found so far, would nltk's ParentedTree help?

Thanks!

3 Answers

Stack Overflow user

Posted on 2015-10-01 19:13:07

Your problem can easily be seen as a graph problem: we have to find the shortest path between two nodes.
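As a minimal sketch of what such a shortest-path query computes, the breadth-first search below measures how many edges separate a source word from every other word in an undirected graph built from the (governor, dependent) pairs of the question's parse. It uses only the standard library; the function name `bfs_distances` is illustrative, and networkx's `shortest_path_length` (used in this answer) does the same work for you.

```python
from collections import deque

# (governor, dependent) pairs taken from the question's dependency parse.
edges = [('are-5', 'Robots-1'), ('remind-8', 'Robots-1'), ('culture-4', 'popular-3'),
         ('Robots-1', 'culture-4'), ('ROOT-0', 'are-5'), ('are-5', 'there-6'),
         ('remind-8', 'to-7'), ('are-5', 'remind-8'), ('remind-8', 'us-9'),
         ('awesomeness-12', 'the-11'), ('remind-8', 'awesomeness-12'),
         ('agency-16', 'unbound-14'), ('agency-16', 'human-15'),
         ('awesomeness-12', 'agency-16')]


def bfs_distances(edges, source):
    """Return {node: number of edges on a shortest path from source}."""
    # Undirected adjacency: a dependency link can be walked in either direction.
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)

    # BFS reaches nodes in order of increasing distance from the source.
    distances = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbour in adjacency.get(node, ()):
            if neighbour not in distances:
                distances[neighbour] = distances[node] + 1
                queue.append(neighbour)
    return distances


distances = bfs_distances(edges, 'Robots-1')
print(distances['are-5'], distances['awesomeness-12'])  # 1 2
```

Because the graph is unweighted, the first time BFS reaches a node is along a shortest path, which is why a single pass gives every distance.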

To turn the dependency parse into a graph, we first have to deal with the fact that it comes as a string. You want to go from this:

```python
'nsubj(are-5, Robots-1)\nxsubj(remind-8, Robots-1)\namod(culture-4, popular-3)\nprep_in(Robots-1, culture-4)\nroot(ROOT-0, are-5)\nadvmod(are-5, there-6)\naux(remind-8, to-7)\nxcomp(are-5, remind-8)\ndobj(remind-8, us-9)\ndet(awesomeness-12, the-11)\nprep_of(remind-8, awesomeness-12)\namod(agency-16, unbound-14)\namod(agency-16, human-15)\nprep_of(awesomeness-12, agency-16)'
```

to this:

```python
[('are-5', 'Robots-1'), ('remind-8', 'Robots-1'), ('culture-4', 'popular-3'), ('Robots-1', 'culture-4'), ('ROOT-0', 'are-5'), ('are-5', 'there-6'), ('remind-8', 'to-7'), ('are-5', 'remind-8'), ('remind-8', 'us-9'), ('awesomeness-12', 'the-11'), ('remind-8', 'awesomeness-12'), ('agency-16', 'unbound-14'), ('agency-16', 'human-15'), ('awesomeness-12', 'agency-16')]
```

This way, you can feed the list of tuples to the graph constructor of the networkx module, which builds the graph for you and provides a neat method that returns the length of the shortest path between two given nodes.
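For readers without practnlptools, here is a minimal sketch of this string-to-tuples conversion using only `re`, applied to the parse string from the question. It also keeps the relation name, which is handy if you later want to filter dependencies. The helper name `parse_dependencies` is hypothetical, and the pattern assumes every line has the form `relation(governor, dependent)`.

```python
import re

# The dependency parse from the question, as one newline-separated string.
dep_parse = '\n'.join([
    'nsubj(are-5, Robots-1)',
    'xsubj(remind-8, Robots-1)',
    'amod(culture-4, popular-3)',
    'prep_in(Robots-1, culture-4)',
    'root(ROOT-0, are-5)',
    'advmod(are-5, there-6)',
    'aux(remind-8, to-7)',
    'xcomp(are-5, remind-8)',
    'dobj(remind-8, us-9)',
    'det(awesomeness-12, the-11)',
    'prep_of(remind-8, awesomeness-12)',
    'amod(agency-16, unbound-14)',
    'amod(agency-16, human-15)',
    'prep_of(awesomeness-12, agency-16)',
])

# relation(governor, dependent): capture the relation name and both arguments.
PATTERN = re.compile(r'^(\S+?)\((.+?), (.+?)\)$')


def parse_dependencies(text):
    """Return (relation, governor, dependent) triples, skipping blank or malformed lines."""
    triples = []
    for line in text.splitlines():
        m = PATTERN.match(line.strip())
        if m:
            triples.append(m.groups())
    return triples


triples = parse_dependencies(dep_parse)
# Drop the relation name to get the (governor, dependent) edge list shown above.
edges = [(governor, dependent) for _, governor, dependent in triples]
print(edges[:2])  # [('are-5', 'Robots-1'), ('remind-8', 'Robots-1')]
```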

Necessary imports

```python
import re

import networkx as nx
from practnlptools.tools import Annotator
```

How to get the string in the desired list-of-tuples format

```python
annotator = Annotator()
text = """Robots in popular culture are there to remind us of the awesomeness of unbound human agency."""
dep_parse = annotator.getAnnotations(text, dep_parse=True)['dep_parse']

dp_list = dep_parse.split('\n')
# relation(governor, dependent): capture the governor and the dependent
pattern = re.compile(r'.+?\((.+?), (.+?)\)')
edges = []
for dep in dp_list:
    m = pattern.search(dep)
    if m:  # skip empty or malformed lines instead of crashing on None
        edges.append((m.group(1), m.group(2)))
```

How to build the graph

```python
graph = nx.Graph(edges)  # Well that was easy
```

How to compute the shortest path length

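```python
print(nx.shortest_path_length(graph, source='Robots-1', target='awesomeness-12'))
```

This script reveals that, for this dependency parse, the shortest path is actually of length 2, because you can get from Robots-1 to awesomeness-12 by going through remind-8: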

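1. xsubj(remind-8, Robots-1)
2. prep_of(remind-8, awesomeness-12)

If you don't like this result, you might want to filter out some dependencies; in this case, don't allow the xsubj dependency to be added to the graph.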
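A minimal sketch of that filtering idea, assuming the parse has already been split into (relation, governor, dependent) triples: dependencies whose relation appears in `excluded` are never added to the graph, and a breadth-first search returns the nodes on a shortest path. The function name `shortest_dependency_path` and its `excluded` parameter are hypothetical; with networkx you would simply leave those edges out of the list passed to `nx.Graph`.

```python
from collections import deque

# (relation, governor, dependent) triples copied from the question's parse.
TRIPLES = [
    ('nsubj', 'are-5', 'Robots-1'),
    ('xsubj', 'remind-8', 'Robots-1'),
    ('amod', 'culture-4', 'popular-3'),
    ('prep_in', 'Robots-1', 'culture-4'),
    ('root', 'ROOT-0', 'are-5'),
    ('advmod', 'are-5', 'there-6'),
    ('aux', 'remind-8', 'to-7'),
    ('xcomp', 'are-5', 'remind-8'),
    ('dobj', 'remind-8', 'us-9'),
    ('det', 'awesomeness-12', 'the-11'),
    ('prep_of', 'remind-8', 'awesomeness-12'),
    ('amod', 'agency-16', 'unbound-14'),
    ('amod', 'agency-16', 'human-15'),
    ('prep_of', 'awesomeness-12', 'agency-16'),
]


def shortest_dependency_path(triples, source, target, excluded=()):
    """BFS over the undirected dependency graph, ignoring relations listed in `excluded`.

    Returns the list of nodes on a shortest path, or None if there is none.
    """
    adjacency = {}
    for relation, governor, dependent in triples:
        if relation in excluded:
            continue  # this dependency never enters the graph
        adjacency.setdefault(governor, set()).add(dependent)
        adjacency.setdefault(dependent, set()).add(governor)

    parents = {source: None}  # doubles as the visited set
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == target:
            # Follow parent links back to the source, then reverse.
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for neighbour in adjacency.get(node, ()):
            if neighbour not in parents:
                parents[neighbour] = node
                queue.append(neighbour)
    return None


print(shortest_dependency_path(TRIPLES, 'Robots-1', 'awesomeness-12'))
# ['Robots-1', 'remind-8', 'awesomeness-12']
print(shortest_dependency_path(TRIPLES, 'Robots-1', 'awesomeness-12', excluded={'xsubj'}))
# ['Robots-1', 'are-5', 'remind-8', 'awesomeness-12']
```

The path length is `len(path) - 1`, so excluding `xsubj` turns the 2-edge path through remind-8 into a 3-edge path through are-5.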

Stack Overflow user

Posted on 2017-01-23 23:56:50

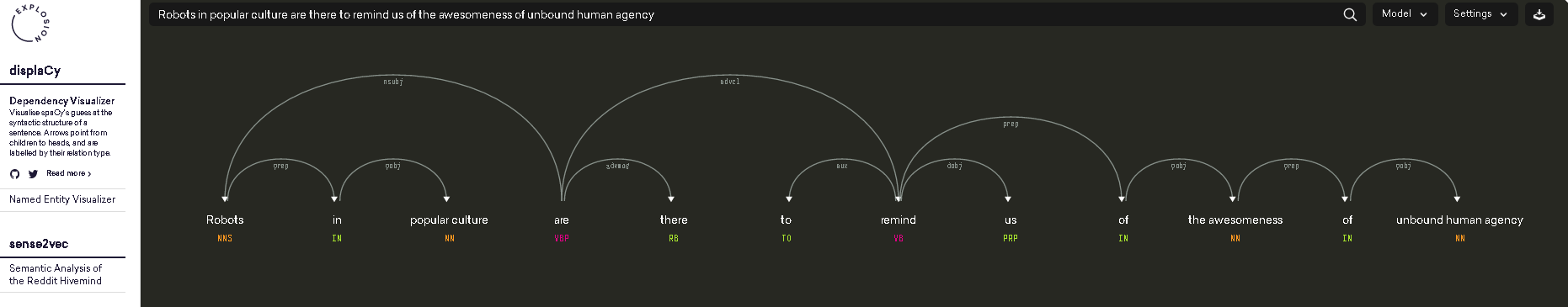

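Hugo Mailhot's answer is great. I'll write something similar for spaCy users who want to find the shortest dependency path between two words (Hugo Mailhot's answer relies on practNLPTools).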

Sentence:

Robots in popular culture are there to remind us of the awesomeness of unbound human agency.

Here is the code to find the shortest dependency path between two words:

```python
import networkx as nx
import spacy

# The original (spaCy 1.x) code used spacy.load('en') and nlp(..., parse=True);
# current spaCy loads a named model and parses by default. Paths may differ by model version.
nlp = spacy.load('en_core_web_sm')
# https://spacy.io/docs/usage/processing-text
document = nlp(u'Robots in popular culture are there to remind us of the awesomeness of unbound human agency.')
print('document: {0}'.format(document))

# Load spacy's dependency tree into a networkx graph
edges = []
for token in document:
    # FYI https://spacy.io/docs/api/token
    for child in token.children:
        # Node names are '<lowercased text>-<token index>', e.g. 'robots-0'
        edges.append(('{0}-{1}'.format(token.lower_, token.i),
                      '{0}-{1}'.format(child.lower_, child.i)))

graph = nx.Graph(edges)
# https://networkx.github.io/documentation/networkx-1.10/reference/algorithms.shortest_paths.html
print(nx.shortest_path_length(graph, source='robots-0', target='awesomeness-11'))
print(nx.shortest_path(graph, source='robots-0', target='awesomeness-11'))
print(nx.shortest_path(graph, source='robots-0', target='agency-15'))
```

Output:

4

['robots-0', 'are-4', 'remind-7', 'of-9', 'awesomeness-11']

['robots-0', 'are-4', 'remind-7', 'of-9', 'awesomeness-11', 'of-12', 'agency-15']安装spacy和networkx:

sudo pip install networkx

sudo pip install spacy

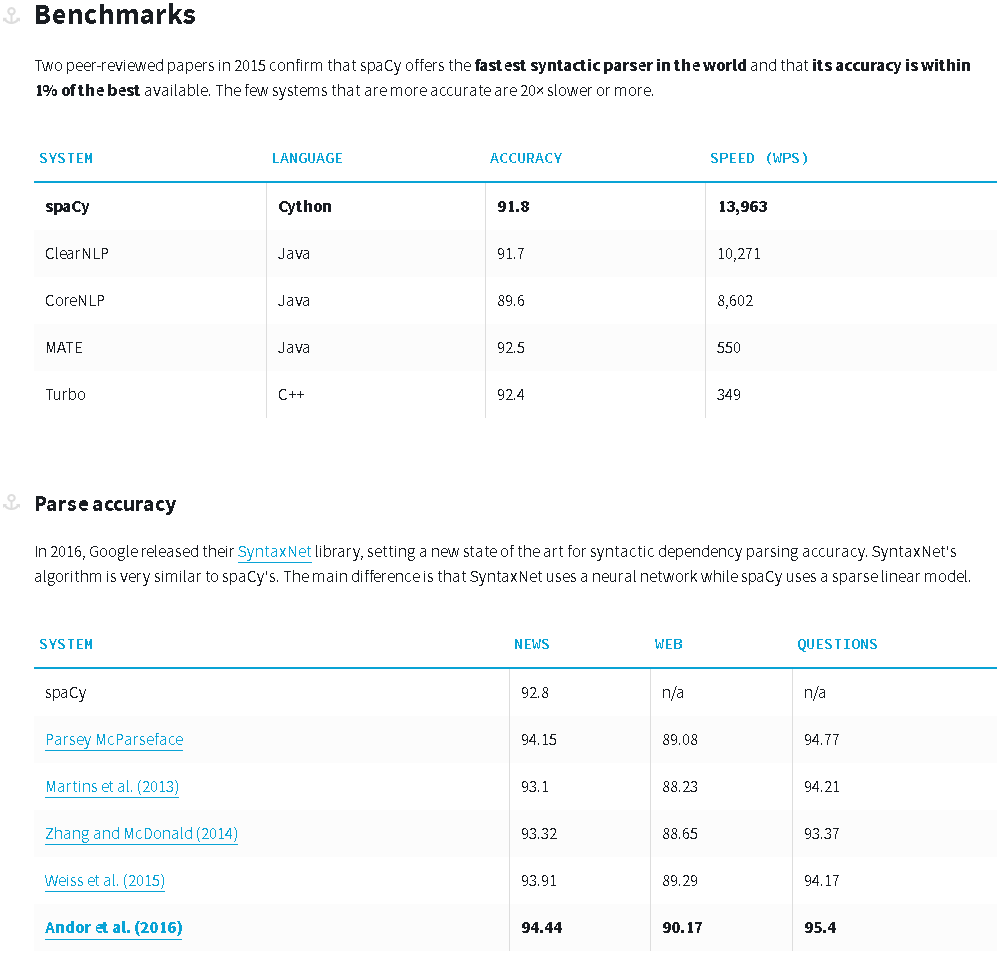

sudo python -m spacy.en.download parser # will take 0.5 GB关于spacy的依赖分析的一些基准测试:https://spacy.io/docs/api/
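The spaCy code addresses nodes by hard-coded names such as 'robots-0', built as `<lowercased text>-<token index>`. A small hypothetical helper can look those names up by word instead. It assumes that naming scheme and works on any iterable of node names; iterating a networkx graph yields its nodes, so `nodes_for_word(graph, 'of')` should work too. It returns every match, because a word such as "of" can occur more than once in a sentence.

```python
def nodes_for_word(graph_nodes, word):
    """Return node names like 'robots-0' whose text part equals `word` (case-insensitive),
    sorted by token index."""
    word = word.lower()
    matches = []
    for name in graph_nodes:
        # rpartition splits on the last '-', so hyphenated words stay intact.
        text, _, index = name.rpartition('-')
        if text == word and index.isdigit():
            matches.append(name)
    return sorted(matches, key=lambda name: int(name.rpartition('-')[2]))


# Node names taken from the output shown above.
nodes = ['robots-0', 'are-4', 'remind-7', 'of-9', 'awesomeness-11', 'of-12', 'agency-15']
print(nodes_for_word(nodes, 'of'))      # ['of-9', 'of-12']
print(nodes_for_word(nodes, 'Robots'))  # ['robots-0']
```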

Stack Overflow user

Posted on 2017-01-25 22:51:35

This answer relies on Stanford CoreNLP to obtain the dependency tree of a sentence. It borrows some code from Hugo Mailhot's answer for the networkx part.

Before running the code, you need to:

- `sudo pip install pycorenlp` (Python interface to Stanford CoreNLP)
- Download Stanford CoreNLP
- Start the Stanford CoreNLP server from the CoreNLP directory as follows: `java -mx4g -cp "*" edu.stanford.nlp.pipeline.StanfordCoreNLPServer -port 9000 -timeout 50000`

Then you can run the following code to find the shortest dependency path between two words:

```python
import networkx as nx
from pycorenlp import StanfordCoreNLP
from pprint import pprint


def get_stanford_annotations(text, port=9000,
                             annotators='tokenize,ssplit,pos,lemma,depparse,parse'):
    # Connect to the CoreNLP server listening on the given port.
    nlp = StanfordCoreNLP('http://localhost:{0}'.format(port))
    output = nlp.annotate(text, properties={
        "timeout": "10000",
        "ssplit.newlineIsSentenceBreak": "two",
        'annotators': annotators,
        'outputFormat': 'json'
    })
    return output


# The code expects the document to contain exactly one sentence.
document = 'Robots in popular culture are there to remind us of the awesomeness of '\
           'unbound human agency.'
print('document: {0}'.format(document))

# Parse the text
annotations = get_stanford_annotations(document, port=9000,
                                       annotators='tokenize,ssplit,pos,lemma,depparse')
sentence = annotations['sentences'][0]
tokens = sentence['tokens']

# Load Stanford CoreNLP's dependency tree into a networkx graph.
# CoreNLP 3.6.0 names this key 'basic-dependencies'; later releases use 'basicDependencies'.
basic_dependencies = sentence.get('basic-dependencies', sentence.get('basicDependencies'))
edges = []
dependencies = {}
for edge in basic_dependencies:
    edges.append((edge['governor'], edge['dependent']))
    # Index each edge by its (smaller, larger) token pair so the relation can be looked up later
    dependencies[(min(edge['governor'], edge['dependent']),
                  max(edge['governor'], edge['dependent']))] = edge

graph = nx.Graph(edges)
#pprint(dependencies)
#print('edges: {0}'.format(edges))

# Find the shortest path
token1 = 'Robots'
token2 = 'awesomeness'
for token in tokens:
    if token1 == token['originalText']:
        token1_index = token['index']
    if token2 == token['originalText']:
        token2_index = token['index']

path = nx.shortest_path(graph, source=token1_index, target=token2_index)
print('path: {0}'.format(path))

for token_id in path:
    token = tokens[token_id - 1]  # CoreNLP token indices are 1-based
    token_text = token['originalText']
    print('Node {0}\ttoken_text: {1}'.format(token_id, token_text))
```

The output is:

```
document: Robots in popular culture are there to remind us of the awesomeness of unbound human agency.
path: [1, 5, 8, 12]
Node 1 token_text: Robots
Node 5 token_text: are
Node 8 token_text: remind
Node 12 token_text: awesomeness
```

Note that Stanford CoreNLP can be tested online: http://nlp.stanford.edu:8080/parser/index.jsp

This answer was tested with Stanford CoreNLP 3.6.0, pycorenlp 0.3.0, and Python 3.5 x64.
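The CoreNLP code builds a `dependencies` dictionary keyed by (smaller index, larger index) but never uses it. Below is a minimal sketch of how it could label each hop of the path. It runs on a hand-built stand-in for the CoreNLP JSON entries (keys `dep`, `governor`, `dependent`), not real server output. The relation labels are borrowed from the question's practnlptools parse for illustration, and CoreNLP's own labels may differ. The helper `describe_path` is hypothetical.

```python
# Hand-built stand-in for sentence['basic-dependencies'] (illustrative, not real CoreNLP output).
basic_dependencies = [
    {'dep': 'ROOT', 'governor': 0, 'dependent': 5},
    {'dep': 'nsubj', 'governor': 5, 'dependent': 1},
    {'dep': 'xcomp', 'governor': 5, 'dependent': 8},
    {'dep': 'prep_of', 'governor': 8, 'dependent': 12},
]

# Same lookup table as in the answer: key is the (smaller, larger) token index pair.
dependencies = {}
for edge in basic_dependencies:
    key = (min(edge['governor'], edge['dependent']), max(edge['governor'], edge['dependent']))
    dependencies[key] = edge


def describe_path(path, dependencies):
    """Return 'relation(governor, dependent)' for each consecutive pair of token indices."""
    steps = []
    for a, b in zip(path, path[1:]):
        edge = dependencies[(min(a, b), max(a, b))]
        steps.append('{0}({1}, {2})'.format(edge['dep'], edge['governor'], edge['dependent']))
    return steps


print(describe_path([1, 5, 8, 12], dependencies))
# ['nsubj(5, 1)', 'xcomp(5, 8)', 'prep_of(8, 12)']
```

Keying by the sorted index pair is what lets the lookup ignore edge direction, matching the undirected `nx.Graph` used for the path search.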

https://stackoverflow.com/questions/32835291
