非负矩阵分解:交替最小二乘法

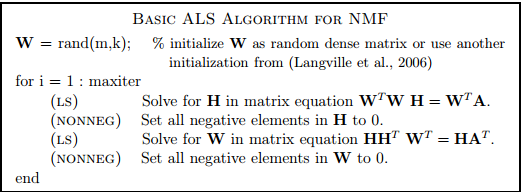

我试图用交替最小二乘方法来实现NMF。我只是对这个问题的以下基本实现感到好奇:

如果我理解正确的话,我们可以用封闭形式的解来解这个伪码中所描述的每个矩阵方程,用封闭的形式解,并将负项设为0,用蛮力的方式。这种理解是正确的吗?这是一个基本的选择,更复杂的,约束的优化问题,例如,我们使用投影梯度下降?更重要的是,如果以这种基本方式实现,该算法是否具有实用价值?我希望将NMF用于变量约简,而且我使用NMF很重要,因为我的数据定义为非负数据。我正在征求对这个问题的意见。

回答 3

Stack Overflow用户

发布于 2015-12-14 14:11:55

- 如果我理解正确的话,我们可以用封闭形式的解来解这个伪码中所描述的每个矩阵方程,用封闭的形式解,并将负项设为0,用蛮力的方式。这样理解正确吗?是的,

- 这是一个最基本的替代方案,可以替代更复杂的,约束的优化问题,例如,我们使用投影梯度下降吗?--从某种意义上说,是的。这确实是一种快速的非负因式分解方法。然而,有关NMF的文章指出,虽然该方法速度快,但不能保证非负因子的收敛性。一个更好的实现将是分层交替最小二乘的NMF (HALS-NMF)。查看本文,比较几种常用的NMF算法:http://www.cc.gatech.edu/~hpark/papers/jgo.pdf。

- 更重要的是,如果以这种基本方式实现,该算法是否具有实用价值?根据我的经验,我会说结果不如HALS或BPP(块旋转原理)那么好。

Stack Overflow用户

发布于 2017-03-22 08:44:30

在这个算法中使用非负最小二乘(而不是截断负值)显然会更好,但总的来说,我不推荐这种基本的ALS/ANNLS方法,因为它的收敛性很差(它经常波动,甚至可以显示散度)--一种更好方法的最小Matlab实现,加速-分层交替最小二乘法用于NMF ( Cichocki等人的),这是目前最快的方法之一(由Nicolas Gillis编写):

% Accelerated hierarchical alternating least squares (HALS) algorithm of

% Cichocki et al.

%

% See N. Gillis and F. Glineur, "Accelerated Multiplicative Updates and

% Hierarchical ALS Algorithms for Nonnegative Matrix Factorization”,

% Neural Computation 24 (4), pp. 1085-1105, 2012.

% See http://sites.google.com/site/nicolasgillis/

%

% [U,V,e,t] = HALSacc(M,U,V,alpha,delta,maxiter,timelimit)

%

% Input.

% M : (m x n) matrix to factorize

% (U,V) : initial matrices of dimensions (m x r) and (r x n)

% alpha : nonnegative parameter of the accelerated method

% (alpha=0.5 seems to work well)

% delta : parameter to stop inner iterations when they become

% inneffective (delta=0.1 seems to work well).

% maxiter : maximum number of iterations

% timelimit : maximum time alloted to the algorithm

%

% Output.

% (U,V) : nonnegative matrices s.t. UV approximate M

% (e,t) : error and time after each iteration,

% can be displayed with plot(t,e)

%

% Remark. With alpha = 0, it reduces to the original HALS algorithm.

function [U,V,e,t] = HALSacc(M,U,V,alpha,delta,maxiter,timelimit)

% Initialization

etime = cputime; nM = norm(M,'fro')^2;

[m,n] = size(M); [m,r] = size(U);

a = 0; e = []; t = []; iter = 0;

if nargin <= 3, alpha = 0.5; end

if nargin <= 4, delta = 0.1; end

if nargin <= 5, maxiter = 100; end

if nargin <= 6, timelimit = 60; end

% Scaling, p. 72 of the thesis

eit1 = cputime; A = M*V'; B = V*V'; eit1 = cputime-eit1; j = 0;

scaling = sum(sum(A.*U))/sum(sum( B.*(U'*U) )); U = U*scaling;

% Main loop

while iter <= maxiter && cputime-etime <= timelimit

% Update of U

if j == 1, % Do not recompute A and B at first pass

% Use actual computational time instead of estimates rhoU

eit1 = cputime; A = M*V'; B = V*V'; eit1 = cputime-eit1;

end

j = 1; eit2 = cputime; eps = 1; eps0 = 1;

U = HALSupdt(U',B',A',eit1,alpha,delta); U = U';

% Update of V

eit1 = cputime; A = (U'*M); B = (U'*U); eit1 = cputime-eit1;

eit2 = cputime; eps = 1; eps0 = 1;

V = HALSupdt(V,B,A,eit1,alpha,delta);

% Evaluation of the error e at time t

if nargout >= 3

cnT = cputime;

e = [e sqrt( (nM-2*sum(sum(V.*A))+ sum(sum(B.*(V*V')))) )];

etime = etime+(cputime-cnT);

t = [t cputime-etime];

end

iter = iter + 1; j = 1;

end

% Update of V <- HALS(M,U,V)

% i.e., optimizing min_{V >= 0} ||M-UV||_F^2

% with an exact block-coordinate descent scheme

function V = HALSupdt(V,UtU,UtM,eit1,alpha,delta)

[r,n] = size(V);

eit2 = cputime; % Use actual computational time instead of estimates rhoU

cnt = 1; % Enter the loop at least once

eps = 1; eps0 = 1; eit3 = 0;

while cnt == 1 || (cputime-eit2 < (eit1+eit3)*alpha && eps >= (delta)^2*eps0)

nodelta = 0; if cnt == 1, eit3 = cputime; end

for k = 1 : r

deltaV = max((UtM(k,:)-UtU(k,:)*V)/UtU(k,k),-V(k,:));

V(k,:) = V(k,:) + deltaV;

nodelta = nodelta + deltaV*deltaV'; % used to compute norm(V0-V,'fro')^2;

if V(k,:) == 0, V(k,:) = 1e-16*max(V(:)); end % safety procedure

end

if cnt == 1

eps0 = nodelta;

eit3 = cputime-eit3;

end

eps = nodelta; cnt = 0;

end有关完整代码和与其他方法的比较,请参见https://sites.google.com/site/nicolasgillis/code (NMF加速MU和HALS算法一节)和N. Gillis和F. Glineur,“用于非负矩阵分解的加速乘法更新和分级ALS算法”,“神经计算24 (4)”,第1085至1105页,2012年。。

Stack Overflow用户

发布于 2021-03-06 23:53:40

是的,这是可以做到的,但不,你不应该这样做。

NMF的瓶颈不是非负最小二乘计算,而是最小二乘方程右侧的计算和损失计算(如果用于确定收敛性的话)。在我的经验中,通过一个快速的NNLS求解器,与基本最小二乘求解相比,NNLS增加的相对运行时小于1%。现在(当你问这个问题的时候可能没有),有非常快的方法,如TNT和顺序坐标下降,这使得事情变得非常快。

我试过这种方法,模型质量很差。这很难让人联想到HALS或乘法更新。

https://stackoverflow.com/questions/32612502

复制相似问题