Coding single-layer perceptrons for a 3-class classifier in MATLAB

Asked 2014-09-04 03:23:43

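To recognize three classes, I use three single-layer perceptrons:

If the data belongs to class 1, then perceptron1=1, perceptron2=0, perceptron3=0

If the data belongs to class 2, then perceptron1=0, perceptron2=1, perceptron3=0

If the data belongs to class 3, then perceptron1=0, perceptron2=0, perceptron3=1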
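
The target encoding above can be written compactly as a one-hot matrix. The following is a minimal sketch, assuming a hypothetical vector `labels` of class indices (it is not part of the original code). The matrix `d` has the same layout as the `d` used in the main program: one row per perceptron and one column per sample.

```matlab
% Hypothetical class indices for five samples (illustrative only)
labels = [1 2 3 2 1];
numClasses = 3;

% One row per perceptron, one column per sample
d = zeros(numClasses, numel(labels));

% Set d(labels(n), n) = 1 for every sample n
d(sub2ind(size(d), labels, 1:numel(labels))) = 1;

disp(d);
```

Each column of `d` then holds the desired outputs of perceptron1, perceptron2 and perceptron3 for one sample.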

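I wrote the code, and the strange thing is that the perceptrons that recognize class 1 and class 3 work fine, but the one for class 2 does not, and I cannot find where the error is. Can you help me?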

Main program code:

clc;

% INPUT

% amount of values

points = 300;

% stepsize

s = 1.0;

% INITIALIZE

% Booleans

TRUE = 1;

FALSE = 0;

feature = 3;

% generate data

%D = generateRandomData(points);

% keeps track of hits for each class in each iteration

p1=0;

p2=0;

p3=0;

ax = 10;

bx = 70;

d=zeros(3,points);

xx1 = 2 + 1.*randn(points/3,1);

xx2 = 10 + 1.*randn(points/3,1);

xx3 = 20 + 1.*randn(points/3,1);

D=(bx-ax).*rand(points,feature) + ax;

for k = 1:points/3

D(k,1) = xx1(k);

D(k+points/3,1) = xx2(k);

D(k+2*points/3,1) = xx3(k);

end

D = D(randperm(end),:);

for n = drange(1:points)

if D(n,1) <=5.0000

d(1,n)=1;

d(2,n)=0;

d(3,n)=0;

elseif D(n,1) >=7.0000 && D(n,1) <= 13.0000

d(1,n)=0;

d(2,n)=1;

d(3,n)=0;

elseif D(n,1) >= 17.0000

d(1,n)=0;

d(2,n)=0;

d(3,n)=1;

end

end

% x-values

% training set

%d = D(:,feature+1);

% weights

w1 = zeros(feature+1,1);

w2 = zeros(feature+1,1);

w3 = zeros(feature+1,1);

% bias

b = 1;

% success flag

isCorrect = FALSE;

% correctly predicted values counter

p = 0;

% COMPUTE

% while at least one point is not correctly classified

while isCorrect == FALSE

% for every point in the dataset

for i=1 : points

% calculate outcome with current weight

%CLASS 1

sumx=0;sum1=0;

for n =1 : feature

sumx=D(i,n) * w1(n+1);

sum1=sum1+sumx;

end

bias1=b*w1(1);

m1=bias1+sum1;

c1 = activate( m1 );

a1 = errorFunction(c1,d(1,i));

if a1 ~= 0

% adjust weights

for n1 = 1 : feature

h=n1+1;

w1(h) = w1(h)+a1*s*D(i,n1);

end

w1(1)=w1(1)+a1*s*b;

else

% increase correctness counter

p1 = p1 + 1;

end

%CLASS 2

sumy=0;sum2=0;

for n =1 : feature

sumy=D(i,n) * w2(n+1);

sum2=sumy+sum2;

end

bias2=b*w2(1);

m2=bias2+sum2;

c2 = activate( m2 );

disp('-------------');

disp(c2);

disp(d(2,i));

disp('-------------');

a2 = errorFunction(c2,d(2,i));

if a2 ~= 0

% adjust weights

for n1 = 1 : feature

h=n1+1;

w2(h) = w2(h)+a2*s*D(i,n1);

end

w2(1)=w2(1)+a2*s*b;

else

% increase correctness counter

p2 = p2 + 1;

end

%CLASS 3

sumz=0;sum3=0;

for n =1 : feature

sumz=D(i,n) * w3(n+1);

sum3=sumz+sum3;

end

bias3=b*w3(1);

m3=bias3+sum3;

c3 = activate( m3 );

a3 = errorFunction(c3,d(3,i));

if a3 ~= 0

% adjust weights

for n1 = 1 : feature

h=n1+1;

w3(h) = w3(h)+a3*s*D(i,n1);

end

w3(1)=w3(1)+a3*s*b;

else

% increase correctness counter

p3 = p3 + 1;

end

end

% p/3 >= points/1.4

%p2 == p1 && p1 == p

if (p1+p2+p3)/2>=points

%disp(p);

isCorrect = TRUE;

else

%p33=p22;

%p22=p11;

%p11=p;

%p=0;

p1=0;

p2=0;

p3=0;

end

end

disp(p1);

disp(p2);

disp(p3);

test=15;

ax = 10;

bx = 70;

D1=(bx-ax).*rand(test,feature) + ax;

xy1 = 2 + 1.*randn(test/3,1);

xy2 = 10 + 1.*randn(test/3,1);

xy3 = 20 + 1.*randn(test/3,1);

for k = 1:test/3

D1(k,1) = xy1(k);

D1(k+test/3,1) = xy2(k);

D1(k+2*test/3,1) = xy3(k);

end

D1 = D1(randperm(end),:);

test1=zeros(3,test);

for n = drange(1:test)

if D1(n,1) <= 5.0000

test1(1,n)=1;

test1(2,n)=0;

test1(3,n)=0;

end

if D1(n,1) >=7.0000 && D1(n,1) <= 13.0000

test1(1,n)=0;

test1(2,n)=1;

test1(3,n)=0;

end

if D1(n,1) >= 17.0000

test1(1,n)=0;

test1(2,n)=0;

test1(3,n)=1;

end

end

for i=1 : test

sumx=0;sum1=0;sum2=0;sum3=0;

for n =1 : feature

sumx=D1(i,n) * w1(n+1);

sum1=sumx+sum1;

end

bias=b*w1(1);

m=bias+sum1;

c = activate( m );

a1 = errorFunction(c,test1(1,i));

%CLASS 2

for n =1 : feature

sumx=D1(i,n) * w2(n+1);

sum2=sumx+sum2;

end

bias=b*w2(1);

m=bias+sum2;

c = activate( m );

a2 = errorFunction(c,test1(2,i));

%CLASS 3

for n =1 : feature

sumx=D1(i,n) * w3(n+1);

sum3=sumx+sum3;

end

bias=b*w3(1);

m=bias+sum3;

c = activate( m );

a3 = errorFunction(c,test1(3,i));

% the outcome is correct only if all three perceptrons match their targets

if a1 == 0.0 && a2 == 0.0 && a3 == 0.0

disp('correct');

else disp('incorrect');

end

end

Activation function code:

function f = activate(x)

f = (1/2)*(sign(x)+1);

%f = 1 / (1 + exp(-x));

%f = tanh(x);

end

Error function code:

function f = errorFunction(c,d)

% w has been classified as c - w should be d

if c < d

%reaction too small

f = +1;

elseif c > d

%reaction too large

f = -1;

else

%reaction correct

f = 0.0;

end

end

1 Answer

Stack Overflow user

Accepted answer

Answered 2015-06-02 07:17:37

From a quick look, the code seems fine overall, but the data does not seem suitable for perceptron training.

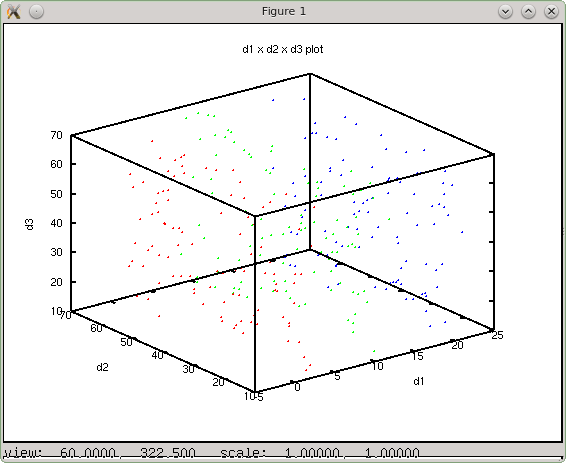

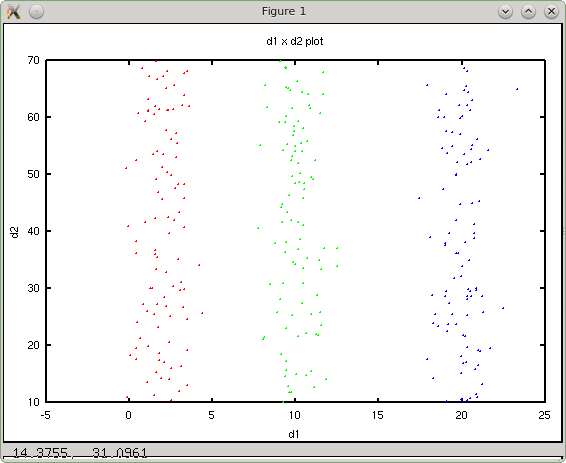

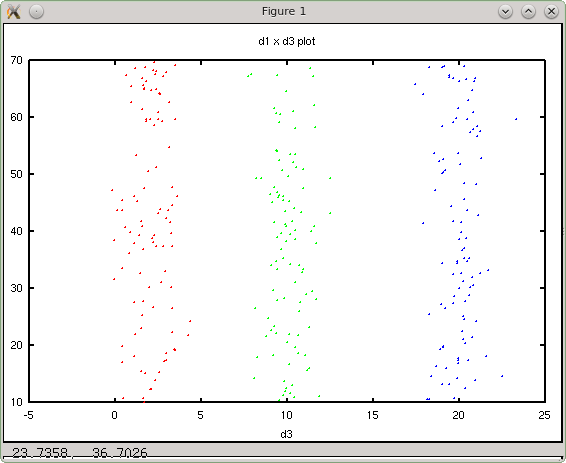

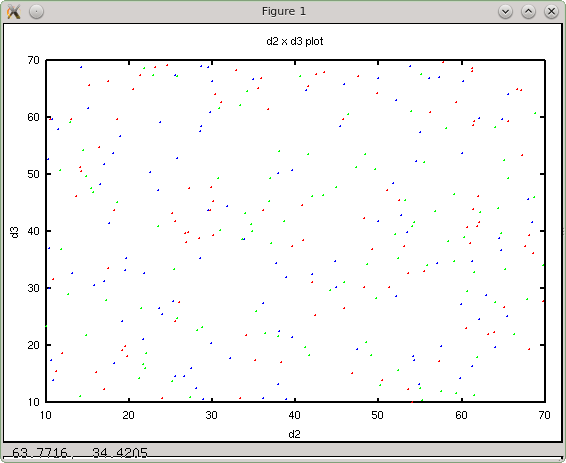

训练生成的数据似乎不是线性可分的,这一点从三维图和每个维度的其他2d图中都可以看出。具体来说,数据在第2和第3维上不是线性可分的。

三维绘图

dim 1 x dim 2

dim 1 x dim3

dim 2 x dim 3

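Because the generated data is not linearly separable along dimensions 2 and 3, the perceptron units will never converge. Training in this way is therefore bound to give inaccurate results.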

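I would suggest using the pocket algorithm in this case, since it is the closest to the current algorithm; alternatively, a multilayer perceptron, logistic units, or a neural network may be suitable. That said, the dim 2 x dim 3 plot looks hard to classify. It would also be worth trying the current implementation on a dataset that is clearly linearly separable.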
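
To illustrate the pocket idea, here is a minimal sketch rather than a drop-in replacement for the code in the question. It runs the usual perceptron updates but keeps ("pockets") the weights with the fewest training errors seen so far. The names `pocketDemo`, `pocketTrain` and `countErrors`, the toy 1-D data, and the step activation `z > 0` are all assumptions made for this example. It also sweeps the samples in a fixed order, whereas the pocket algorithm is often described with randomly picked samples. Save it as `pocketDemo.m`.

```matlab
function [wBest, errBest] = pocketDemo()
    % Toy 1-D data (illustrative): the point 2.5 carries a "noisy" label,
    % so no single threshold classifies every sample correctly.
    X = [1; 2; 3; 10; 11; 12; 2.5];
    t = [0; 0; 0; 1; 1; 1; 1];
    [wBest, errBest] = pocketTrain(X, t, 1.0, 50);
    fprintf('training errors of pocketed weights: %d\n', errBest);
end

function [wBest, errBest] = pocketTrain(X, t, s, maxEpochs)
    % X: samples in rows, t: 0/1 targets, s: step size
    Xa = [ones(size(X, 1), 1), X];      % prepend the bias input
    w = zeros(size(Xa, 2), 1);          % current perceptron weights
    wBest = w;                          % pocketed (best so far) weights
    errBest = countErrors(Xa, t, w);
    for epoch = 1:maxEpochs
        for i = 1:size(Xa, 1)
            y = double(Xa(i, :) * w > 0);
            if y ~= t(i)
                % ordinary perceptron update
                w = w + s * (t(i) - y) * Xa(i, :)';
                % pocket step: keep w only if it is strictly better
                err = countErrors(Xa, t, w);
                if err < errBest
                    wBest = w;
                    errBest = err;
                end
            end
        end
        if errBest == 0
            break;                      % data turned out to be separable
        end
    end
end

function n = countErrors(Xa, t, w)
    % number of misclassified training samples for weights w
    n = sum(double(Xa * w > 0) ~= t);
end
```

Because the loop is capped by `maxEpochs`, training stops even when the data is not linearly separable, and the returned weights are the best ones encountered rather than the last ones.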

Another suggestion is to write functions for the training and prediction steps, which makes the code easier to maintain and read.
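
One possible way to split the work into functions is sketched below. The names `perceptronDemo`, `trainPerceptron` and `predictPerceptron`, the toy data, and the step activation `z > 0` are assumptions for illustration and do not come from the original code. Each function handles a single binary perceptron, so a three-class setup would call `trainPerceptron` once per row of the target matrix. Save it as `perceptronDemo.m`.

```matlab
function acc = perceptronDemo()
    % Toy, clearly linearly separable 1-D data (illustrative only)
    X = [1; 2; 3; 10; 11; 12];
    t = [0; 0; 0; 1; 1; 1];
    w = trainPerceptron(X, t, 1.0, 1000);
    y = predictPerceptron(X, w);
    acc = mean(y == t);                 % fraction of correct predictions
end

function w = trainPerceptron(X, t, s, maxEpochs)
    % X: samples in rows, t: 0/1 targets, s: step size
    Xa = [ones(size(X, 1), 1), X];      % prepend the bias input
    w = zeros(size(Xa, 2), 1);
    for epoch = 1:maxEpochs
        nWrong = 0;
        for i = 1:size(Xa, 1)
            y = double(Xa(i, :) * w > 0);
            if y ~= t(i)
                w = w + s * (t(i) - y) * Xa(i, :)';
                nWrong = nWrong + 1;
            end
        end
        if nWrong == 0
            break;                      % every sample classified correctly
        end
    end
end

function y = predictPerceptron(X, w)
    % returns a column of 0/1 predictions for the rows of X
    Xa = [ones(size(X, 1), 1), X];
    y = double(Xa * w > 0);
end
```

With this split, the three near-identical CLASS 1, CLASS 2 and CLASS 3 blocks of the main program could be reduced to three calls with different target rows, and the test loop could reuse `predictPerceptron`.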

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/25656780
