MPI: merge multiple intercommunicators into a single intracommunicator


Asked 2014-07-17 14:48:39

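I'm trying to set up a bunch of spawned processes in a single intracommunicator. I need to spawn the processes into unique working directories, because these subprocesses write out a bunch of files. After all the processes have been spawned, I want to merge them into a single intracommunicator. To try this out, I set up a simple test program.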

```c
#include <mpi.h>
#include <stdio.h>

int main(int argc, const char * argv[]) {
    int rank, size;
    const int num_children = 5;
    int error_codes;

    MPI_Init(&argc, (char ***)&argv);

    MPI_Comm parentcomm;
    MPI_Comm childcomm;
    MPI_Comm intracomm;

    MPI_Comm_get_parent(&parentcomm);

    if (parentcomm == MPI_COMM_NULL) {
        // Original process: spawn the children one at a time
        printf("Current Command %s\n", argv[0]);
        for (size_t i = 0; i < num_children; i++) {
            MPI_Comm_spawn(argv[0], MPI_ARGV_NULL, 1, MPI_INFO_NULL, 0, MPI_COMM_WORLD, &childcomm, &error_codes);
            MPI_Intercomm_merge(childcomm, 0, &intracomm);
            MPI_Barrier(childcomm);
        }
    } else {
        // Spawned child: merge with the parent as the "high" group
        MPI_Intercomm_merge(parentcomm, 1, &intracomm);
        MPI_Barrier(parentcomm);
    }

    printf("Test\n");
    MPI_Barrier(intracomm);
    printf("Test2\n");

    MPI_Comm_rank(intracomm, &rank);
    MPI_Comm_size(intracomm, &size);
    printf("Rank %d of %d\n", rank + 1, size);

    MPI_Barrier(intracomm);
    MPI_Finalize();
    return 0;
}
```

When I run this program I get all 6 processes, but my intracommunicator only spans the parent and the last child. The resulting output is:

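```
Test
Test
Test
Test
Test
Test
Test2
Rank 1 of 2
Test2
Rank 2 of 2
```

Is there a way to merge multiple communicators into a single communicator? Also note that I'm spawning the children one at a time because I need each child to execute in a unique working directory.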

2 Answers

Stack Overflow user

Accepted answer

Posted 2014-07-17 15:55:46

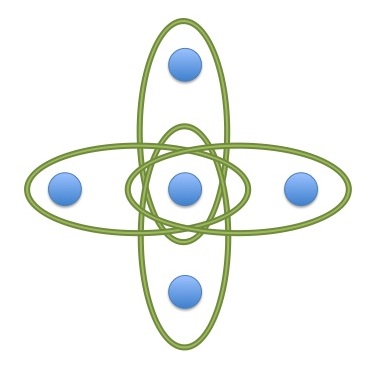

如果要通过多次调用MPI_COMM_SPAWN来完成此操作,则必须更加小心。在您第一次调用SPAWN之后,生成的进程还需要参与下一次对SPAWN的调用,否则它将被排除在您要合并的通信器之外。最后看起来是这样的:

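The problem is that only two processes participate in each MPI_INTERCOMM_MERGE, and you can't merge three communicators, so you never end up with one big communicator.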

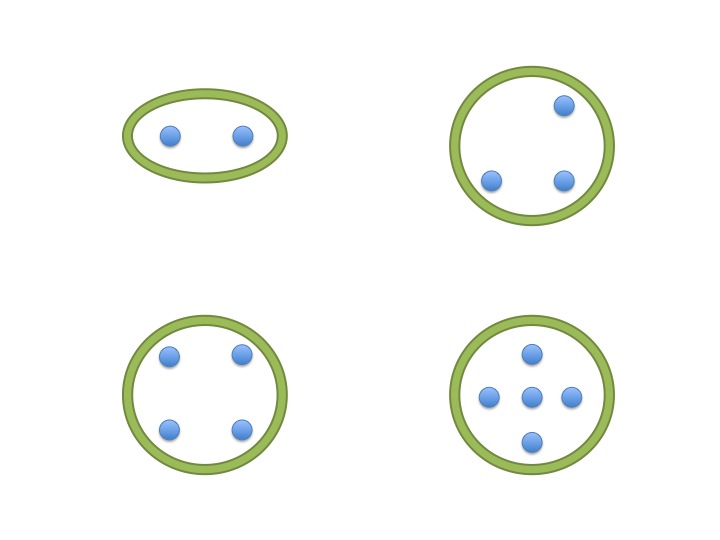

如果您让每个进程按其进行的方式参与合并,那么最终您将得到一个大型的通信器:

Of course, you could just spawn all of the extra processes at once, but it sounds like you might have other reasons not to do that.

Stack Overflow user

Posted 2015-06-30 21:25:59

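I realize I'm a year late answering this question, but I thought others might like to see an implementation of this answer. As the original responder said, there is no way to merge three (or more) communicators. You have to build up the new intracommunicator one member at a time. Here is the code I use. This version deletes the original intracommunicator; you may or may not want that, depending on your particular application: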

```c
#include <mpi.h>

// The Borg routine: given
// (1) a (quiesced) intra-communicator with one or more members, and
// (2) a (quiesced) inter-communicator with exactly two members, one
//     of which is rank zero of the intra-communicator, and
//     the other of which is an unrelated spawned rank,
// return a new intra-communicator which is the union of both inputs.
//
// This is a collective operation. All ranks of the intra-
// communicator, and the remote rank of the inter-communicator, must
// call this routine. Ranks that are members of the intra-comm must
// supply the proper value for the "intra" argument, and MPI_COMM_NULL
// for the "inter" argument. The remote inter-comm rank must
// supply MPI_COMM_NULL for the "intra" argument, and the proper value
// for the "inter" argument. Rank zero (only) of the intra-comm must
// supply proper values for both arguments.
//
// N.B. It would make a certain amount of sense to split this into
// separate routines for the intra-communicator processes and the
// remote inter-communicator process. The reason we don't do that is
// that, despite the relatively few lines of code, what's going on here
// is really pretty complicated, and requires close coordination of the
// participating processes. Putting all the code for all the processes
// into this one routine makes it easier to be sure everything "lines up"
// properly.
MPI_Comm
assimilateComm(MPI_Comm intra, MPI_Comm inter)
{
    MPI_Comm peer = MPI_COMM_NULL;
    MPI_Comm newInterComm = MPI_COMM_NULL;
    MPI_Comm newIntraComm = MPI_COMM_NULL;

    // The spawned rank will be the "high" rank in the new intra-comm
    int high = (MPI_COMM_NULL == intra) ? 1 : 0;

    // If this is one of the (two) ranks in the inter-comm,
    // create a new intra-comm from the inter-comm
    if (MPI_COMM_NULL != inter) {
        MPI_Intercomm_merge(inter, high, &peer);
    } else {
        peer = MPI_COMM_NULL;
    }

    // Create a new inter-comm between the pre-existing intra-comm
    // (all of it, not only rank zero), and the remote (spawned) rank,
    // using the just-created intra-comm as the peer communicator.
    int tag = 12345;
    if (MPI_COMM_NULL != intra) {
        // This task is a member of the pre-existing intra-comm
        MPI_Intercomm_create(intra, 0, peer, 1, tag, &newInterComm);
    }
    else {
        // This is the remote (spawned) task
        MPI_Intercomm_create(MPI_COMM_SELF, 0, peer, 0, tag, &newInterComm);
    }

    // Now convert this inter-comm into an intra-comm
    MPI_Intercomm_merge(newInterComm, high, &newIntraComm);

    // Clean up the intermediaries
    if (MPI_COMM_NULL != peer) MPI_Comm_free(&peer);
    MPI_Comm_free(&newInterComm);

    // Delete the original intra-comm
    if (MPI_COMM_NULL != intra) MPI_Comm_free(&intra);

    // Return the new intra-comm
    return newIntraComm;
}
```

Original link:

https://stackoverflow.com/questions/24806782
