Kernel matrix

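Asked 2014-01-18 14:11:08

We are working on a project and are trying to get some results with KPCA.

We have a dataset (handwritten digits) and have taken the first 200 samples of each digit, so our complete data matrix is 2000x784 (784 is the number of dimensions). When we run KPCA we get a matrix containing the new, lower-dimensional dataset, e.g. 2000x100. But we do not understand the result. Shouldn't we get other matrices as well, as we do when we use SVD for PCA? The code we use for KPCA is the following: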

function data_out = kernelpca(data_in,num_dim)
% data_in is expected as (dimensions x points): each column is one sample.

%% Checking to ensure output dimensions are smaller than input dimension.
if num_dim > size(data_in,1)
    error('Dimensions of output data must be smaller than the dimensions of input data');
end

%% Using the Gaussian Kernel to construct the Kernel K
% K(x,y) = exp(-||x-y||^2/sigma^2)
% K is a symmetric Kernel
K = zeros(size(data_in,2),size(data_in,2));
for row = 1:size(data_in,2)
    for col = 1:row
        temp = sum(((data_in(:,row) - data_in(:,col)).^2));
        K(row,col) = exp(-temp); % sigma = 1
    end
end
K = K + K';
% Dividing the diagonal element by 2 since it has been added to itself
for row = 1:size(data_in,2)
    K(row,row) = K(row,row)/2;
end

% We know that for PCA the data has to be centered. Even if the input data
% set 'X', let's say, is centered, there is no guarantee the data when mapped
% in the feature space [phi(x)] is also centered. Since we actually never
% work in the feature space we cannot center the data. To include this
% correction a pseudo centering is done using the Kernel.
one_mat = ones(size(K))./size(K,1); % every entry must be 1/N for the centering to be correct
K_center = K - one_mat*K - K*one_mat + one_mat*K*one_mat;
clear K

%% Obtaining the low dimensional projection
% The following equation needs to be satisfied for K
% N*lambda*alpha = K*alpha
% Thus the lambdas have to be normalized by the number of points
opts.issym=1;
opts.disp = 0;
opts.isreal = 1;
neigs = 30; % number of eigenpairs computed; must be at least num_dim
[eigvec, eigval] = eigs(K_center,[],neigs,'lm',opts);
eig_val = diag(eigval); % eigenvalues as a column vector
eig_val = eig_val./size(data_in,2);
% Again 1 = lambda*(alpha.alpha)
% Here '.' indicates the dot product
for col = 1:size(eigvec,2)
    eigvec(:,col) = eigvec(:,col)./(sqrt(eig_val(col)));
end
[~, index] = sort(eig_val,'descend');
eigvec = eigvec(:,index);

%% Projecting the data in lower dimensions
data_out = zeros(num_dim,size(data_in,2));
for count = 1:num_dim
    data_out(count,:) = eigvec(:,count)'*K_center';
end

We have read a lot of papers, but we still cannot grasp the logic of KPCA!

Any help would be greatly appreciated!

1 Answer

Stack Overflow user

Accepted answer

Answered 2014-01-18 19:10:29

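PCA Algorithm

- Take the PCA data samples

- Compute the mean

- Compute the covariance

- Solve the eigenvalue problem of the covariance matrix, which gives the eigenvectors and the eigenvalues of the covariance matrix

With the first n eigenvectors (those with the largest eigenvalues) you reduce the dimensionality of your data to n dimensions. You can use this code for PCA; it includes a complete example and is simple.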
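The PCA steps listed above can be sketched as follows. This is only an illustration on synthetic data: the variable names, the data sizes and the fixed random seed are assumptions, not part of the linked code. The last line hints at why SVD-based PCA returns "other matrices": the principal axes `V` live in the input space and can be written down explicitly, whereas in KPCA they live in the feature space, so only the coefficients and the projected data are available.

```matlab
% Minimal PCA sketch on synthetic data (rows = samples, columns = features).
rng(0);                              % fixed seed, only for repeatability
X = randn(200, 5) * randn(5, 5);     % hypothetical 200x5 data set
n = 2;                               % number of components to keep

mu = mean(X, 1);                     % compute the mean of each feature
Xc = X - mu;                         % center the data (implicit expansion, R2016b+)
C  = (Xc' * Xc) / (size(X, 1) - 1);  % compute the covariance matrix

[V, D] = eig(C);                     % solve C*V = V*D
[lambda, order] = sort(diag(D), 'descend');
V = V(:, order);                     % eigenvectors sorted by decreasing eigenvalue

Y = Xc * V(:, 1:n);                  % data reduced to n dimensions (200x2)

% Cross-check with SVD: squared singular values of Xc divided by N-1 equal lambda.
s = svd(Xc);
```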

KPCA算法

我们在您的代码中选择一个内核函数,这是由以下代码指定的:

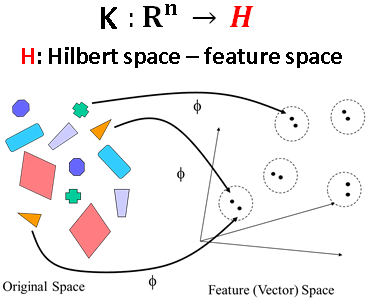

K(x,y) = -exp((x-y)^2/(sigma)^2)为了在高维空间中表示数据,在这个空间中,您的数据将被很好地表示为更多的细节,如分类或聚类,而这一任务在初始特征空间中可能更难解决。这个技巧也被称为“内核戏法”。看人形。
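To make the kernel trick concrete, here is a small illustration. It deliberately uses a degree-2 polynomial kernel instead of the Gaussian kernel from the question, because its feature map can be written out explicitly; the vectors and the name `phi` are assumptions chosen for the example.

```matlab
% Kernel trick illustration with a degree-2 polynomial kernel
% (illustrative choice, not the Gaussian kernel used in the question).
x = [1; 2];
y = [3; -1];

phi = @(v) [v(1)^2; sqrt(2)*v(1)*v(2); v(2)^2];   % explicit feature map R^2 -> R^3

k_explicit = phi(x)' * phi(y);   % inner product computed in the feature space
k_trick    = (x' * y)^2;         % same value computed from the inputs only
```

Both quantities agree, so the inner product in the feature space is obtained without ever forming `phi(x)`. For the Gaussian kernel the feature space is infinite-dimensional, which is why KPCA works only with the kernel matrix.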

Step 1: Construct the Gram matrix

K = zeros(size(data_in,2),size(data_in,2));
for row = 1:size(data_in,2)
    for col = 1:row
        temp = sum(((data_in(:,row) - data_in(:,col)).^2));
        K(row,col) = exp(-temp); % sigma = 1
    end
end
K = K + K';
% Dividing the diagonal element by 2 since it has been added to itself
for row = 1:size(data_in,2)
    K(row,row) = K(row,row)/2;
end

Here, since the Gram matrix is symmetric, only half of its values are computed, and the final result is obtained by adding the matrix computed so far to its transpose. Finally, as the comment says, the diagonal elements are divided by 2.
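For larger data sets the double loop becomes slow. A vectorized alternative might look like the sketch below; it assumes the same layout as the loop (columns of `data_in` are samples) and the same kernel width, and the synthetic data and the names `sq` and `D2` are made up for the example.

```matlab
% Vectorized Gaussian Gram matrix; columns of data_in are samples.
rng(0);
data_in = randn(3, 6);                       % hypothetical data: 3 dimensions, 6 points
sigma = 1;                                   % kernel width, matching "sigma = 1" in the loop

sq = sum(data_in.^2, 1);                     % 1xN squared norm of each point
D2 = sq' + sq - 2 * (data_in' * data_in);    % NxN squared distances (implicit expansion)
D2 = max(D2, 0);                             % clip tiny negative values caused by round-off
K  = exp(-D2 / sigma^2);                     % K(i,j) = exp(-||x_i - x_j||^2 / sigma^2)
```

This relies on the identity ||x - y||^2 = ||x||^2 + ||y||^2 - 2*x'*y, so the whole matrix comes from one matrix product instead of N^2/2 loop iterations.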

Step 2: Normalize the kernel matrix

This is done by this part of the code:

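one_mat = ones(size(K))./size(K,1); % every entry must be 1/N for the centering to be correct
K_center = K - one_mat*K - K*one_mat + one_mat*K*one_mat;

As the comments mention, a pseudo-centering procedure has to be performed, because the data cannot be centered directly in the feature space. For an idea of the proof, see here.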
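The idea behind the pseudo-centering can be sketched as follows. The notation is an assumption for illustration: $\phi$ is the implicit feature map, $N$ the number of points, $K_{ij} = \phi(x_i)^{\top}\phi(x_j)$, and $\mathbf{1}_N$ the $N \times N$ matrix whose entries all equal $1/N$ (the role played by `one_mat`, provided it is scaled so that every entry is $1/N$).

```latex
\begin{align*}
\tilde{\phi}(x_i) &= \phi(x_i) - \frac{1}{N}\sum_{k=1}^{N}\phi(x_k) \\
\tilde{K}_{ij} &= \tilde{\phi}(x_i)^{\top}\tilde{\phi}(x_j)
  = K_{ij} - \frac{1}{N}\sum_{k=1}^{N} K_{kj} - \frac{1}{N}\sum_{k=1}^{N} K_{ik}
    + \frac{1}{N^{2}}\sum_{k=1}^{N}\sum_{l=1}^{N} K_{kl} \\
\tilde{K} &= K - \mathbf{1}_N K - K\,\mathbf{1}_N + \mathbf{1}_N K\,\mathbf{1}_N
\end{align*}
```

Every term on the right-hand side involves only entries of $K$, so the centered kernel matrix can be computed without ever evaluating $\phi$.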

Step 3: Solve the eigenvalue problem

This part of the code is responsible for that task:

%% Obtaining the low dimensional projection
% The following equation needs to be satisfied for K
% N*lambda*alpha = K*alpha
% Thus the lambdas have to be normalized by the number of points
opts.issym=1;
opts.disp = 0;
opts.isreal = 1;
neigs = 30; % number of eigenpairs computed; must be at least num_dim
[eigvec, eigval] = eigs(K_center,[],neigs,'lm',opts);
eig_val = diag(eigval); % eigenvalues as a column vector
eig_val = eig_val./size(data_in,2);
% Again 1 = lambda*(alpha.alpha)
% Here '.' indicates the dot product
for col = 1:size(eigvec,2)
    eigvec(:,col) = eigvec(:,col)./(sqrt(eig_val(col)));
end
[~, index] = sort(eig_val,'descend');
eigvec = eigvec(:,index);

Step 4: Change the representation of each data point

This part of the code is responsible for that task:

%% Projecting the data in lower dimensions
data_out = zeros(num_dim,size(data_in,2));
for count = 1:num_dim
    data_out(count,:) = eigvec(:,count)'*K_center';
end

See the details here.
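Putting the four steps together, a compact end-to-end sketch on a small synthetic data set could look like this. It is a sketch under stated assumptions rather than the code from the question: it uses the full `eig` instead of `eigs` (reasonable only for small N), the data and sizes are invented, and `mu`, `lambda` and `alpha` are names introduced here.

```matlab
% End-to-end sketch of steps 1-4 on a small synthetic data set (columns = samples).
rng(0);
data_in = randn(3, 20);                      % hypothetical data: 3 dimensions, 20 points
N = size(data_in, 2);
num_dim = 2;                                 % output dimensionality

% Step 1: Gaussian Gram matrix with sigma = 1
sq = sum(data_in.^2, 1);
K  = exp(-max(sq' + sq - 2 * (data_in' * data_in), 0));

% Step 2: centering in feature space; one_mat has every entry equal to 1/N
one_mat  = ones(N) / N;
K_center = K - one_mat*K - K*one_mat + one_mat*K*one_mat;
K_center = (K_center + K_center') / 2;       % remove round-off asymmetry

% Step 3: eigendecomposition, largest eigenvalues first
[V, D] = eig(K_center);
[mu, order] = sort(diag(D), 'descend');
V = V(:, order);
lambda = mu(1:num_dim) / N;                  % eigenvalues normalized by N
alpha  = V(:, 1:num_dim) ./ sqrt(lambda');   % so that lambda_k * (alpha_k . alpha_k) = 1

% Step 4: project every point onto the leading components
data_out = alpha' * K_center;                % num_dim x N
```

The only output is `data_out`, the new coordinates of each point. Unlike PCA via SVD, there is no explicit matrix of principal axes to return, because those axes live in the feature space; `alpha` holds their expansion coefficients in terms of the mapped training points.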

PS: I encourage you to use the code written by this author; it includes intuitive examples.

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/21205233
