按ID选择多行,有比IN更快的方法吗?

我有一个multiple,我想按ID选择多个行,例如,我希望从我的表中获得ID为1、5和9的行。

我在这样做的时候发表了一项类似于以下内容的WHERE声明:

SELECT [Id]

FROM [MyTable]

WHERE [Id] IN (1,5,9)然而,对于“in”子句中的大量项来说,这是相当慢的。

下面是使用where从包含1,000,000行的表中选择行的一些性能数据

Querying for 1 random keys (where in) took 0ms

Querying for 1000 random keys (where in) took 46ms

Querying for 2000 random keys (where in) took 94ms

Querying for 3000 random keys (where in) took 249ms

Querying for 4000 random keys (where in) took 316ms

Querying for 5000 random keys (where in) took 391ms

Querying for 6000 random keys (where in) took 466ms

Querying for 7000 random keys (where in) took 552ms

Querying for 8000 random keys (where in) took 644ms

Querying for 9000 random keys (where in) took 743ms

Querying for 10000 random keys (where in) took 853ms有没有比用WHERE做这个更快的方法。

我们不能连接,因为这是在断开的系统之间。

我听说过一个in memory temp table joined to the data in MYSQL may be faster,但从我的研究来看,MSSQL没有一个内存表选项,即便如此,它也不会像in有相同的索引扫描插入临时表吗?

编辑:

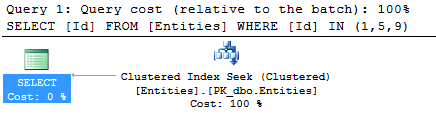

此表的ID为PK,因此默认PK索引cf

CREATE TABLE [dbo].[Entities](

[Id] [int] IDENTITY(1,1) NOT NULL,

CONSTRAINT [PK_dbo.Entities] PRIMARY KEY CLUSTERED

(

[Id] ASC

)WITH (PAD_INDEX = OFF, STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF, ALLOW_ROW_LOCKS = ON, ALLOW_PAGE_LOCKS = ON) ON [PRIMARY]

) ON [PRIMARY]执行计划

下面是一个控制台应用程序的GIST,它生成这些性能结果https://gist.github.com/lukemcgregor/5914774

编辑2 --我创建了一个函数,该函数从逗号分隔的字符串创建临时表,然后与该表连接。虽然速度更快,但我认为主要是因为在

Querying for 1 random keys took 1ms

Querying for 1000 random keys took 34ms

Querying for 2000 random keys took 69ms

Querying for 3000 random keys took 111ms

Querying for 4000 random keys took 143ms

Querying for 5000 random keys took 182ms

Querying for 6000 random keys took 224ms

Querying for 7000 random keys took 271ms

Querying for 8000 random keys took 315ms

Querying for 9000 random keys took 361ms

Querying for 10000 random keys took 411ms回答 3

Stack Overflow用户

发布于 2013-07-03 04:02:05

好的,我定义了一个表类型,然后将该类型直接传递到查询中并连接到它,从而使它运行得非常快。

在SQL中

CREATE TYPE [dbo].[IntTable] AS TABLE(

[value] [int] NULL

)用代码

DataTable dataTable = new DataTable("mythang");

dataTable.Columns.Add("value", typeof(Int32));

toSelect.ToList().ForEach(selectItem => dataTable.Rows.Add(selectItem));

using (SqlCommand command = new SqlCommand(

@"SELECT *

FROM [dbo].[Entities] e

INNER JOIN @ids on e.id = value", con))

{

var parameter = command.Parameters.AddWithValue("@ids", dataTable);

parameter.SqlDbType = System.Data.SqlDbType.Structured;

parameter.TypeName = "IntTable";

using (SqlDataReader reader = command.ExecuteReader())

{

while (reader.Read())

{

results.Add(reader.GetInt32(0));

}

}

}这将产生以下结果

Querying for 1 random keys (passed in table value) took 2ms

Querying for 1000 random keys (passed in table value) took 3ms

Querying for 2000 random keys (passed in table value) took 4ms

Querying for 3000 random keys (passed in table value) took 6ms

Querying for 4000 random keys (passed in table value) took 8ms

Querying for 5000 random keys (passed in table value) took 9ms

Querying for 6000 random keys (passed in table value) took 11ms

Querying for 7000 random keys (passed in table value) took 13ms

Querying for 8000 random keys (passed in table value) took 17ms

Querying for 9000 random keys (passed in table value) took 16ms

Querying for 10000 random keys (passed in table value) took 18msStack Overflow用户

发布于 2013-07-03 00:33:02

我想,如果您使用由主键索引的内存表加入表,如:

declare @tbl table (ids int primary key)您可以用您需要的id填充这个表,并预先形成一个优化的内部连接。

问题可能在于填补这个问题所需的时间。我想您可以为此拥有一个链接的服务器,也可以使用BCP实用程序来填充临时表,然后删除它。

Stack Overflow用户

发布于 2013-07-03 01:07:32

首先,我认为声称您的数据具有O(n log(n))的暗示是一种过分的说法。(顺便说一句,你做了性能测试真是太棒了。)以下是每个值的时间:

1000 0.046

2000 0.047

3000 0.083

4000 0.079

5000 0.078

6000 0.078

7000 0.079

8000 0.081

9000 0.083

10000 0.085虽然随着时间的推移略有增加,但从2000-3000年开始的跃升要显著得多。如果这是可重复的,那么我的问题是为什么会有这样的间断。

对我来说,这更像是O(n)和O(n log(n))的建议。但是,理论值的经验估计很难近似。所以,确切的限制并不那么重要。

我希望性能是O(n) ( n是实际值,而不是某些估计中的比特长度)。我的理解是,in的行为就像一组巨大的or。大多数记录都没有通过测试,所以他们必须做所有的比较。因此出现了O(n)。

下一个问题是您是否在id字段上有一个索引。在这种情况下,您可以在O(n log(n)) time (log (n)for traversing the index andn`中为每个值获取匹配it集)。这种情况更糟,但我们忽略了原表大小的因素。这应该是一场大胜利。

正如Andre所建议的,您可以加载一个表并对一个临时表进行连接。我会省略索引,因为在较大的表上使用索引可能更好。这将使您获得O(n log(n)) --不依赖于原始表的大小。或者,您可以省略索引,使用O(n * m),其中m是原始表的大小。我认为临时表上的任何索引构建都会使您回到O(n log(n))性能(假设数据没有预先预置)。

将所有内容放置在查询中有一个类似的、未声明的问题--解析查询。当字符串变长时,这需要更长的时间。

简而言之,我赞扬您进行了性能度量,但不是因为您得出了关于算法复杂性的结论。我不认为你的数据支持你的结论。而且,查询的处理比您建议的要复杂一些,而且您忽略了较大表的大小--这可能会产生主要影响。我很好奇在2000到3000行之间发生了什么。

https://stackoverflow.com/questions/17437802

复制相似问题