Xcode 4.5.1: AudioQueue and "linker command failed"

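I'll explain this as best I can. I want to record a sound with an AudioQueue. I have a project and have added all the frameworks it needs (so that should not be the problem). I changed only two files: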

- ViewController.h

- ViewController.m

Before I start: I'm not sure whether the methods should be declared as (void) or (IBAction) (I couldn't test it).

Here is the source of my ViewController.h:

    #import <UIKit/UIKit.h>
    //#import <AudioToolbox/AudioQueue.h> //(don't know to use that)
    //#import <AudioToolbox/AudioFile.h> //(don't know to use that)
    #import <AudioUnit/AudioUnit.h>
    #import <AudioToolbox/AudioToolbox.h>

    #define NUM_BUFFERS 3
    #define SECONDS_TO_RECORD 10

    typedef struct
    {
        AudioStreamBasicDescription dataFormat;
        AudioQueueRef queue;
        AudioQueueBufferRef buffers[NUM_BUFFERS];
        AudioFileID audioFile;
        SInt64 currentPacket;
        bool recording;
    } RecordState;

    typedef struct
    {
        AudioStreamBasicDescription dataFormat;
        AudioQueueRef queue;
        AudioQueueBufferRef buffers[NUM_BUFFERS];
        AudioFileID audioFile;
        SInt64 currentPacket;
        bool playing;
    } PlayState;

    @interface ViewController : UIViewController{
        IBOutlet UILabel* labelStatus;
        IBOutlet UIButton* buttonRecord;
        IBOutlet UIButton* buttonPlay;
        RecordState recordState;
        PlayState playState;
        CFURLRef fileURL;
    }

    - (BOOL)getFilename:(char*)buffer maxLenth:(int)maxBufferLength;
    - (void)setupAudioFormat:(AudioStreamBasicDescription*)format;
    - (void)recordPressed:(id)sender;
    - (void)playPressed:(id)sender;
    - (IBAction)startRecording;
    - (IBAction)stopRecording;
    - (IBAction)startPlayback;

    - (IBAction)stopPlayback;

    @end

Here is the source of my ViewController.m

(I have marked the error I get with a comment in the function startPlayback.)

    #import "ViewController.h"

    @interface ViewController ()
    @end

    @implementation ViewController

    void AudioInputCallback(
        void *inUserData,
        AudioQueueRef inAQ,
        AudioQueueBufferRef inBuffer,
        const AudioTimeStamp *inStartTime,
        UInt32 inNumberPacketDescriptions,
        const AudioStreamPacketDescription *inPacketDescs)
    {
        RecordState* recordState = (RecordState*)inUserData;
        if(!recordState->recording)
        {
            printf("Not recording, returning\n");
        }

        //if(inNumberPacketDescriptions == 0 && recordState->dataFormat.mBytesPerPacket != 0)
        //{
        //    inNumberPacketDescriptions = inBuffer->mAudioDataByteSize / recordState->dataFormat.mBytesPerPacket;
        //}

        printf("Writing buffer %lld\n", recordState->currentPacket);
        OSStatus status = AudioFileWritePackets(recordState->audioFile,
                                                false,
                                                inBuffer->mAudioDataByteSize,
                                                inPacketDescs,
                                                recordState->currentPacket,
                                                &inNumberPacketDescriptions,
                                                inBuffer->mAudioData);
        if(status == 0)
        {
            recordState->currentPacket += inNumberPacketDescriptions;
        }

        AudioQueueEnqueueBuffer(recordState->queue, inBuffer, 0, NULL);
    }

    void AudioOutputCallback(
        void* inUserData,
        AudioQueueRef outAQ,
        AudioQueueBufferRef outBuffer)
    {
        PlayState* playState = (PlayState*)inUserData;
        if(!playState->playing)
        {
            printf("Not playing, returning\n");
            return;
        }

        printf("Queuing buffer %lld for playback\n", playState->currentPacket);

        AudioStreamPacketDescription* packetDescs = NULL;
        UInt32 bytesRead;
        UInt32 numPackets = 8000;
        OSStatus status;
        status = AudioFileReadPackets(
            playState->audioFile,
            false,
            &bytesRead,
            packetDescs,
            playState->currentPacket,
            &numPackets,
            outBuffer->mAudioData);

        if(numPackets)
        {
            outBuffer->mAudioDataByteSize = bytesRead;
            status = AudioQueueEnqueueBuffer(
                playState->queue,
                outBuffer,
                0,
                packetDescs);
            playState->currentPacket += numPackets;
        }
        else
        {
            if(playState->playing)
            {
                AudioQueueStop(playState->queue, false);
                AudioFileClose(playState->audioFile);
                playState->playing = false;
            }
            AudioQueueFreeBuffer(playState->queue, outBuffer);
        }
    }

    - (void)setupAudioFormat:(AudioStreamBasicDescription*)format
    {
        format->mSampleRate = 8000.0;
        format->mFormatID = kAudioFormatLinearPCM;
        format->mFramesPerPacket = 1;
        format->mChannelsPerFrame = 1;
        format->mBytesPerFrame = 2;
        format->mBytesPerPacket = 2;
        format->mBitsPerChannel = 16;
        format->mReserved = 0;
        format->mFormatFlags = kLinearPCMFormatFlagIsBigEndian |
                               kLinearPCMFormatFlagIsSignedInteger |
                               kLinearPCMFormatFlagIsPacked;
    }

    - (void)recordPressed:(id)sender
    {
        if(!playState.playing)
        {
            if(!recordState.recording)
            {
                printf("Starting recording\n");
                [self startRecording];
            }
            else
            {
                printf("Stopping recording\n");
                [self stopRecording];
            }
        }
        else
        {
            printf("Can't start recording, currently playing\n");
        }
    }

    - (void)playPressed:(id)sender
    {
        if(!recordState.recording)
        {
            if(!playState.playing)
            {
                printf("Starting playback\n");
                [self startPlayback];
            }
            else
            {
                printf("Stopping playback\n");
                [self stopPlayback];
            }
        }
    }

    - (IBAction)startRecording
    {
        [self setupAudioFormat:&recordState.dataFormat];

        recordState.currentPacket = 0;

        OSStatus status;
        status = AudioQueueNewInput(&recordState.dataFormat,
                                    AudioInputCallback,
                                    &recordState,
                                    CFRunLoopGetCurrent(),
                                    kCFRunLoopCommonModes,
                                    0,
                                    &recordState.queue);
        if(status == 0)
        {
            for(int i = 0; i < NUM_BUFFERS; i++)
            {
                AudioQueueAllocateBuffer(recordState.queue,
                                         16000, &recordState.buffers[i]);
                AudioQueueEnqueueBuffer(recordState.queue,
                                        recordState.buffers[i], 0, NULL);
            }

            status = AudioFileCreateWithURL(fileURL,
                                            kAudioFileAIFFType,
                                            &recordState.dataFormat,
                                            kAudioFileFlags_EraseFile,
                                            &recordState.audioFile);
            if(status == 0)
            {
                recordState.recording = true;
                status = AudioQueueStart(recordState.queue, NULL);
                if(status == 0)
                {
                    labelStatus.text = @"Recording";
                }
            }
        }

        if(status != 0)
        {
            [self stopRecording];
            labelStatus.text = @"Record Failed";
        }
    }

    - (IBAction)stopRecording
    {
        recordState.recording = false;

        AudioQueueStop(recordState.queue, true);
        for(int i = 0; i < NUM_BUFFERS; i++)
        {
            AudioQueueFreeBuffer(recordState.queue,
                                 recordState.buffers[i]);
        }

        AudioQueueDispose(recordState.queue, true);
        AudioFileClose(recordState.audioFile);
        labelStatus.text = @"Idle";
    }

    - (IBAction)startPlayback
    {
        playState.currentPacket = 0;

        [self setupAudioFormat:&playState.dataFormat];

        OSStatus status;

        // I get here an error
        // Use of undeclared identifier 'fsRdPerm'
        // How to fix that?
        status = AudioFileOpenURL(fileURL, fsRdPerm, kAudioFileAIFFType, &playState.audioFile);
        if(status == 0)
        {
            status = AudioQueueNewOutput(
                &playState.dataFormat,
                AudioOutputCallback,
                &playState,
                CFRunLoopGetCurrent(),
                kCFRunLoopCommonModes,
                0,
                &playState.queue);

            if(status == 0)
            {
                playState.playing = true;
                for(int i = 0; i < NUM_BUFFERS && playState.playing; i++)
                {
                    if(playState.playing)
                    {
                        AudioQueueAllocateBuffer(playState.queue, 16000, &playState.buffers[i]);
                        AudioOutputCallback(&playState, playState.queue, playState.buffers[i]);
                    }
                }

                if(playState.playing)
                {
                    status = AudioQueueStart(playState.queue, NULL);
                    if(status == 0)
                    {
                        labelStatus.text = @"Playing";
                    }
                }
            }
        }

        if(status != 0)
        {
            [self stopPlayback];
            labelStatus.text = @"Play failed";
        }
    }

    - (void)stopPlayback
    {
        playState.playing = false;

        for(int i = 0; i < NUM_BUFFERS; i++)
        {
            AudioQueueFreeBuffer(playState.queue, playState.buffers[i]);
        }

        AudioQueueDispose(playState.queue, true);
        AudioFileClose(playState.audioFile);
    }

    - (BOOL)getFilename:(char*)buffer maxLenth:(int)maxBufferLength
    {
        NSArray *paths = NSSearchPathForDirectoriesInDomains(NSDocumentDirectory,
                                                             NSUserDomainMask, YES);
        NSString* docDir = [paths objectAtIndex:0];
        NSString* file = [docDir stringByAppendingString:@"/recording.aif"];
        return [file getCString:buffer maxLength:maxBufferLength encoding:NSUTF8StringEncoding];
    }

    - (void)viewDidLoad
    {
        [super viewDidLoad];
        // Do any additional setup after loading the view, typically from a nib.
    }

    - (void)didReceiveMemoryWarning
    {
        [super didReceiveMemoryWarning];
        // Dispose of any resources that can be recreated.
    }

    @end

I don't know how to fix this so that I can use my project. If I comment out the function startPlayback, I get the following error:

    Ld /Users/NAME/Library/Developer/Xcode/DerivedData/recorder_test2-gehymgoneospsldgfpxnbjdapebu/Build/Products/Debug-iphonesimulator/recorder_test2.app/recorder_test2 normal i386
        cd /Users/NAME/Desktop/recorder_test2
        setenv IPHONEOS_DEPLOYMENT_TARGET 6.0
        setenv PATH "/Applications/Xcode.app/Contents/Developer/Platforms/iPhoneSimulator.platform/Developer/usr/bin:/Applications/Xcode.app/Contents/Developer/usr/bin:/usr/bin:/bin:/usr/
        /Applications/Xcode.app/Contents/Developer/Toolchains/XcodeDefault.xctoolchain/usr/bin/clang -arch i386 -isysroot -filelist /Users/NAME/Library/Developer/Xcode/DerivedData/recorder_test2-gehymgoneospsldgfpxnbjdapebu/Build/Intermediates/recorder_test2.build/Debug-iphonesimulator/recorder_test2.build/Objects-normal/i386/recorder_test2.LinkFileList -Xlinker -objc_abi_version -Xlinker 2 -fobjc-arc -fobjc-link-runtime -Xlinker -no_implicit_dylibs -mios-simulator-version-min=6.0 -framework AudioToolbox -framework AudioUnit -framework -framework UIKit -framework Foundation -framework CoreGraphics -framework/User/NAME/Library/Developer/Xcode/DerivedData/recorder_test2-gehymgoneospsldgfpxnbjdapebu/Build/Products/Debug-iphonesimulator/recorder_test2.app/recorder_test2

    ld: framework not found AudioUnit
    clang: error: linker command failed with exit code 1 (use -v to see invocation)

Please try these two source files yourself and help me.

2 Answers

Stack Overflow user

Answered 2012-10-18 12:31:00

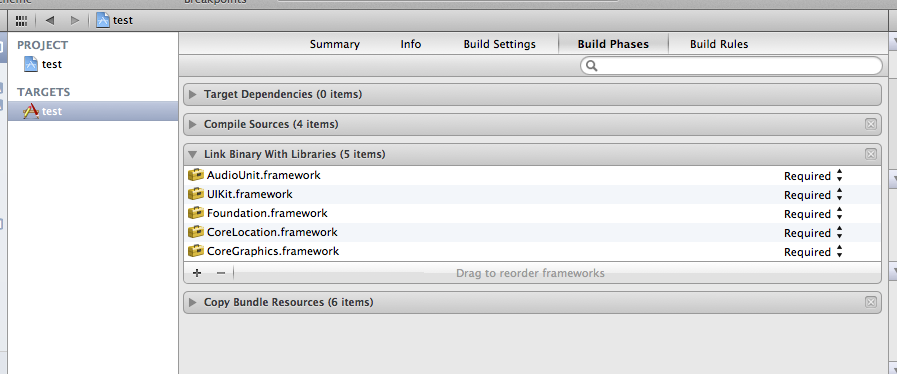

Just add AudioUnit in the project settings and make sure the path is correct.

Stack Overflow user

Answered 2012-12-03 20:22:19

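Remove AudioUnit.framework from the project and replace fsRdPerm with kAudioFileReadPermission.

The long story:

Although I couldn't find any evidence after googling for a while, I'm almost certain that fsRdPerm no longer exists in any of the audio frameworks in iOS 6. I searched for it in the iOS 6 simulator SDK, and it appears only in CarbonCore.framework, which is a legacy framework and therefore old: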

    s2622:Frameworks mac$ pwd
    /Applications/Xcode.app/Contents/Developer/Platforms/iPhoneSimulator.platform/Developer/SDKs/iPhoneSimulator6.0.sdk/System/Library/Frameworks
    s2622:Frameworks mac$ grep -sr fsRdPerm .
    ./CoreServices.framework/Frameworks/CarbonCore.framework/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Frameworks/CarbonCore.framework/Versions/A/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Frameworks/CarbonCore.framework/Versions/Current/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/A/Frameworks/CarbonCore.framework/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/A/Frameworks/CarbonCore.framework/Versions/A/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/A/Frameworks/CarbonCore.framework/Versions/Current/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/Current/Frameworks/CarbonCore.framework/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/Current/Frameworks/CarbonCore.framework/Versions/A/Headers/Files.h: fsRdPerm = 0x01,
    ./CoreServices.framework/Versions/Current/Frameworks/CarbonCore.framework/Versions/Current/Headers/Files.h: fsRdPerm = 0x01,

I found forum posts recommending kAudioFileReadPermission instead of fsRdPerm. This works, and in fact the documentation for kAudioFileReadPermission says it is one of the "flags for use with the AudioFileOpenURL and AudioFileOpen functions". Read more under Audio File Permission Flags.
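As a rough illustration of why the swap should not change behavior: the Carbon header reports fsRdPerm = 0x01, and kAudioFileReadPermission has the same value in AudioFile.h. The sketch below uses stand-in constants (the `demo_` names and both helper functions are made up for this example so that it compiles without Apple's SDK). In the real project the only change needed is the one-line call shown in the comment.

```c
#include <assert.h>

/* Stand-ins only: demo_fsRdPerm mirrors the value in CarbonCore's Files.h,
   and the demo_kAudioFile* values mirror the permission flags in
   AudioToolbox's AudioFile.h. */
enum { demo_fsRdPerm = 0x01 };

enum {
    demo_kAudioFileReadPermission      = 0x01,
    demo_kAudioFileWritePermission     = 0x02,
    demo_kAudioFileReadWritePermission = 0x03
};

/* Returns 1 when both flags carry the same numeric value, otherwise 0. */
int same_permission_value(int legacy_flag, int audio_file_flag)
{
    return legacy_flag == audio_file_flag;
}

/* Hypothetical self-check: the read flag is a drop-in for the Carbon one,
   and read-write is simply read OR write. */
void check_swap_is_value_preserving(void)
{
    assert(same_permission_value(demo_fsRdPerm, demo_kAudioFileReadPermission));
    assert(demo_kAudioFileReadWritePermission ==
           (demo_kAudioFileReadPermission | demo_kAudioFileWritePermission));
}

/* In ViewController.m, the corresponding fix in startPlayback is:

   status = AudioFileOpenURL(fileURL, kAudioFileReadPermission,
                             kAudioFileAIFFType, &playState.audioFile);
*/
```

Because the numeric value is the same, only the identifier changes, and the playback code no longer depends on a Carbon header that is not part of the iOS audio frameworks.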

https://stackoverflow.com/questions/12954503
