Schema Registry in Kafka cannot retrieve the cluster ID

Asked 2021-08-05 16:30:58

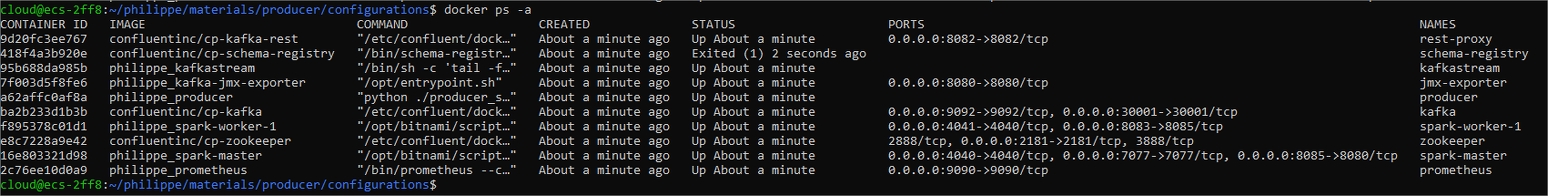

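I am trying to set up a Kafka environment based on the Confluent images. After `docker-compose up`, all my containers are up and running, but after about a minute the Schema Registry fails.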

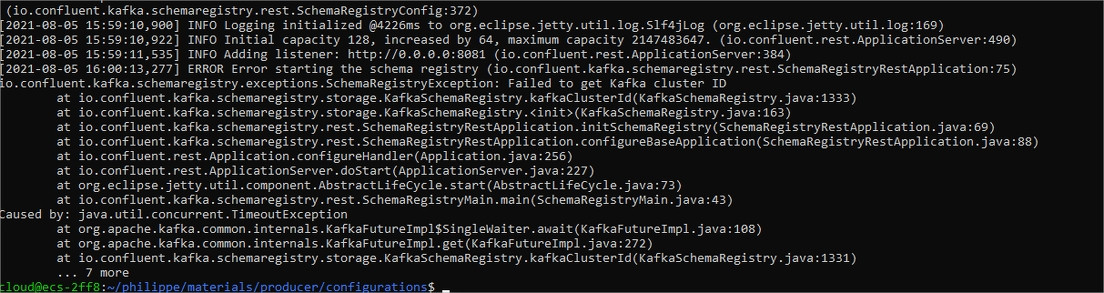

In the Schema Registry logs I found an error message saying that it could not get the Kafka cluster ID.

I checked the Kafka logs and found this:

"2021-08-05 15:59:17,074 INFO ID = ddchQ8odQM-hF67TJO97Ng (kafka.server.KafkaServer)"

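So the cluster ID was created correctly. The Schema Registry seems unable to retrieve it, but I really do not understand what is happening here. I think it is a network issue, and I have tried many things to fix it, without success.

Here is my docker-compose file: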

    services:
      zookeeper:
        image: confluentinc/cp-zookeeper
        hostname: zookeeper
        container_name: zookeeper
        # networks:
        #   - my-network
        ports:
          - 2181:2181
        environment:
          ZOOKEEPER_CLIENT_PORT: 2181
          ZOOKEEPER_TICK_TIME: 2000
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "1024M"

      kafka:
        image: confluentinc/cp-kafka
        container_name: kafka
        depends_on:
          - zookeeper
        # networks:
        #   - my-network
        ports:
          - 9092:9092
          - 30001:30001
        environment:
          # KAFKA_CREATE_TOPICS: toto
          KAFKA_BROKER_ID: 1
          KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181
          KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
          KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
          KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka:29092,PLAINTEXT_HOST://localhost:9092
          KAFKA_AUTO_CREATE_TOPICS_ENABLE: "true"
          KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1
          KAFKA_GROUP_INITIAL_REBALANCE_DELAY_MS: 100
          KAFKA_JMX_PORT: 30001
          KAFKA_JMX_HOSTNAME: kafka
          KAFKA_CONFLUENT_SCHEMA_REGISTRY_URL: http://schema-registry:8081
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "2048M"

      kafka-jmx-exporter:
        build: ./materials/tools/prometheus-jmx-exporter
        container_name: jmx-exporter
        ports:
          - 8080:8080
        links:
          - kafka
        # networks:
        #   - my-network
        environment:
          JMX_PORT: 30001
          JMX_HOST: kafka
          HTTP_PORT: 8080
          JMX_EXPORTER_CONFIG_FILE: kafka.yml
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "1024M"

      prometheus:
        build: ./materials/tools/prometheus
        container_name: prometheus
        # networks:
        #   - my-network
        ports:
          - 9090:9090

      spark-master:
        container_name: spark-master
        build: ./materials/spark
        user: root
        # networks:
        #   - my-network
        volumes:
          - ./materials/spark/connectors:/connectors
          - ./materials/spark/scripts:/scripts/
          - ./materials/consumer:/scripts/consumer
          - ./secrets:/scripts/secrets
          - ./materials/spark/jars_dir:/opt/bitnami/spark/.ivy2:z
        ports:
          - 8085:8080
          - 7077:7077
          - 4040:4040
        environment:
          - INIT_DAEMON_STEP=setup_spark
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "1024M"
        # - SPARK_MODE=master
        # - SPARK_RPC_AUTHENTICATION_ENABLED=no
        # - SPARK_RPC_ENCRYPTION_ENABLED=no
        # - SPARK_LOCAL_STORAGE_ENCRYPTION_ENABLED=no
        # - SPARK_SSL_ENABLED=no

      spark-worker-1:
        container_name: spark-worker-1
        build: ./materials/spark
        user: root
        # networks:
        #   - my-network
        depends_on:
          - spark-master
        ports:
          - 8083:8085
          - 4041:4040
        environment:
          - "SPARK_MASTER=spark://spark-master:7077"
          - SPARK_MODE=worker
          - SPARK_MASTER_URL=spark://spark-master:7077
          - SPARK_WORKER_MEMORY=1G
          - SPARK_WORKER_CORES=1
          - SPARK_RPC_AUTHENTICATION_ENABLED=no
          - SPARK_RPC_ENCRYPTION_ENABLED=no
          - SPARK_LOCAL_STORAGE_ENCRYPTION_ENABLED=no
          - SPARK_SSL_ENABLED=no
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "2048M"
            reservations:
              cpus: "1.00"
              memory: "1024M"

      schema-registry:
        image: confluentinc/cp-schema-registry
        hostname: schema-registry
        container_name: schema-registry
        #command: /bin/sh -c 'tail -f /dev/null'
        command: /bin/schema-registry-start /etc/schema-registry/schema-registry.properties
        depends_on:
          - kafka
        ports:
          - 8081:8081
        # networks:
        #   - my-network
        environment:
          # SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: kafka:29092
          SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: kafka-1:9092
          SCHEMA_REGISTRY_HOST_NAME: schema-registry
          SCHEMA_REGISTRY_LISTENERS: http://0.0.0.0:8081
          SCHEMA_REGISTRY_DEBUG: "true"
          SCHEMA_REGISTRY_KAFKASTORE.INIT.TIMEOUT.MS: 120000
        deploy:
          resources:
            limits:
              cpus: "1.00"
              memory: "2048M"

      producer:
        build: ./materials/producer
        container_name: producer
        depends_on:
          - kafka
        # networks:
        #   - my-network
        environment:
          KAFKA_BROKER_URL: kafka-1:9092
          TRANSACTIONS_PER_SECOND: 30

      kafkastream:
        build: ./materials/kafkastream
        container_name: kafkastream
        depends_on:
          - kafka
        # networks:
        #   - my-network
        environment:
          KAFKA_BROKER_URL: kafka-1:9092
          TRANSACTIONS_PER_SECOND: 5

      rest-proxy:
        image: confluentinc/cp-kafka-rest
        depends_on:
          - kafka
          - schema-registry
        # networks:
        #   - my-network
        ports:
          - 8082:8082
        hostname: rest-proxy
        container_name: rest-proxy
        #command: /bin/kafka-rest-start
        environment:
          KAFKA_REST_HOST_NAME: rest-proxy
          KAFKA_REST_BOOTSTRAP_SERVERS: kafka:29092
          KAFKA_REST_LISTENERS: http://0.0.0.0:8082
          KAFKA_REST_SCHEMA_REGISTRY_URL: http://schema-registry:8081

    #networks:
    #  my-network:
    #    external: false

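    # my-network:

My last attempt was to remove the networks from the docker-compose file entirely, which is why every network-related line is commented out here.

Any hint or idea would be appreciated.

Thanks.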

2 Answers

Stack Overflow user

Answered 2021-08-06 12:59:29

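I finally found the solution. My main mistake was adding the following line to my docker-compose.yml file: `command: /bin/schema-registry-start /etc/schema-registry/schema-registry.properties`. With that line, the Schema Registry started from the default configuration in the schema-registry.properties file (which of course does not fit my local installation) and ignored all the environment parameters passed in the docker-compose.yml file.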
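As a rough sketch of that fix, the `schema-registry` service might look like the fragment below once the `command:` override is removed, so that the image's default startup builds its configuration from the `SCHEMA_REGISTRY_*` environment variables. Two details are assumptions rather than part of this answer: the bootstrap address `kafka:29092` is taken from the line that was commented out in the question, and the timeout variable is spelled with underscores instead of dots, following the usual Confluent naming convention for environment variables.

```yaml
services:
  schema-registry:
    image: confluentinc/cp-schema-registry
    hostname: schema-registry
    container_name: schema-registry
    # No `command:` override here: the image's default startup is left in
    # place so the SCHEMA_REGISTRY_* variables below are actually applied.
    depends_on:
      - kafka
    ports:
      - 8081:8081
    environment:
      # Assumption: the broker's internal listener, as in the line that was
      # commented out in the question.
      SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: kafka:29092
      SCHEMA_REGISTRY_HOST_NAME: schema-registry
      SCHEMA_REGISTRY_LISTENERS: http://0.0.0.0:8081
      SCHEMA_REGISTRY_DEBUG: "true"
      # Assumption: underscores instead of dots in the variable name.
      SCHEMA_REGISTRY_KAFKASTORE_INIT_TIMEOUT_MS: 120000
```

Only the `schema-registry` service is shown; the other services from the question would stay as they are.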

Stack Overflow user

Answered 2021-08-05 21:36:16

`PLAINTEXT_HOST://localhost:9092`: change this to `kafka-1`, or use `kafka:29092`.
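One way to read this suggestion, sketched under assumptions: the broker in the question advertises `kafka:29092` to containers on the Compose network and `localhost:9092` to clients on the Docker host, so services inside the network would point at `kafka:29092` instead of `kafka-1:9092`, a hostname that no service in the posted file defines. The fragment shows only the affected keys. How the custom `producer` and `kafkastream` images consume `KAFKA_BROKER_URL` is not shown in the question, so those two entries are illustrative.

```yaml
services:
  kafka:
    environment:
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
      # PLAINTEXT is advertised to containers on the Compose network;
      # PLAINTEXT_HOST is advertised to clients running on the Docker host.
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://kafka:29092,PLAINTEXT_HOST://localhost:9092

  schema-registry:
    environment:
      # Container-to-container traffic uses the internal listener.
      SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: kafka:29092

  producer:
    environment:
      # Assumption: the image passes this value to its Kafka client as-is.
      KAFKA_BROKER_URL: kafka:29092

  kafkastream:
    environment:
      # Assumption: same as for the producer.
      KAFKA_BROKER_URL: kafka:29092
```

The `rest-proxy` service in the question already uses `kafka:29092`, which is consistent with this reading.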

Original page content provided by Stack Overflow. Translation support provided by the Tencent Cloud Xiaowei IT-domain engine.

Original link:

https://stackoverflow.com/questions/68670354
