How do I create a list with the y-axis labels of a TreeExplainer SHAP chart?

Asked 2021-06-06 01:21:48

Hello,

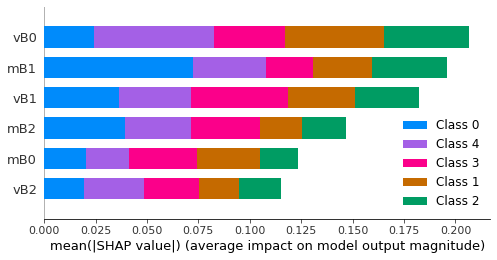

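I was able to generate a chart that sorts my variables by importance along the y-axis. Visualizing this as a plot is useful, but now I need to extract the list of variables in the same order as they appear on the y-axis. Does anyone know how to do this? The picture above is an example.

Sorry, I was not able to add a minimal reproducible example. I don't know how to paste Jupyter Notebook cells here, so below is the code I shared via GitHub.

In this example, the list would be "vB0, mB1, vB1, mB2, mB0, vB2".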

    ## SHAP GRAPHIC
    import pandas as pd
    import seaborn as sns
    import numpy as np  # for sample data
    import matplotlib.pyplot as plt
    from sklearn.model_selection import train_test_split
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.model_selection import cross_val_score
    from sklearn.inspection import permutation_importance
    import shap

    # set seed for reproducibility
    np.random.seed(1)

    # create arrays of random sample data
    cl = np.random.choice(range(1, 6), size=(100, 1))
    d = np.random.random_sample(size=(100, 6))

    # combine the two arrays
    data = np.concatenate([cl, d], axis=1)

    # create a dataframe
    data = pd.DataFrame(data, columns=['classe', 'mB0', 'mB1', 'mB2', 'vB0', 'vB1', 'vB2'])

    # create an 'id' column with sequential numbering
    # source: https://pythonexamples.org/pandas-set-column-as-index/#:~:text=Pandas%20%E2%80%93%20Set%20Column%20as%20Index&text=To%20set%20a%20column%20as,index%2C%20to%20set_index()%20method.
    data['id'] = data.index

    # specify predictors (X) and target variable (y)
    X = data.drop(['classe', 'id'], axis=1)  # predictors
    y = data['classe']  # target variable only

    # train/test split
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1, stratify=y)
    X_test.shape, y_test.shape

    ## --------------------------- Random Forests Classification ---------------------------
    # create the RF classifier with 100 trees
    rf = RandomForestClassifier(n_estimators=100)

    # fit the classifier to the training data
    rf.fit(X_train, y_train)

    # get predictions
    y_rf_pred = rf.predict(X_test)

    ## Finding optimal parameters for this model
    from sklearn.model_selection import RandomizedSearchCV

    # number of trees in the random forest
    n_estimators = [int(x) for x in np.linspace(start=100, stop=500, num=10)]

    # number of features considered at every split
    # (the original grid was ['auto', 'sqrt']; 'auto' was removed in scikit-learn 1.3 and
    # meant 'sqrt' for classifiers, so 'log2' is substituted here as a second option)
    max_features = ['sqrt', 'log2']

    # max depth
    max_depth = [int(x) for x in np.linspace(1, 50, num=11)]
    max_depth.append(None)

    # create random grid
    random_grid = {
        'n_estimators': n_estimators,
        'max_features': max_features,
        'max_depth': max_depth,
    }

    # random search of parameters
    rfc_random = RandomizedSearchCV(estimator=rf, param_distributions=random_grid, n_iter=100, cv=10,
                                    verbose=2, random_state=42, n_jobs=-1, return_train_score=True)

    # fit the model
    rfc_random.fit(X_train, y_train)
    cross_val = rfc_random.cv_results_

    ### Running the model with optimized hyperparameters
    rf_tuned = RandomForestClassifier(n_estimators=144, max_depth=10, max_features='sqrt')
    rf_tuned.fit(X_train, y_train)
    y_pred_rftuned = rf_tuned.predict(X_test)
    rf_cv_score = cross_val_score(rf_tuned, X, y, cv=10)

    # # Summarized classification report
    # print("=== Classification Report ===")
    # from sklearn.metrics import classification_report, confusion_matrix, ConfusionMatrixDisplay
    # print(classification_report(y_test, y_pred_rftuned, digits=4))
    # print('\n')
    # print("=== Confusion Matrix ===")
    # print(confusion_matrix(y_test, y_pred_rftuned))
    # cm = confusion_matrix(y_test, y_pred_rftuned)
    # disp = ConfusionMatrixDisplay(confusion_matrix=cm, display_labels=rf_tuned.classes_)

    # ## Save the tuned model to an external file so it can be reused without retraining
    # from joblib import dump, load
    # dump(rf_tuned, 'test3_rf_classifier.joblib')

    ## Feature Importance Computed with SHAP Values
    explainer = shap.TreeExplainer(rf_tuned)
    shap_values = explainer.shap_values(X_test, approximate=False, check_additivity=False)
    shap.summary_plot(shap_values, X_test, max_display=1000)

    # print the columns ordered according to their SHAP values
    # (assumes shap_values is a list with one (n_samples, n_features) array per class)
    sv = np.array(shap_values)
    sv_mean = np.abs(sv).mean(1).sum(0)  # mean |SHAP| over samples, summed over classes
    order = np.argsort(sv_mean)[::-1]    # most important first
    ordered_cols = X_test.columns[order]
    print(ordered_cols)

1 Answer

Stack Overflow user

Accepted answer

Answered 2021-06-07 08:05:10

TL;DR

    sv = np.array(shap_values)
    sv_mean = np.abs(sv).mean(1).sum(0)
    order = np.argsort(sv_mean)[::-1]
    ordered_cols = X_test.columns[order]
    print(ordered_cols)

Append the code block above to the notebook you linked and you will get what you want.
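To make the two reductions in that snippet easier to follow, here is a minimal sketch on a tiny hand-made array. It assumes the layout the snippet relies on: a list with one `(n_samples, n_features)` array per class. The numbers and the feature names `f0`, `f1`, `f2` are made up for illustration and are not from the question.

```python
import numpy as np

# Hypothetical SHAP values: 2 classes, 2 samples, 3 features (made-up numbers).
shap_values = [
    np.array([[0.1, -0.4, 0.0],
              [-0.3, 0.2, 0.1]]),   # class 0
    np.array([[-0.1, 0.4, 0.0],
              [0.3, -0.2, -0.1]]),  # class 1
]
feature_names = np.array(["f0", "f1", "f2"])  # illustrative names only

sv = np.array(shap_values)            # shape (n_classes, n_samples, n_features)
per_class = np.abs(sv).mean(axis=1)   # mean |SHAP| over samples -> (n_classes, n_features)
sv_mean = per_class.sum(axis=0)       # summed over classes -> (n_features,)
order = np.argsort(sv_mean)[::-1]     # feature indices, most important first
ordered_names = feature_names[order].tolist()

print(sv.shape, per_class.shape, sv_mean.shape)
print(ordered_names)
```

So `.mean(1)` averages over the samples and `.sum(0)` adds up the per-class contributions, leaving one score per feature.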

Full answer

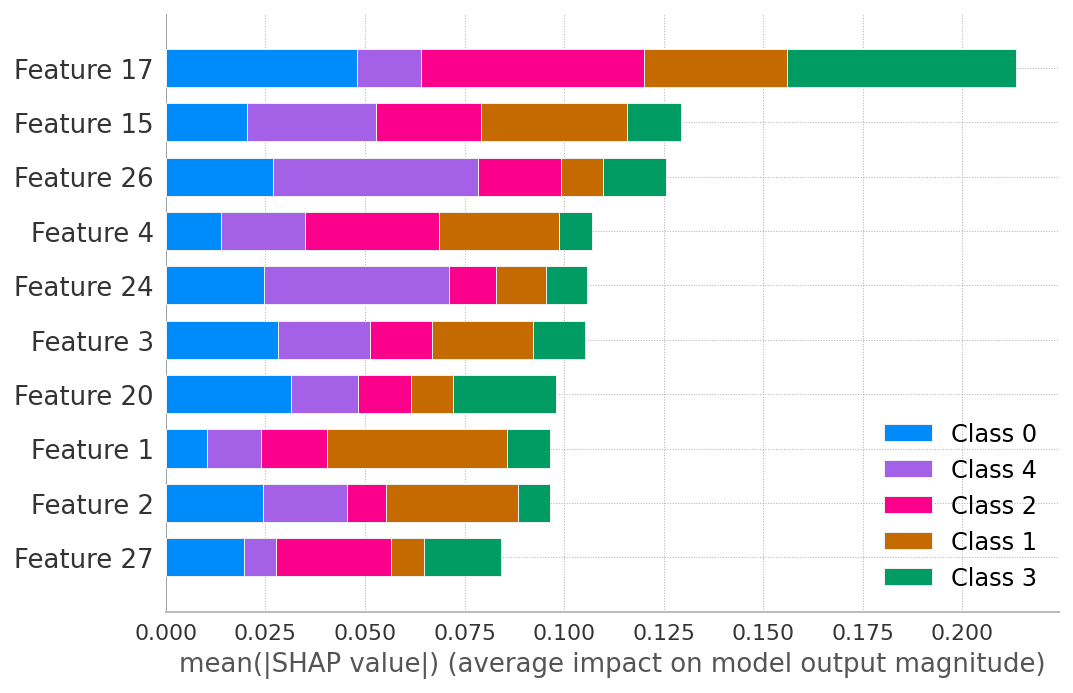

summary_plot displays the columns sorted by the mean of the absolute SHAP values. You can't extract them from the plot, but you can compute them.
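One caveat when computing the order yourself: which axis holds samples, features, and classes depends on what `shap_values` returns. This answer assumes a list with one `(n_samples, n_features)` array per class; as far as I know, more recent SHAP releases return a single `(n_samples, n_features, n_classes)` array for multi-class tree models, so it is worth checking the shape first. The sketch below handles both layouts under that assumption. `mean_abs_shap_order` is a hypothetical helper name, not part of SHAP, and the data is random.

```python
import numpy as np

def mean_abs_shap_order(shap_values, n_features):
    """Return feature indices sorted by summed mean |SHAP|, most important first.

    Hypothetical helper, not part of SHAP. Accepts either
    - a list of per-class arrays, each (n_samples, n_features), or
    - one array shaped (n_samples, n_features) or (n_samples, n_features, n_classes).
    """
    if isinstance(shap_values, list):
        # Put the class axis last: (n_samples, n_features, n_classes)
        sv = np.stack(shap_values, axis=-1)
    else:
        sv = np.asarray(shap_values)
        if sv.ndim == 2:
            sv = sv[:, :, np.newaxis]  # treat as a single output
    if sv.ndim != 3 or sv.shape[1] != n_features:
        raise ValueError(f"unexpected SHAP value shape: {sv.shape}")
    # Mean |SHAP| over samples, then summed over classes -> (n_features,)
    importance = np.abs(sv).mean(axis=0).sum(axis=1)
    return np.argsort(importance)[::-1]

# Random stand-in data: 50 samples, 4 features, 3 classes.
rng = np.random.default_rng(0)
as_array = rng.normal(size=(50, 4, 3))            # single-array layout
as_list = [as_array[:, :, k] for k in range(3)]   # list-per-class layout

order_from_array = mean_abs_shap_order(as_array, n_features=4)
order_from_list = mean_abs_shap_order(as_list, n_features=4)
print(order_from_array, order_from_list)  # both layouts give the same order
```

Passing `n_features` explicitly is a deliberate guard: if the axes are not where the helper expects them, it raises instead of silently ranking samples or classes.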

Fully reproducible example:

    import numpy as np
    from sklearn.datasets import make_classification
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.model_selection import train_test_split
    from shap import TreeExplainer, summary_plot

    X, y = make_classification(n_samples=1000, n_features=30,
                               n_classes=5, n_informative=10, random_state=42)
    X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=42)

    rf_clf = RandomForestClassifier(random_state=42)
    rf_clf.fit(X_train, y_train)

    explainer = TreeExplainer(rf_clf)
    shap_values = explainer.shap_values(X_test)
    summary_plot(shap_values, max_display=10)

    # same ordering as the plot: mean |SHAP| over samples, summed over classes
    sv = np.array(shap_values)
    sv_mean = np.abs(sv).mean(1).sum(0)
    order = np.argsort(sv_mean)[::-1]
    print(order)

Output:

    array([17, 15, 26, 4, 24, 3, 20, 1, 2, 27, 10, 12, 22, 11, 21, 23, 9,
           28, 19, 7, 16, 8, 5, 14, 25, 0, 13, 6, 29, 18])
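In this example `X` is a plain NumPy array without column names, so `order` holds column indices rather than labels. Here is a minimal sketch of turning those indices into the labels shown on the y-axis. It assumes `summary_plot` falls back to names of the form `Feature <i>` when no feature names are passed (worth verifying against your SHAP version) and keeps only the first 10 entries to match `max_display=10`.

```python
# Feature indices copied from the output above, most important first.
order = [17, 15, 26, 4, 24, 3, 20, 1, 2, 27, 10, 12, 22, 11, 21, 23, 9,
         28, 19, 7, 16, 8, 5, 14, 25, 0, 13, 6, 29, 18]

max_display = 10  # same value as passed to summary_plot

# Assumed fallback naming for unnamed columns; with a DataFrame, use list(X.columns) instead.
feature_names = [f"Feature {i}" for i in range(len(order))]

# Labels in top-to-bottom order, limited to what the plot displays.
top_labels = [feature_names[i] for i in order[:max_display]]
print(top_labels)
```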

Original link:

https://stackoverflow.com/questions/67855111
