Using TensorBoard to monitor training in real time and visualize the model architecture


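Asked on 2019-09-26 10:52:23

I am learning to use TensorBoard with TensorFlow 2.0.

In particular, I would like to monitor the learning curves in real time, and also to visually inspect and communicate the architecture of my model.

Below I provide the code for a reproducible example.

I have three questions: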


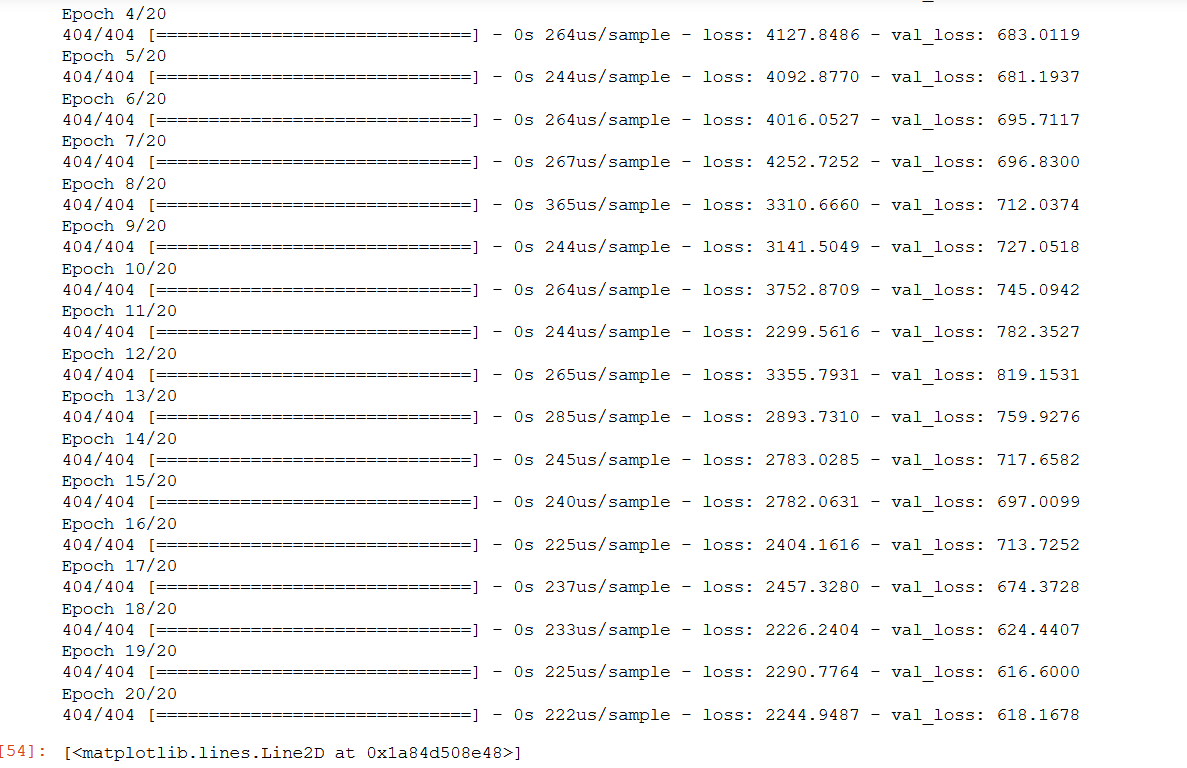

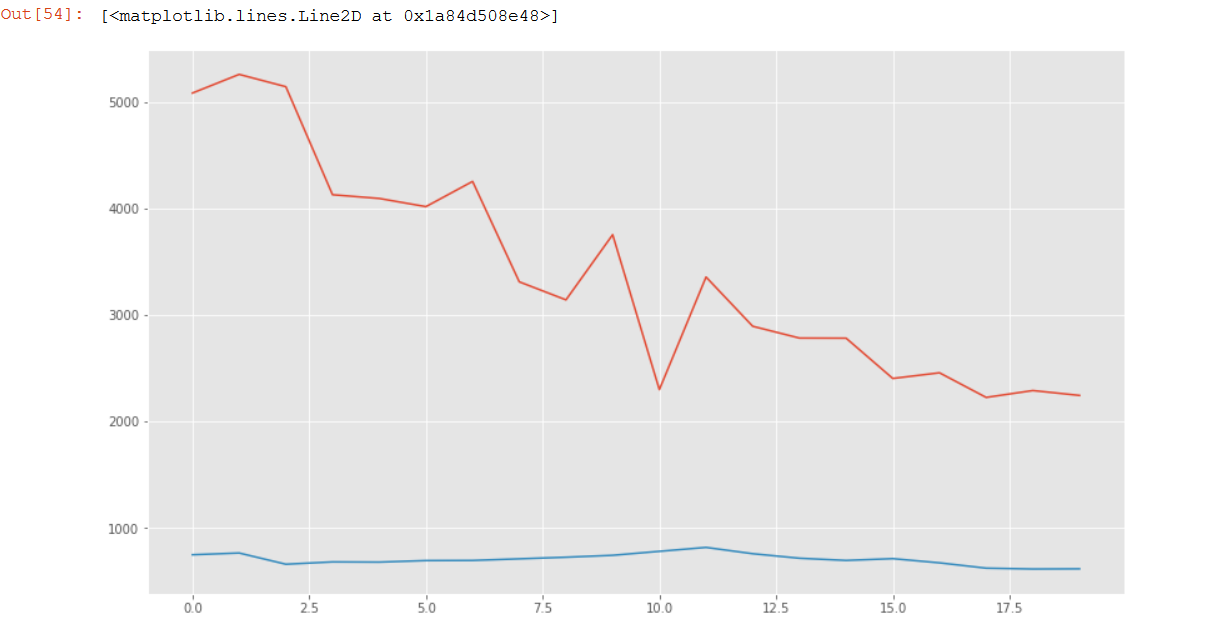

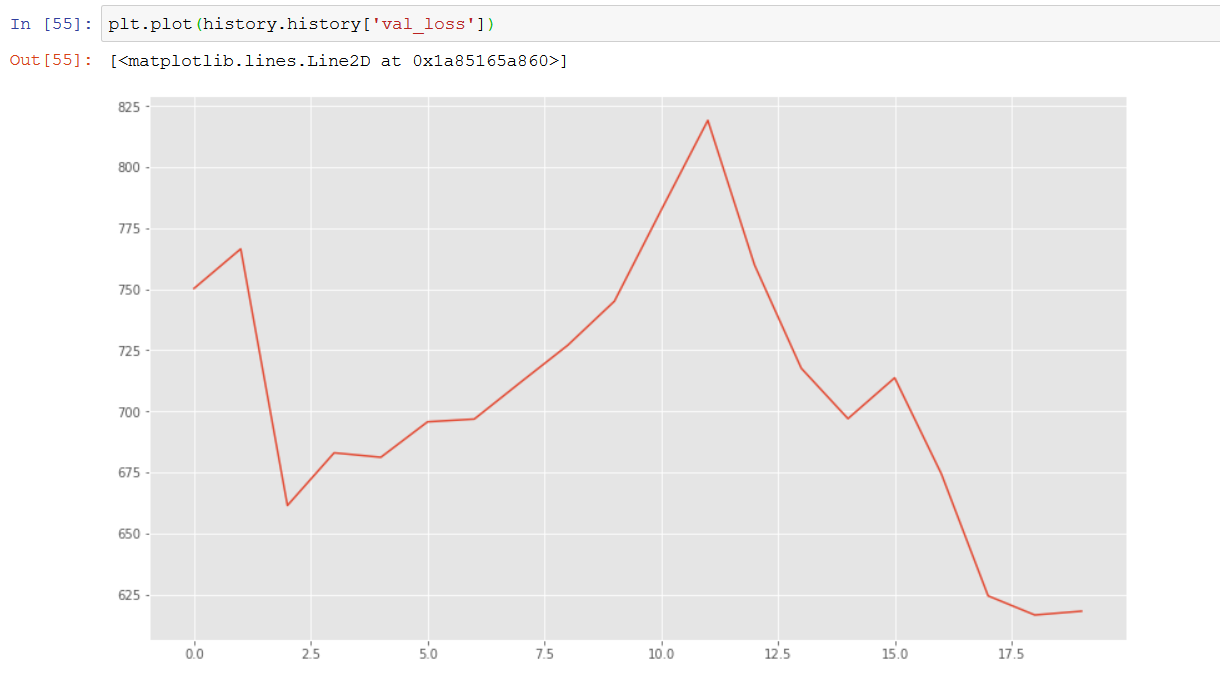

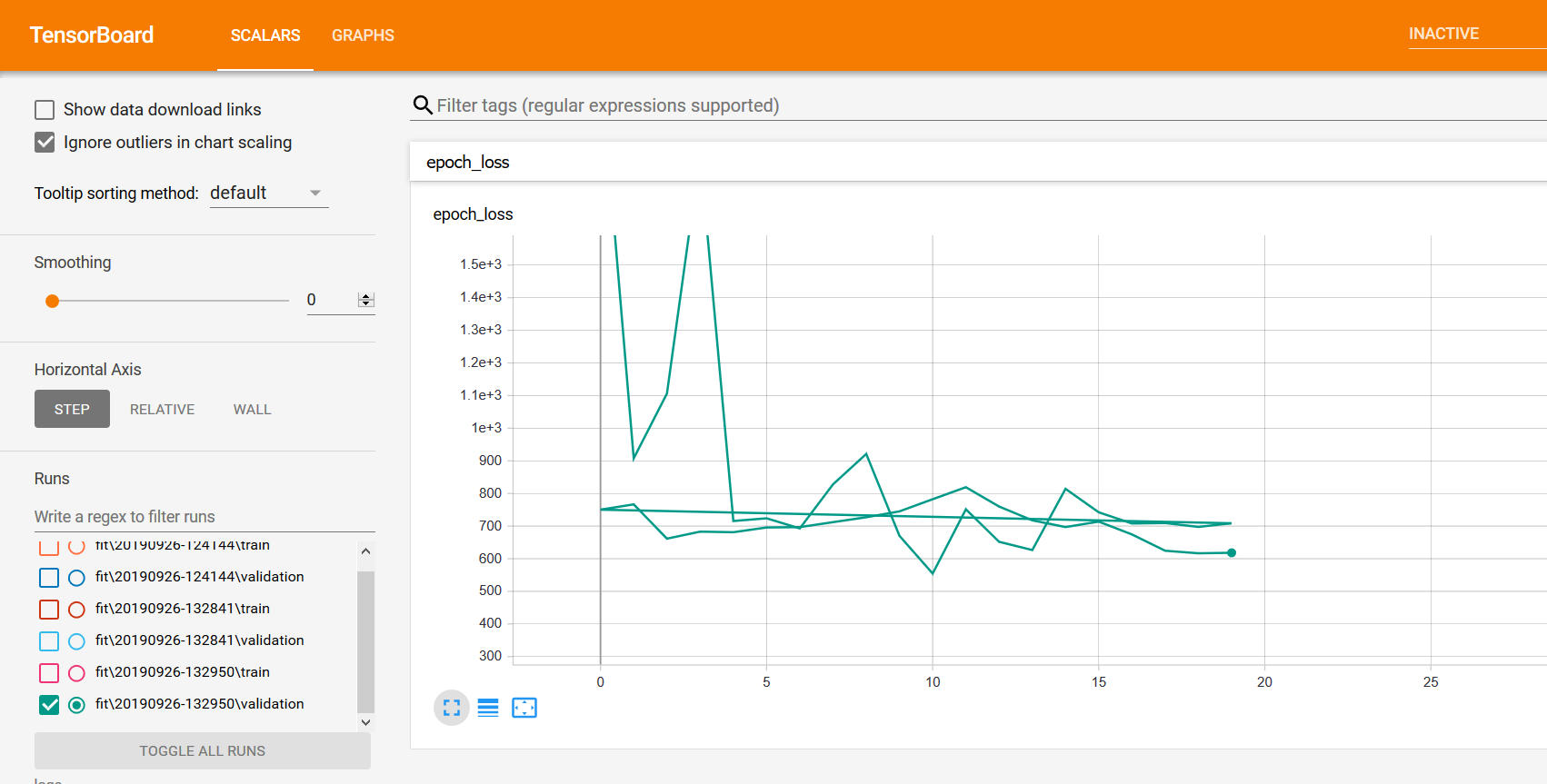

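- Although I get the learning curves once training is over, I don't know how to monitor them in real time.

- The learning curves I get from TensorBoard do not agree with the plot of history.history. In fact they look bizarre, and their reversals are hard to interpret.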


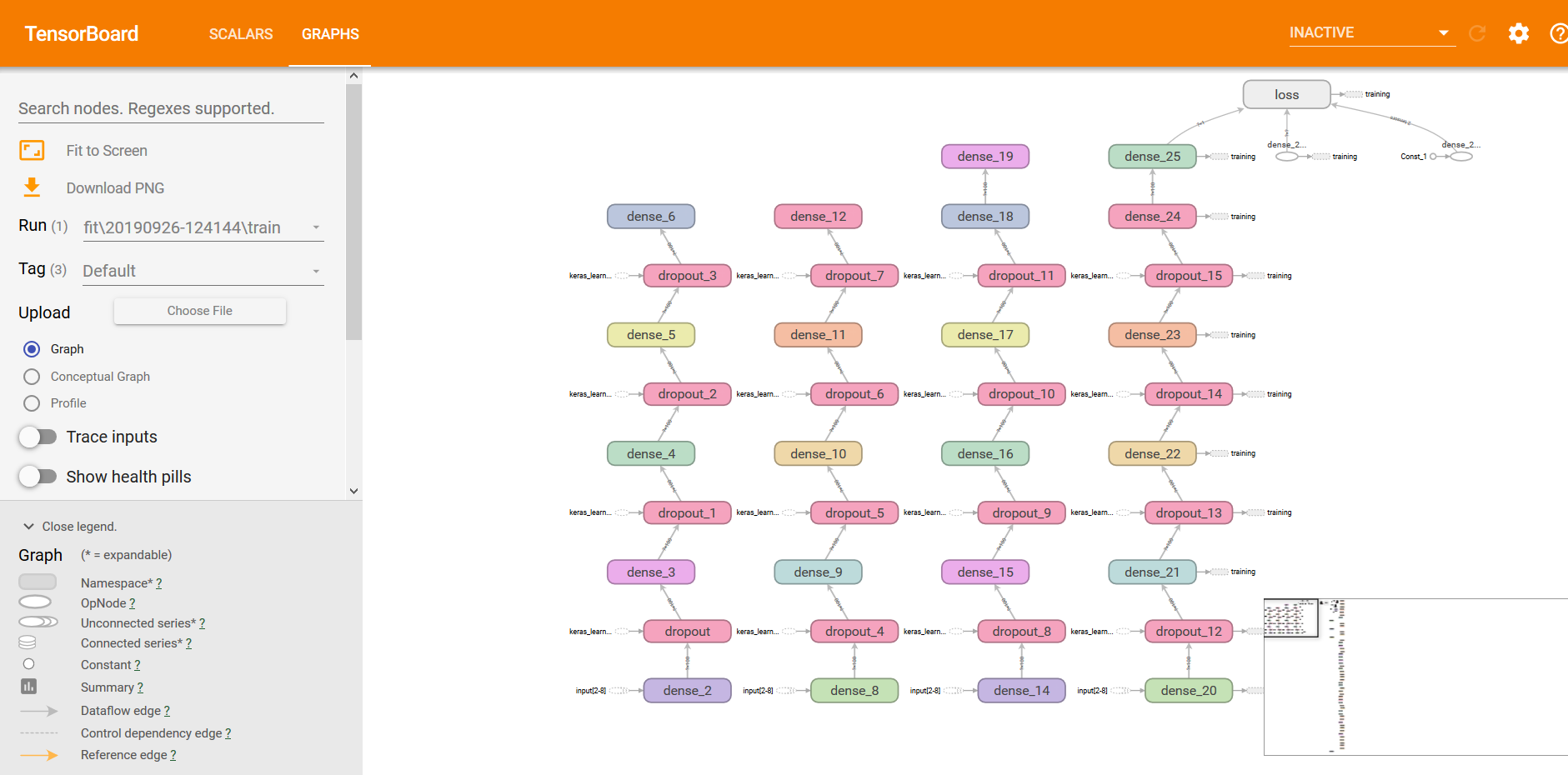

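- I cannot make sense of the graph. I trained a sequential model with 5 dense layers and dropout layers in between, but what TensorBoard shows me contains many more elements.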
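On the second point, one thing that can make TensorBoard's curves differ from a plot of the raw history.history values is the "Smoothing" slider in the Scalars dashboard, which draws a smoothed line over the raw points. The sketch below shows a debiased exponential moving average of that kind. Treating it as TensorBoard's exact formula, and 0.6 as its default weight, are assumptions that may not hold for every TensorBoard version; `smooth` is a hypothetical helper name. Moving the slider to 0 makes the comparison with history.history more direct.

```python
def smooth(values, weight=0.6):
    """Debiased exponential moving average, similar in spirit to TensorBoard's
    scalar smoothing (the exact TensorBoard behaviour is assumed, not copied)."""
    if not 0.0 <= weight < 1.0:
        raise ValueError("weight must be in [0, 1)")
    smoothed = []
    last = 0.0
    for step, value in enumerate(values, start=1):
        # Plain EMA started from 0 ...
        last = weight * last + (1.0 - weight) * value
        # ... divided by (1 - weight**step) to remove the early bias toward 0.
        smoothed.append(last / (1.0 - weight ** step))
    return smoothed


if __name__ == "__main__":
    raw = [10.0, 0.0, 10.0, 0.0]      # a noisy, zig-zagging loss curve
    print(smooth(raw, weight=0.0))    # weight 0 leaves the raw values unchanged
    print(smooth(raw, weight=0.6))    # the zig-zag is damped and lags behind
```

With a high weight the smoothed line reacts slowly, so a short spike in validation loss can look like a gradual trend.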

My code is as follows:

```python
from datetime import datetime
import os

import matplotlib.pyplot as plt
# Use tf.keras throughout, to match the TensorFlow 2.0 TensorBoard callback
from tensorflow import keras
from tensorflow.keras.datasets import boston_housing
from tensorflow.keras.layers import Dense, Dropout, Input
from tensorflow.keras.models import Model

(train_data, train_targets), (test_data, test_targets) = boston_housing.load_data()

# Five hidden Dense layers, each followed by Dropout (except the last), then a linear output
inputs = Input(shape=(train_data.shape[1],))
x1 = Dense(100, kernel_initializer='he_normal', activation='elu')(inputs)
x1a = Dropout(0.5)(x1)
x2 = Dense(100, kernel_initializer='he_normal', activation='elu')(x1a)
x2a = Dropout(0.5)(x2)
x3 = Dense(100, kernel_initializer='he_normal', activation='elu')(x2a)
x3a = Dropout(0.5)(x3)
x4 = Dense(100, kernel_initializer='he_normal', activation='elu')(x3a)
x4a = Dropout(0.5)(x4)
x5 = Dense(100, kernel_initializer='he_normal', activation='elu')(x4a)
predictions = Dense(1)(x5)

model = Model(inputs=inputs, outputs=predictions)
model.compile(optimizer='Adam', loss='mse')

# One timestamped sub-directory per run, e.g. logs/fit/20190926-105223 (portable path)
logdir = os.path.join("logs", "fit", datetime.now().strftime("%Y%m%d-%H%M%S"))
tensorboard_callback = keras.callbacks.TensorBoard(log_dir=logdir)

history = model.fit(train_data, train_targets,
                    batch_size=32,
                    epochs=20,
                    validation_data=(test_data, test_targets),
                    shuffle=True,
                    callbacks=[tensorboard_callback])

# Raw per-epoch training and validation loss
plt.plot(history.history['loss'], label='loss')
plt.plot(history.history['val_loss'], label='val_loss')
plt.legend()
plt.show()
```
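The callback above writes each training run into its own timestamped sub-directory of logs/fit. TensorBoard treats each directory containing event files as a separate run, so pointing it at the parent directory shows all runs together; in TF 2.x the Keras callback writes training and validation summaries into `train` and `validation` sub-directories of the run, which is why they appear as two runs. The sketch below illustrates that layout. `run_logdir` and `find_runs` are hypothetical helpers, the discovery logic is only a rough approximation of what TensorBoard does, and the `events.out.tfevents` prefix is the conventional event-file name.

```python
import os
import tempfile
from datetime import datetime


def run_logdir(root=os.path.join("logs", "fit"), now=None):
    """Hypothetical helper: a timestamped sub-directory of `root` for one run."""
    if now is None:
        now = datetime.now()
    return os.path.join(root, now.strftime("%Y%m%d-%H%M%S"))


def find_runs(root):
    """Hypothetical helper: relative paths of directories under `root` that hold
    event files (a rough approximation of how TensorBoard discovers runs)."""
    runs = []
    for dirpath, _dirnames, filenames in os.walk(root):
        if any(name.startswith("events.out.tfevents") for name in filenames):
            runs.append(os.path.relpath(dirpath, root))
    return sorted(runs)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        # Simulate the layout one fit() call may leave: <run>/train and <run>/validation.
        run = run_logdir(root, now=datetime(2019, 9, 26, 10, 52, 23))
        for split in ("train", "validation"):
            os.makedirs(os.path.join(run, split))
            # Empty placeholder named like an event file (not a real event file).
            open(os.path.join(run, split, "events.out.tfevents.0.localhost"), "w").close()
        print(find_runs(root))
```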

Answer 1

Stack Overflow user

Posted on 2020-12-16 11:11:12

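I think what you can do is start TensorBoard before calling .fit() on your model. If you are using IPython (Jupyter or Colab) and already have TensorBoard installed, here is how you can modify your code: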

```python
from datetime import datetime
import os

from tensorflow import keras
from tensorflow.keras.datasets import boston_housing
from tensorflow.keras.layers import Dense, Dropout, Input
from tensorflow.keras.models import Model

(train_data, train_targets), (test_data, test_targets) = boston_housing.load_data()

inputs = Input(shape=(train_data.shape[1],))
x1 = Dense(100, kernel_initializer='he_normal', activation='relu')(inputs)
x1a = Dropout(0.5)(x1)
x2 = Dense(100, kernel_initializer='he_normal', activation='relu')(x1a)
x2a = Dropout(0.5)(x2)
x3 = Dense(100, kernel_initializer='he_normal', activation='relu')(x2a)
x3a = Dropout(0.5)(x3)
x4 = Dense(100, kernel_initializer='he_normal', activation='relu')(x3a)
x4a = Dropout(0.5)(x4)
x5 = Dense(100, kernel_initializer='he_normal', activation='relu')(x4a)
predictions = Dense(1)(x5)

model = Model(inputs=inputs, outputs=predictions)
model.compile(optimizer='Adam', loss='mse')

# Each run gets its own timestamped directory under logs/fit
logdir = os.path.join("logs", "fit", datetime.now().strftime("%Y%m%d-%H%M%S"))
tensorboard_callback = keras.callbacks.TensorBoard(log_dir=logdir)
```

In another cell, you can run:

```python
# Magic command to use TensorBoard directly in IPython
%load_ext tensorboard
```

Then launch TensorBoard by running this in another cell:

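```python
# Launch TensorBoard on the log directory. In a notebook this usually displays
# TensorBoard in the cell output; it may be empty until training writes logs.
# --logdir takes a path: point it at the parent "logs/fit" so every timestamped
# run appears (`--logdir=logdir` would look for a folder literally named "logdir").
%tensorboard --logdir logs/fit
```

Finally, you can call .fit() on the model in another cell: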

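```python
import matplotlib.pyplot as plt

history = model.fit(train_data, train_targets,
                    batch_size=32,
                    epochs=20,
                    validation_data=(test_data, test_targets),
                    shuffle=True,
                    callbacks=[tensorboard_callback])

plt.plot(history.history['loss'], label='loss')
plt.plot(history.history['val_loss'], label='val_loss')
plt.legend()
plt.show()
```

If you are not using IPython, you just need to start TensorBoard before or during training (for example by running `tensorboard --logdir logs/fit` in a terminal) to monitor it in real time.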
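To start TensorBoard from the training script itself (rather than from a separate terminal), a minimal sketch is to spawn the TensorBoard command-line program in the background before calling model.fit(). It assumes the `tensorboard` executable is installed and on PATH, and it uses only the `--logdir` and `--port` flags; `tensorboard_command` and `launch_tensorboard` are hypothetical helper names.

```python
import shutil
import subprocess


def tensorboard_command(logdir, port=6006):
    """Build the command line for the `tensorboard` CLI (assumed to be on PATH)."""
    return ["tensorboard", "--logdir", logdir, "--port", str(port)]


def launch_tensorboard(logdir, port=6006):
    """Start TensorBoard in the background and return the process handle."""
    if shutil.which("tensorboard") is None:
        raise RuntimeError("the `tensorboard` executable was not found on PATH")
    return subprocess.Popen(tensorboard_command(logdir, port))


if __name__ == "__main__":
    # Only show the command here; launching would start a long-running server.
    print(" ".join(tensorboard_command("logs/fit")))
```

In a training script one might call `tb = launch_tensorboard("logs/fit")` before `model.fit(...)`, open http://localhost:6006 while training runs, and call `tb.terminate()` at the end.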

Original page content provided by Stack Overflow; translation support by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/58115212
