TensorFlow not using GPU: finds xla_gpu instead of gpu

Asked 2019-10-01 17:05:30

I've just started exploring AI. I have never used TensorFlow before, and even Linux is new to me.

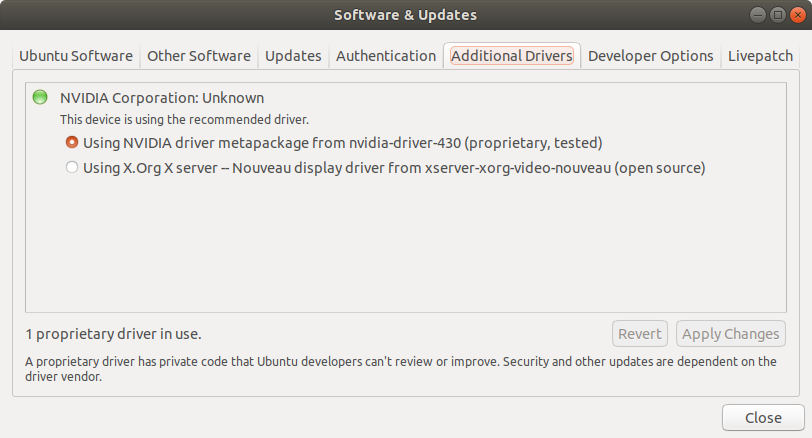

I had previously installed NVIDIA driver 430, which came with CUDA 10.1.

Since tensorflow-gpu 1.14 does not support CUDA 10.1, I uninstalled CUDA 10.1 and downloaded CUDA 10.0.
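A version combination like this can be sanity-checked with a small lookup table. The sketch below is only an illustration: the table values are assumptions based on TensorFlow's published tested build configurations (tensorflow-gpu 1.13 through 2.0 built against CUDA 10.0) and on the minimum Linux driver versions listed in the CUDA 10.0 and 10.1 release notes, so verify them against the official pages. `check_stack`, `major_minor` and `parse_driver` are hypothetical helpers, not part of TensorFlow or CUDA.

```python
# Sketch: check a TensorFlow / CUDA / NVIDIA driver combination against a small
# hand-written table. ASSUMPTION: the table values are recalled from TensorFlow's
# "tested build configurations" page and the CUDA release notes; verify them.

# TensorFlow (major.minor) -> CUDA version that GPU build was compiled against
TF_GPU_CUDA = {
    "1.13": "10.0",
    "1.14": "10.0",
    "1.15": "10.0",
    "2.0": "10.0",
}

# CUDA toolkit (major.minor) -> minimum Linux driver version as (major, minor)
MIN_LINUX_DRIVER = {
    "10.0": (410, 48),
    "10.1": (418, 39),
}


def major_minor(version: str) -> str:
    """Reduce '1.14.0' or '10.0.130' to '1.14' or '10.0'."""
    return ".".join(version.split(".")[:2])


def parse_driver(version: str) -> tuple:
    """Turn '430' or '430.26' into a comparable (major, minor) tuple."""
    parts = [int(p) for p in version.split(".")[:2]]
    return tuple(parts + [0] * (2 - len(parts)))


def check_stack(tf_version: str, cuda_version: str, driver_version: str) -> list:
    """Return human-readable problems; an empty list means no mismatch was found."""
    problems = []
    expected_cuda = TF_GPU_CUDA.get(major_minor(tf_version))
    if expected_cuda is None:
        problems.append(f"TensorFlow {tf_version} is not in this sketch's table")
    elif expected_cuda != major_minor(cuda_version):
        problems.append(
            f"TensorFlow {tf_version} expects CUDA {expected_cuda}, found {cuda_version}"
        )
    min_driver = MIN_LINUX_DRIVER.get(major_minor(cuda_version))
    if min_driver is not None and parse_driver(driver_version) < min_driver:
        problems.append(
            f"CUDA {cuda_version} needs driver >= {min_driver[0]}.{min_driver[1]}"
        )
    return problems


if __name__ == "__main__":
    print(check_stack("1.14.0", "10.1", "430"))  # the original setup
    print(check_stack("1.14.0", "10.0", "430"))  # after switching to CUDA 10.0
```

With the original setup it reports the CUDA mismatch; with CUDA 10.0 it reports nothing, because the NVIDIA driver is backward compatible and 430 is newer than the assumed 410.48 minimum for CUDA 10.0.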

Once `cuda_10.0.130_410.48_linux.run` was installed, I ran `nvcc --version`, which prints:

```
nvcc: NVIDIA (R) Cuda compiler driver
Copyright (c) 2005-2018 NVIDIA Corporation
Built on Sat_Aug_25_21:08:01_CDT_2018
Cuda compilation tools, release 10.0, V10.0.130
```

But when I try to use the GPU in a Jupyter notebook, the code still doesn't work:

```python
import tensorflow as tf
with tf.device('/gpu:0'):  # explicitly pin the ops below to the first GPU
    a = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[2, 3], name='a')
    b = tf.constant([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], shape=[3, 2], name='b')
    c = tf.matmul(a, b)  # (2x3) @ (3x2) -> 2x2 result

with tf.Session() as sess:  # TF 1.x graph-mode session
    print(sess.run(c))
```

Error:

```
---------------------------------------------------------------------------
InvalidArgumentError                      Traceback (most recent call last)
~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _do_call(self, fn, *args)
   1355     try:
-> 1356       return fn(*args)
   1357     except errors.OpError as e:

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _run_fn(feed_dict, fetch_list, target_list, options, run_metadata)
   1338       # Ensure any changes to the graph are reflected in the runtime.
-> 1339       self._extend_graph()
   1340       return self._call_tf_sessionrun(

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _extend_graph(self)
   1373     with self._graph._session_run_lock():  # pylint: disable=protected-access
-> 1374       tf_session.ExtendSession(self._session)
   1375

InvalidArgumentError: Cannot assign a device for operation MatMul: {{node MatMul}}was explicitly assigned to /device:GPU:0 but available devices are [ /job:localhost/replica:0/task:0/device:CPU:0, /job:localhost/replica:0/task:0/device:XLA_CPU:0, /job:localhost/replica:0/task:0/device:XLA_GPU:0 ]. Make sure the device specification refers to a valid device.
     [[MatMul]]

During handling of the above exception, another exception occurred:

InvalidArgumentError                      Traceback (most recent call last)
<ipython-input-19-3a5be606bcc9> in <module>
      6
      7 with tf.Session() as sess:
----> 8     print (sess.run(c))

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in run(self, fetches, feed_dict, options, run_metadata)
    948     try:
    949       result = self._run(None, fetches, feed_dict, options_ptr,
--> 950                          run_metadata_ptr)
    951       if run_metadata:
    952         proto_data = tf_session.TF_GetBuffer(run_metadata_ptr)

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _run(self, handle, fetches, feed_dict, options, run_metadata)
   1171     if final_fetches or final_targets or (handle and feed_dict_tensor):
   1172       results = self._do_run(handle, final_targets, final_fetches,
-> 1173                              feed_dict_tensor, options, run_metadata)
   1174     else:
   1175       results = []

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _do_run(self, handle, target_list, fetch_list, feed_dict, options, run_metadata)
   1348     if handle is None:
   1349       return self._do_call(_run_fn, feeds, fetches, targets, options,
-> 1350                            run_metadata)
   1351     else:
   1352       return self._do_call(_prun_fn, handle, feeds, fetches)

~/anaconda3/lib/python3.7/site-packages/tensorflow/python/client/session.py in _do_call(self, fn, *args)
   1368           pass
   1369       message = error_interpolation.interpolate(message, self._graph)
-> 1370       raise type(e)(node_def, op, message)
   1371
   1372   def _extend_graph(self):

InvalidArgumentError: Cannot assign a device for operation MatMul: node MatMul (defined at <ipython-input-9-b145a02709f7>:5) was explicitly assigned to /device:GPU:0 but available devices are [ /job:localhost/replica:0/task:0/device:CPU:0, /job:localhost/replica:0/task:0/device:XLA_CPU:0, /job:localhost/replica:0/task:0/device:XLA_GPU:0 ]. Make sure the device specification refers to a valid device.
     [[MatMul]]

Errors may have originated from an input operation.
Input Source operations connected to node MatMul:
 b (defined at <ipython-input-9-b145a02709f7>:4)
 a (defined at <ipython-input-9-b145a02709f7>:3)
```

However, if I run the same code in Python from a terminal, it works and I can see the output:

```
[[22. 28.]
 [49. 64.]]
```
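Because the same code works from a terminal but not from Jupyter, one thing worth ruling out (an assumption; the question does not confirm it) is that the notebook kernel runs a different Python interpreter or TensorFlow installation than the terminal, or starts without the library search path that the terminal gets from the shell configuration. The sketch below uses only the standard library; run it in both places and compare the output. `describe_environment` is a hypothetical helper name.

```python
import importlib.util
import os
import sys


def describe_environment() -> dict:
    """Collect facts that commonly differ between a Jupyter kernel and a terminal."""
    # find_spec locates the package without importing it; None means not installed
    spec = importlib.util.find_spec("tensorflow")
    return {
        # Which interpreter is actually running this code
        "python": sys.executable,
        # Where 'import tensorflow' would be loaded from
        "tensorflow": spec.origin if spec is not None else None,
        # Search path the dynamic loader uses for shared libraries such as CUDA/cuDNN
        "LD_LIBRARY_PATH": os.environ.get("LD_LIBRARY_PATH"),
    }


if __name__ == "__main__":
    for key, value in describe_environment().items():
        print(f"{key}: {value}")
```

If the `python` or `tensorflow` paths differ between the notebook and the terminal, the two are not using the same installation, and the notebook kernel would need to point at the same environment.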

1 Answer

Stack Overflow user

Accepted answer

Answered 2019-10-02 15:36:34

You need to make sure that compatible versions of CUDA and cuDNN are installed.
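Before comparing versions, it can help to confirm which devices TensorFlow actually registered; the error in the question already lists them. The sketch below extracts that list from such a message and picks a device, falling back to the CPU when there is no regular `GPU` device, which is roughly what `allow_soft_placement=True` in a TF 1.x `tf.ConfigProto` does for you. My understanding (an assumption, not confirmed by the answer) is that TensorFlow only registers the regular GPU device when it can load the CUDA and cuDNN libraries, while the XLA_GPU device may still appear. In TF 1.x the same names are also available without triggering the error via `[d.name for d in device_lib.list_local_devices()]` after `from tensorflow.python.client import device_lib`. `parse_available_devices`, `only_xla_gpu` and `pick_device` are hypothetical helpers.

```python
import re

# Error text from the question, shortened to the part that lists the devices
QUESTION_ERROR = (
    "Cannot assign a device for operation MatMul: {{node MatMul}}was explicitly "
    "assigned to /device:GPU:0 but available devices are "
    "[ /job:localhost/replica:0/task:0/device:CPU:0, "
    "/job:localhost/replica:0/task:0/device:XLA_CPU:0, "
    "/job:localhost/replica:0/task:0/device:XLA_GPU:0 ]. "
    "Make sure the device specification refers to a valid device."
)

# Captures everything between the brackets after "available devices are"
DEVICES_RE = re.compile(r"available devices are \[(.*?)\]")


def parse_available_devices(message: str) -> list:
    """Return short device names such as 'CPU:0' or 'XLA_GPU:0' from the error text."""
    match = DEVICES_RE.search(message)
    if match is None:
        return []
    names = []
    for full_name in match.group(1).split(","):
        full_name = full_name.strip()
        if "/device:" in full_name:
            # '/job:localhost/.../device:XLA_GPU:0' -> 'XLA_GPU:0'
            names.append(full_name.split("/device:")[-1])
    return names


def only_xla_gpu(names: list) -> bool:
    """True when the GPU is visible only as XLA_GPU, with no regular GPU device."""
    has_gpu = any(n.startswith("GPU:") for n in names)
    has_xla_gpu = any(n.startswith("XLA_GPU:") for n in names)
    return has_xla_gpu and not has_gpu


def pick_device(names: list) -> str:
    """Prefer the first regular GPU device, otherwise fall back to the CPU."""
    gpus = [n for n in names if n.startswith("GPU:")]
    return "/device:" + gpus[0] if gpus else "/device:CPU:0"


if __name__ == "__main__":
    names = parse_available_devices(QUESTION_ERROR)
    print(names)
    print(only_xla_gpu(names), pick_device(names))
```

For the error in the question this yields `['CPU:0', 'XLA_CPU:0', 'XLA_GPU:0']`, flags the XLA_GPU-only case, and picks `/device:CPU:0`.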

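- You can check your cuDNN version using the suggestions at this link: How to verify CuDNN installation?
  - Or, on a Linux machine, run `cat /usr/local/cuda/include/cudnn.h | grep CUDNN_MAJOR -A 2`.
- You can check your CUDA version here: xcat.docs
  - Or run `nvcc -V`, which reports the installed CUDA toolkit version.
  - Or run `nvidia-smi`; note that it shows the driver version and the highest CUDA version that driver supports, not necessarily the toolkit you installed.
- Also read this article about xla_gpu: tensorflow xla and gpu problem
  - XLA (Accelerated Linear Algebra) is a compiler developed as part of TensorFlow that can make TensorFlow computations faster.
- I don't know why the XLA_GPU devices can use the GPU without CUDA and cuDNN. NVIDIA GPUs need CUDA and cuDNN to work properly with TensorFlow, so it looks like TensorFlow is trying to use its own libraries to run computations on the GPU. But I'm not really sure.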
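The two command-line checks in the list above (reading `nvcc -V` output and grepping `cudnn.h`) can be scripted. This is a minimal sketch, assuming the output format shown in the question (`release 10.0, V10.0.130`) and the `#define CUDNN_MAJOR` / `CUDNN_MINOR` / `CUDNN_PATCHLEVEL` lines that the `grep` command targets; `/usr/local/cuda` is the install prefix the answer assumes, and the function names are hypothetical.

```python
import os
import re
import shutil
import subprocess


def parse_nvcc_release(text: str):
    """Return (major, minor) from `nvcc -V` output, or None if not found."""
    m = re.search(r"release (\d+)\.(\d+)", text)
    return (int(m.group(1)), int(m.group(2))) if m else None


def parse_cudnn_header(text: str):
    """Return (major, minor, patch) from the #define lines of a cuDNN header, or None."""
    values = []
    for key in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        m = re.search(rf"#define {key}\s+(\d+)", text)
        if m is None:
            return None
        values.append(int(m.group(1)))
    return tuple(values)


def local_versions(cuda_home: str = "/usr/local/cuda") -> dict:
    """Best-effort lookup on this machine; missing tools or headers yield None."""
    result = {"cuda": None, "cudnn": None}
    if shutil.which("nvcc"):
        out = subprocess.run(["nvcc", "-V"], capture_output=True, text=True).stdout
        result["cuda"] = parse_nvcc_release(out)
    # cuDNN 7 defines the version in cudnn.h; cuDNN 8+ moved it to cudnn_version.h
    for header in ("cudnn_version.h", "cudnn.h"):
        path = os.path.join(cuda_home, "include", header)
        if os.path.exists(path):
            with open(path, errors="replace") as f:
                parsed = parse_cudnn_header(f.read())
            if parsed is not None:
                result["cudnn"] = parsed
                break
    return result


if __name__ == "__main__":
    print(local_versions())
```

`parse_nvcc_release` applied to the `nvcc --version` output in the question returns `(10, 0)`. `subprocess.run(..., capture_output=True, text=True)` needs Python 3.7 or newer, which matches the `python3.7` paths in the traceback.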

Original page content provided by Stack Overflow; translation support provided by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/58189394
