How to create a Dataproc cluster with a time to live using Python

Asked 2020-02-11 12:20:56

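I am trying to create a Dataproc cluster with Python that has a time to live of 1 day. For this purpose, v1beta2 of the Dataproc API introduced the LifecycleConfig object, which is a child of the ClusterConfig object.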

I use this object in the JSON file that I pass to the create_cluster method. To set a specific TTL, I use the field auto_delete_ttl, which should have the value 86,400 seconds (one day).

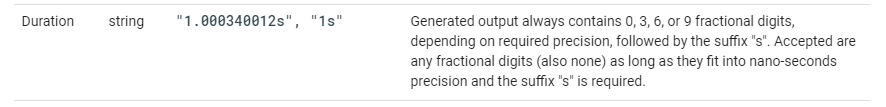

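Google's Protocol Buffers documentation is quite specific about how to represent a duration in a JSON file: a duration should be a string ending in the suffix s (for seconds), preceded by the number of seconds, with 0, 3, 6, or 9 fractional digits.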
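To make the format concrete, here is a minimal sketch that renders a Python timedelta in that JSON duration notation. The helper name `to_json_duration` is hypothetical and not part of any Google library; it only illustrates the string format described above, using standard-library code.

```python
from datetime import timedelta


def to_json_duration(delta: timedelta) -> str:
    """Format a timedelta as a JSON duration string such as '86400s'.

    Hypothetical helper for illustration; it emits 0, 3, or 6 fractional
    digits, since timedelta only has microsecond resolution.
    """
    total_micros = (delta.days * 86400 + delta.seconds) * 1_000_000 + delta.microseconds
    sign = "-" if total_micros < 0 else ""
    seconds, micros = divmod(abs(total_micros), 1_000_000)
    if micros == 0:
        return f"{sign}{seconds}s"
    if micros % 1000 == 0:
        # Whole milliseconds: three fractional digits are enough.
        return f"{sign}{seconds}.{micros // 1000:03d}s"
    return f"{sign}{seconds}.{micros:06d}s"


one_day = to_json_duration(timedelta(days=1))
```

With this sketch, a one-day TTL comes out as the string "86400s", which is the value used for auto_delete_ttl in the cluster JSON in this question.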

However, if I pass the duration in this format, I get the following error:

Parameter to MergeFrom() must be instance of same class: expected google.protobuf.Duration got str
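The error message suggests that the client builds the protobuf message from the Python dict directly instead of parsing it as JSON, so the field may want the Duration message's own fields (seconds and nanos) rather than the JSON string. The following is a sketch under that assumption only: `duration_string_to_fields` is a hypothetical helper, and whether google-cloud-dataproc 0.6.1 accepts the resulting dict has not been verified here.

```python
import re

# Matches JSON duration strings such as "86400s" or "1.500s".
_DURATION_RE = re.compile(r"(-?)(\d+)(?:\.(\d{1,9}))?s")


def duration_string_to_fields(text: str) -> dict:
    """Convert a JSON duration string into Duration-style fields.

    Hypothetical helper: returns {"seconds": ..., "nanos": ...}, the two
    fields of google.protobuf.Duration.
    """
    match = _DURATION_RE.fullmatch(text)
    if match is None:
        raise ValueError(f"not a JSON duration string: {text!r}")
    sign, whole, frac = match.groups()
    seconds = int(whole)
    # Pad the fractional part to nine digits to get nanoseconds.
    nanos = int(frac.ljust(9, "0")) if frac else 0
    if sign:
        seconds, nanos = -seconds, -nanos
    return {"seconds": seconds, "nanos": nanos}


# Only the relevant fragment of the cluster description is shown here.
cluster_config = {"lifecycle_config": {"auto_delete_ttl": "86400s"}}
lifecycle = cluster_config["lifecycle_config"]
# Assumption: the client accepts a dict of Duration fields in this position.
lifecycle["auto_delete_ttl"] = duration_string_to_fields(lifecycle["auto_delete_ttl"])
```

If the assumption holds, the rewritten fragment would carry the same one-day TTL as the original string, just expressed as message fields.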

This is how I create the cluster:

from google.cloud import dataproc_v1beta2

project = "your_project_id"
region = "europe-west4"
cluster = ""  # see below for the cluster JSON file

client = dataproc_v1beta2.ClusterControllerClient(client_options={
    'api_endpoint': '{}-dataproc.googleapis.com:443'.format(region)
})

# Create the cluster
operation = client.create_cluster(project, region, cluster)

The variable cluster contains the JSON object describing the desired cluster:

{
  "cluster_name": "my_cluster",
  "config": {
    "config_bucket": "my_conf_bucket",
    "gce_cluster_config": {
      "zone_uri": "europe-west4-a",
      "metadata": {
        "PIP_PACKAGES": "google-cloud-storage google-cloud-bigquery"
      },
      "subnetwork_uri": "my subnet",
      "service_account_scopes": [
        "https://www.googleapis.com/auth/cloud-platform"
      ],
      "tags": [
        "some tags"
      ]
    },
    "master_config": {
      "num_instances": 1,
      "machine_type_uri": "n1-highmem-4",
      "disk_config": {
        "boot_disk_type": "pd-standard",
        "boot_disk_size_gb": 200,
        "num_local_ssds": 0
      },
      "accelerators": []
    },
    "software_config": {
      "image_version": "1.4-debian9",
      "properties": {
        "dataproc:dataproc.allow.zero.workers": "true",
        "yarn:yarn.log-aggregation-enable": "true",
        "dataproc:dataproc.logging.stackdriver.job.driver.enable": "true",
        "dataproc:dataproc.logging.stackdriver.enable": "true",
        "dataproc:jobs.file-backed-output.enable": "true"
      },
      "optional_components": []
    },
    "lifecycle_config": {
      "auto_delete_ttl": "86400s"
    },
    "initialization_actions": [
      {
        "executable_file": "gs://some-init-script"
      }
    ]
  },
  "project_id": "project_id"
}

Package versions I am using:

- google-cloud-dataproc: 0.6.1

- protobuf: 3.11.3

- googleapis-common-protos: 1.6.0

Am I doing something wrong, is this a problem with the package versions, or is it even a bug?

Answers: 1

Page content originally from Stack Overflow.

Original link:

https://stackoverflow.com/questions/60168673
