为什么与rjags和R2Jags匹配的模型输出是不同的?

我正在用群组水平预测来拟合一个多层次的logistic回归模型。我通过R使用JAGS,当我将模型与runjags和R2Jags包相匹配时,我得到了不同的行为。

我试着写一个可重复的例子来说明这个问题。下面,我从一个二项式模型中模拟数据,将数据索引到8个地块和2个块,然后拟合一个多层次的logistic回归来恢复下面代码中的成功概率(b1和b2)。滚动到底部,查看两者的摘要。

我的问题是:

,

- ,为什么这两种搭配的后排不同?我使用相同的数据,一个单一的模型规范,并在每个模型之前设置随机数生成器。为什么后验的平均值不同,为什么Rhat值如此不同?

# -------------------------------------------------------------------

# Loading required packages

# -------------------------------------------------------------------

library(rjags)

library(R2jags)

library(MCMCvis)软件包版本信息:

jags.version()

[1] ‘4.3.0’

R2jags_0.5-7 MCMCvis_0.13.5 rjags_4-10# -------------------------------------------------------------------

# Simulate data

# -------------------------------------------------------------------

set.seed(10)

N.plots = 8

N.blocks = 2

trials=400

n = rep(100,trials)

N=length(n)

plotReps=N/N.plots

blockReps=N/N.blocks

# Block 1

b1<-rep(c(.25,.75,.9,.1),each=plotReps)-.05

# Block 2

b2<-rep(c(.25,.75,.9,.1),each=plotReps)+.05

y = rbinom(trials, 100, p = c(b1,b2))

# vectors indexing plots and blocks

plot = rep(1:8,each=plotReps)

block = rep(1:2,each=blockReps)

# pass data to list for JAGS

data = list(

y = y,

n = n,

N = length(n),

plot = plot,

block= block,

N.plots = N.plots,

N.blocks = N.blocks

)# -------------------------------------------------------------------

# Code for JAGS model

# -------------------------------------------------------------------

modelString <- "model {

## Priors

# hyperpriors

mu.alpha ~ dnorm(0, 0.0001)

sigma.plot ~ dunif(0,100)

tau.plot <- 1 / sigma.plot^2

sigma.block ~ dunif(0,100)

tau.block <- 1 / sigma.block^2

# priors

for(i in 1:N.plots){

eps.plot[i]~dnorm(0,tau.plot)

}

for(i in 1:N.blocks){

eps.block[i]~dnorm(0,tau.block)

}

# Likelihood

for(i in 1:N){

logit(p[i]) <- mu.alpha + eps.plot[plot[i]] + eps.block[block[i]]

y[i] ~ dbin(p[i], n[i])

}

}"# -------------------------------------------------------------------

# Initial values

# -------------------------------------------------------------------

# set inits for rjags

inits = list(list(mu.alpha = 0,sigma.plot=2,sigma.block=2),

list(mu.alpha = 0,sigma.plot=2,sigma.block=2),

list(mu.alpha = 0,sigma.plot=2,sigma.block=2))

# set inits function for R2jags

initsFun<-function(){list(

mu.alpha=0,

sigma.plot=2,

sigma.block=2

)}# -------------------------------------------------------------------

# Set JAGS parameters and random seed

# -------------------------------------------------------------------

# scalars that specify the

# number of iterations in the chain for adaptation

# number of iterations for burn-in

# number of samples in the final chain

n.adapt = 500

n.update = 5000

n.iterations = 1000

n.thin = 1

parsToMonitor = c("mu.alpha","sigma.plot","sigma.block","eps.plot","eps.block")# -------------------------------------------------------------------

# Call to JAGS via rjags

# -------------------------------------------------------------------

set.seed(2)

# tuning (n.adapt)

jm = jags.model(textConnection(modelString), data = data, inits = inits,

n.chains = length(inits), n.adapt = n.adapt)

# burn-in (n.update)

update(jm, n.iterations = n.update)

# chain (n.iter)

samples.rjags = coda.samples(jm, variable.names = c(parsToMonitor), n.iter = n.iterations, thin = n.thin)# -------------------------------------------------------------------

# Call to JAGS via R2jags

# -------------------------------------------------------------------

set.seed(2)

samples.R2jags <-jags(data=data,inits=initsFun,parameters.to.save=parsToMonitor,model.file=textConnection(modelString),

n.thin=n.thin,n.chains=length(inits),n.burnin=n.adapt,n.iter=n.iterations,DIC=T)# -------------------------------------------------------------------

# Summarize posteriors using MCMCvis

# -------------------------------------------------------------------

sum.rjags <- MCMCvis::MCMCsummary(samples.rjags,params=c("mu.alpha","eps.plot","sigma.plot","sigma.block","eps.block"))

sum.rjags

sum.R2jags2 <- MCMCvis::MCMCsummary(samples.R2jags,params=c("mu.alpha","eps.plot","sigma.plot","sigma.block","eps.block"))

sum.R2jags2下面是rjags的输出:

mean sd 2.5% 50% 97.5% Rhat n.eff

mu.alpha 0.07858079 21.2186737 -48.99286669 -0.04046538 45.16440893 1.11 4063

eps.plot[1] -1.77570813 0.8605892 -3.45736942 -1.77762035 -0.02258692 1.00 2857

eps.plot[2] -0.37359614 0.8614370 -2.07913650 -0.37581522 1.36611635 1.00 2846

eps.plot[3] 0.43387001 0.8612820 -1.24273657 0.42332033 2.20253810 1.00 2833

eps.plot[4] 1.31279883 0.8615840 -0.38750596 1.31179143 3.06307745 1.00 2673

eps.plot[5] -1.34317034 0.8749558 -3.06843578 -1.34747145 0.44451006 1.00 2664

eps.plot[6] -0.40064738 0.8749104 -2.13233876 -0.41530587 1.37910977 1.00 2677

eps.plot[7] 0.36515253 0.8738092 -1.35364716 0.35784379 2.15597251 1.00 2692

eps.plot[8] 1.71826293 0.8765952 -0.01057452 1.70627507 3.50314147 1.00 2650

sigma.plot 1.67540914 0.6244529 0.88895789 1.53080631 3.27418094 1.01 741

sigma.block 19.54287007 26.1348353 0.14556791 6.68959552 93.21927035 1.22 94

eps.block[1] -0.55924545 21.2126905 -46.34099332 -0.24261169 48.81435107 1.11 4009

eps.block[2] 0.35658731 21.2177540 -44.65998407 0.25801739 49.31921639 1.11 4457下面是来自R2jags fit的输出:

mean sd 2.5% 50% 97.5% Rhat n.eff

mu.alpha -0.09358847 19.9972601 -45.81215297 -0.03905447 47.32288503 1.04 1785

eps.plot[1] -1.70448172 0.8954054 -3.41749845 -1.70817566 0.08187877 1.00 1141

eps.plot[2] -0.30070570 0.8940527 -2.01982416 -0.30458798 1.46954632 1.00 1125

eps.plot[3] 0.50295713 0.8932038 -1.20985348 0.50458106 2.29271214 1.01 1156

eps.plot[4] 1.37862742 0.8950657 -0.34965321 1.37627777 3.19545411 1.01 1142

eps.plot[5] -1.40421696 0.8496819 -3.10743244 -1.41880218 0.25843323 1.01 1400

eps.plot[6] -0.45810643 0.8504694 -2.16755579 -0.47087931 1.20827684 1.01 1406

eps.plot[7] 0.30319019 0.8492508 -1.39045509 0.28668886 1.96325582 1.01 1500

eps.plot[8] 1.65474420 0.8500635 -0.03632306 1.63399429 3.29585024 1.01 1395

sigma.plot 1.66375532 0.6681285 0.88231891 1.49564854 3.45544415 1.04 304

sigma.block 20.64694333 23.0418085 0.41071589 11.10308188 85.56459886 1.09 78

eps.block[1] -0.45810120 19.9981027 -46.85060339 -0.33090743 46.27709625 1.04 1795

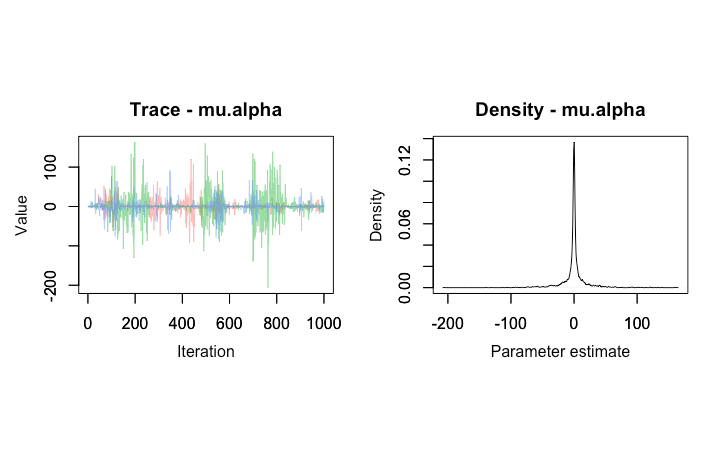

eps.block[2] 0.58896195 19.9552211 -46.39310677 0.28183123 46.57874408 1.04 1769下面是mu.alpha从2 fits中得到的跟踪图。首先,从rjags:

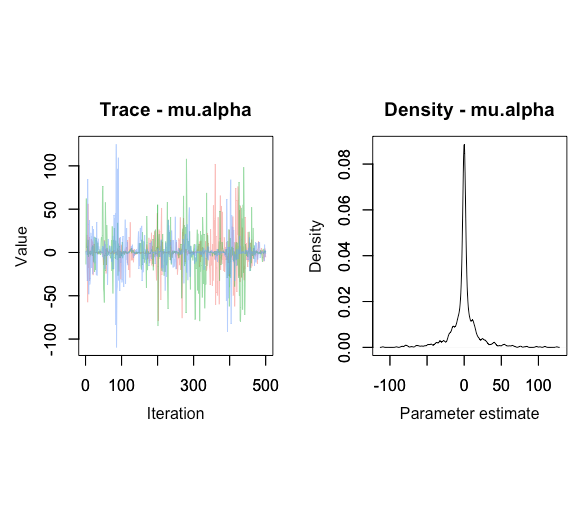

其次,来自R2jags fit:

回答 2

Stack Overflow用户

发布于 2020-02-20 16:47:56

虽然部分问题与mu.alpha缺乏收敛性有关,但另一个问题是两个包如何确定从后验分布中收集样本的数量。此外,update在jags.model之后的调用应该是:

update(jm, n.iter = n.update)

而不是

update(jm, n.iterations = n.update)

对于rjags,您可以很容易地指定适应步骤、更新步骤和迭代步骤的数量。查看samples.rjags,很明显,每个链都有一个长度为n.iterations的后验,总共(在本例中)有3000个样本(n.iterations * n.chains)。相反,R2jags::jags将对后验进行采样,其次数等于n.iter参数减去n.burnin参数的次数。因此,正如您已经指定的那样,您没有将n.update步骤包含到R2jags::jags中,并且2)只对后面的步骤进行了总共1500次的采样(每个链只保存了500个样本),而不是rjags的3000次。

如果您想要进行类似的刻录,并尝试相同的次数,则可以运行:

samples.R2jags <-jags(

data=data,

inits=inits,

parameters.to.save=parsToMonitor,

model.file=textConnection(modelString),

n.thin=n.thin,

n.chains=length(inits),

n.burnin=n.adapt + n.update ,

n.iter=n.iterations +n.update + n.adapt,

DIC=T

)最后,R2jags默认加载glm模块,而rjags不加载。这可能会导致一些差异,因为所使用的采样器可能有所不同(至少在这种情况下是因为您正在拟合glm)。在调用rjags::load.module('glm')之前,您可以使用一个jags.model调用加载glm模块。

虽然这与问题本身无关,但我将避免您在模型中的每个循环的for循环中使用i (如果循环的迭代次数不同,则使用不同的字母):

modelString <- "model {

## Priors

# hyperpriors

mu.alpha ~ dnorm(0, 0.0001)

sigma.plot ~ dunif(0,100)

tau.plot <- 1 / sigma.plot^2

sigma.block ~ dunif(0,100)

tau.block <- 1 / sigma.block^2

# priors

for(i in 1:N.plots){

eps.plot[i]~dnorm(0,tau.plot)

}

for(j in 1:N.blocks){

eps.block[j]~dnorm(0,tau.block)

}

# Likelihood

for(k in 1:N){

logit(p[k]) <- mu.alpha + eps.plot[plot[k]] + eps.block[block[k]]

y[k] ~ dbin(p[k], n[k])

}

}"Stack Overflow用户

发布于 2020-06-23 01:07:19

我很确定你的后验不一样的原因是因为贾格斯不关心R码中的种子集。

然而,!虽然set.seed()不直接为Jags做任何事情,在通过rjag直接调用Jags时也是无用的,但是当您使用R2Jags时,它确实会被传播。

让我们比较一下:

- rjags是一个较低级别的接口。如果您不提供随机生成器和种子的选择,那么在timestamp.

- R2Jags Jags中,它们的初始化将基于rjags函数周围的当前

inits包装。jags()(R2Jags)函数调用jags.model()(rjags)。如果您签出jags()函数的R -代码,您将看到它使用R中的runif()函数为每个链生成一个种子,因为Jags种子依赖于R中runif()函数的输出,在R中设置一个种子将确保每次运行Jags都会得到相同的种子。

https://stackoverflow.com/questions/60305150

复制相似问题