Fetching multiple WordPress plugin URLs with BeautifulSoup to collect metadata, sorted by timestamp


Asked on 2020-04-08 17:17:50

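I'm trying to scrape a small piece of information from a site, but it keeps printing "None", as if the title (or any other tag I substitute for it) did not exist.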

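Project: a list of metadata for WordPress plugins. About 50 plugins are of interest! But the challenge is this: I want to fetch the metadata of all existing plugins. After that, I want to filter out the plugins with the newest timestamp, i.e. the ones that were updated most recently. It's all about being up to date.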

https://wordpress.org/plugins/wp-job-manager

https://wordpress.org/plugins/ninja-forms

https://wordpress.org/plugins/participants-database ... and so on.

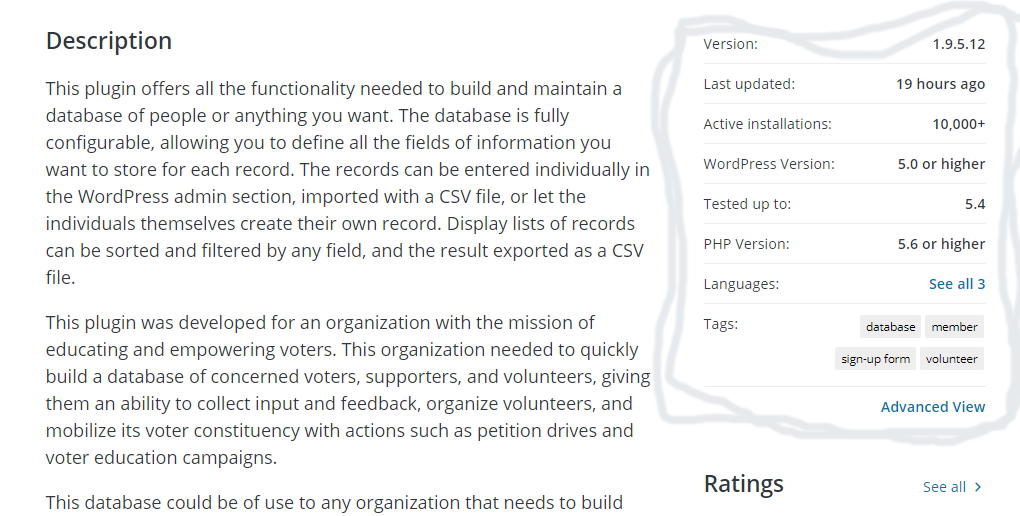

For each WordPress plugin we have the following set of metadata:

- Version: 1.9.5.12
- Active installations: 10,000+
- WordPress Version: 5.0 or higher
- Tested up to: 5.4
- PHP Version: 5.6 or higher
- Tags: database, member, sign-up form, volunteer
- Last updated: 19 hours ago
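The "Last updated" value is a relative age, not a timestamp, so it has to be converted before plugins can be sorted. A minimal sketch, assuming the field always has the form "<number> <unit> ago" with English units (min, minute, hour, day, week, month, year); months and years are approximated as 30 and 365 days, and `parse_last_updated` is a hypothetical helper name:

```python
import re
from datetime import timedelta

# Assumed unit lengths; months and years are rough approximations
UNITS = {
    "min": timedelta(minutes=1),
    "minute": timedelta(minutes=1),
    "hour": timedelta(hours=1),
    "day": timedelta(days=1),
    "week": timedelta(weeks=1),
    "month": timedelta(days=30),
    "year": timedelta(days=365),
}

# Matches e.g. "19 hours ago", "5 mins ago", "1 month ago"
AGE_RE = re.compile(r"(\d+)\s+(min|minute|hour|day|week|month|year)s?\s+ago", re.I)


def parse_last_updated(text):
    """Turn '19 hours ago' (or 'Last updated: 19 hours ago') into a timedelta.

    Returns None when the text does not match the assumed format.
    """
    match = AGE_RE.search(text)
    if match is None:
        return None
    amount = int(match.group(1))
    unit = match.group(2).lower()
    return amount * UNITS[unit]


print(parse_last_updated("Last updated: 19 hours ago"))  # prints 19:00:00
```

A smaller age means a more recent update, so sorting ascending by this value puts the newest plugins first.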

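The project consists of two parts: the loop part (which seems pretty straightforward) and the parser part, where I have some issues (see below). I'm trying to loop through an array of URLs and scrape the data below from the list of WordPress plugins. See my loop below: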

```python
from bs4 import BeautifulSoup
import requests

# array of URLs to loop through, will be larger once I get the loop working correctly
plugins = ['https://wordpress.org/plugins/wp-job-manager', 'https://wordpress.org/plugins/ninja-forms']
```

This works fine.

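```python
# page_soup: the BeautifulSoup object of one plugin page (its creation is not shown)
ttt = page_soup.find("div", {"class": "plugin-meta"})
# [:-1:2] takes items 0, 2, 4, ... and never includes the last <li>
text_nodes = [node.text.strip() for node in ttt.ul.findChildren('li')[:-1:2]]
```

Output of text_nodes:

['Version: 1.9.5.12', 'Active installations: 10,000+', 'Tested up to: 5.6']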
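The "None" mentioned above is what `find()` returns when the requested tag is not in the parsed HTML; any further attribute access on it (such as `.ul`) then raises `AttributeError`. Also, the `[:-1:2]` slice skips every other `<li>`, which is why only three items come back. A sketch that reads every item of the `plugin-meta` block into a dict and guards against the missing tag; `parse_plugin_meta` is a hypothetical name, and the sample markup is an assumption modelled on the fields listed above, not copied from wordpress.org:

```python
from bs4 import BeautifulSoup


def parse_plugin_meta(html):
    """Return the plugin-meta list items as a {label: value} dict.

    Returns an empty dict when the page has no <div class="plugin-meta">
    instead of failing on None.
    """
    soup = BeautifulSoup(html, "html.parser")
    meta = soup.find("div", class_="plugin-meta")
    if meta is None or meta.ul is None:
        # find() returns None when the markup is not in the fetched HTML
        return {}
    result = {}
    for li in meta.ul.find_all("li"):
        # Join the text pieces with spaces: "Version: 1.9.5.12"
        text = li.get_text(" ", strip=True)
        label, sep, value = text.partition(":")
        if sep:  # keep only "Label: value" items
            result[label.strip()] = value.strip()
    return result


# Hypothetical sample markup for illustration only
sample = """
<div class="plugin-meta"><ul>
  <li>Version: <strong>1.9.5.12</strong></li>
  <li>Last updated: <strong><span>19 hours</span> ago</strong></li>
  <li>Active installations: <strong>10,000+</strong></li>
</ul></div>
"""
print(parse_plugin_meta(sample))
```

Keeping every item (instead of slicing) also keeps "Last updated", which is the field needed for sorting.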

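But what if we want to fetch the data of all WordPress plugins and sort them cleverly to show, let's say, the 50 most recently updated plugins? That would be an interesting task:

- First, we need to fetch the URLs.

- Then we fetch the information and sort it by the newest timestamp, i.e. the most recently updated plugins.

- Finally, we list the 50 most recently updated plugins.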
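For the first step, the accepted answer below collects plugin links from the paginated "popular" listing by selecting `<a rel="bookmark">` elements. A minimal offline sketch of that extraction for a single listing page, de-duplicating while keeping page order; `extract_plugin_links` is a hypothetical name, and the selector may need adjusting if wordpress.org changes its markup:

```python
from bs4 import BeautifulSoup


def extract_plugin_links(listing_html):
    """Return plugin URLs from one listing page, de-duplicated, in page order."""
    soup = BeautifulSoup(listing_html, "html.parser")
    # rel is a multi-valued attribute; this matches any <a> whose rel includes "bookmark"
    hrefs = (a.get("href") for a in soup.find_all("a", rel="bookmark"))
    # dict.fromkeys drops duplicates but keeps first-seen order
    return list(dict.fromkeys(h for h in hrefs if h))


listing = (
    '<h3><a href="https://wordpress.org/plugins/ninja-forms/" rel="bookmark">Ninja Forms</a></h3>'
    '<a href="https://wordpress.org/plugins/">not a plugin link</a>'
    '<a rel="bookmark" href="https://wordpress.org/plugins/wp-job-manager/">WP Job Manager</a>'
)
print(extract_plugin_links(listing))
```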

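Challenge: how to avoid overloading RAM while fetching all the URLs. (See here: "How to extract all URLs in a website using BeautifulSoup" offers interesting insights, approaches and ideas.)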
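One way to keep memory bounded (a swapped-in technique, not from the question) is to process plugin pages one at a time from a generator and keep only the current 50 newest records in a size-limited heap, so memory grows with n rather than with the number of plugins. A sketch assuming each record is a `(name, age)` pair with `age` a `datetime.timedelta`; `newest_n` is a hypothetical helper:

```python
import heapq
from datetime import timedelta


def newest_n(records, n=50):
    """Return the n (name, age) records with the smallest age, newest first.

    records may be any iterable, including a lazy generator; at most n
    records are held in the heap at any time.
    """
    if n <= 0:
        return []
    # Entries are (-age, counter, name); the heap root is the OLDEST record kept
    heap = []
    for counter, (name, age) in enumerate(records):
        entry = (-age, counter, name)
        if len(heap) < n:
            heapq.heappush(heap, entry)
        elif entry[0] > heap[0][0]:
            # Newer than the oldest kept record: replace it
            heapq.heapreplace(heap, entry)
    # Sort by age ascending, ties in input order
    ordered = sorted(heap, key=lambda e: (-e[0], e[1]))
    return [(name, -neg_age) for neg_age, _, name in ordered]


records = [
    ("a", timedelta(days=3)),
    ("b", timedelta(hours=19)),
    ("c", timedelta(weeks=2)),
    ("d", timedelta(hours=2)),
]
print(newest_n(iter(records), n=2))
```

Because `newest_n` only iterates over `records`, it can be fed a generator that fetches and parses each plugin page lazily, so parsed pages can be discarded as soon as their age has been read.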

At the moment I'm trying to figure out how to fetch all the URLs and parse them:

a. how to fetch the metadata of each plugin,

b. how to sort them by their most recent update,

c. and afterwards, how to pick out the 50 newest.

1 answer

Stack Overflow user

Accepted answer

Posted on 2020-04-09 06:24:43

```python
import requests
from bs4 import BeautifulSoup
from concurrent.futures import ThreadPoolExecutor

# Listing of popular plugins; {} is filled with "" (page 1) or "page/N/"
url = "https://wordpress.org/plugins/browse/popular/{}"


def main(url, num):
    """Collect the plugin links (a[rel=bookmark]) from one listing page."""
    with requests.Session() as req:
        print(f"Collecting Page# {num}")
        r = req.get(url.format(num))
        soup = BeautifulSoup(r.content, 'html.parser')
        link = [item.get("href")
                for item in soup.find_all("a", rel="bookmark")]
        return set(link)


# Fetch listing pages 1..49 concurrently
with ThreadPoolExecutor(max_workers=20) as executor:
    futures = [executor.submit(main, url, num)
               for num in [""] + [f"page/{x}/" for x in range(2, 50)]]

allin = []
for future in futures:
    allin.extend(future.result())


def parser(url):
    """Return [plugin name, 'Version: ...', 'Last updated: ...', ...] for one plugin page."""
    with requests.Session() as req:
        print(f"Extracting {url}")
        r = req.get(url)
        soup = BeautifulSoup(r.content, 'html.parser')
        # Meta list: first <ul> after the first h3.screen-reader-text, up to 8 items
        target = [item.get_text(strip=True, separator=" ") for item in soup.find(
            "h3", class_="screen-reader-text").find_next("ul").find_all("li")[:8]]
        head = [soup.find("h1", class_="plugin-title").text]
        # Keep lines such as Version, Last updated, Active installations,
        # WordPress Version, Tested up to, PHP Version
        new = [x for x in target if x.startswith(
            ("V", "Las", "Ac", "W", "T", "P"))]
        return head + new


# Parse all plugin pages concurrently and print each result
with ThreadPoolExecutor(max_workers=50) as executor1:
    futures1 = [executor1.submit(parser, url) for url in allin]

for future in futures1:
    print(future.result())
```
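The answer's `parser` returns flat lists (the plugin name followed by strings such as `"Version: ..."` and `"Last updated: ..."`) and does not yet sort them. Before sorting, each list can be turned into a dict keyed by label; a minimal sketch assuming every field after the name has the form `Label: value` (`row_to_record` is a hypothetical helper):

```python
def row_to_record(row):
    """Turn ['Plugin name', 'Version: 1.9.5.12', ...] into a dict keyed by label."""
    name, *fields = row
    record = {"name": name}
    for field in fields:
        # Split on the first colon only; values may contain more text
        label, sep, value = field.partition(":")
        if sep:
            record[label.strip()] = value.strip()
    return record


row = ["WP Job Manager", "Version: 1.9.5.12", "Last updated: 19 hours ago"]
print(row_to_record(row))
```

`record["Last updated"]` can then be converted into an age and used as the sort key.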

Original content from Stack Overflow.

Original link:

https://stackoverflow.com/questions/61106309
