Skip a custom exception but print all other exceptions

I am running the following try-except code:

    try:
        paths = file_system_client.get_paths("{0}/{1}/0/{2}/{3}/{4}".format(container_initial_folder, container_second_folder, chronological_date[0], chronological_date[1], chronological_date[2]), recursive=True)
        list_of_paths=["abfss://{0}@{1}.dfs.core.windows.net/".format(storage_container_name, storage_account_name)+path.name for path in paths if ".avro" in path.name]
    except Exception as e:
        if e=="AccountIsDisabled":
            pass
        else:
            print(e)

If the try block fails, I do not want the except branch to print the following error, and I do not want my program to stop executing when I hit it:

"(AccountIsDisabled) The specified account is disabled.

RequestId:3159a59e-d01f-0091-5f71-2ff884000000

Time:2020-05-21T13:09:03.3540242Z"

I just want to pass over it and print any other errors/exceptions (e.g. TypeError, ValueError, etc.) that occur.

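Is this possible in Python 3?

Please note that the .get_paths() method belongs to the azure.storage.filedatalake module, which allows connecting directly to Azure Data Lake for path extraction.

I should point out that the exception I am trying to bypass is not a built-in exception.

Update: after following the suggestions in the posted answers, I modified my code as follows: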

    import sys
    from concurrent.futures import ThreadPoolExecutor
    from azure.storage.filedatalake._models import StorageErrorException
    from azure.storage.filedatalake import DataLakeServiceClient, DataLakeFileClient

    storage_container_name="name1" #confidential
    storage_account_name="name2" #confidential
    storage_account_key="password" #confidential
    container_initial_folder="name3" #confidential
    container_second_folder="name4" #confidential

def datalake_connector(storage_account_name, storage_account_key):

global service_client

datalake_client = DataLakeServiceClient(account_url="{0}://{1}.dfs.core.windows.net".format("https", storage_account_name), credential=storage_account_key)

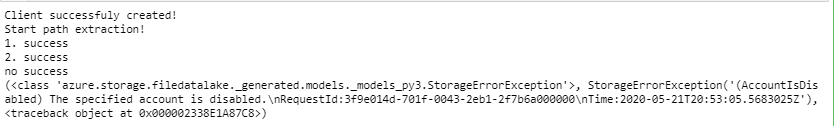

print("Client successfuly created!")

return datalake_client

def create_list_paths(chronological_date,

container_initial_folder="name3",

container_second_folder="name4",

storage_container_name="name1",

storage_account_name="name2"

):

list_of_paths=list()

print("1. success")

paths = file_system_client.get_paths("{0}/{1}/0/{2}/{3}/{4}".format(container_initial_folder, container_second_folder, chronological_date[0], chronological_date[1], chronological_date[2]), recursive=True)

print("2. success")

list_of_paths=["abfss://{0}@{1}.dfs.core.windows.net/".format(storage_container_name, storage_account_name)+path.name for path in paths if ".avro" in path.name]

print("3. success")

list_of_paths=functools.reduce(operator.iconcat, result, [])

return list_of_paths

service_client = datalake_connector(storage_account_name, storage_account_key)

file_system_client = service_client.get_file_system_client(file_system=storage_container_name)

try:

list_of_paths=[]

executor=ThreadPoolExecutor(max_workers=8)

print("Start path extraction!")

list_of_paths=[executor.submit(create_list_paths, i, container_initial_folder, storage_container_name, storage_account_name).result() for i in date_list]

except:

print("no success")

print(sys.exc_info())不幸的是,由于某种原因,StorageErrorException不能由处理,我仍然得到以下标准:

2 Answers

Stack Overflow user

Posted on 2020-05-21 13:30:36

For reference, see the Python documentation on the try statement.

There are several ways to achieve this. Here is one:

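    import sys

    try:
        # ...
    except StorageErrorException:
        pass
    except:
        print(sys.exc_info()[1])

Note that a bare except: is tricky, because you might silently handle exceptions that should not be handled. Another approach is to catch only the exceptions that your code could explicitly raise: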

    try:
        # ...
    except StorageErrorException:
        pass
    except (SomeException, SomeOtherException, SomeOtherOtherException) as e:
        print(e)

A quick look through [MS.Docs]: filedatalake package and the source code shows that StorageErrorException (which extends [MS.Docs]: HttpResponseError class) is the one you need to handle.

You might also want to check [SO]: About catching ANY exception.
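The pattern above can be tried out without an Azure account. The following is a minimal sketch, not the SDK's own behavior: `AccountDisabledError`, `run_quietly`, and the two task functions are hypothetical names introduced only for illustration, and `except Exception` is used in place of a bare `except:` so that `KeyboardInterrupt` and `SystemExit` still propagate.

```python
class AccountDisabledError(Exception):
    """Hypothetical stand-in for the SDK exception that should be skipped."""


def run_quietly(task):
    """Run task(); skip AccountDisabledError and print any other exception."""
    try:
        return task()
    except AccountDisabledError:
        # Deliberately ignored: nothing is printed and execution continues.
        return None
    except Exception as exc:
        # Every other ordinary exception is reported but does not stop the program.
        print(f"{type(exc).__name__}: {exc}")
        return None


def disabled_account():
    raise AccountDisabledError("The specified account is disabled.")


def bad_value():
    return int("not a number")  # raises ValueError


skipped = run_quietly(disabled_account)  # prints nothing
reported = run_quietly(bad_value)        # prints the ValueError
ok = run_quietly(lambda: 42)             # no exception, value is returned
```

Listing the specific handler first matters: Python tries the except clauses in order and runs only the first one that matches.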

Regarding the failure to catch the exception: apparently there are two with the same name:

- azure.storage.blob._generated.models._models_py3.StorageErrorException (currently imported)

- azure.storage.filedatalake._generated.models._models_py3.StorageErrorException

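I don't know the rationale (I have not worked with the package), but a package raising an exception defined in another package, when it also defines one with the same name, looks like poor design.

In any case, importing the right exception solved the problem.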
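Why the wrong import fails silently can be shown with a small sketch. It assumes nothing about the Azure packages: `blob_models` and `datalake_models` are hypothetical in-memory modules that each define their own class named `StorageErrorException`, and `caught_by` is a helper written for this example.

```python
import types


def make_module(name):
    """Build a throwaway module that defines its own StorageErrorException."""
    module = types.ModuleType(name)
    module.StorageErrorException = type("StorageErrorException", (Exception,), {})
    return module


# Hypothetical modules: the class name is shared, the class objects are not.
blob_models = make_module("blob_models")
datalake_models = make_module("datalake_models")


def caught_by(handled_class, raised_class):
    """Return True if an instance of raised_class is caught by `except handled_class`."""
    try:
        raise raised_class("The specified account is disabled.")
    except handled_class:
        return True
    except Exception:
        return False


same = caught_by(datalake_models.StorageErrorException,
                 datalake_models.StorageErrorException)
different = caught_by(blob_models.StorageErrorException,
                      datalake_models.StorageErrorException)
print(same, different)
```

An except clause matches on class identity and inheritance, never on the class name, so a handler written against one of the two classes ignores instances of the other.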

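As a side note, when dealing with this kind of situation, do not import just the base name; work with the fully qualified one:

    import azure.storage.filedatalake._generated.models

    # ... then name the class by its full path in the handler:
    # except azure.storage.filedatalake._generated.models.StorageErrorException:

Stack Overflow user

Posted on 2020-05-21 13:25:14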

To compare the type of the exception, change your condition to:

    if type(e)==AccountIsDisabled:

Example:

    class AccountIsDisabled(Exception):
        pass

    print("try #1")
    try:
        raise AccountIsDisabled
    except Exception as e:
        if type(e)==AccountIsDisabled:
            pass
        else:
            print(e)

    print("try #2")
    try:
        raise Exception('hi', 'there')
    except Exception as e:
        if type(e)==AccountIsDisabled:
            pass
        else:
            print(e)

Output:

    try #1
    try #2
    ('hi', 'there')

https://stackoverflow.com/questions/61935487
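One caveat about the `type(e)==AccountIsDisabled` check in the second answer: an exact type comparison does not match subclasses, whereas `isinstance` (and an `except AccountIsDisabled:` clause) does. A minimal sketch, in which `AccountIsDisabledForRegion` and the two helper functions are hypothetical names used only for illustration:

```python
class AccountIsDisabled(Exception):
    pass


class AccountIsDisabledForRegion(AccountIsDisabled):
    """Hypothetical subclass, only here to show the difference."""


def is_skipped_exact(exc):
    # Exact type comparison: True only for AccountIsDisabled itself.
    return type(exc) == AccountIsDisabled


def is_skipped_isinstance(exc):
    # isinstance also accepts subclasses, like an except clause does.
    return isinstance(exc, AccountIsDisabled)


error = AccountIsDisabledForRegion("disabled")
print(is_skipped_exact(error))
print(is_skipped_isinstance(error))
```

Which of the two is appropriate depends on whether subclasses of the exception should be skipped as well.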
