LightGBM scores from a custom RMSE loss function and the built-in RMSE are different


Asked 2020-05-25 13:52:00

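To get started with customizing objective functions for lightgbm, I began by replicating the standard RMSE objective. Unfortunately, the scores are different. My example is based on this post or github.

Grad and hess are the same as in the lightgbm source, or as in the answer given to the following question.

What is wrong with the custom RMSE function?

Remark: in this example the final losses seem close, but the trajectories are completely different. In other (bigger) examples, I see even bigger differences in the final loss.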
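The gradient and Hessian mentioned above can be cross-checked numerically. The following is a minimal sketch, assuming the per-sample loss behind the squared-error objective is 0.5 * (y_pred - y_true)**2 (the factor 0.5 is an assumption, chosen so that the gradient equals the residual). The helper names `half_squared_error` and `numeric_derivatives` are hypothetical and not part of LightGBM.

```python
def half_squared_error(y_true, y_pred):
    """Per-sample loss assumed for this check: 0.5 * (y_pred - y_true)**2."""
    return 0.5 * (y_pred - y_true) ** 2


def numeric_derivatives(loss, y_true, y_pred, eps=1e-4):
    """Central finite differences of the loss with respect to y_pred."""
    up = loss(y_true, y_pred + eps)
    mid = loss(y_true, y_pred)
    down = loss(y_true, y_pred - eps)
    grad = (up - down) / (2 * eps)            # first derivative
    hess = (up - 2 * mid + down) / eps ** 2   # second derivative
    return grad, hess


y_true, y_pred = 3.0, 5.5
grad, hess = numeric_derivatives(half_squared_error, y_true, y_pred)
print(grad, y_pred - y_true)  # numeric gradient next to the residual
print(hess)                   # numeric Hessian, expected to be close to 1
```

Under this assumption the residual `y_pred - y_true` and a constant Hessian of 1 agree with the finite-difference values, which is consistent with the `grad` and `hess` used in the question.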

    import numpy as np
    import matplotlib.pyplot as plt
    from lightgbm import LGBMRegressor
    import lightgbm
    from sklearn.datasets import make_friedman1
    from sklearn.model_selection import train_test_split

    # Synthetic regression data with a held-out validation split
    X, y = make_friedman1(n_samples=10000, n_features=7, noise=10.0, random_state=11)
    X_train, X_valid, y_train, y_valid = train_test_split(X, y, test_size=0.20, random_state=42)

    # Model trained with the built-in RMSE objective
    gbm2 = lightgbm.LGBMRegressor(objective='rmse', random_state=33, early_stopping_rounds=5, n_estimators=10000)
    gbm2.fit(X_train, y_train, eval_set=[(X_valid, y_valid)], eval_metric='rmse', verbose=10)
    gbm2eval = gbm2.evals_result_

    def custom_RMSE(y_true, y_pred):
        # Gradient and Hessian of the squared-error loss with respect to y_pred
        residual = (y_pred - y_true)
        grad = residual
        hess = np.ones(len(y_true))
        return grad, hess

    # Same settings, but trained with the custom objective
    gbm3 = lightgbm.LGBMRegressor(random_state=33, early_stopping_rounds=5, n_estimators=10000)
    gbm3.set_params(**{'objective': custom_RMSE})
    gbm3.fit(X_train, y_train, eval_set=[(X_valid, y_valid)], eval_metric='rmse', verbose=10)
    gbm3eval = gbm3.evals_result_

    # Compare the validation RMSE curves of both models
    plt.plot(gbm2eval['valid_0']['rmse'], label='rmse')
    plt.plot(gbm3eval['valid_0']['rmse'], label='custom rmse')
    plt.legend()

eval_results for gbm2:

Training until validation scores don't improve for 5 rounds

[10] valid_0's rmse: 10.214

[20] valid_0's rmse: 10.044

[30] valid_0's rmse: 10.0348

Early stopping, best iteration is:

[28] valid_0's rmse: 10.028

eval_results for gbm3:

Training until validation scores don't improve for 5 rounds

[10] valid_0's rmse: 11.5991 valid_0's l2: 134.539

[20] valid_0's rmse: 10.2721 valid_0's l2: 105.516

[30] valid_0's rmse: 10.0801 valid_0's l2: 101.608

[40] valid_0's rmse: 10.0424 valid_0's l2: 100.849

Early stopping, best iteration is:

[44] valid_0's rmse: 10.0351 valid_0's l2: 100.703

The plot shows: loss of the standard RMSE and the custom RMSE

1 Answer

Stack Overflow user

Answered 2020-05-25 15:39:06

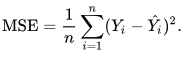

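RMSE is the square root of MSE (mean squared error):

$$\mathrm{RMSE} = \sqrt{\mathrm{MSE}} = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(y_i - \hat{y}_i\right)^2}$$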
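As a quick check of this relation against the numbers in the question: the gbm3 log prints both `rmse` and `l2` (LightGBM's name for mean squared error) at the same iterations, so the square root of the latter should reproduce the former up to rounding. A minimal sketch using only values copied from that log; the variable name `logged` is hypothetical.

```python
import math

# (rmse, l2) pairs copied from the gbm3 log in the question
logged = [
    (11.5991, 134.539),
    (10.2721, 105.516),
    (10.0801, 101.608),
    (10.0424, 100.849),
    (10.0351, 100.703),
]

for rmse, l2 in logged:
    # RMSE is the square root of MSE, so both columns should agree up to rounding
    print(rmse, round(math.sqrt(l2), 4))
```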

So if you want to minimize RMSE, you should change your custom_RMSE() function to a measure of squared residuals. Try:

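    def custom_RMSE(y_true, y_pred):
        squared_residual = (y_pred - y_true)**2
        grad = squared_residual
        hess = np.ones(len(y_true))
        return grad, hess

In any case, the custom_RMSE() function does not appear to return:

grad: array-like of shape = [n_samples] or shape = [n_samples * n_classes] (for multi-class task). The value of the first-order derivative (gradient) for each sample point.

hess: array-like of shape = [n_samples] or shape = [n_samples * n_classes] (for multi-class task). The value of the second-order derivative (Hessian) for each sample point.

Source: https://lightgbm.readthedocs.io/en/latest/pythonapi/lightgbm.LGBMRegressor.html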
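One further point worth checking, offered as a possible explanation rather than a confirmed diagnosis: by default LightGBM's built-in regression objectives start boosting from the average of the labels (the `boost_from_average` parameter), whereas with a custom objective boosting starts from a score of zero. The toy sketch below does not call LightGBM. It assumes the simplest possible booster, one that moves a single shared score by `learning_rate` times the mean residual each round, and it uses made-up labels with mean 14 purely for illustration. The function name `boost_constant` is hypothetical.

```python
import math


def boost_constant(y, init_score, learning_rate=0.1, n_rounds=40):
    """Toy booster: each round adds learning_rate * mean residual to one shared score."""
    score = init_score
    history = []
    for _ in range(n_rounds):
        # With grad = score - y_i and hess = 1, the Newton step is the mean residual
        step = sum(y_i - score for y_i in y) / len(y)
        score += learning_rate * step
        rmse = math.sqrt(sum((y_i - score) ** 2 for y_i in y) / len(y))
        history.append(rmse)
    return history


y = [4.0, 9.0, 14.0, 19.0, 24.0]  # illustrative labels (assumption), mean 14
from_mean = boost_constant(y, init_score=sum(y) / len(y))
from_zero = boost_constant(y, init_score=0.0)

# RMSE after rounds 1, 10 and 40 for both starting points
for i in (0, 9, 39):
    print(i + 1, round(from_mean[i], 3), round(from_zero[i], 3))
```

In this toy setting the run started from zero needs many rounds to catch up with the run started from the mean, which resembles the slower early trajectory in the gbm3 log. If this is indeed the cause, one thing to try would be passing the label mean through the `init_score` and `eval_init_score` arguments of `fit`, keeping in mind that the initial score then has to be added back to the predictions manually.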

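The original page content is provided by Stack Overflow. Translation support is provided by the Tencent Cloud Xiaowei IT-domain engine.

Original link: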

https://stackoverflow.com/questions/62003826
