Error adding spark2 dependency (Avro) to Zeppelin 0.7.3


Asked 2020-08-06 23:36:03

It started when I tried to run this note:

%spark2

val catDF = spark.read.format("avro").load("/user/dstis/shopee_category_14112019")

catDF.show()

catDF.printSchema()

or this alternative:

%spark2

val catDF = spark.read.format("com.databricks.spark.avro").load("/user/dstis/shopee_category_14112019")

catDF.show()

catDF.printSchema()

Both approaches return the following error message:

org.apache.spark.sql.AnalysisException: Failed to find data source: avro. Please find an Avro package at http://spark.apache.org/third-party-projects.html;

I then tried to reconfigure the Maven repository under Advanced zeppelin-env (zeppelin_env_content) as follows, and restarted Zeppelin.

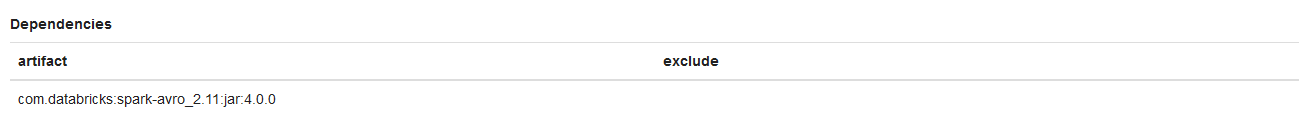

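export ZEPPELIN_INTERPRETER_DEP_MVNREPO="https://repo1.maven.org/maven2/"

Then I added the external Avro package to the spark2 interpreter dependencies, as shown in the screenshot.

When I save the spark2 interpreter configuration, an alert message appears:

Error setting properties for interpreter 'spark.spark2': Cannot fetch dependencies for com.databricks:spark-avro_2.11:jar:4.0.0

When I rerun the spark2 script above, it still gives the same error message.

I then checked the Zeppelin logs and found: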

INFO [2020-08-07 05:50:01,496] ({qtp1786364562-929} InterpreterRestApi.java[updateSetting]:138) - Update interpreterSetting 2C4U48MY3_spark2

INFO [2020-08-07 05:50:01,498] ({qtp1786364562-929} FileSystemConfigStorage.java[call]:98) - Save Interpreter Settings to hdfs://mghdop01.dcdms:8020/user/zeppelin/conf/interpreter.json

ERROR [2020-08-07 05:50:02,768] ({Thread-853} InterpreterSettingManager.java[run]:573) - Error while downloading repos for interpreter group : spark, go to interpreter setting page click on edit and save it again to make this interpreter work properly. : Cannot fetch dependencies for com.databricks:spark-avro_2.11:jar:4.0.0

org.sonatype.aether.RepositoryException: Cannot fetch dependencies for com.databricks:spark-avro_2.11:jar:4.0.0

at org.apache.zeppelin.dep.DependencyResolver.getArtifactsWithDep(DependencyResolver.java:181)

at org.apache.zeppelin.dep.DependencyResolver.loadFromMvn(DependencyResolver.java:131)

at org.apache.zeppelin.dep.DependencyResolver.load(DependencyResolver.java:79)

at org.apache.zeppelin.dep.DependencyResolver.load(DependencyResolver.java:96)

at org.apache.zeppelin.dep.DependencyResolver.load(DependencyResolver.java:88)

at org.apache.zeppelin.interpreter.InterpreterSettingManager$3.run(InterpreterSettingManager.java:565)

INFO [2020-08-07 05:50:08,758] ({qtp1786364562-934} InterpreterRestApi.java[restartSetting]:181) - Restart interpreterSetting 2C4U48MY3_spark2, msg=

INFO [2020-08-07 05:50:51,049] ({qtp1786364562-934} NotebookServer.java[sendNote]:711) - New operation from 10.0.77.199 : 54803 : admin : GET_NOTE : 2FG5ZG2T8

WARN [2020-08-07 05:50:51,051] ({qtp1786364562-934} FileSystemNotebookRepo.java[revisionHistory]:171) - revisionHistory is not implemented for HdfsNotebookRepo

INFO [2020-08-07 05:50:51,182] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:188) - Create interpreter instance spark2 for note 2FG5ZG2T8

INFO [2020-08-07 05:50:51,183] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:221) - Interpreter org.apache.zeppelin.spark.SparkInterpreter 1880223590 created

INFO [2020-08-07 05:50:51,186] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:221) - Interpreter org.apache.zeppelin.spark.SparkSqlInterpreter 2127961876 created

INFO [2020-08-07 05:50:51,190] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:221) - Interpreter org.apache.zeppelin.spark.DepInterpreter 2062124054 created

INFO [2020-08-07 05:50:51,191] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:221) - Interpreter org.apache.zeppelin.spark.PySparkInterpreter 1072370991 created

INFO [2020-08-07 05:50:51,192] ({qtp1786364562-944} InterpreterFactory.java[createInterpretersForNote]:221) - Interpreter org.apache.zeppelin.spark.SparkRInterpreter 1082046315 created

INFO [2020-08-07 05:50:54,634] ({pool-2-thread-14} SchedulerFactory.java[jobStarted]:131) - Job paragraph_1596690982396_-221132441 started by scheduler org.apache.zeppelin.interpreter.remote.RemoteInterpretershared_session1767495081

INFO [2020-08-07 05:50:54,635] ({pool-2-thread-14} Paragraph.java[jobRun]:366) - run paragraph 20200806-121622_1573161163 using spark2 org.apache.zeppelin.interpreter.LazyOpenInterpreter@7011ef66

INFO [2020-08-07 05:50:54,636] ({pool-2-thread-14} RemoteInterpreterManagedProcess.java[start]:137) - Run interpreter process [/usr/hdp/current/zeppelin-server/bin/interpreter.sh, -d, /usr/hdp/current/zeppelin-server/interpreter/spark, -p, 33438, -l, /usr/hdp/current/zeppelin-server/local-repo/2C4U48MY3_spark2, -g, spark2]

INFO [2020-08-07 05:50:58,646] ({pool-2-thread-14} RemoteInterpreter.java[init]:248) - Create remote interpreter org.apache.zeppelin.spark.SparkInterpreter

INFO [2020-08-07 05:50:58,941] ({pool-2-thread-14} RemoteInterpreter.java[pushAngularObjectRegistryToRemote]:580) - Push local angular object registry from ZeppelinServer to remote interpreter group 2C4U48MY3_spark2:shared_process

INFO [2020-08-07 05:50:58,975] ({pool-2-thread-14} RemoteInterpreter.java[init]:248) - Create remote interpreter org.apache.zeppelin.spark.SparkSqlInterpreter

INFO [2020-08-07 05:50:58,980] ({pool-2-thread-14} RemoteInterpreter.java[init]:248) - Create remote interpreter org.apache.zeppelin.spark.DepInterpreter

INFO [2020-08-07 05:50:58,984] ({pool-2-thread-14} RemoteInterpreter.java[init]:248) - Create remote interpreter org.apache.zeppelin.spark.PySparkInterpreter

INFO [2020-08-07 05:50:58,993] ({pool-2-thread-14} RemoteInterpreter.java[init]:248) - Create remote interpreter org.apache.zeppelin.spark.SparkRInterpreter

WARN [2020-08-07 05:51:32,241] ({pool-2-thread-14} NotebookServer.java[afterStatusChange]:2074) - Job 20200806-121622_1573161163 is finished, status: ERROR, exception: null, result: %text org.apache.spark.sql.AnalysisException: Failed to find data source: avro. Please find an Avro package at http://spark.apache.org/third-party-projects.html;

at org.apache.spark.sql.execution.datasources.DataSource$.lookupDataSource(DataSource.scala:630)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:190)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:174)

... 47 elided

INFO [2020-08-07 05:51:32,294] ({pool-2-thread-14} SchedulerFactory.java[jobFinished]:137) - Job paragraph_1596690982396_-221132441 finished by scheduler org.apache.zeppelin.interpreter.remote.RemoteInterpretershared_session1767495081

How can I solve this? I could not find a post or thread that addresses it directly. Is there a way to check whether the external Avro package has been downloaded for Zeppelin?

Note: HDP 2.6.5, Zeppelin 0.7.3, Spark 2.3.0

Thanks.

1 Answer

Stack Overflow user

Accepted answer

Answered 2020-10-30 04:11:09

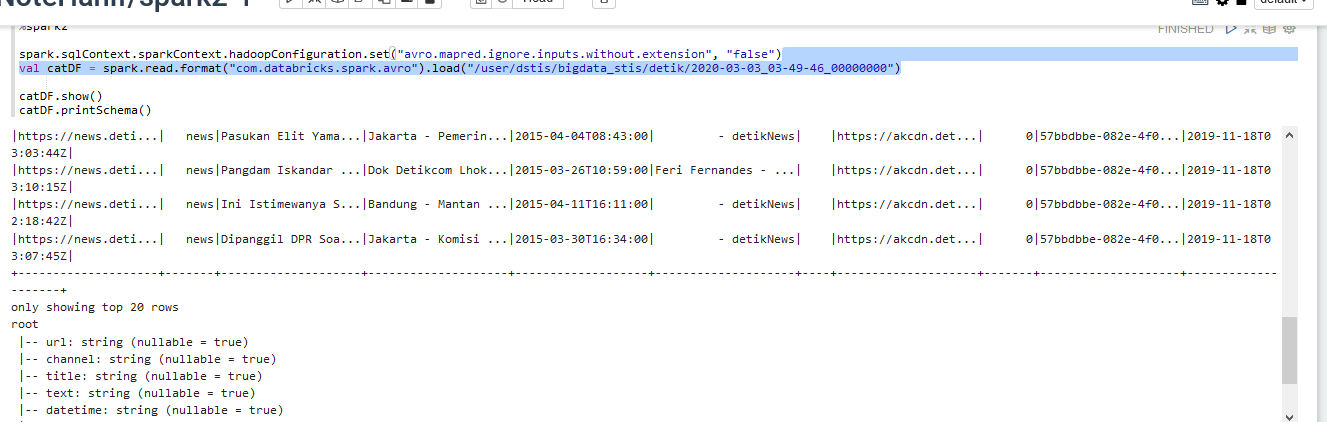

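We resolved it as follows.

First, download spark-avro_2.11-4.0.0.jar (from Databricks), then:

- Put it in the /usr/hdp//spark2/jars/ directory.

- Go to the Zeppelin interpreter settings and find the spark2 interpreter.

- Click edit and find the spark.jars section.

- Add the path of that jar to the spark.jars section.

- Save and restart the interpreter (as shown in the picture).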
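For the download step, it can help to know where the jar would sit in a Maven repository, for example to test from the Zeppelin host whether that URL is reachable at all. The following is a minimal sketch, assuming the standard Maven repository layout. `MavenRepoPaths` and its methods are hypothetical names introduced for illustration; the repository URL and the coordinate are the ones from the question.

```scala
// Sketch only: MavenRepoPaths is a hypothetical helper, not part of Zeppelin or Spark.
// It assumes the standard Maven layout: group/artifact/version/artifact-version.jar
object MavenRepoPaths {
  // File name of the jar as stored in a Maven repository.
  def jarName(artifactId: String, version: String): String =
    s"$artifactId-$version.jar"

  // Full URL of the jar: dots in the group id become path separators.
  def jarUrl(repo: String, groupId: String, artifactId: String, version: String): String = {
    val base = if (repo.endsWith("/")) repo else repo + "/"
    val groupPath = groupId.replace('.', '/')
    s"$base$groupPath/$artifactId/$version/${jarName(artifactId, version)}"
  }
}

// Usage in a %spark2 paragraph, with the coordinate from the error message:
//   MavenRepoPaths.jarUrl("https://repo1.maven.org/maven2/",
//                         "com.databricks", "spark-avro_2.11", "4.0.0")
```

If that URL cannot be fetched from the Zeppelin host (for instance because the cluster has no outbound internet access), that would be consistent with the "Cannot fetch dependencies" alert, and copying the jar manually as in the steps above avoids the download entirely.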

Or use the script below:

spark.sqlContext.sparkContext.hadoopConfiguration.set("avro.mapred.ignore.inputs.without.extension", "false")

val catDF = spark.read.format("com.databricks.spark.avro").load("/sample")

In the first line, set the option to false or true depending on whether your input files have an extension.
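On the question of how to check whether the Avro package is actually available: one rough approach is to test whether the data source class can be loaded, and to look for the jar in the interpreter's local repository. This is a sketch under assumptions, not a Zeppelin feature. `AvroAvailability` is a hypothetical helper; the class name `com.databricks.spark.avro.DefaultSource` is the entry point of the Databricks spark-avro package; the directory is the `local-repo` path passed with `-l` in the log from the question, and treating it as the place where Zeppelin stores downloaded dependencies is an assumption.

```scala
import java.io.File
import scala.util.Try

// Sketch only: AvroAvailability is a hypothetical helper for diagnosing the setup.
object AvroAvailability {
  // True if the named class can be loaded from the current classpath.
  def isOnClasspath(className: String): Boolean =
    Try(Class.forName(className)).isSuccess

  // Names of the .jar files directly inside `dir` whose name contains `keyword`.
  // Returns an empty result if the directory does not exist or cannot be read.
  def findJars(dir: String, keyword: String): Seq[String] =
    Option(new File(dir).listFiles())
      .map(_.toSeq)
      .getOrElse(Seq.empty)
      .filter(f => f.isFile && f.getName.endsWith(".jar") && f.getName.contains(keyword))
      .map(_.getName)
      .sorted
}

// Usage in a %spark2 paragraph (path taken from the log in the question):
//   AvroAvailability.isOnClasspath("com.databricks.spark.avro.DefaultSource")
//   AvroAvailability.findJars(
//     "/usr/hdp/current/zeppelin-server/local-repo/2C4U48MY3_spark2", "avro")
```

If the class check returns false after the interpreter has been restarted, the jar is most likely not on the interpreter's classpath, and the `spark.jars` entry is worth rechecking. The directory check only sees files on the host where the paragraph runs.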

Original page content provided by Stack Overflow.

Original link:

https://stackoverflow.com/questions/63293167
