CPU faster than GPU using xgb and XGBClassifier

I apologize in advance, as I am a beginner. I am running GPU vs. CPU tests with xgb and XGBClassifier. The results are as follows:

passed time with xgb (gpu): 0.390s

passed time with XGBClassifier (gpu): 0.465s

passed time with xgb (cpu): 0.412s

passed time with XGBClassifier (cpu): 0.421s

I am wondering why the CPU seems to perform on par with the GPU, if not better. This is my setup:

- Python 3.6.1

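- OS: Windows 10 64-bit

- GPU: NVIDIA RTX 2070 Super, 8 GB VRAM (driver updated to the latest version)

- CUDA 10.1 installed

- CPU: i7 10700, 2.9 GHz

- Running in a Jupyter Notebook

- Installed the nightly build of xgboost 1.2.0 via pip

I also tried the xgboost version installed from a pre-built binary wheel using pip: same issue.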

Here is the test code I am using (lifted from here):

    import time
    import xgboost as xgb

    # X_train2 and y_train are my training features and labels

    # xgb.train on the GPU
    param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
             'colsample_bytree':0.8, 'eta':0.5, 'min_child_weight':1,
             'tree_method':'gpu_hist'
             }
    num_round = 100
    dtrain = xgb.DMatrix(X_train2, y_train)
    tic = time.time()
    model = xgb.train(param, dtrain, num_round)
    print('passed time with xgb (gpu): %.3fs'%(time.time()-tic))

    # XGBClassifier on the GPU
    xgb_param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                 'colsample_bytree':0.8, 'learning_rate':0.5, 'min_child_weight':1,
                 'tree_method':'gpu_hist'}
    model = xgb.XGBClassifier(**xgb_param)
    tic = time.time()
    model.fit(X_train2, y_train)
    print('passed time with XGBClassifier (gpu): %.3fs'%(time.time()-tic))

    # xgb.train on the CPU
    param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
             'colsample_bytree':0.8, 'eta':0.5, 'min_child_weight':1,
             'tree_method':'hist'}
    num_round = 100
    dtrain = xgb.DMatrix(X_train2, y_train)
    tic = time.time()
    model = xgb.train(param, dtrain, num_round)
    print('passed time with xgb (cpu): %.3fs'%(time.time()-tic))

    # XGBClassifier on the CPU
    xgb_param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                 'colsample_bytree':0.8, 'learning_rate':0.5, 'min_child_weight':1,
                 'tree_method':'hist'}
    model = xgb.XGBClassifier(**xgb_param)
    tic = time.time()
    model.fit(X_train2, y_train)
    print('passed time with XGBClassifier (cpu): %.3fs'%(time.time()-tic))

I tried incorporating sklearn grid search to see whether I would get faster speeds on the GPU, but it ended up being much slower than on the CPU:

passed time with XGBClassifier (gpu): 2457.510s

Best parameter (CV score=0.490):

{'xgbclass__alpha': 100, 'xgbclass__eta': 0.01, 'xgbclass__gamma': 0.2, 'xgbclass__max_depth': 5, 'xgbclass__n_estimators': 100}

passed time with XGBClassifier (cpu): 383.662s

Best parameter (CV score=0.487):

{'xgbclass__alpha': 100, 'xgbclass__eta': 0.1, 'xgbclass__gamma': 0.2, 'xgbclass__max_depth': 2, 'xgbclass__n_estimators': 20}

The dataset I am using has 75k observations. Any idea why I am not getting a speedup from using the GPU? Is the dataset too small to benefit from the GPU?

Any help would be much appreciated. Thank you very much!

3 Answers

Stack Overflow user

Posted on 2021-01-11 12:47:09

Interesting question. As you note, there are a few examples of this that have been reported on GitHub and on the official xgboost site:

- https://github.com/dmlc/xgboost/issues/2819

- https://discuss.xgboost.ai/t/no-gpu-usage-when-using-gpu-hist/532

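Others have posted similar questions as well.

There are a few things to check. The documentation states:

Tree construction (training) and prediction can be accelerated with CUDA-capable GPUs.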

1. Is your GPU CUDA-enabled?
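One way to approach this first check is to ask the NVIDIA driver which GPUs it can see. The sketch below shells out to `nvidia-smi`; the helper name `cuda_gpu_visible` is made up for illustration, and a positive result only shows that the driver sees a GPU, not that the installed xgboost build was compiled with CUDA support.

```python
import shutil
import subprocess


def cuda_gpu_visible():
    """Return True if nvidia-smi is on PATH and lists at least one GPU."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return False  # driver tools not installed or not on PATH
    try:
        result = subprocess.run(
            [exe, "--query-gpu=name,driver_version,memory.total",
             "--format=csv,noheader"],
            stdout=subprocess.PIPE, stderr=subprocess.PIPE,
            universal_newlines=True, timeout=30,
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    if result.returncode != 0:
        return False
    # One non-empty line per GPU: name, driver version, total memory
    gpus = [line for line in result.stdout.splitlines() if line.strip()]
    for line in gpus:
        print(line)
    return len(gpus) > 0


print("GPU visible to the driver:", cuda_gpu_visible())
```

If this prints False on a machine that does have an NVIDIA card, the driver installation is the first thing to look at before tuning any xgboost parameters.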

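2. Are you using parameters that can be affected by GPU usage?

Yes, you are. Remember that only certain parameters benefit from using a GPU. Those are:

{subsample, sampling_method, colsample_bytree, colsample_bylevel, max_bin, gamma, gpu_id, predictor, grow_policy, monotone_constraints, interaction_constraints, single_precision_histogram}

Most of these are included in your hyperparameter set, which is a good thing.

3. Are you configuring parameters to use GPU support?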

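If you look at the XGBoost Parameters page, you can find additional areas that may help improve your times. For example, updater can be set to grow_gpu_hist (note that this is moot since you already set tree_method, but it is mentioned here for reference):

grow_gpu_hist: Grow tree with GPU.

At the bottom of the Parameters page there are additional parameters that apply when gpu_hist is enabled, specifically deterministic_histogram (note that this is also moot, since it defaults to True):

Build histogram on GPU deterministically. Histogram building is not deterministic due to the non-associative aspect of floating point summation. We employ a pre-rounding routine to mitigate the issue, which may lead to slightly lower accuracy. Set to false to disable it.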
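As a rough illustration of this third check, the GPU and CPU runs in the question differ only in `tree_method` (the script later in this answer also sets `gpu_id`). The helper below, `make_params`, is a hypothetical name that simply packages that difference; `deterministic_histogram` is left out because it already defaults to True.

```python
def make_params(use_gpu, gpu_id=0):
    """Build a training parameter dict in the style of the question's script."""
    params = {
        "max_depth": 5,
        "objective": "binary:logistic",
        "subsample": 0.8,
        "colsample_bytree": 0.8,
        "eta": 0.5,
        "min_child_weight": 1,
    }
    if use_gpu:
        # GPU histogram algorithm, as used in the question (xgboost 1.2.0 era)
        params["tree_method"] = "gpu_hist"
        params["gpu_id"] = gpu_id
    else:
        # CPU histogram algorithm
        params["tree_method"] = "hist"
    return params


print(make_params(use_gpu=True))
print(make_params(use_gpu=False))
```

In XGBoost 2.0 and later the same choice is expressed as `tree_method="hist"` together with `device="cuda"`, and `gpu_hist` is deprecated; the values above match the older API used in the question.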

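4. Data

I ran some interesting experiments with some data. Since I did not have access to your data, I used sklearn's make_classification, which generates data in a rather robust way.

I made a few changes to your script but noticed no difference: I changed hyperparameters on the GPU vs. CPU examples, ran this 100 times and took the average results, and so on. I recalled that I once used the XGBoost GPU capabilities to speed up some analytics; however, I was working on a much larger dataset.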
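Sub-second single runs like those in the question can be dominated by noise and by one-off setup cost, so repeating the measurement and averaging, as described above, is a sensible precaution. A minimal harness for that, assuming a hypothetical helper `benchmark` and a stand-in workload in place of `xgb.train`, might look like this:

```python
import statistics
import time


def benchmark(fn, repeats=10, warmup=1):
    """Time fn() several times and summarise the wall-clock results."""
    for _ in range(warmup):
        fn()  # warm-up run, not recorded
    timings = []
    for _ in range(repeats):
        tic = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - tic)
    return {
        "mean": statistics.mean(timings),
        "median": statistics.median(timings),
        "min": min(timings),
        "max": max(timings),
    }


# Stand-in workload so the sketch runs anywhere; with the question's script
# this would be something like: lambda: xgb.train(param, dtrain, num_round)
stats = benchmark(lambda: sum(i * i for i in range(100000)), repeats=5)
print("mean %.4fs, median %.4fs" % (stats["mean"], stats["median"]))
```

Reporting the median alongside the mean makes it easier to spot a single slow outlier run.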

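I slightly edited your script to use this data, and also began changing the number of samples and features in the dataset (via the n_samples and n_features parameters) to observe the effect on runtime. It appears that a GPU significantly improves training times for high-dimensional data, but that bulk data with many samples does not see a large improvement. See my script below: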

    import time

    import pandas as pd
    import xgboost as xgb
    from sklearn.datasets import make_classification

    xgb_gpu = []
    xgbclassifier_gpu = []
    xgb_cpu = []
    xgbclassifier_cpu = []

    n_samples = 75000
    n_features = 500

    for i in range(10):
        n_samples += 10000
        n_features += 300

        # Make my own data since I do not have the data from the SO question.
        # make_classification needs integer counts, hence int().
        X_train2, y_train = make_classification(
            n_samples=n_samples, n_features=int(n_features*0.9),
            n_informative=int(n_features*0.1), n_redundant=100,
            flip_y=0.10, random_state=8)

        # Keep script from OP intact
        param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                 'colsample_bytree':0.8, 'eta':0.5, 'min_child_weight':1,
                 'tree_method':'gpu_hist', 'gpu_id': 0
                 }
        num_round = 100
        dtrain = xgb.DMatrix(X_train2, y_train)
        tic = time.time()
        model = xgb.train(param, dtrain, num_round)
        elapsed = time.time() - tic
        print('passed time with xgb (gpu): %.3fs' % elapsed)
        xgb_gpu.append(elapsed)

        xgb_param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                     'colsample_bytree':0.8, 'learning_rate':0.5, 'min_child_weight':1,
                     'tree_method':'gpu_hist', 'gpu_id':0}
        model = xgb.XGBClassifier(**xgb_param)
        tic = time.time()
        model.fit(X_train2, y_train)
        elapsed = time.time() - tic
        print('passed time with XGBClassifier (gpu): %.3fs' % elapsed)
        xgbclassifier_gpu.append(elapsed)

        param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                 'colsample_bytree':0.8, 'eta':0.5, 'min_child_weight':1,
                 'tree_method':'hist'}
        num_round = 100
        dtrain = xgb.DMatrix(X_train2, y_train)
        tic = time.time()
        model = xgb.train(param, dtrain, num_round)
        elapsed = time.time() - tic
        print('passed time with xgb (cpu): %.3fs' % elapsed)
        xgb_cpu.append(elapsed)

        xgb_param = {'max_depth':5, 'objective':'binary:logistic', 'subsample':0.8,
                     'colsample_bytree':0.8, 'learning_rate':0.5, 'min_child_weight':1,
                     'tree_method':'hist'}
        model = xgb.XGBClassifier(**xgb_param)
        tic = time.time()
        model.fit(X_train2, y_train)
        elapsed = time.time() - tic
        print('passed time with XGBClassifier (cpu): %.3fs' % elapsed)
        xgbclassifier_cpu.append(elapsed)

    # Collect the four timing series, one row per interval
    df = pd.DataFrame({'XGB GPU': xgb_gpu, 'XGBClassifier GPU': xgbclassifier_gpu, 'XGB CPU': xgb_cpu, 'XGBClassifier CPU': xgbclassifier_cpu})
    #df.to_csv('both_results.csv')

I ran this changing each of (samples, features) separately and together, on the same datasets. See the results below:

| Interval | XGB GPU | XGBClassifier GPU | XGB CPU | XGBClassifier CPU | Metric |

|:--------:|:--------:|:-----------------:|:--------:|:-----------------:|:----------------:|

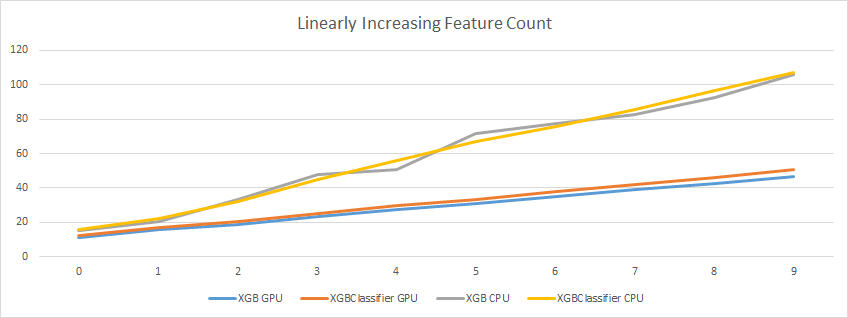

| 0 | 11.3801 | 12.00785 | 15.20124 | 15.48131 | Changed Features |

| 1 | 15.67674 | 16.85668 | 20.63819 | 22.12265 | Changed Features |

| 2 | 18.76029 | 20.39844 | 33.23108 | 32.29926 | Changed Features |

| 3 | 23.147 | 24.91953 | 47.65588 | 44.76052 | Changed Features |

| 4 | 27.42542 | 29.48186 | 50.76428 | 55.88155 | Changed Features |

| 5 | 30.78596 | 33.03594 | 71.4733 | 67.24275 | Changed Features |

| 6 | 35.03331 | 37.74951 | 77.68997 | 75.61216 | Changed Features |

| 7 | 39.13849 | 42.17049 | 82.95307 | 85.83364 | Changed Features |

| 8 | 42.55439 | 45.90751 | 92.33368 | 96.72809 | Changed Features |

| 9 | 46.89023 | 50.57919 | 105.8298 | 107.3893 | Changed Features |

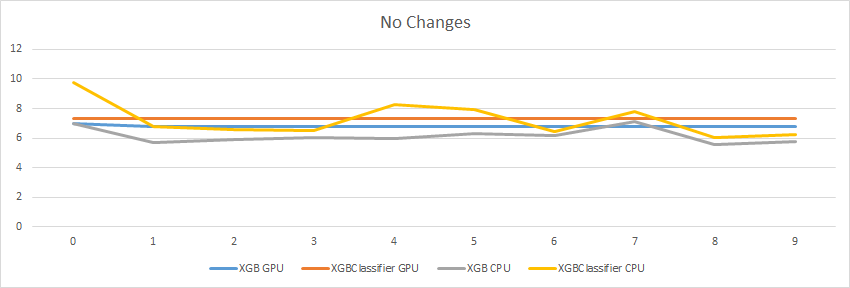

| 0 | 7.013227 | 7.303488 | 6.998254 | 9.733574 | No Changes |

| 1 | 6.757523 | 7.302388 | 5.714839 | 6.805287 | No Changes |

| 2 | 6.753428 | 7.291906 | 5.899611 | 6.603533 | No Changes |

| 3 | 6.749848 | 7.293555 | 6.005773 | 6.486256 | No Changes |

| 4 | 6.755352 | 7.297607 | 5.982163 | 8.280619 | No Changes |

| 5 | 6.756498 | 7.335412 | 6.321188 | 7.900422 | No Changes |

| 6 | 6.792402 | 7.332112 | 6.17904 | 6.443676 | No Changes |

| 7 | 6.786584 | 7.311666 | 7.093638 | 7.811417 | No Changes |

| 8 | 6.7851 | 7.30604 | 5.574762 | 6.045969 | No Changes |

| 9 | 6.789152 | 7.309363 | 5.751018 | 6.213471 | No Changes |

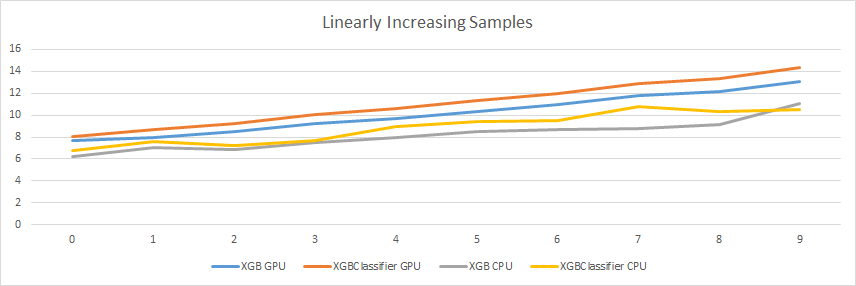

| 0 | 7.696765 | 8.03615 | 6.175457 | 6.764809 | Changed Samples |

| 1 | 7.914885 | 8.646722 | 6.997217 | 7.598789 | Changed Samples |

| 2 | 8.489555 | 9.2526 | 6.899783 | 7.202334 | Changed Samples |

| 3 | 9.197605 | 10.02934 | 7.511708 | 7.724675 | Changed Samples |

| 4 | 9.73642 | 10.64056 | 7.918493 | 8.982463 | Changed Samples |

| 5 | 10.34522 | 11.31103 | 8.524865 | 9.403711 | Changed Samples |

| 6 | 10.94025 | 11.98357 | 8.697257 | 9.49277 | Changed Samples |

| 7 | 11.80717 | 12.93195 | 8.734307 | 10.79595 | Changed Samples |

| 8 | 12.18282 | 13.38646 | 9.175231 | 10.33532 | Changed Samples |

| 9 | 13.05499 | 14.33106 | 11.04398 | 10.50722 | Changed Samples |

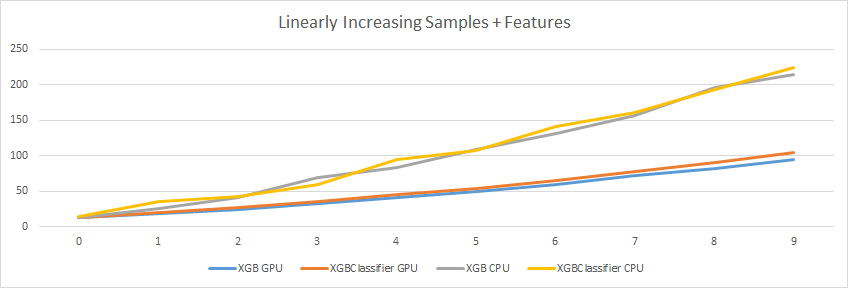

| 0 | 12.43683 | 13.19787 | 12.80741 | 13.86206 | Changed Both |

| 1 | 18.59139 | 20.01569 | 25.61141 | 35.37391 | Changed Both |

| 2 | 24.37475 | 26.44214 | 40.86238 | 42.79259 | Changed Both |

| 3 | 31.96762 | 34.75215 | 68.869 | 59.97797 | Changed Both |

| 4 | 41.26578 | 44.70537 | 83.84672 | 94.62811 | Changed Both |

| 5 | 49.82583 | 54.06252 | 109.197 | 108.0314 | Changed Both |

| 6 | 59.36528 | 64.60577 | 131.1234 | 140.6352 | Changed Both |

| 7 | 71.44678 | 77.71752 | 156.1914 | 161.4897 | Changed Both |

| 8 | 81.79306 | 90.56132 | 196.0033 | 193.4111 | Changed Both |

| 9 | 94.71505 | 104.8044 | 215.0758 | 224.6175 | Changed Both |不变

线性增加特征计数

线性增长样本

线性增长样本+特征
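To make the comparison easier to read, the CPU/GPU time ratio can be computed from the table. The sketch below uses only the interval 9 values of the `XGB GPU` and `XGB CPU` columns, copied from the table above; a ratio above 1 means the GPU run was faster.

```python
# (XGB GPU seconds, XGB CPU seconds) at interval 9, copied from the table
last_interval = {
    "Changed Features": (46.89023, 105.8298),
    "No Changes": (6.789152, 5.751018),
    "Changed Samples": (13.05499, 11.04398),
    "Changed Both": (94.71505, 215.0758),
}

speedups = {}
for metric, (gpu_s, cpu_s) in last_interval.items():
    speedups[metric] = cpu_s / gpu_s  # > 1 means the GPU run was faster
    print("%-17s CPU/GPU = %.2f" % (metric, speedups[metric]))
```

With these numbers the ratio comes out above 2 when the feature count grows, and below 1 when only the sample count grows or nothing changes.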

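As I began to research more, this made sense. GPUs are known to scale well with high-dimensional data, so you would expect to see an improvement in training time if your data were high-dimensional. See the following examples: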

- 1/euclid.ss/1294167962

- Faster K-means clustering on high-dimensional data with GPU support

- https://link.springer.com/article/10.1007/s11063-014-9383-4

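Although we cannot say for sure without access to your data, it seems that the hardware capabilities of a GPU enable significant performance gains when the data supports it, and that may not be the case given the size and shape of the data you have.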

Stack Overflow user

Posted on 2021-04-13 20:05:52

Stack Overflow user

Posted on 2021-01-10 16:03:38

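Choosing CPU vs. GPU

The complexity of a neural network also depends on the number of input features, not just on the number of units in the hidden layer. If your hidden layer has 50 units and each observation in your dataset has 4 input features, then your network is tiny (around 200 parameters). If each observation has 5 million input features, as happens in some large-scale settings, then your network is quite large in terms of the number of parameters.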
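The arithmetic behind this estimate is simply inputs times hidden units. A quick sketch, using a hypothetical helper `dense_layer_params` and ignoring bias terms to match the "around 200" figure:

```python
def dense_layer_params(n_inputs, n_units, bias=True):
    """Weights (plus optional biases) in one fully connected layer."""
    return n_inputs * n_units + (n_units if bias else 0)


small = dense_layer_params(4, 50, bias=False)        # 4 * 50
large = dense_layer_params(5000000, 50, bias=False)  # 5 million * 50
print(small, large)  # prints: 200 250000000
```

Counting the 50 bias terms as well would give 250 parameters for the small case, which is still tiny.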

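From what I can see, there are quite a few parameters to process in the setup above, so it takes a lot of time on the GPU.

From my personal experience:

I once trained a CNN on some images using a GPU; the CPU needed less processing time to produce the trained model on the full dataset, while the GPU needed more.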

https://stackoverflow.com/questions/63442697
