How to get the exact text contained in multiple classes

Asked 2020-09-17 10:53:37

I'm new to Python. I tried to scrape data from a website, but I couldn't extract the data I need. Here is my Python code:

import requests
from bs4 import BeautifulSoup

url = 'https://v2.sherpa.ac.uk/view/publisher_list/1.html'
r = requests.get(url)
htmlContent = r.content
soup = BeautifulSoup(htmlContent, 'html.parser')
title = soup.title
print(soup.find_all("div", {"class" :["ep_view_page ep_view_page_view_publisher_list", "row"]}))

The problem I'm facing is that I need the data inside div class="row", but there are two levels of div with the class name row. One more thing: what should I write to get data from multiple URLs and pages? As you can see, the tags have the classes col span-6 and col span-3, and following an href opens a new page. What should I write to handle that?

<div class="row">

<div class="col span-6">

<a href="http://v2.sherpa.ac.uk/id/publisher/50974?template=romeo">'Grigore Antipa' National Museum of Natural History</a>

</div>

<div class="col span-3">

<strong>Romania</strong>

<span class="label">Country</span>

</div>

<div class="col span-3">

<strong>1 [<a href="/view/publication_by_publisher/50974.html">view</a> ]</strong>

<span class="label">Publication Count</span>

</div>

</div>

Here is the structure of the page:

<div class="row">

<h1 class="h1_like_h2">Publishers</h1>

<div class="ep_view_page ep_view_page_view_publisher_list">

</p><div class="row">

<div class="col span-6">

<a href="http://v2.sherpa.ac.uk/id/publisher/50974?template=romeo">'Grigore Antipa' National Museum of Natural History</a>

</div>

<div class="col span-3">

<strong>Romania</strong>

<span class="label">Country</span>

</div>

<div class="col span-3">

<strong>1 [<a href="/view/publication_by_publisher/50974.html">view</a> ]</strong>

<span class="label">Publication Count</span>

</div>

</div>

<p></p><a name="group_=28"></a><h2>(</h2><p>

</p><div class="row">

<div class="col span-6">

<a href="http://v2.sherpa.ac.uk/id/publisher/13937?template=romeo">(ISC)²</a>

</div>

<div class="col span-3">

<strong>United States of America</strong>

<span class="label">Country</span>

</div>

<div class="col span-3">

<strong>1 [<a href="/view/publication_by_publisher/13937.html">view</a> ]</strong>

<span class="label">Publication Count</span>

</div>

</div>

<p></p><a name="group_1"></a><h2>1</h2><p>

</p><div class="row">

<div class="col span-6">

<a href="http://v2.sherpa.ac.uk/id/publisher/1939?template=romeo">1066 Tidsskrift for historie</a>

</div>

<div class="col span-3">

<strong>Denmark</strong>

<span class="label">Country</span>

</div>

<div class="col span-3">

<strong>1 [<a href="/view/publication_by_publisher/1939.html">view</a> ]</strong>

<span class="label">Publication Count</span>

</div>

</div>

<p></p><a name="group_A"></a><h2>A</h2><p>

and so on...

1 Answer

Stack Overflow user

Answered 2020-09-18 17:53:50

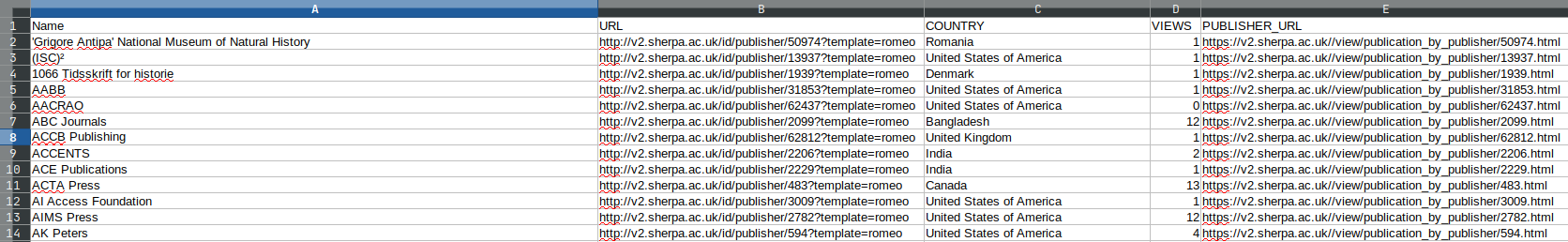

I'm not entirely sure this is what you want, but I enjoyed pulling this data out.

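Basically, the code below scrapes the entire page (4487 entries) for the following fields.

Note: this is just one sample of the data.

- The name of the entity: ANZAMEMS (Australian and New Zealand Association for Medieval and Early Modern Studies)
- The URL of its subpage: http://v2.sherpa.ac.uk/id/publisher/1853?template=romeo
- The country: Australia
- The "view" count (labelled Publication Count on the page): 1
- The "publisher url", i.e. the link behind "view": https://v2.sherpa.ac.uk/view/publication_by_publisher/1853.html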
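As a rough illustration of where each of these fields sits in the markup, the sketch below parses a single row copied from the HTML in the question. The `parse_row` helper, the dictionary keys, and the `SAMPLE_ROW` string are assumptions made for this example and are not part of the original answer. The CSS selectors assume the page keeps the `row` / `col span-6` / `col span-3` layout shown in the question.

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup

BASE_URL = "https://v2.sherpa.ac.uk/"

# One row copied from the HTML in the question (nothing is fetched live here).
SAMPLE_ROW = """
<div class="row">
  <div class="col span-6">
    <a href="http://v2.sherpa.ac.uk/id/publisher/1939?template=romeo">1066 Tidsskrift for historie</a>
  </div>
  <div class="col span-3">
    <strong>Denmark</strong>
    <span class="label">Country</span>
  </div>
  <div class="col span-3">
    <strong>1 [<a href="/view/publication_by_publisher/1939.html">view</a> ]</strong>
    <span class="label">Publication Count</span>
  </div>
</div>
"""


def parse_row(row):
    """Hypothetical helper: turn one div.row into a dict of the five fields."""
    name_link = row.select_one("div.span-6 a")
    # Assumes exactly two "col span-3" cells per row: country, then count.
    country_cell, count_cell = row.select("div.span-3")
    count_link = count_cell.find("a")
    return {
        "name": name_link.get_text(strip=True),
        "url": name_link["href"],
        "country": country_cell.find("strong").get_text(strip=True),
        # The <strong> text looks like "1 [view ]"; keep only the number.
        "count": count_cell.find("strong").get_text().split("[")[0].strip(),
        # Resolve the relative href against the site root.
        "publications_url": urljoin(BASE_URL, count_link["href"]),
    }


soup = BeautifulSoup(SAMPLE_ROW, "html.parser")
record = parse_row(soup.select_one("div.row"))
print(record)
```

On the full page, a selector such as `soup.select("div.ep_view_page_view_publisher_list div.row")` should return only the inner rows, because the outer `div.row` wraps that container rather than sitting inside it. Parsing row by row also keeps each name, country, and count together, so a row with a missing cell raises an error instead of silently shifting the columns.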

The full script then writes all of these fields to a .csv file.

Here is the code:

import csv
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

BASE_URL = "https://v2.sherpa.ac.uk/"


def make_soup():
    # Download the publisher list page and parse it.
    p = requests.get("https://v2.sherpa.ac.uk/view/publisher_list/1.html").text
    return BeautifulSoup(p, "html.parser")


main_soup = make_soup()
# Each entry is one "col span-6" div (name and link) plus two "col span-3" divs.
col_span_6_soup = main_soup.find_all("div", {"class": "col span-6"})
col_span_3_soup = main_soup.find_all("div", {"class": "col span-3"})


def get_names_and_urls():
    # Skip divs that contain no link before reading the href.
    data = [a.find("a") for a in col_span_6_soup]
    return [
        [i.text, i.get("href")]
        for i in data
        if i is not None and "romeo" in i.get("href", "")
    ]


def get_countries():
    # Even-indexed "col span-3" divs hold the country.
    return [c.find("strong").text for c in col_span_3_soup[::2]]


def get_views_and_publisher():
    # Odd-indexed "col span-3" divs hold the count and the "view" link.
    return [
        [
            i.find("strong").text.replace(" [view ]", ""),
            # urljoin resolves the relative href without doubling the slash.
            urljoin(BASE_URL, i.find("a").get("href")),
        ] for i in col_span_3_soup[1::2]
    ]


table = zip(get_names_and_urls(), get_countries(), get_views_and_publisher())

# newline="" stops the csv module from writing blank rows on Windows.
with open("loads_of_data.csv", "w", newline="", encoding="utf-8") as output:
    w = csv.writer(output)
    w.writerow(["NAME", "URL", "COUNTRY", "VIEWS", "PUBLISHER_URL"])
    for col1, col2, col3 in table:
        w.writerow([*col1, col2, *col3])

print("You've got all the data!")
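The question also asks about following each `href` and covering several pages, which the answer's script does not address. One possible approach, sketched here, is to resolve every link against the page it came from with `urljoin` and then loop over the resulting URLs. The names `fetch_soup`, `absolute_links`, and `crawl_publication_pages`, the one-second delay, the `limit` parameter, and the inline sample HTML are all illustrative assumptions. The live requests sit inside functions, so nothing is fetched until `crawl_publication_pages` is actually called.

```python
import time
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

LIST_URL = "https://v2.sherpa.ac.uk/view/publisher_list/1.html"


def fetch_soup(url):
    """Hypothetical helper: download one page and parse it."""
    response = requests.get(url, timeout=30)
    response.raise_for_status()
    return BeautifulSoup(response.text, "html.parser")


def absolute_links(soup, page_url, selector):
    """Return absolute URLs for every <a href> matched by the CSS selector."""
    return [
        urljoin(page_url, a["href"])
        for a in soup.select(selector)
        if a.get("href")
    ]


def crawl_publication_pages(list_url=LIST_URL, limit=3, delay=1.0):
    """Follow the first `limit` "view" links and yield (url, page title)."""
    list_soup = fetch_soup(list_url)
    links = absolute_links(list_soup, list_url, "div.row div.span-3 a")
    for url in links[:limit]:
        time.sleep(delay)  # pause between requests to go easy on the server
        page = fetch_soup(url)
        title = page.title.get_text(strip=True) if page.title else ""
        yield url, title


# Offline check of the link resolution, using a fragment from the question.
sample = BeautifulSoup(
    '<div class="row"><div class="col span-3">'
    '<a href="/view/publication_by_publisher/50974.html">view</a>'
    "</div></div>",
    "html.parser",
)
resolved = absolute_links(sample, LIST_URL, "div.row div.span-3 a")
print(resolved)
```

Calling `list(crawl_publication_pages(limit=3))` would fetch three of the linked pages. To cover other list pages, the same function can be called with a different `list_url`.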

Original question on Stack Overflow:

https://stackoverflow.com/questions/63936498
