AttentionQKV from Trax


Asked on 2020-09-30 00:36:05

The AttentionQKV layer in Trax is implemented as follows:

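```python
# Imports as in trax/layers/attention.py (assumed Trax 1.3.x layout);
# PureAttention is defined in that same module.
from trax.layers import combinators as cb
from trax.layers import core
from trax.layers.attention import PureAttention


def AttentionQKV(d_feature, n_heads=1, dropout=0.0, mode='train'):
  """Returns a layer that maps (q, k, v, mask) to (activations, mask).

  See `Attention` above for further context/details.

  Args:
    d_feature: Depth/dimensionality of feature embedding.
    n_heads: Number of attention heads.
    dropout: Probabilistic rate for internal dropout applied to attention
        activations (based on query-key pairs) before dotting them with values.
    mode: One of `'train'`, `'eval'`, or `'predict'`.
  """
  return cb.Serial(
      # One Dense layer each for q, k and v, applied in parallel.
      cb.Parallel(
          core.Dense(d_feature),
          core.Dense(d_feature),
          core.Dense(d_feature),
      ),
      PureAttention(  # pylint: disable=no-value-for-parameter
          n_heads=n_heads, dropout=dropout, mode=mode),
      # Output projection applied to the combined attention heads.
      core.Dense(d_feature),
  )
```

In particular, what is the purpose of the three parallel dense layers? The inputs to this layer are q, k, v, and mask. Why are q, k, and v passed through dense layers?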

1 Answer

Stack Overflow user

Accepted answer

Posted on 2020-09-30 08:19:05

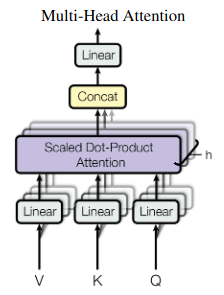

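This code snippet implements the equation on page 5 of the paper *Attention Is All You Need*, which introduced the Transformer model in 2017. The computation is illustrated in Figure 2 of the paper.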
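For reference, these are the scaled dot-product attention and multi-head attention equations as defined in the paper. The mapping to the Trax code in the list that follows is my own reading of the snippet in the question, not something taken from the Trax documentation.

$$
\begin{aligned}
\mathrm{Attention}(Q, K, V) &= \mathrm{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right) V \\
\mathrm{head}_i &= \mathrm{Attention}\!\left(Q W_i^Q,\; K W_i^K,\; V W_i^V\right) \\
\mathrm{MultiHead}(Q, K, V) &= \mathrm{Concat}(\mathrm{head}_1, \dots, \mathrm{head}_h)\, W^O
\end{aligned}
$$

- The three parallel `core.Dense(d_feature)` layers play the role of $W^Q$, $W^K$, $W^V$. Each has output width `d_feature`, which amounts to the per-head matrices $W_i^Q$ (each of width $d_k$ = `d_feature / n_heads`) stacked side by side.
- `PureAttention` splits the projected q, k, v into `n_heads` heads and applies scaled dot-product attention to each.
- The final `core.Dense(d_feature)` plays the role of $W^O$.

One difference: Trax `Dense` layers add a bias term by default, whereas the paper's equations are written as pure matrix products.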

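The hidden states are projected into h attention heads, which perform scaled dot-product attention in parallel. The projection can be interpreted as extracting the information that is relevant to each head. Each head then performs a probabilistic retrieval based on its own (learned) criteria.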
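To make the "three parallel dense layers" concrete, here is a minimal NumPy sketch of the computation. It is written under my own assumptions rather than copied from Trax: inputs have shape `(batch, seq, d)`, the mask is a boolean array of shape `(batch, seq_k)` (Trax's actual mask format may differ), and dropout and `mode` are left out. All function names (`dense`, `split_heads`, `merge_heads`, `multi_head_attention`, `init_params`) are hypothetical helpers, not Trax APIs. The point it illustrates is that each dense layer produces one wide projection of width `d_feature`, and the column slices of that projection are exactly the per-head projections from the paper.

```python
import numpy as np


def dense(x, w, b):
    # Affine projection x @ w + b, playing the role of one Dense layer.
    return x @ w + b


def split_heads(x, n_heads):
    # (batch, seq, d_feature) -> (batch, n_heads, seq, d_head)
    batch, seq, d_feature = x.shape
    assert d_feature % n_heads == 0, "d_feature must be divisible by n_heads"
    d_head = d_feature // n_heads
    return x.reshape(batch, seq, n_heads, d_head).transpose(0, 2, 1, 3)


def merge_heads(x):
    # (batch, n_heads, seq, d_head) -> (batch, seq, n_heads * d_head)
    batch, n_heads, seq, d_head = x.shape
    return x.transpose(0, 2, 1, 3).reshape(batch, seq, n_heads * d_head)


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(q, k, v, mask, params, n_heads):
    """Maps (q, k, v, mask) to (activations, mask); dropout is omitted."""
    # 1. Three independent projections, one per input (W^Q, W^K, W^V).
    q = dense(q, *params["q"])
    k = dense(k, *params["k"])
    v = dense(v, *params["v"])
    # 2. Each projection is cut into n_heads column slices, one per head.
    qh, kh, vh = (split_heads(t, n_heads) for t in (q, k, v))
    # 3. Scaled dot-product attention for all heads at once.
    scores = qh @ kh.transpose(0, 1, 3, 2) / np.sqrt(qh.shape[-1])
    # mask: (batch, seq_k) booleans, True = may attend; broadcast over heads/queries.
    scores = np.where(mask[:, None, None, :], scores, -1e9)
    heads = softmax(scores, axis=-1) @ vh
    # 4. Concatenate the heads and apply the output projection (W^O).
    return dense(merge_heads(heads), *params["o"]), mask


def init_params(d_in, d_feature, rng):
    # Hypothetical initialization: scaled Gaussian weights, zero biases.
    def layer(n_in, n_out):
        return rng.normal(scale=n_in ** -0.5, size=(n_in, n_out)), np.zeros(n_out)
    return {"q": layer(d_in, d_feature), "k": layer(d_in, d_feature),
            "v": layer(d_in, d_feature), "o": layer(d_feature, d_feature)}


# Usage: self-attention over a batch of 2 sequences of length 5.
rng = np.random.default_rng(0)
batch, seq, d_in, d_feature, n_heads = 2, 5, 6, 8, 2
x = rng.normal(size=(batch, seq, d_in))
mask = np.ones((batch, seq), dtype=bool)
mask[:, -1] = False  # treat the last position as padding
params = init_params(d_in, d_feature, rng)
out, _ = multi_head_attention(x, x, x, mask, params, n_heads)
print(out.shape)  # (2, 5, 8)
```

Using one wide projection per input instead of `n_heads` small ones gives the same result (each head only sees its own column slice), while letting all heads run as a single batched matrix multiplication.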

Original page content provided by Stack Overflow. Translation support provided by Tencent Cloud Xiaowei's IT-domain engine.

Original link:

https://stackoverflow.com/questions/64129393
